[Deep Learning] Today's bug (August 2)

2022-08-03 16:18:00 [阿阿阿阿锋]

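Preface

Author's homepage: 阿阿阿阿锋's homepage on CSDN

Code source: Dive into Deep Learning (《动手学深度学习》)

Today I got an error message: TypeError: 'method' object is not iterable.

That is, a type error: an object of type 'method' cannot be iterated over.

Afterwards, my understanding of MXNet's automatic gradient computation also became a little clearer.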

I. TypeError: 'method' object is not iterable

1. Error message && code excerpt

Error message:

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-40-9ae7d7a05a23> in <module>

4 # From numeric labels to text labels

5 true_labels = d2l.get_fashion_mnist_labels(y.asnumpy())

----> 6 pred_labels = d2l.get_fashion_mnist_labels(net(X).argmax(axis=1).asnumpy)

7 titles = [true + '\n' + pred for true, pred in zip(true_labels, pred_labels)]

8

D:\anaconda\lib\site-packages\d2lzh\utils.py in get_fashion_mnist_labels(labels)

183 text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',

184 'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']

--> 185 return [text_labels[int(i)] for i in labels]

186

187

TypeError: 'method' object is not iterable

Error messages are usually very useful: they can effectively help us locate the bug.

Code excerpt:

for X, y in test_iter:
    break

# From numeric labels to text labels

true_labels = d2l.get_fashion_mnist_labels(y.asnumpy())

pred_labels = d2l.get_fashion_mnist_labels(net(X).argmax(axis=1).asnumpy)

titles = [true + '\n' + pred for true, pred in zip(true_labels, pred_labels)]

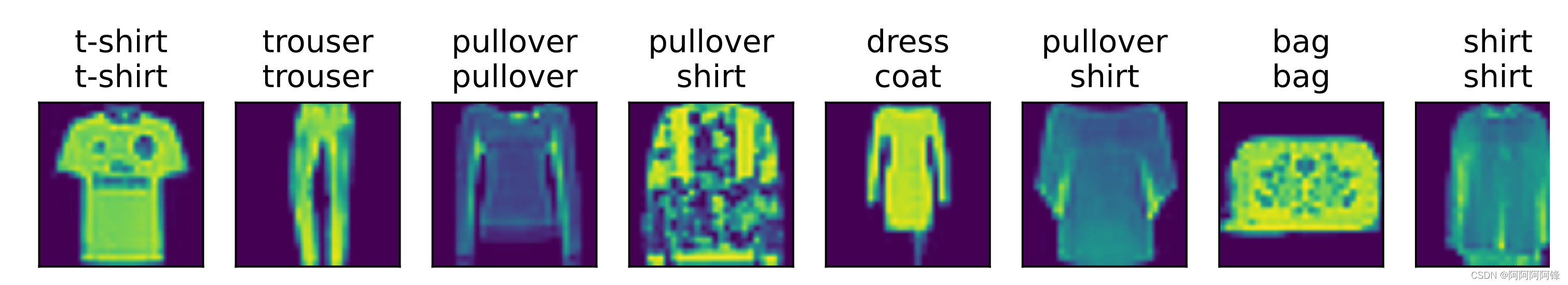

d2l.show_fashion_mnist(X[0:9], titles[0:9])

2. Squashing the bug

When I first saw this error I was baffled and did not understand what it meant. On closer inspection of the code, it turned out that the () after the function name was missing when calling asnumpy. So instead of calling the function, I passed the method object itself as an argument to another function, which raised a TypeError as soon as it tried to iterate over it.

After adding the missing (), everything worked and the program ran.
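The same failure can be reproduced without MXNet. The sketch below is only an illustration: FakeNDArray and get_labels are hypothetical stand-ins (they are not part of MXNet or d2lzh) that mimic asnumpy() and the list comprehension shown in the traceback.

```python
class FakeNDArray:
    """Hypothetical stand-in for an MXNet NDArray; only asnumpy() is mimicked."""

    def __init__(self, values):
        self._values = list(values)

    def asnumpy(self):
        # The real method returns a NumPy array; a plain list is enough here.
        return list(self._values)


TEXT_LABELS = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
               'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']


def get_labels(labels):
    # Same list comprehension as in the traceback: it iterates over `labels`.
    return [TEXT_LABELS[int(i)] for i in labels]


preds = FakeNDArray([9, 2, 1])

try:
    get_labels(preds.asnumpy)    # missing (): the bound method itself is passed
except TypeError as err:
    error_message = str(err)

fixed = get_labels(preds.asnumpy())    # with (): the returned values are passed

print(error_message)
print(fixed)
```

A quick way to spot this kind of slip while debugging is callable(value): it returns True for preds.asnumpy and False for the list returned by preds.asnumpy().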

II. Does automatic differentiation also compute the function's value?

Code:

import d2lzh as d2l
from mxnet import autograd

num_epochs = 3
lr = 0.1

# Train the model (evaluate_accuracy is assumed to be defined earlier in the notebook)
def train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, params=None, lr=None, trainer=None):
    for epoch in range(num_epochs):
        train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
        for X, y in train_iter:
            # Record the forward pass so that gradients can be computed afterwards
            with autograd.record():
                y_hat = net(X)
                l = loss(y_hat, y).sum()
            l.backward()
            d2l.sgd(params, lr, batch_size)
            y = y.astype('float32')
            train_l_sum += l.asscalar()
            train_acc_sum += (y_hat.argmax(axis=1) == y).sum().asscalar()
            n += y.size
        test_acc = evaluate_accuracy(test_iter, net)
        print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f' % (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

I had been a little confused by this code, mainly by the statement train_l_sum += l.asscalar(). It uses the value of the variable l to accumulate the model's loss on the training set. But where does the value of l come from?

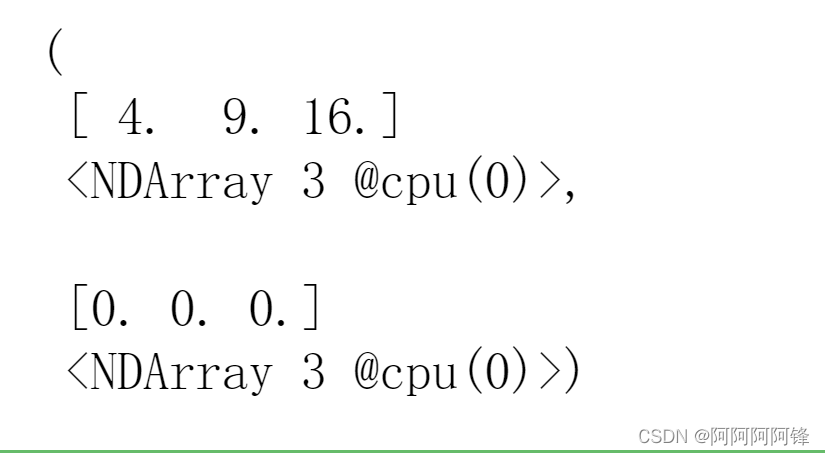

Let's look at the following code:

%matplotlib inline

import d2lzh as d2l

from mxnet import gluon, autograd, nd

X = nd.array([2, 3, 4])

X.attach_grad()

with autograd.record():
    y = X ** 2

# y.backward()

y, X.grad

Output:

It turns out that when MXNet records a computation for automatic differentiation, the value of y is already computed at the step y = X ** 2 (here [4, 9, 16]), even though y.backward() is commented out. That line is not just a function expression to be differentiated later; it is also an ordinary assignment statement.
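To make the distinction concrete, here is a minimal pure-Python sketch of the same idea. The Var class is hypothetical and is not how MXNet is implemented; it only illustrates, under that assumption, that the forward value exists as soon as the expression runs, while the gradient is filled in only when backward() is called.

```python
class Var:
    """Hypothetical scalar with a tiny recording tape; not MXNet's implementation."""

    def __init__(self, value, parents=()):
        self.value = value         # computed eagerly, when the expression runs
        self.grad = 0.0            # stays 0.0 until backward() is called
        self._parents = parents    # pairs of (parent Var, local derivative)

    def __pow__(self, k):
        # The forward value is available immediately; the derivative is only recorded.
        local = k * self.value ** (k - 1)
        return Var(self.value ** k, parents=((self, local),))

    def backward(self, upstream=1.0):
        # Chain rule: pass the upstream gradient times the local derivative to each parent.
        self.grad += upstream
        for parent, local in self._parents:
            parent.backward(upstream * local)


x = Var(3.0)
y = x ** 2                 # an assignment: y.value is already known here

value_before = y.value     # forward value, no backward() needed
grad_before = x.grad       # gradient has not been computed yet

y.backward()
grad_after = x.grad        # dy/dx = 2 * x

print(value_before, grad_before, grad_after)
```

This mirrors the training loop above: l already holds the loss value after the recorded forward pass, so l.asscalar() can read it regardless of when l.backward() runs.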

Summary

Spending a lot of time on small mistakes really is not worth it.

Don't be so careless next time.

Often, once I finally figure a problem out, I feel how silly I was before.

边栏推荐

- leetcode-693.交替位二进制数

- STM32的HAL和LL库区别和性能对比

- Detailed ReentrantLock

- Neural networks, cool?

- TCP 可靠吗?为什么?

- 用户侧有什么办法可以自检hologres单表占用内存具体是元数据、计算、缓存的使用情况?

- 泰山OFFICE技术讲座:段落边框的绘制难点在哪里?

- QT QT 】 【 to have developed a good program for packaging into a dynamic library

- 在 360 度绩效评估中应该问的 20 个问题

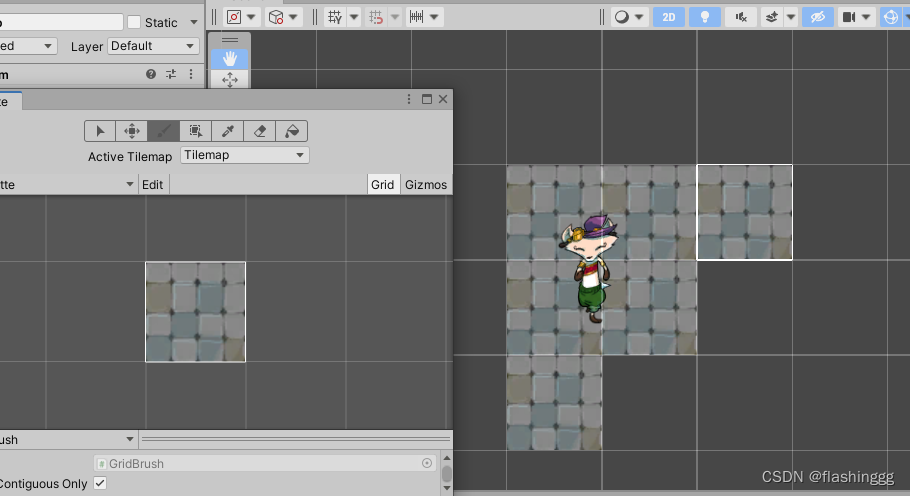

- [Unity Getting Started Plan] Basic Concepts (8) - Tile Map TileMap 01

猜你喜欢

【Unity入门计划】基本概念(8)-瓦片地图 TileMap 01

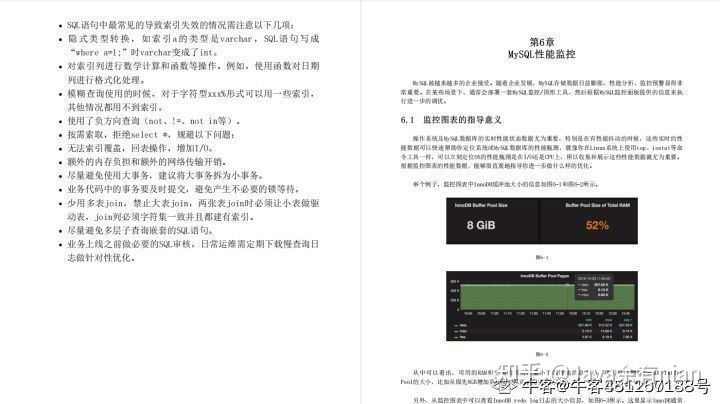

面了个腾讯35k出来的,他让我见识到什么叫精通MySQL调优

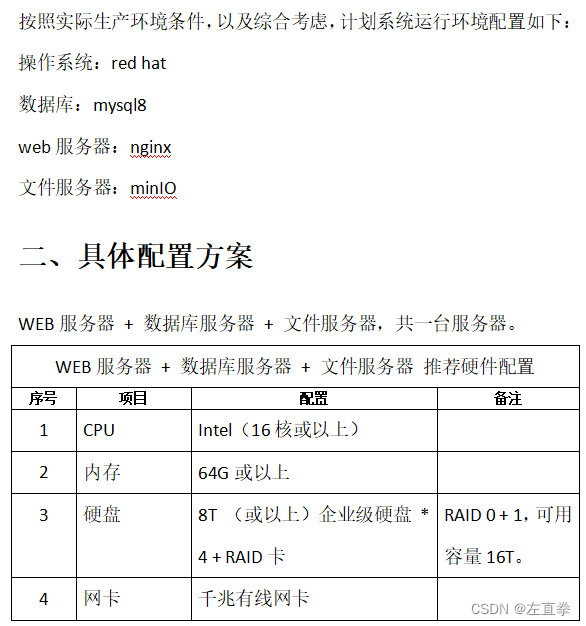

一个文件管理系统的软硬件配置清单

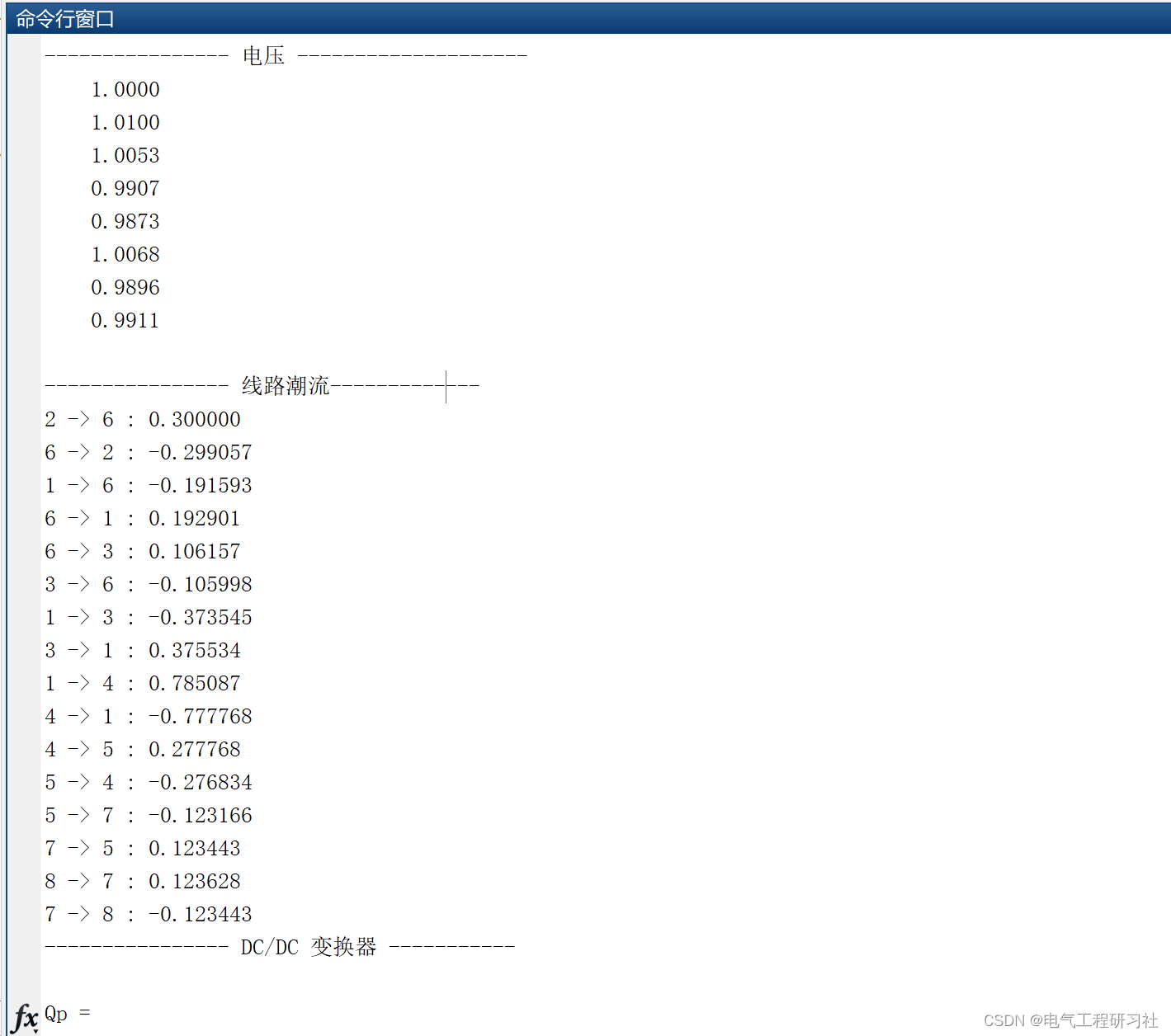

Optimal Power Flow (OPF) for High Voltage Direct Current (HVDC) (Matlab code implementation)

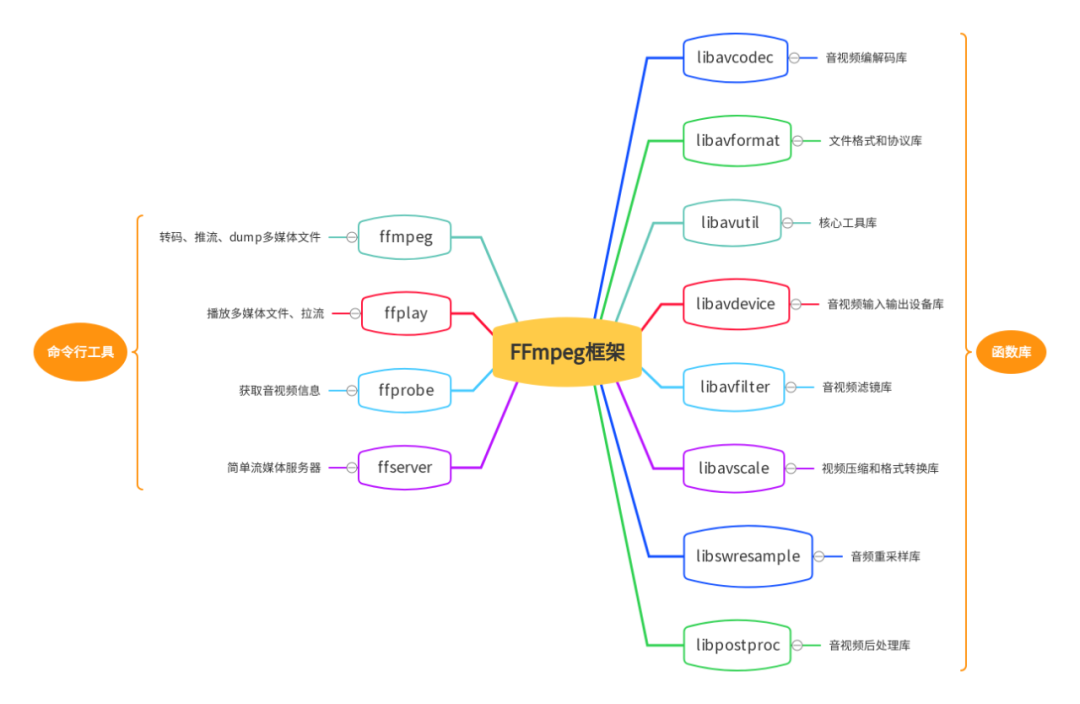

ffplay视频播放原理分析

技术干货|如何将 Pulsar 数据快速且无缝接入 Apache Doris

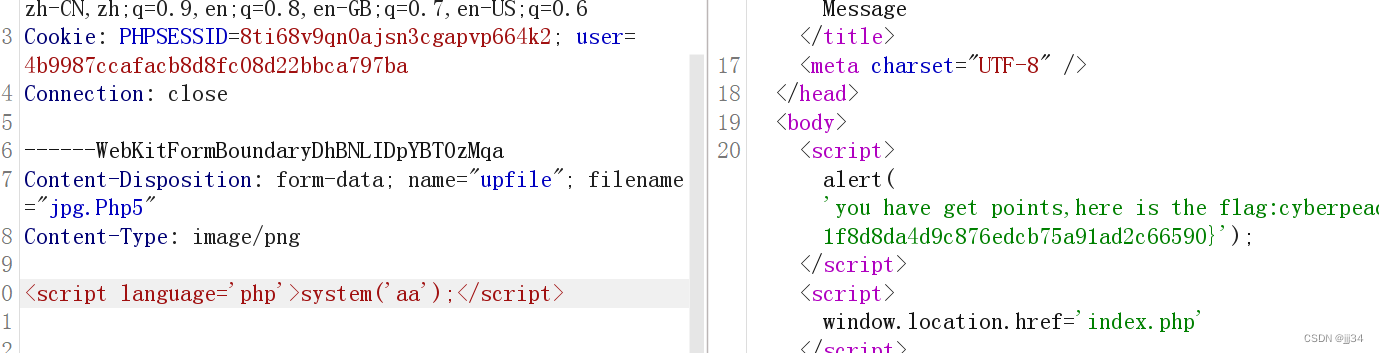

攻防世界----bug

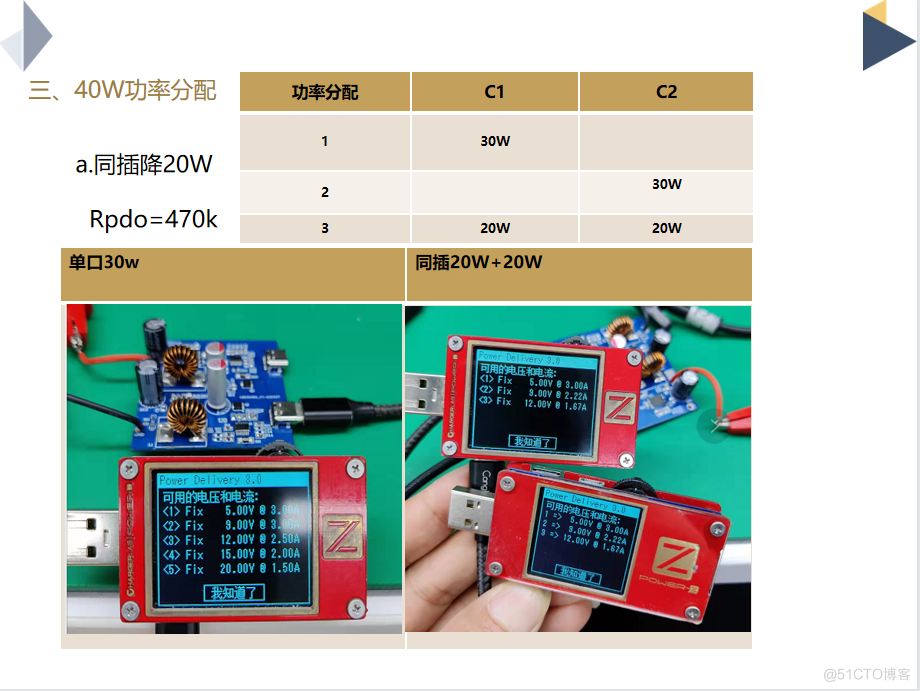

DC-DC 2C(40W/30W) JD6606SX2退功率应用

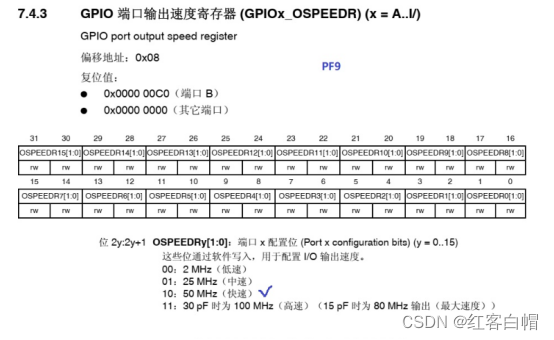

STM32 GPIO LED和蜂鸣器实现【第四天】

美国国防部更“青睐”光量子系统研究路线

随机推荐

js中的基础知识点 —— 事件

Reptile attention

Neural networks, cool?

深入浅出Flask PIN

红蓝对抗经验分享:CS免杀姿势

MySQL性能优化_小表驱动大表

Some optional strategies and usage scenarios for PWA application Service Worker caching

如何选择合适的损失函数,请看......

使用VS Code搭建ESP-IDF环境

unity用代码生成LightProbeGroup

证实了,百度没有快照了

用友YonSuite与旺店通数据集成对接-技术篇2

详谈RDMA技术原理和三种实现方式

瞌睡检测系统介绍

Tolstoy: There are only two misfortunes in life

2021年数据泄露成本报告解读

spark入门学习-1

使用Make/CMake编译ARM裸机程序(基于HT32F52352 Cortex-M0+)

Interpretation of the 2021 Cost of Data Breach Report

带你了解什么是 Web3.0