
Crawler Beginner's Notes (a supplement to yesterday's post, focusing on parsing the data)

2022-08-04 15:40:00 【Always sweat than talent】

```python
import re
import urllib.request
import urllib.error
from bs4 import BeautifulSoup


def main():
    # 1. Crawl the pages (each page is parsed inside getData)
    baseurl = 'https://movie.douban.com/top250?start='
    datalist = getData(baseurl)
    # 2. Save the data (still to be written)
    saveData(datalist)


# Patterns for each field of a movie entry (written for the markup of Douban's Top 250 page)
# Movie link
findLink = re.compile(r'<a href="(.*?)">')
# Movie poster
findImg = re.compile(r'<img.*src="(.*?)"', re.S)
# Movie title(s)
findTitle = re.compile(r'<span class="title">(.*)</span>')
# Rating
findRating = re.compile(r'<span class="rating_num" property="v:average">(.*)</span>')
# Number of reviewers; the page text is "<N>人评价" ("N people rated")
findJudge = re.compile(r'<span>(\d*)人评价</span>')
# One-line summary
findInq = re.compile(r'<span class="inq">(.*)</span>')
# Related details (director, cast, year, region, genre)
findBd = re.compile(r'<p class="">(.*?)</p>', re.S)


# Crawl the pages
def getData(baseurl):
    # Fetch one page at a time (25 movies per page, 10 pages in total)
    datalist = []  # one list of fields per movie
    for i in range(0, 10):
        url = baseurl + str(i * 25)
        html = askURL(url)
        # Parse this page and loop over every movie on it
        soup = BeautifulSoup(html, "html.parser")
        for item in soup.find_all('div', class_='item'):
            data = []  # the fields of one movie
            item = str(item)
            link = re.findall(findLink, item)[0]
            data.append(link)
            img = re.findall(findImg, item)[0]
            data.append(img)
            title = re.findall(findTitle, item)
            if len(title) == 2:
                ctitle = title[0]  # Chinese title
                data.append(ctitle)
                etitle = title[1].replace("/", "")  # foreign title
                data.append(etitle)
            else:
                data.append(title[0])
                data.append(' ')  # no foreign title: keep the column with a space
            rating = re.findall(findRating, item)[0]
            data.append(rating)
            judgeNum = re.findall(findJudge, item)[0]
            data.append(judgeNum)
            Inq = re.findall(findInq, item)
            if len(Inq) != 0:
                inq = Inq[0].replace("。", " ")  # replace the Chinese full stop with a space
                data.append(inq)
            else:
                data.append(" ")  # no summary: leave it blank
            bd = re.findall(findBd, item)[0]
            bd = re.sub(r'<br(\s+)?/>(\s+)?', " ", bd)  # remove <br/> tags
            bd = re.sub("/", " ", bd)
            data.append(bd.strip())  # remove leading and trailing whitespace
            datalist.append(data)  # add this movie to datalist
    print(datalist)
    return datalist


# Request a web page
def askURL(url):
    # Send a browser User-Agent so the server does not reject the request
    header = {
        "User-Agent": "Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) "
                      "AppleWebKit/537.36 (KHTML, like Gecko) "
                      "Chrome/103.0.5060.134 Mobile Safari/537.36 "
                      "Edg/103.0.1264.77"
    }
    request = urllib.request.Request(url, headers=header)
    html = ""
    try:
        response = urllib.request.urlopen(request)
        html = response.read().decode("utf-8")
    except urllib.error.URLError as e:
        if hasattr(e, "code"):
            print(e.code)
        if hasattr(e, "reason"):
            print(e.reason)
    return html


# Save the data (not implemented yet)
def saveData(datalist):
    print()


if __name__ == '__main__':
    main()
```
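The regular expressions only work together with the HTML they were written against, so it helps to try them on a small piece of markup before crawling all ten pages. The sketch below runs the same parsing steps on a hand-written, simplified `div class="item"` fragment; the fragment, all of its values, and the helper name `parse_item` are made up for illustration and only approximate Douban's real markup. It uses plain `re` on a string, so it runs without network access or BeautifulSoup. It also shows why the `<br/>` cleanup needs the tag in its pattern: `re.sub(r'(\s+)?', ' ', s)` on its own matches the empty string at every position and scatters spaces through the whole text.

```python
import re

# Same kind of patterns as the crawler (written for Douban's Top 250 markup)
findLink = re.compile(r'<a href="(.*?)">')
findTitle = re.compile(r'<span class="title">(.*)</span>')
findRating = re.compile(r'<span class="rating_num" property="v:average">(.*)</span>')
findJudge = re.compile(r'<span>(\d*)人评价</span>')
findInq = re.compile(r'<span class="inq">(.*)</span>')
findBd = re.compile(r'<p class="">(.*?)</p>', re.S)

# Hand-written, simplified movie entry; every value here is made up
SAMPLE_ITEM = """<div class="item">
<a href="https://movie.douban.com/subject/0000000/"></a>
<span class="title">示例电影</span>
<span class="title">\xa0/\xa0Example Movie</span>
<p class="">
导演: 某某<br/>
2000 / 美国 / 剧情
</p>
<span class="rating_num" property="v:average">9.0</span>
<span>12345人评价</span>
<span class="inq">这是一句示例简介。</span>
</div>"""


def parse_item(item):
    """Extract the text fields of one movie entry (hypothetical helper)."""
    data = [re.findall(findLink, item)[0]]
    title = re.findall(findTitle, item)
    data.append(title[0])  # Chinese title
    if len(title) == 2:
        # "\xa0/\xa0Example Movie": drop the slash, strip() drops the NBSPs
        data.append(title[1].replace("/", "").strip())
    else:
        data.append(" ")  # no foreign title
    data.append(re.findall(findRating, item)[0])
    data.append(re.findall(findJudge, item)[0])
    inq = re.findall(findInq, item)
    data.append(inq[0].replace("。", "") if inq else " ")
    bd = re.findall(findBd, item)[0]
    bd = re.sub(r'<br(\s+)?/>(\s+)?', " ", bd)  # <br/> plus following newline -> space
    bd = re.sub("/", " ", bd)
    data.append(bd.strip())
    return data


print(parse_item(SAMPLE_ITEM))
```

The result holds seven fields in the same order the crawler appends them, minus the poster URL, which this simplified fragment leaves out. If a pattern returns an empty list here, `[0]` raises `IndexError`, which is the same failure the crawler would hit on a real page whose markup has changed.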

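`saveData` is still a stub at this stage. As one possible next step, the sketch below writes `datalist` to a CSV file with Python's standard `csv` module. The helper name `save_data_csv`, the file name, and the column names in `HEADERS` are assumptions; the columns assume the field order link, poster, Chinese title, foreign title, rating, number of reviewers, summary, details. If `getData` appends a different set of fields, adjust `HEADERS` to match.

```python
import csv

# Assumed column names, in the order the crawler appends the fields
HEADERS = ["link", "poster", "title_cn", "title_foreign",
           "rating", "num_reviewers", "summary", "details"]


def save_data_csv(datalist, savepath):
    """Hypothetical saveData: write one row per movie to a CSV file."""
    # newline="" is what the csv module expects for files it writes;
    # "utf-8-sig" adds a BOM so Excel recognises the Chinese text as UTF-8
    with open(savepath, "w", newline="", encoding="utf-8-sig") as f:
        writer = csv.writer(f)
        writer.writerow(HEADERS)
        writer.writerows(datalist)
```

For example, `save_data_csv(getData(baseurl), "movie_top250.csv")` in `main` would write a header row followed by one row per movie.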
猜你喜欢

随机推荐

游戏网络 UDP+FEC+KCP

Nuget 通过 dotnet 命令行发布

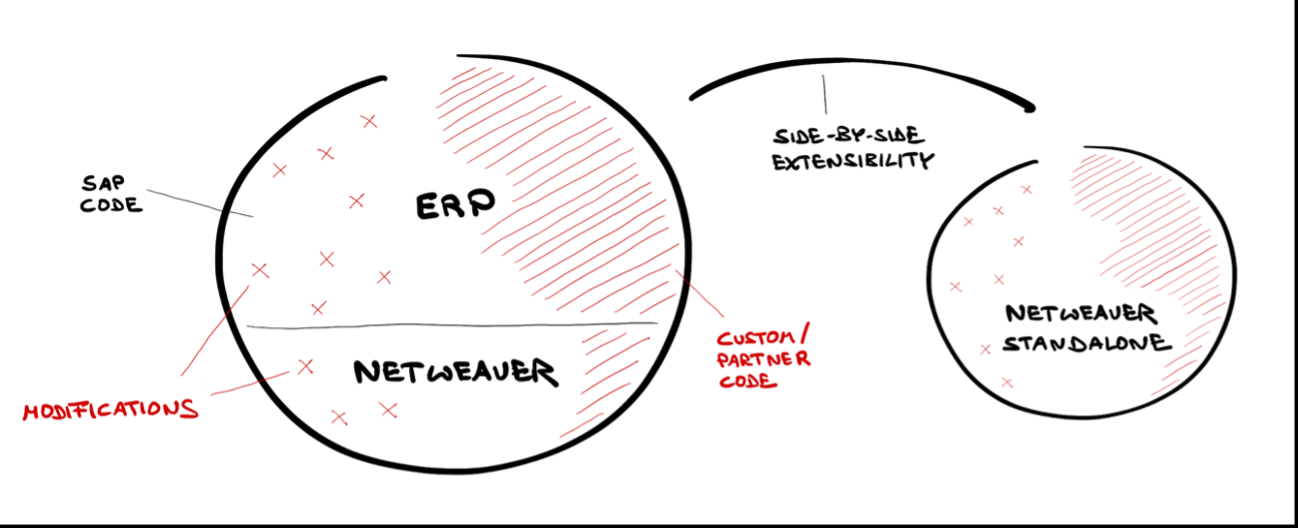

SAP ABAP SteamPunk 蒸汽朋克的最新进展 - 嵌入式蒸汽朋克

Tinymce plugins [Tinymce 扩展插件集合]

C# 判断文件编码

基于 Next.js实现在线Excel

What is an artifact library in a DevOps platform?What's the use?

AAAI‘22 推荐系统论文梳理

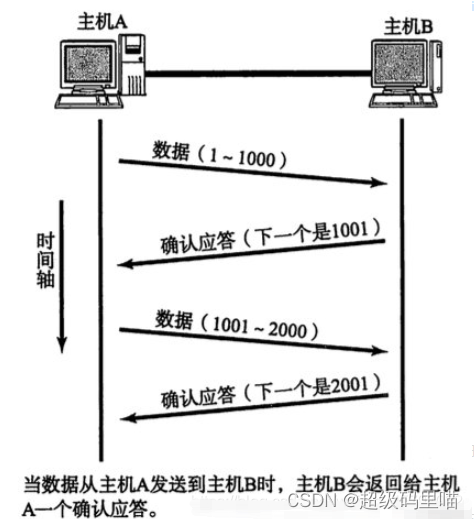

保证通信的机制有哪些

Latex 去掉行号

卖家寄卖流程梳理

Go Go 简单的很,标准库之 fmt 包的一键入门

使用百度EasyDL实现森林火灾预警识别

实战:10 种实现延迟任务的方法,附代码!

What is the difference between ITSM software and a work order system?

一文解答DevOps平台的制品库是什么

什么是 DevOps?看这一篇就够了!

For循环控制

IP第十六天笔记

IP报文头解析