
PyTorch Automatic Differentiation

2022-07-01 00:26:00 【andrew P】

1. Computing Gradients with Autograd

```python
params = torch.tensor([1.0, 0.0], requires_grad=True)
```

Notice the `requires_grad=True` argument to the tensor constructor? It tells PyTorch to track the entire family of tensors that result from operations on `params`. In other words, any tensor that has `params` as an ancestor has access to the chain of functions that were called to get from `params` to that tensor. If these functions are differentiable (and most PyTorch tensor operations are), then once `backward()` is called on a result, the value of the derivative is automatically stored in the `grad` attribute of `params`.
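A minimal sketch of this behaviour, assuming a hypothetical input `x` and a toy squared loss (neither comes from the original post): `params.grad` is `None` until `backward()` runs, after which it holds d(loss)/d(params).

```python
import torch

params = torch.tensor([1.0, 0.0], requires_grad=True)
x = torch.tensor([2.0, 3.0])  # hypothetical inputs

print(params.grad)  # None: nothing has been differentiated yet

# toy linear model w * x + b with a squared loss (illustrative only)
y = params[0] * x + params[1]
loss = (y ** 2).sum()
loss.backward()

# d(loss)/dw = sum(2 * y * x) = 26, d(loss)/db = sum(2 * y) = 10
print(params.grad)  # tensor([26., 10.])
```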

You can set `requires_grad=True` on any number of tensors and combine them with any functions. In that case, PyTorch computes the derivatives of the loss along the entire chain of functions (the computation graph) and accumulates their values in the `grad` attribute of those tensors (the leaf nodes of the graph).
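A small sketch with two leaf tensors, `a` and `b` (names chosen for illustration): the intermediate result `c` is a non-leaf node of the graph, and only the leaves receive gradients in their `grad` attribute.

```python
import torch

a = torch.tensor(2.0, requires_grad=True)  # leaf
b = torch.tensor(3.0, requires_grad=True)  # leaf
c = a * b                                  # intermediate node, not a leaf
loss = c + a                               # loss = a * b + a

loss.backward()

print(a.grad, b.grad)        # tensor(4.) tensor(2.), i.e. b + 1 and a
print(a.is_leaf, c.is_leaf)  # True False
```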

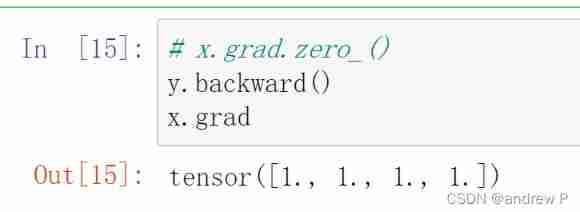

Warning: a point that PyTorch newcomers (and many experienced users) often overlook is that gradients are accumulated, not stored.

To keep gradients from piling up across iterations, zero them explicitly at each iteration. The in-place method `zero_` makes this easy:

```python
if params.grad is not None:
    # can be done at any point in the loop before calling backward();
    # without this reset, each backward() call adds to the existing gradient
    params.grad.zero_()
```
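A sketch of the same pattern inside a loop, using a hypothetical scalar weight `w` and loss `w ** 2`: because the gradient is reset before each `backward()`, every iteration sees the same value (2 * w = 6). Removing the `zero_()` call would give 6, 12, 18 instead.

```python
import torch

w = torch.tensor(3.0, requires_grad=True)  # hypothetical scalar parameter
grads = []

for step in range(3):
    if w.grad is not None:
        w.grad.zero_()   # reset before computing the new gradient
    loss = w ** 2        # d(loss)/dw = 2 * w = 6
    loss.backward()
    grads.append(w.grad.item())

print(grads)  # [6.0, 6.0, 6.0]
```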

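Calling `backward()` on the same sum twice illustrates the problem. After the first call, the gradient of the sum with respect to each element is 1. After the second call, the gradient has not been reset to 0, so the new values are added to the old ones.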
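The experiment described above can be reproduced with a sketch like the following (the tensor `x` is an assumption for illustration): calling `backward()` twice on the same sum without zeroing doubles the stored gradient.

```python
import torch

x = torch.ones(3, requires_grad=True)

x.sum().backward()        # first call: d(sum)/dx_i = 1
first = x.grad.clone()

x.sum().backward()        # second call: the new 1s are added to the old ones
second = x.grad.clone()

print(first)   # tensor([1., 1., 1.])
print(second)  # tensor([2., 2., 2.])
```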

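Note that the manual parameter update in the training loop also calls an odd-looking `.detach().requires_grad_()`. To understand why, think about the computation graph being built. To avoid reusing variable names, rewrite the `params` update line as `p1 = (p0 - lr * p0.grad)`. Here `p0` holds the initial weights of the model, and `p0.grad` is computed through the loss function from `p0` and the training data. Without `detach()`, `p1` stays connected to the graph that produced it, so in the next iteration the graph would reach back through `p1` to `p0`: every earlier parameter tensor stays in memory, `backward()` pushes gradients into previous iterations, and because `p1` is not a leaf, its own `grad` is not populated. `detach()` returns a tensor cut off from this history, and `requires_grad_()` turns gradient tracking back on so that the next `backward()` fills in its `grad`.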
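The difference can be inspected directly. This sketch assumes a hypothetical linear model and three made-up data points; it compares a detached update with one that keeps its history, and shows that `backward()` through the undetached tensor reaches back into `p0`.

```python
import torch

# hypothetical data for a linear model w * x + b
x = torch.tensor([1.0, 2.0, 3.0])
y = torch.tensor([2.0, 4.0, 6.0])
lr = 1e-2

def loss_of(p):
    return ((p[0] * x + p[1] - y) ** 2).mean()

p0 = torch.tensor([1.0, 0.0], requires_grad=True)
loss_of(p0).backward()

# Detached update: a fresh leaf tensor with no history
p1 = (p0 - lr * p0.grad).detach().requires_grad_()
# Undetached update: remembers that it was computed from p0
p1_attached = p0 - lr * p0.grad

print(p1.is_leaf, p1.grad_fn, p1.requires_grad)          # True None True
print(p1_attached.is_leaf, p1_attached.grad_fn is None)  # False False

# Backpropagating through p1_attached assigns the error to the old p0
p0.grad.zero_()
loss_of(p1_attached).backward()
print(p0.grad)  # non-zero
```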

2. Optimizers

Every optimizer exposes two methods: `zero_grad` and `step`. `zero_grad` zeroes the `grad` attribute of all the parameters passed to the optimizer when it was constructed; `step` updates the values of those parameters according to the optimization strategy implemented by that specific optimizer.
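A sketch of `zero_grad` at work, assuming a toy loss `(params ** 2).sum()`: two `backward()` calls accumulate the gradient, and `zero_grad()` clears it. Since PyTorch 2.0, `zero_grad()` defaults to `set_to_none=True`, so `grad` becomes `None` rather than a tensor of zeros; older releases zero it in place.

```python
import torch
import torch.optim as optim

params = torch.tensor([1.0, 0.0], requires_grad=True)
optimizer = optim.SGD([params], lr=1e-2)

(params ** 2).sum().backward()   # grad = 2 * params = [2, 0]
(params ** 2).sum().backward()   # accumulated: [4, 0]
accumulated = params.grad.clone()
print(accumulated)               # tensor([4., 0.])

optimizer.zero_grad()
# None on PyTorch 2.0+ (set_to_none=True by default); zeros on older versions
print(params.grad)
```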

After creating the parameters and instantiating a gradient descent optimizer (`optim.SGD`), a single training step looks like this:

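```python
# model, loss_fn, t_u and t_c are assumed to be defined elsewhere (not shown in this post)
t_p = model(t_u, *params)
loss = loss_fn(t_p, t_c)
loss.backward()

optimizer.step()

params  # inspect the updated values
```

After `step` is called, `params` holds updated values without us having to assign them ourselves. What `step` does is look at `params.grad` and update `params` by subtracting `learning_rate` times `grad` from it, which (for plain SGD without momentum or weight decay) is exactly the manual update used earlier.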
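This claim can be checked with a sketch like the one below, assuming a hypothetical linear model and data: one `optim.SGD` step (no momentum, no weight decay) lands on the same values as the hand-written update.

```python
import torch
import torch.optim as optim

# hypothetical data for a linear model w * x + b
x = torch.linspace(-1.0, 1.0, 8)
y = 3.0 * x + 1.0
learning_rate = 1e-2

def loss_of(p):
    return ((p[0] * x + p[1] - y) ** 2).mean()

# Manual update
p_manual = torch.tensor([1.0, 0.0], requires_grad=True)
loss_of(p_manual).backward()
p_manual = (p_manual - learning_rate * p_manual.grad).detach().requires_grad_()

# Same update through the optimizer
p_opt = torch.tensor([1.0, 0.0], requires_grad=True)
optimizer = optim.SGD([p_opt], lr=learning_rate)
optimizer.zero_grad()
loss_of(p_opt).backward()
optimizer.step()

print(torch.allclose(p_manual, p_opt))  # True
```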

The hand-written update that `step` takes over is:

```python
params = (params - learning_rate * params.grad).detach().requires_grad_()
```

Here is the code ready to go into a loop, with the extra `zero_grad` call in the right place (before `backward` is called):

```python
import torch
import torch.optim as optim

params = torch.tensor([1.0, 0.0], requires_grad=True)
learning_rate = 1e-2
optimizer = optim.SGD([params], lr=learning_rate)

t_p = model(t_un, *params)
loss = loss_fn(t_p, t_c)

optimizer.zero_grad()  # this call could also come earlier in the loop
loss.backward()
optimizer.step()

params
```

Training loop (manual parameter update):

```python
def training_loop(n_epochs, learning_rate, params, t_u, t_c):
    for epoch in range(1, n_epochs + 1):
        if params.grad is not None:
            params.grad.zero_()  # can be done at any point in the loop before backward()

        t_p = model(t_u, *params)
        loss = loss_fn(t_p, t_c)
        loss.backward()

        # gradient-descent step; detach() drops the old graph, requires_grad_() re-enables tracking
        params = (params - learning_rate * params.grad).detach().requires_grad_()

        if epoch % 500 == 0:
            print('Epoch %d, Loss %f' % (epoch, float(loss)))

    return params
```

Start training:

```python
t_un = 0.1 * t_u  # rescaled inputs

training_loop(
    n_epochs=5000,
    learning_rate=1e-2,
    params=torch.tensor([1.0, 0.0], requires_grad=True),
    t_u=t_un,
    t_c=t_c)
```

Optimizer settings:

```python
import torch
import torch.optim as optim

params = torch.tensor([1.0, 0.0], requires_grad=True)
learning_rate = 1e-5
optimizer = optim.SGD([params], lr=learning_rate)
```
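Putting the pieces together, here is a sketch of the training loop rewritten around an optimizer. The linear `model`, the mean-squared-error `loss_fn` and the noise-free synthetic data (generated from the line `t_c = 0.5 * t_u - 17`) are assumptions for illustration, not the post's dataset. It uses `lr=1e-2` on the rescaled inputs `t_un`, as in the loop-ready snippet above. Because `step` updates `params` in place, no `.detach().requires_grad_()` is needed.

```python
import torch
import torch.optim as optim

def model(t_u, w, b):
    # assumed linear model
    return w * t_u + b

def loss_fn(t_p, t_c):
    # assumed mean squared error
    return ((t_p - t_c) ** 2).mean()

# hypothetical, noise-free data on the line t_c = 0.5 * t_u - 17
t_u = torch.linspace(20.0, 80.0, 11)
t_c = 0.5 * t_u - 17.0
t_un = 0.1 * t_u  # rescaled inputs, so the target parameters are w = 5, b = -17

def training_loop(n_epochs, optimizer, params, t_u, t_c):
    for epoch in range(1, n_epochs + 1):
        t_p = model(t_u, *params)
        loss = loss_fn(t_p, t_c)

        optimizer.zero_grad()  # clear gradients before backward()
        loss.backward()
        optimizer.step()       # updates params in place

        if epoch % 500 == 0:
            print('Epoch %d, Loss %f' % (epoch, float(loss)))
    return params

params = torch.tensor([1.0, 0.0], requires_grad=True)
optimizer = optim.SGD([params], lr=1e-2)
trained = training_loop(5000, optimizer, params, t_un, t_c)
print(trained)  # close to w = 5, b = -17
```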
