当前位置:网站首页>Pytorch - Distributed Model Training

Pytorch - Distributed Model Training

2022-08-01 14:16:00 【CyrusMay】

Pytorch —— 分布式模型训练

1.数据并行

1.1 单机单卡

import torch

from torch import nn

import torch.nn.functional as F

import os

model = nn.Sequential(nn.Linear(in_features=10,out_features=20),

nn.ReLU(),

nn.Linear(in_features=20,out_features=2),

nn.Sigmoid())

data = torch.rand([100,10])

optimizer = torch.optim.Adam(model.parameters(),lr = 0.001)

print(torch.cuda.is_available())

# Specifies to use only one graphics card

# Can be run in the terminal CUDA_VISIBLE_DEVICES="0"

os.environ["CUDA_VISIBLE_DEVICES"]="0"

# selected graphics card

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# 模型拷贝

model.to(device)

# 数据拷贝

data = data.to(device)

# 模型存储

torch.save({

"model_state_dict":model.state_dict(),

"optimizer_state_dict":optimizer.state_dict()},"./model")

# 模型加载

checkpoint = torch.load("./model",map_location=device)

model.load_state_dict(checkpoint["model_state_dict"])

optimizer.load_state_dict(checkpoint["optimizer_state_dict"])

1.2 单机多卡

代码

import torch

import torch.nn.functional as F

from torch import nn

import os

# 获取当前gpu的编号

local_rank = int(os.environ["LOCAL_RANK"])

torch.cuda.set_device(local_rank)

device = torch.device("cuda",local_rank)

dataset = torch.rand([1000,10])

model = nn.Sequential(

nn.Linear(),

nn.ReLU(),

nn.Linear(),

nn.Sigmoid()

)

optimizer = torch.optim.Adam(model.parameters,lr=0.001)

# 检测GPU的数目

n_gpus = torch.cuda.device_count()

# Initialize a process group

torch.distributed.init_process_group(backend="nccl",init_method="env://") # backendfor communication

# 模型拷贝,放入DistributedDataParallel

model = torch.nn.parallel.DistributedDataParallel(model,device_ids=[local_rank],output_device=local_rank)

# 构建分布式的sampler

sampler = torch.utils.data.distributed.DistributedSampler(dataset)

# 构建dataloader

BATCH_SIZE = 128

dataloader = torch.utils.data.DataLoader(dataset=dataset,

batch_size=BATCH_SIZE,

num_workers = 8,

sampler = sampler)

for epoch in range(1000):

for x in dataloader:

sampler.set_epoch(epoch) # play differentlyshuffle作用

if local_rank == 0:

# 模型存储

torch.save({

"model_state_dict":model.module.state_dict()

},"./model")

# 模型加载

checkpoint = torch.load("./model",map_location=local_rank)

model.load_state_dict(checkpoint["model_state_dict"],

)

Start the task in the terminal

torchrun --nproc_per_node=n_gpus train.py

1.3 多机多卡

代码

import torch

import torch.nn.functional as F

from torch import nn

import os

# 获取当前gpu的编号

local_rank = int(os.environ["LOCAL_RANK"])

torch.cuda.set_device(local_rank)

device = torch.device("cuda",local_rank)

dataset = torch.rand([1000,10])

model = nn.Sequential(

nn.Linear(),

nn.ReLU(),

nn.Linear(),

nn.Sigmoid()

)

optimizer = torch.optim.Adam(model.parameters,lr=0.001)

# 检测GPU的数目

n_gpus = torch.cuda.device_count()

# Initialize a process group

torch.distributed.init_process_group(backend="nccl",init_method="env://") # backendfor communication

# 模型拷贝,放入DistributedDataParallel

model = torch.nn.parallel.DistributedDataParallel(model,device_ids=[local_rank],output_device=local_rank)

# 构建分布式的sampler

sampler = torch.utils.data.distributed.DistributedSampler(dataset)

# 构建dataloader

BATCH_SIZE = 128

dataloader = torch.utils.data.DataLoader(dataset=dataset,

batch_size=BATCH_SIZE,

num_workers = 8,

sampler = sampler)

for epoch in range(1000):

for x in dataloader:

sampler.set_epoch(epoch) # play differentlyshuffle作用

if local_rank == 0:

# 模型存储

torch.save({

"model_state_dict":model.module.state_dict()

},"./model")

# 模型加载

checkpoint = torch.load("./model",map_location=local_rank)

model.load_state_dict(checkpoint["model_state_dict"],

)

The terminal starts the task

Do it once on each node

torchrun --nproc_per_node=n_gpus --nodes=2 --node_rank=0 --master_addr="主节点IP" --master_port="主节点端口号" train.py

2 模型并行

略

by CyrusMay 2022 07 29

边栏推荐

猜你喜欢

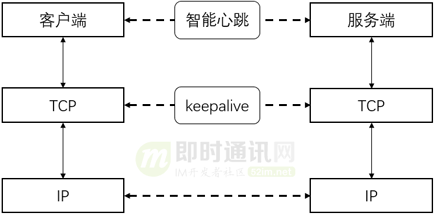

Chat technology in live broadcast system (8): Architecture practice of IM message module in vivo live broadcast system

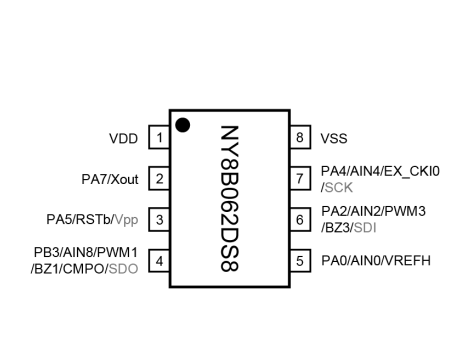

AD单片机九齐单片机NY8B062D SOP16九齐

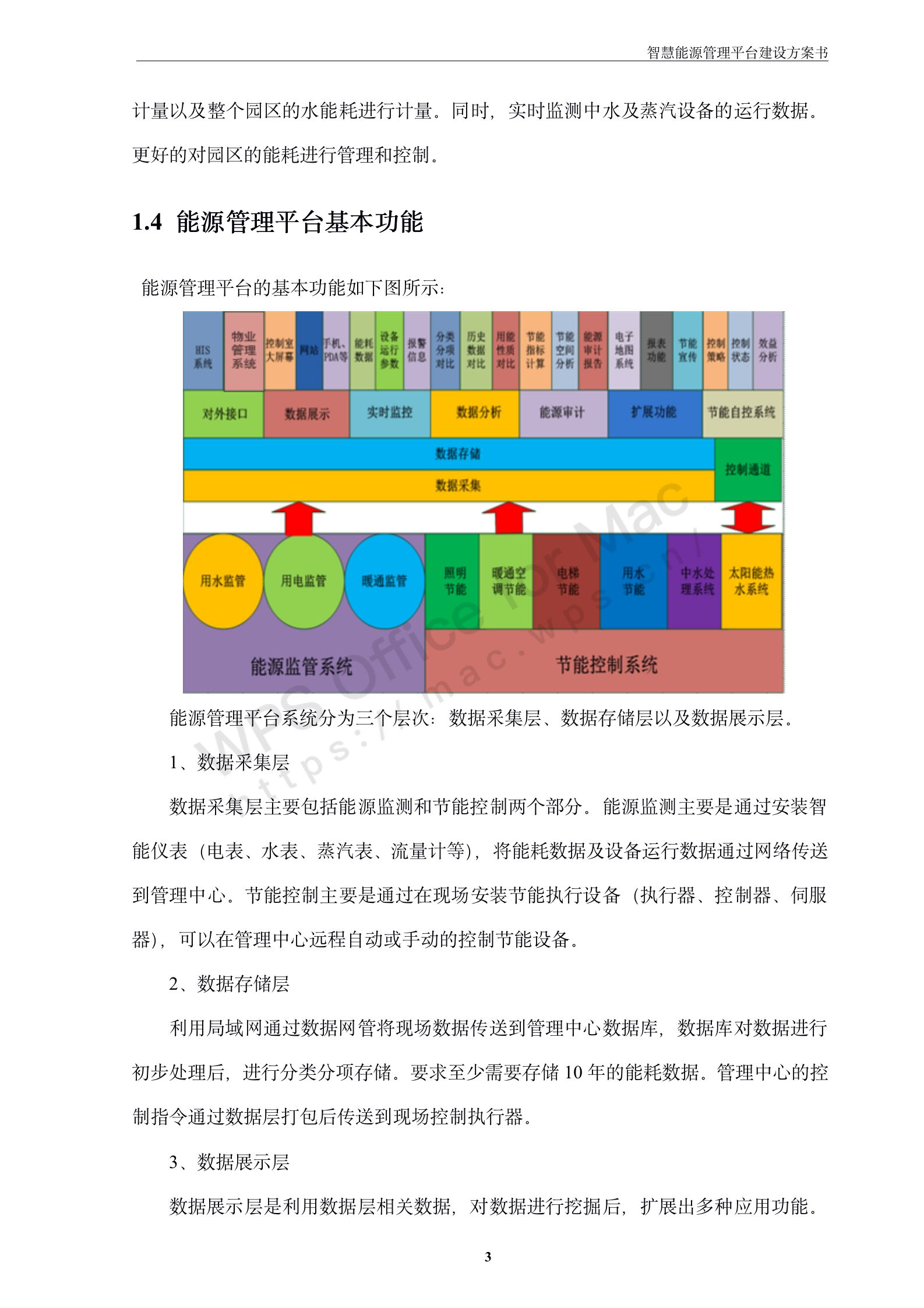

170页6万字智慧能源管理平台建设方案书

【每日一题】1331. 数组序号转换

响应式2022英文企业官网源码,感觉挺有创意的

Koreographer Professional Edition丨一款Unity音游插件教程

HTB-Shocker

沃文特生物IPO过会:年营收4.8亿 养老基金是股东

openEuler 社区12位开发者荣获年度开源贡献之星

线性代数的简单应用

随机推荐

使用open3d可视化3d人脸

final关键字的作用 final和基本类型、引用类型

math.pow()函数用法[通俗易懂]

从零开始Blazor Server(4)--登录系统

[机缘参悟-57]:《素书》-4-修身养志[本德宗道章第四]

leetcode.26 删除有序数组中的重复项(set/直接遍历)

Two Permutations

stm32l476芯片介绍(nvidia驱动无法找到兼容的图形硬件)

openEuler 社区12位开发者荣获年度开源贡献之星

MCU开发是什么?国内MCU产业现状如何

免费使用高性能的GPU和TPU—谷歌Colab使用教程

Yann LeCun开怼谷歌研究:目标传播早就有了,你们创新在哪里?

170页6万字智慧能源管理平台建设方案书

Longkou united chemical registration: through 550 million revenue xiu-mei li control 92.5% stake

HTB-Mirai

牛客刷SQL--6

阿里巴巴测试开发岗P6面试题

【二叉树】路径总和II

Wovent Bio IPO: Annual revenue of 480 million pension fund is a shareholder

What Can Service Mesh Learn from SDN?