当前位置:网站首页>A preliminary study on the middleware of script Downloader

A preliminary study on the middleware of script Downloader

2022-07-03 22:42:00 【Keep a low profile】

Preliminary learning of downloader middleware , This thing is still quite complicated

Mainly complicated in his request 、 Changes in response , If there is no interception , This is easier

stay settings.py It's enabled inside

DOWNLOADER_MIDDLEWARES = {

'test_middle_demo.middlewares.TestMiddleDemoDownloaderMiddleware': 543,

}

@classmethod

def from_crawler(cls, crawler):

# This method is used by Scrapy to create your spiders.

s = cls()

crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)

return s

first spider_opened and The following functions work together

def spider_opened(self, spider):

spider.logger.info('Spider opened: %s' % spider.name)

print('1. The crawler is running ')

def process_request(self, request, spider):

# Called for each request that goes through the downloader

# middleware.

# Must either:

# - return None: continue processing this request

# - or return a Response object

# - or return a Request object

# - or raise IgnoreRequest: process_exception() methods of

# installed downloader middleware will be called

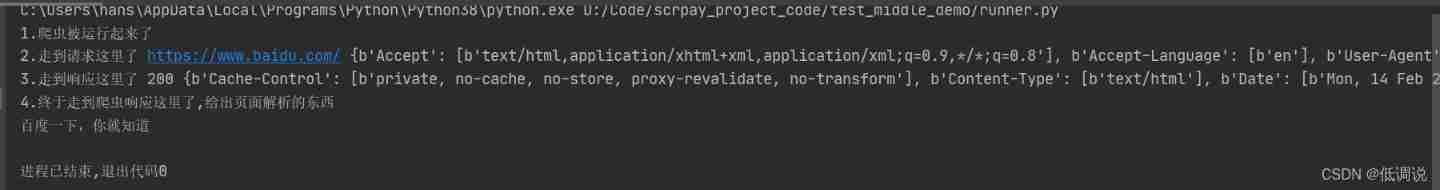

print('2. Come to the request ', request.url, request.headers)

return None

"""

return none Continue to send the request to the middleware or downloader No interception

return Response Direct return response , The middleware Downloader is not executed , Forward pass

return Request Return the request object Return to the engine , engine Return to scheduler , Continue with the following process

""

def process_response(self, request, response, spider):

# Called with the response returned from the downloader.

# Must either;

# - return a Response object # Respond to the upper layer , To the engine

# - return a Request object # Return request , Give the engine , To the scheduler

# - or raise IgnoreRequest

print('3. Here we are ', response.status, response.headers)

return response

import scrapy

from bs4 import BeautifulSoup

class TestMSpider(scrapy.Spider):

name = 'test_m'

allowed_domains = ['baidu.com']

start_urls = ['https://www.baidu.com/']

def parse(self, response, **kwargs):

print('4. Finally came to the reptile response here , Give something about page parsing ')

soup = BeautifulSoup(response.text, 'lxml')

title = soup.find('title').text

print(title)

Then you will get such a result

Take a chestnut

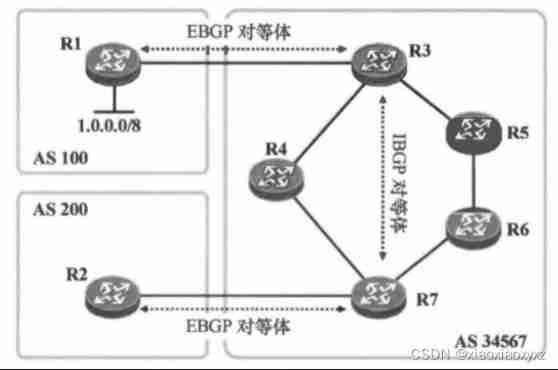

If it is multiple downloader middleware , As shown in the following code

Focus on

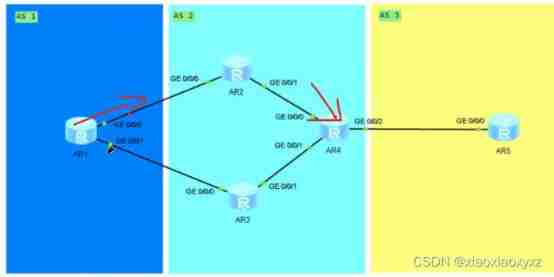

This 100,200 This number Namely Middleware to The distance of the engine

The movement of this thing is linear

So this walking method is shown in the figure below 1,3,4,2

DOWNLOADER_MIDDLEWARES = {

'test_middle_demo.middlewares.TestMiddleDemoDownloaderMiddleware_01': 100,

'test_middle_demo.middlewares.TestMiddleDemoDownloaderMiddleware_02': 200,

}

class TestMiddleDemoDownloaderMiddleware_01:

def process_request(self, request, spider):

print(1)

return None

def process_response(self, request, response, spider):

print(2)

return response

class TestMiddleDemoDownloaderMiddleware_02:

def process_request(self, request, spider):

print(3)

return None

def process_response(self, request, response, spider):

print(4)

return response

边栏推荐

- [actual combat record] record the whole process of the server being attacked (redis vulnerability)

- Es6~es12 knowledge sorting and summary

- Ten minutes will take you in-depth understanding of multithreading. Multithreading on lock optimization (I)

- How to solve win10 black screen with only mouse arrow

- Kali2021.4a build PWN environment

- [Android reverse] use DB browser to view and modify SQLite database (download DB browser installation package | install DB browser tool)

- On my first day at work, this API timeout optimization put me down!

- [flax high frequency question] leetcode 426 Convert binary search tree to sorted double linked list

- How to obtain opensea data through opensea JS

- [dynamic programming] Ji Suan Ke: Suan tou Jun breaks through the barrier (variant of the longest increasing subsequence)

猜你喜欢

Hcip day 12 notes

webAssembly

![[Android reverse] application data directory (files data directory | lib application built-in so dynamic library directory | databases SQLite3 database directory | cache directory)](/img/b8/e2a59772d009b6ee262fb4807f2cd2.jpg)

[Android reverse] application data directory (files data directory | lib application built-in so dynamic library directory | databases SQLite3 database directory | cache directory)

Blue Bridge Cup Guoxin Changtian MCU -- program download (III)

string

Redis single thread and multi thread

Hcip day 16 notes

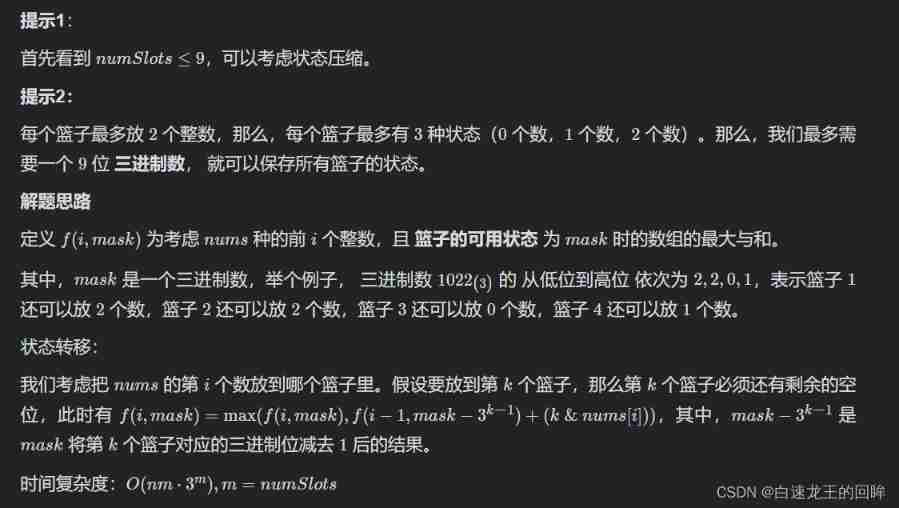

Leetcode week 4: maximum sum of arrays (shape pressing DP bit operation)

Hcip 13th day notes

On my first day at work, this API timeout optimization put me down!

随机推荐

3 environment construction -standalone

Hcip day 16 notes

IDENTITY

How about agricultural futures?

User login function: simple but difficult

Ansible common usage scenarios

Awk getting started to proficient series - awk quick start

Data consistency between redis and database

Quick one click batch adding video text watermark and modifying video size simple tutorial

On my first day at work, this API timeout optimization put me down!

Common problems in multi-threaded learning (I) ArrayList under high concurrency and weird hasmap under concurrency

Druids connect to mysql8.0.11

pycuda._ driver. LogicError: explicit_ context_ dependent failed: invalid device context - no currently

Programming language (1)

Pointer concept & character pointer & pointer array yyds dry inventory

Pooling idea: string constant pool, thread pool, database connection pool

. Net ADO splicing SQL statement with parameters

Rest reference

Kali2021.4a build PWN environment

How to solve win10 black screen with only mouse arrow