PRML reading notes: Neural networks (1)

2022-07-05 12:39:00 【NLP journey】

Feed-forward neural networks

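The linear models for regression and classification studied earlier in PRML take the form

$$y(\mathbf{x},\mathbf{w}) = f\left(\sum_{j=1}^{M} w_j \phi_j(\mathbf{x})\right) \tag{1}$$

where $f(\cdot)$ is a nonlinear activation function in the case of classification and the identity in the case of regression. The goal now is to extend this model by making the basis functions $\phi_j(\mathbf{x})$ depend on parameters of their own, and to adjust those parameters, together with the coefficients $w_j$, during training.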
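To make equation (1) concrete, here is a minimal sketch of the fixed-basis model that the neural network generalizes. The Gaussian basis functions, their centres and width, and the weights are hypothetical choices for illustration only; the same weighted sum is passed through the identity for regression and through a logistic sigmoid for classification.

```python
import math


def sigmoid(a):
    """Logistic sigmoid: sigma(a) = 1 / (1 + exp(-a))."""
    return 1.0 / (1.0 + math.exp(-a))


def gaussian_basis(mu, s):
    """Hypothetical fixed basis function phi(x) = exp(-(x - mu)^2 / (2 s^2))."""
    return lambda x: math.exp(-((x - mu) ** 2) / (2 * s ** 2))


def linear_basis_model(x, w, basis, f):
    """Equation (1): y(x, w) = f(sum_j w_j * phi_j(x)) for a scalar input x."""
    a = sum(w_j * phi_j(x) for w_j, phi_j in zip(w, basis))
    return f(a)


# Three fixed Gaussian basis functions and illustrative weights (not from PRML).
basis = [gaussian_basis(mu, 0.5) for mu in (-1.0, 0.0, 1.0)]
w = [0.5, -1.0, 2.0]

y_reg = linear_basis_model(0.3, w, basis, f=lambda a: a)  # regression: identity f
y_cls = linear_basis_model(0.3, w, basis, f=sigmoid)      # classification: sigmoid f
```

In this model only `w` is learned; the network below replaces the fixed `basis` with functions whose own parameters are also adapted.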

Now consider a basic three-layer neural network. First, we form $M$ linear combinations of the input variables $x_1, \ldots, x_D$:

$$a_j = \sum_{i=1}^{D} w_{ji}^{(1)} x_i + w_{j0}^{(1)} \tag{2}$$

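where $j = 1, \ldots, M$, and the superscript $(1)$ indicates that the parameters belong to the first layer of the network. The $w_{ji}^{(1)}$ are called weights, the $w_{j0}^{(1)}$ biases, and the $a_j$ activations. Each activation is then transformed by a differentiable, nonlinear activation function $h(\cdot)$: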

$$z_j = h(a_j) \tag{3}$$

The $z_j$ play the same role as the basis functions $\phi_j(\mathbf{x})$ in (1); in a neural network they are the outputs of the hidden units. They are again combined linearly to give the output unit activations:

$$a_k = \sum_{j=1}^{M} w_{kj}^{(2)} z_j + w_{k0}^{(2)} \tag{4}$$

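where $k = 1, \ldots, K$ and $K$ is the total number of outputs. For regression problems the outputs are simply $y_k = a_k$. For multiple binary classification problems, each output activation is passed through a logistic sigmoid:

$$y_k = \sigma(a_k) \tag{5}$$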

where

$$\sigma(a) = \frac{1}{1 + \exp(-a)} \tag{6}$$

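Combining these stages, with sigmoid outputs, gives the overall network function:

$$y_k(\mathbf{x},\mathbf{w}) = \sigma\left(\sum_{j=1}^{M} w_{kj}^{(2)}\, h\left(\sum_{i=1}^{D} w_{ji}^{(1)} x_i + w_{j0}^{(1)}\right) + w_{k0}^{(2)}\right) \tag{7}$$

Thus the neural network model is simply a nonlinear function from a set of input variables $\{x_i\}$ to a set of output variables $\{y_k\}$, controlled by a vector $\mathbf{w}$ of adjustable parameters.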
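The forward pass of equations (2) to (7) can be sketched in plain Python as below. This is a minimal illustration, not PRML's code: the choice of `tanh` for the hidden activation $h$ is an assumption (the notes leave $h$ generic), and the weight values are made up.

```python
import math


def sigmoid(a):
    """Logistic sigmoid, equation (6)."""
    return 1.0 / (1.0 + math.exp(-a))


def forward(x, W1, b1, W2, b2, h=math.tanh, out=sigmoid):
    """Forward pass of the two-layer network in equation (7).

    x  : list of D inputs x_i
    W1 : M rows of D first-layer weights w_ji^(1);  b1: M biases w_j0^(1)
    W2 : K rows of M second-layer weights w_kj^(2); b2: K biases w_k0^(2)
    h  : hidden activation (tanh is an assumption here; PRML leaves h generic)
    out: output activation; sigmoid for classification, identity for regression
    """
    # Equation (2): first-layer activations a_j
    a_hidden = [sum(w_ji * x_i for w_ji, x_i in zip(row, x)) + b_j
                for row, b_j in zip(W1, b1)]
    # Equation (3): hidden-unit outputs z_j = h(a_j)
    z = [h(a_j) for a_j in a_hidden]
    # Equation (4): output activations a_k
    a_out = [sum(w_kj * z_j for w_kj, z_j in zip(row, z)) + b_k
             for row, b_k in zip(W2, b2)]
    # Equation (5): y_k = out(a_k)
    return [out(a_k) for a_k in a_out]


# Illustrative weights: D = 2 inputs, M = 3 hidden units, K = 1 output.
W1 = [[0.5, -1.2], [0.9, 0.3], [-0.4, 0.7]]
b1 = [0.1, -0.2, 0.3]
W2 = [[1.5, -0.8, 0.6]]
b2 = [0.05]

y_class = forward([1.0, 2.0], W1, b1, W2, b2)                   # sigmoid output
y_regr = forward([1.0, 2.0], W1, b1, W2, b2, out=lambda a: a)  # linear output
```

Passing `out=lambda a: a` switches the same network from the classification form (5) to the regression form $y_k = a_k$.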

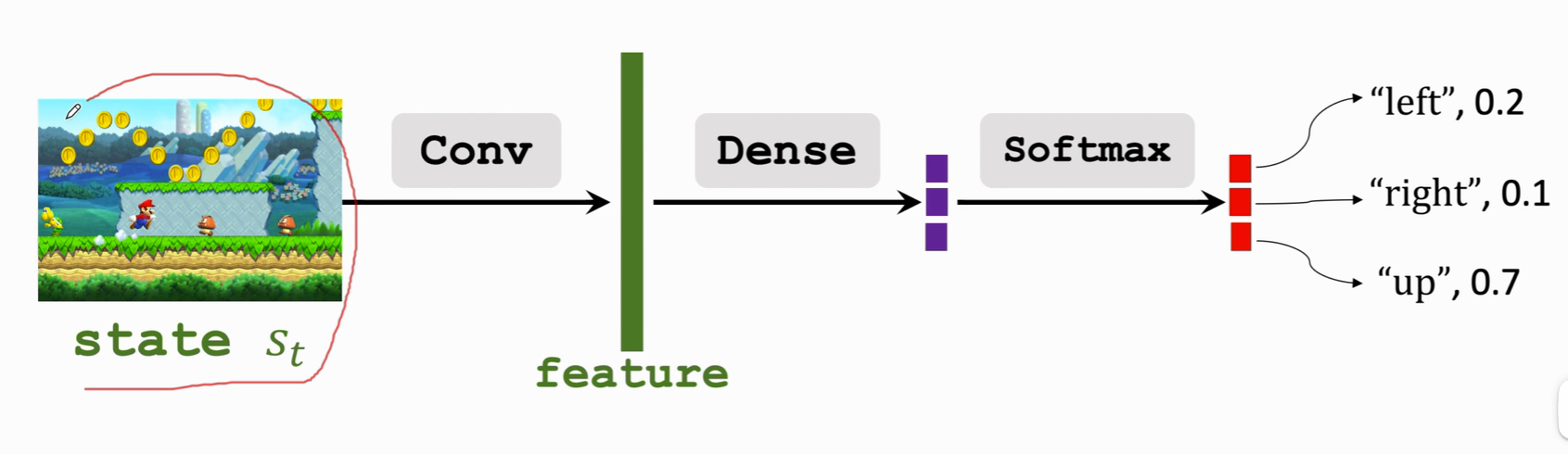

This network can be represented by the following figure :

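By introducing an additional input variable $x_0$ whose value is clamped at $x_0 = 1$, the first-layer biases can be absorbed into the set of weights; doing the same for the hidden units with $z_0 = 1$ absorbs the second-layer biases. Equation (7) then becomes: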

$$y_k(\mathbf{x},\mathbf{w}) = \sigma\left(\sum_{j=0}^{M} w_{kj}^{(2)}\, h\left(\sum_{i=0}^{D} w_{ji}^{(1)} x_i\right)\right) \tag{8}$$
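A quick numerical check that the bias-absorbed form (8) computes the same function as (7). This is a sketch under the same assumptions as before (tanh hidden units, sigmoid outputs, made-up weights); the helper `absorb` is a hypothetical name that simply moves each bias into column 0 of its weight row.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def forward_with_biases(x, W1, b1, W2, b2):
    """Equation (7): explicit biases, tanh hidden units, sigmoid outputs."""
    z = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    return [sigmoid(sum(w * zj for w, zj in zip(row, z)) + b) for row, b in zip(W2, b2)]


def absorb(W, b):
    """Move each bias into column 0 of its weight row, so w_j0 becomes an ordinary weight."""
    return [[b_j] + list(row) for row, b_j in zip(W, b)]


def forward_absorbed(x, W1_aug, W2_aug):
    """Equation (8): no separate biases; inputs get x_0 = 1 and the hidden layer gets z_0 = 1."""
    x_aug = [1.0] + list(x)
    z_aug = [1.0] + [math.tanh(sum(w * xi for w, xi in zip(row, x_aug))) for row in W1_aug]
    return [sigmoid(sum(w * zj for w, zj in zip(row, z_aug))) for row in W2_aug]


W1 = [[0.5, -1.2], [0.9, 0.3], [-0.4, 0.7]]
b1 = [0.1, -0.2, 0.3]
W2 = [[1.5, -0.8, 0.6], [-0.3, 0.2, 1.1]]
b2 = [0.05, -0.1]
x = [1.0, 2.0]

y_explicit = forward_with_biases(x, W1, b1, W2, b2)
y_absorbed = forward_absorbed(x, absorb(W1, b1), absorb(W2, b2))
```

The two results agree up to floating-point rounding, since only the order of additions differs.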

Because a composition of linear transformations is itself a linear transformation, if the activation functions of all the hidden units are linear, we can always find an equivalent network without hidden units. Furthermore, if the number of hidden units is smaller than the number of input or output units, the network can no longer represent the most general linear mapping from inputs to outputs, because information is lost in the dimensionality reduction at the hidden units.
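Both claims can be checked with a small sketch. With identity activations, $\mathbf{y} = W_2(W_1\mathbf{x} + \mathbf{b}_1) + \mathbf{b}_2 = (W_2 W_1)\mathbf{x} + (W_2\mathbf{b}_1 + \mathbf{b}_2)$, a single linear layer. The helper names and the sizes (2 inputs, 1 hidden unit, 2 outputs) are illustrative assumptions; with one hidden unit the combined $2 \times 2$ matrix has rank at most 1, so its determinant is zero.

```python
def matvec(A, v):
    """Matrix-vector product A v (A given as a list of rows)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def matmul(A, B):
    """Matrix product A B: entry (i, k) is sum_j A[i][j] * B[j][k]."""
    return [[sum(A[i][j] * B[j][k] for j in range(len(B))) for k in range(len(B[0]))]
            for i in range(len(A))]


def vadd(u, v):
    return [a + b for a, b in zip(u, v)]


def linear_two_layer(x, W1, b1, W2, b2):
    """Two-layer network whose hidden and output activations are the identity."""
    z = vadd(matvec(W1, x), b1)
    return vadd(matvec(W2, z), b2)


def collapse(W1, b1, W2, b2):
    """Equivalent single-layer network: y = (W2 W1) x + (W2 b1 + b2)."""
    return matmul(W2, W1), vadd(matvec(W2, b1), b2)


# D = 2 inputs, M = 1 hidden unit, K = 2 outputs (illustrative values).
W1, b1 = [[1.0, 2.0]], [0.5]
W2, b2 = [[3.0], [-0.5]], [0.0, 1.0]

W, b = collapse(W1, b1, W2, b2)
x = [0.7, -1.3]
y_net = linear_two_layer(x, W1, b1, W2, b2)
y_single = vadd(matvec(W, x), b)

# With M = 1 < D, K, W2 W1 has rank at most 1, so det(W) = 0: the network cannot
# represent a general (invertible) 2 x 2 linear map.
det = W[0][0] * W[1][1] - W[0][1] * W[1][0]
```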

The network structure in Figure 5.1 is the most common type of neural network. Terminology varies: it can be called a three-layer network (counting layers of units), a single-hidden-layer network (counting hidden layers), or a two-layer network (counting layers of adaptive weights, the convention PRML recommends).

One way to extend the network is to add skip-layer connections, in which the output units are connected not only to the hidden units but also directly to the inputs. PRML notes that a network with sigmoidal hidden units can always mimic a skip-layer connection (for bounded inputs) by using a first-layer weight small enough that the hidden unit is effectively linear over its operating range, and compensating with a large weight from the hidden unit to the output. In practice, however, it may be advantageous to include skip-layer connections explicitly.
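The mimicry argument can be illustrated numerically. In this sketch (function names and the test inputs are hypothetical), a single tanh hidden unit with first-layer weight `eps` and output weight `v / eps` approximates a direct connection of weight `v`, because $\tanh(\epsilon x) \approx \epsilon x$ for small $\epsilon x$; the error shrinks as `eps` decreases, but only for bounded inputs.

```python
import math


def skip_connection(x, v=1.0):
    """Explicit skip-layer connection: input x reaches the output with weight v."""
    return v * x


def mimicked_skip(x, eps, v=1.0):
    """Hypothetical mimicry via one tanh hidden unit.

    The first-layer weight eps is tiny, so tanh(eps * x) ~ eps * x stays in the
    linear range for bounded x; the output weight v / eps compensates.
    """
    return (v / eps) * math.tanh(eps * x)


for eps in (1e-1, 1e-2, 1e-3):
    err = max(abs(mimicked_skip(x, eps) - skip_connection(x)) for x in (-1.0, -0.5, 0.5, 1.0))
    print(f"eps={eps:g}  max error on sample inputs in [-1, 1]: {err:.2e}")
```

The very large output weight this requires is one reason explicit skip-layer connections can be preferable in practice.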

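More general network topologies are possible as long as the network remains feed-forward, that is, it contains no closed directed cycles, so no unit's output can feed back into its own input; this ensures the outputs are deterministic functions of the inputs. A unit can take its inputs from all or any subset of the units that come before it, not only from the immediately preceding layer, and even from other hidden units, provided no cycle is formed. For example, consider a network in which $z_1$, $z_2$ and $z_3$ are hidden units and $z_1$ also serves as an input to $z_2$ and $z_3$.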
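A general feed-forward network can be evaluated by visiting units in topological order, with each unit computing $z_k = h(\sum_j w_{kj} z_j)$ over the units $j$ that connect to it. The sketch below uses Python's standard `graphlib` module (Python 3.9+), whose `TopologicalSorter` raises `CycleError` when the connections form a closed directed cycle, which is exactly what the feed-forward requirement rules out. The topology and weights are hypothetical (not PRML's figure), biases are omitted, and every non-input unit, including the output, uses tanh for simplicity.

```python
import math
from graphlib import CycleError, TopologicalSorter


def evaluate(inputs, incoming, h=math.tanh):
    """Evaluate a feed-forward network with an arbitrary acyclic topology.

    inputs   : {input unit: value}
    incoming : {unit: {source unit: weight}}; each non-input unit k computes
               z_k = h(sum_j w_kj * z_j) over the units j that connect to it.
    Biases are omitted for brevity. TopologicalSorter raises CycleError if the
    connections contain a closed directed cycle.
    """
    graph = {unit: set(sources) for unit, sources in incoming.items()}
    values = dict(inputs)
    for unit in TopologicalSorter(graph).static_order():
        if unit not in values:  # inputs already have values; compute the rest in order
            values[unit] = h(sum(w * values[j] for j, w in incoming[unit].items()))
    return values


# Hypothetical topology: z1 feeds z2 and z3, and x1 also skips straight to y.
incoming = {
    "z1": {"x1": 0.5, "x2": -0.3},
    "z2": {"x1": 0.8, "z1": 1.2},
    "z3": {"z1": -0.7, "x2": 0.4},
    "y": {"z2": 1.0, "z3": -1.5, "x1": 0.2},
}
values = evaluate({"x1": 1.0, "x2": 2.0}, incoming)
```

Adding a connection such as `"z1": {..., "y": 0.3}` would create a cycle, and `evaluate` would raise `CycleError` instead of returning a value.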

The approximation properties of feed-forward networks have been widely studied, and such networks are said to be universal approximators. For example, a two-layer network with linear outputs can uniformly approximate any continuous function on a compact input domain to arbitrary accuracy, provided the network has a sufficiently large number of hidden units.
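The role of "enough hidden units" can be seen in a one-dimensional constructive sketch. This is an illustration, not the proof of the theorem and not a trained network: each steep sigmoid hidden unit adds one step of a staircase, and the linear output sums the steps. The target $\sin(2\pi x)$ on $[0, 1]$, the `sharpness` parameter, and all function names are assumptions made for this example.

```python
import math


def sigmoid(a):
    """Numerically stable logistic sigmoid (avoids overflow in math.exp)."""
    if a >= 0:
        return 1.0 / (1.0 + math.exp(-a))
    e = math.exp(a)
    return e / (1.0 + e)


def staircase_network(f, n_hidden, lo=0.0, hi=1.0, sharpness=40.0):
    """Two-layer net (sigmoid hidden units, linear output) approximating f on [lo, hi].

    Hidden unit i switches on near the midpoint c_i of the i-th sub-interval; its
    output weight is the jump f(t_i) - f(t_{i-1}) between neighbouring knots, and
    the output bias is f(lo).
    """
    step = (hi - lo) / n_hidden
    knots = [lo + i * step for i in range(n_hidden + 1)]
    centres = [(knots[i - 1] + knots[i]) / 2 for i in range(1, n_hidden + 1)]
    jumps = [f(knots[i]) - f(knots[i - 1]) for i in range(1, n_hidden + 1)]
    bias = f(lo)
    slope = sharpness / step  # first-layer weight: steeper as the grid gets finer

    def net(x):
        return bias + sum(v * sigmoid(slope * (x - c)) for v, c in zip(jumps, centres))

    return net


def max_error(f, g, lo=0.0, hi=1.0, n_points=1001):
    """Largest |f(x) - g(x)| over an evenly spaced grid on [lo, hi]."""
    xs = [lo + (hi - lo) * i / (n_points - 1) for i in range(n_points)]
    return max(abs(f(x) - g(x)) for x in xs)


target = lambda x: math.sin(2 * math.pi * x)
for m in (10, 50, 200):
    print(m, "hidden units -> max error", max_error(target, staircase_network(target, m)))
```

For this construction the error is bounded by roughly the largest change of the target over one sub-interval, so it falls as the number of hidden units grows; practical networks learn their weights instead.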

Symmetry of weight space

One property of feed-forward networks is that many different weight vectors $\mathbf{w}$ can give rise to exactly the same mapping from inputs to outputs.

Consider the two-layer network of Figure 5.1 with $M$ hidden units, full connectivity between layers (each unit receives input from all units in the preceding layer), and tanh activation functions. Take a single hidden unit. If we change the sign of all the weights and the bias feeding into it, the sign of its activation is reversed; because tanh is an odd function, $\tanh(-a) = -\tanh(a)$, the unit's output changes sign as well. Changing the sign of all the weights leading out of that unit then restores the value that flows into the output layer. So reversing the signs of a hidden unit's input and output weights together leaves the input-output mapping of the whole network unchanged. With $M$ hidden units there are $M$ such independent sign flips, giving $2^M$ equivalent weight vectors that generate the same input-output mapping (a factor of two per unit).
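The sign-flip symmetry can be checked numerically. In this sketch the weights are made up and the outputs are linear (an assumption; the symmetry concerns only the hidden layer). `flip_sign` is a hypothetical helper that negates the weights and bias into hidden unit `j` and the weights out of it.

```python
import math


def forward(x, W1, b1, W2, b2):
    """Two-layer network with tanh hidden units and linear outputs."""
    z = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    return [sum(w * zj for w, zj in zip(row, z)) + b for row, b in zip(W2, b2)]


def flip_sign(j, W1, b1, W2, b2):
    """Negate the weights and bias into hidden unit j and the weights out of it."""
    new_W1 = [[-w for w in row] if r == j else list(row) for r, row in enumerate(W1)]
    new_b1 = [-b if r == j else b for r, b in enumerate(b1)]
    new_W2 = [[-w if c == j else w for c, w in enumerate(row)] for row in W2]
    return new_W1, new_b1, new_W2, list(b2)


W1 = [[0.5, -1.2], [0.9, 0.3], [-0.4, 0.7]]
b1 = [0.1, -0.2, 0.3]
W2 = [[1.5, -0.8, 0.6]]
b2 = [0.05]
flipped = flip_sign(1, W1, b1, W2, b2)

for x in ([1.0, 2.0], [-0.3, 0.8], [0.0, 0.0]):
    print(forward(x, W1, b1, W2, b2), forward(x, *flipped))
```

The weight vectors differ, yet every input produces the same output.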

Similarly, the hidden units are interchangeable: swapping the values of all the weights (and the bias) leading into and out of one hidden unit with those of another hidden unit leaves the input-output mapping of the whole network unchanged. This gives $M!$ equivalent weight vectors, one for each ordering of the $M$ hidden units.

So a two-layer network with $M$ hidden units has an overall weight-space symmetry factor of $2^M M!$. These symmetries are not specific to tanh; they hold for a wide range of activation functions.
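The combined factor $2^M M!$ can be verified by brute force for a small network. The sketch below (hypothetical helper names, made-up weights, linear outputs) applies every combination of hidden-unit permutation and sign flips, counts the distinct weight vectors, and checks that all of them compute the same output. For $M = 3$ the count should equal $2^3 \cdot 3! = 48$.

```python
import math
from itertools import permutations, product


def forward(x, W1, b1, W2, b2):
    """Two-layer network with tanh hidden units and linear outputs."""
    z = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    return [sum(w * zj for w, zj in zip(row, z)) + b for row, b in zip(W2, b2)]


def transform(perm, signs, W1, b1, W2, b2):
    """New hidden unit r is old unit perm[r], with its in/out weights scaled by signs[perm[r]]."""
    new_W1 = [[signs[p] * w for w in W1[p]] for p in perm]
    new_b1 = [signs[p] * b1[p] for p in perm]
    new_W2 = [[signs[p] * row[p] for p in perm] for row in W2]
    return new_W1, new_b1, new_W2, list(b2)


def symmetric_weight_vectors(W1, b1, W2, b2):
    """All weight settings reachable by the M! interchanges and 2^M sign flips."""
    M = len(W1)
    result = set()
    for perm in permutations(range(M)):
        for signs in product((1.0, -1.0), repeat=M):
            nW1, nb1, nW2, nb2 = transform(perm, signs, W1, b1, W2, b2)
            result.add((tuple(map(tuple, nW1)), tuple(nb1), tuple(map(tuple, nW2)), tuple(nb2)))
    return result


W1 = [[0.5, -1.2], [0.9, 0.3], [-0.4, 0.7]]
b1 = [0.1, -0.2, 0.3]
W2 = [[1.5, -0.8, 0.6]]
b2 = [0.05]

variants = symmetric_weight_vectors(W1, b1, W2, b2)
x = [1.0, 2.0]
y_ref = forward(x, W1, b1, W2, b2)[0]
max_dev = max(abs(forward(x, *v)[0] - y_ref) for v in variants)
print(len(variants), 2 ** len(W1) * math.factorial(len(W1)), max_dev)
```

The count grows very quickly: for $M = 10$ hidden units, $2^{10} \cdot 10! = 3{,}715{,}891{,}200$ weight vectors represent the same function. The distinct-count check relies on the hidden units having generic weights; if two units happened to share identical (or sign-reversed) weights, some transformations would coincide.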

边栏推荐

- Preliminary exploration of basic knowledge of MySQL

- ZABBIX agent2 monitors mongodb templates and configuration operations

- Distributed solution - Comprehensive decryption of distributed task scheduling platform - xxljob scheduling center cluster

- Database connection pool & jdbctemplate

- NPM install reports an error

- GPON other manufacturers' configuration process analysis

- The evolution of mobile cross platform technology

- GPS数据格式转换[通俗易懂]

- Distributed solution - distributed session consistency problem

- Pytorch two-layer loop to realize the segmentation of large pictures

猜你喜欢

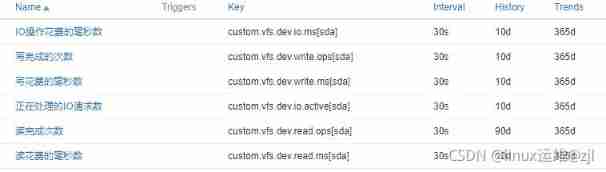

ZABBIX customized monitoring disk IO performance

Reinforcement learning - learning notes 3 | strategic learning

JSON parsing error special character processing (really speechless... Troubleshooting for a long time)

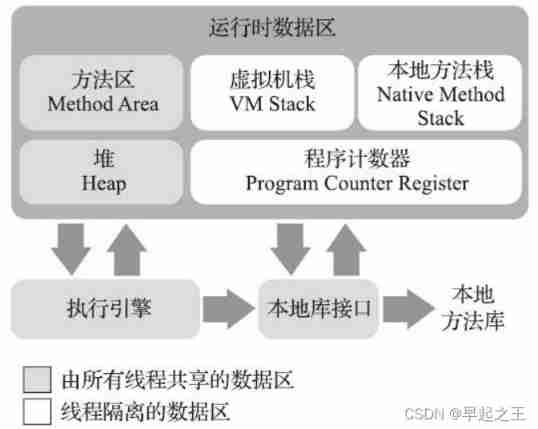

Learn the memory management of JVM 02 - memory allocation of JVM

MySQL index - extended data

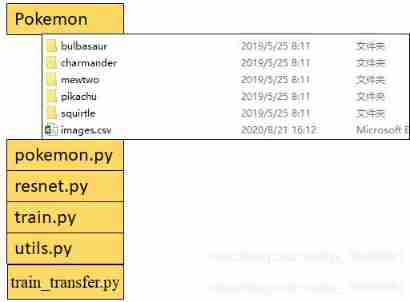

Resnet18 actual battle Baoke dream spirit

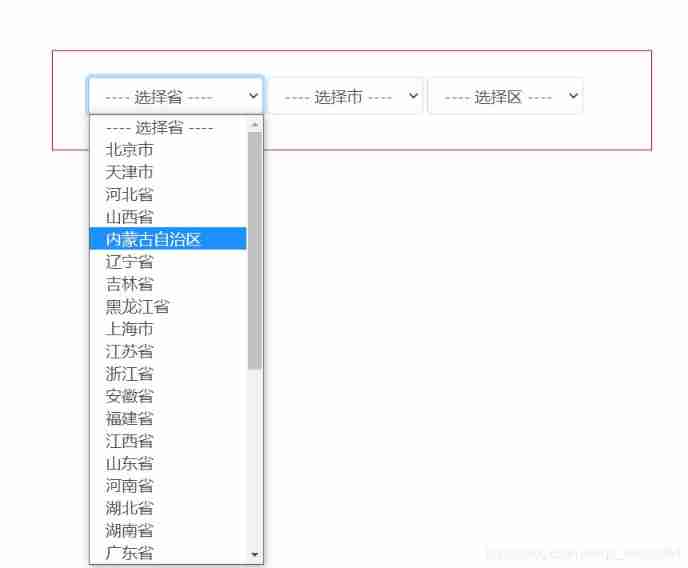

Select drop-down box realizes three-level linkage of provinces and cities in China

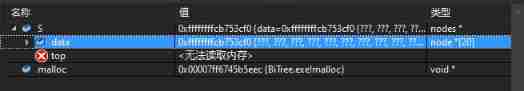

Summary of C language learning problems (VS)

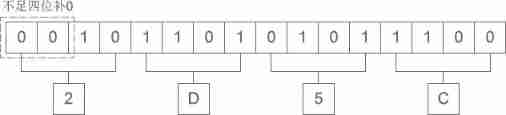

Hexadecimal conversion summary

ActiveMQ installation and deployment simple configuration (personal test)

随机推荐

Hexadecimal conversion summary

Read and understand the rendering mechanism and principle of flutter's three trees

byte2String、string2Byte

MySQL function

MySQL index (1)

Automated test lifecycle

struct MySQL

Get the variable address of structure member in C language

Kotlin函数

Correct opening method of redis distributed lock

The relationship between the size change of characteristic graph and various parameters before and after DL convolution operation

Time conversion error

Add a new cloud disk to Huawei virtual machine

Solve the problem of cache and database double write data consistency

Migrate data from Mysql to neo4j database

View and terminate the executing thread in MySQL

Seven polymorphisms

ZABBIX agent2 monitors mongodb nodes, clusters and templates (official blog)

MySQL multi table operation

Swift - add navigation bar