
4. Data splitting in a Flink real-time project

2022-07-03 21:27:00 【Contestant No.1 position】

1. Abstract

The log data collected earlier has already been saved to Kafka, where it serves as the ODS layer for logs. The log data read from the Kafka ODS layer falls into three types: page logs, startup logs, and exposure logs. All three are user behavior data, but their data structures are completely different, so they need to be split. The split logs are written back to different Kafka topics, which form the DWD layer for logs.

Page logs are output to the main stream, startup logs to the startup side output stream, and exposure logs to the exposure side output stream.

2. Identify new and old users

The client already flags users as new or old, but the flag is not accurate enough and has to be reconfirmed with real-time computation (no business operations are involved; it is only a simple status check).

Data splitting is implemented with side output streams

Based on its content, the log data is divided into three types: page logs, startup logs, and exposure logs. The data in the different streams is pushed to different downstream Kafka topics.

3. Code implementation

In the app package, create the Flink job BaseLogTask.java.

The job consumes data from Kafka with Flink and stores the consumption checkpoints in HDFS. Remember to create the path manually and grant permissions on it.

Checkpointing is optional; you can turn it off during testing.

MyKafkaUtil.java utility class

4. New and old visitor status repair

Rules for identifying new and old visitors

The front end records whether a visitor is new or old, but that record may be wrong, so it is confirmed again here. For each mid, the first visit date is saved as state. When a later log arrives from that device, the date is read from state and compared with the date the log was generated. If the state is not empty and the state date differs from the date of the current log, the visitor is a returning visitor; if the is_new flag is 1 in that case, the flag is repaired.
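The rule above can be sketched in plain Java, without any Flink dependency. This is only an illustration under assumptions: the class name `NewVisitorRepair` is hypothetical, a `HashMap` stands in for Flink keyed state, the log timestamp is assumed to be epoch milliseconds, and the repaired flag is assumed to be the string "0".

```java
import java.time.Instant;
import java.time.LocalDate;
import java.time.ZoneId;
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper: a plain-Java stand-in for the per-mid state check.
class NewVisitorRepair {
    // Stand-in for keyed state: mid -> first visit date.
    private final Map<String, LocalDate> firstVisitByMid = new HashMap<>();
    private final ZoneId zone;

    NewVisitorRepair(ZoneId zone) {
        this.zone = zone;
    }

    /** Returns the (possibly repaired) is_new flag for one log record. */
    String repair(String mid, String isNew, long tsMillis) {
        if (!"1".equals(isNew)) {
            return isNew; // only records flagged as new are candidates for repair
        }
        LocalDate logDate = Instant.ofEpochMilli(tsMillis).atZone(zone).toLocalDate();
        LocalDate firstVisit = firstVisitByMid.get(mid);
        if (firstVisit == null) {
            // First log seen for this mid: remember the date, keep the flag.
            firstVisitByMid.put(mid, logDate);
            return "1";
        }
        // State exists: a different date means a returning visitor, so repair to "0".
        return firstVisit.equals(logDate) ? "1" : "0";
    }
}
```

For example, a device whose first log arrives on one day keeps `is_new = 1` for the rest of that day, and a log from the same mid on a later day that still claims `is_new = 1` comes back as "0". In the real job the map would be replaced by keyed state scoped to each mid.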

5. Data splitting with side output streams

After the new and old visitor repair above, the log data is divided into three types.

Startup log tag definition: OutputTag<String> startTag = new OutputTag<String>("start"){};

Exposure log tag definition: OutputTag<String> displayTag = new OutputTag<String>("display"){};

Page logs are output to the main stream, startup logs to the startup side output stream, and exposure logs to the exposure side output stream.

After splitting, the data is sent to Kafka:

- dwd_start_log: startup logs

- dwd_display_log: exposure logs

- dwd_page_log: page logs
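The routing decision can be sketched in plain Java, independent of Flink and Kafka. Several things here are assumptions that the text does not state: the class name `LogSplitter` is hypothetical, a parsed log is modeled as a `Map`, a startup log is assumed to carry a `start` field, and exposure entries are assumed to be nested in a page log under a `displays` list. Only the three topic names come from the list above.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper: routes parsed logs to the three DWD topics in memory.
class LogSplitter {
    static final String START_TOPIC = "dwd_start_log";
    static final String DISPLAY_TOPIC = "dwd_display_log";
    static final String PAGE_TOPIC = "dwd_page_log";

    // topic -> records routed to it (stands in for the main stream and side outputs)
    private final Map<String, List<Object>> byTopic = new HashMap<>();

    void process(Map<String, Object> log) {
        // Assumed field name: a "start" field marks a startup log.
        if (log.get("start") != null) {
            emit(START_TOPIC, log);
            return;
        }
        // Anything else is treated as a page log and goes to the main stream.
        emit(PAGE_TOPIC, log);
        // Assumed field name: exposures are nested under "displays".
        Object displays = log.get("displays");
        if (displays instanceof List) {
            for (Object display : (List<?>) displays) {
                emit(DISPLAY_TOPIC, display);
            }
        }
    }

    private void emit(String topic, Object record) {
        byTopic.computeIfAbsent(topic, t -> new ArrayList<>()).add(record);
    }

    List<Object> sent(String topic) {
        return byTopic.getOrDefault(topic, Collections.emptyList());
    }
}
```

In the Flink job, the `emit` calls for startup and exposure records would correspond to writing to `startTag` and `displayTag` from a process function, and each resulting stream would get its own Kafka sink.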

边栏推荐

- Is it OK for fresh students to change careers to do software testing? The senior answered with his own experience

- Decompile and modify the non source exe or DLL with dnspy

- MySQL——JDBC

- (5) User login - services and processes - History Du touch date stat CP

- Study diary: February 14th, 2022

- Yiwen teaches you how to choose your own NFT trading market

- 常用sql集合

- JS notes (III)

- Hcie security Day12: supplement the concept of packet filtering and security policy

- MySQL - database backup

猜你喜欢

MySQL——JDBC

Collections SQL communes

Goodbye 2021, how do programmers go to the top of the disdain chain?

设计电商秒杀系统

Custom view incomplete to be continued

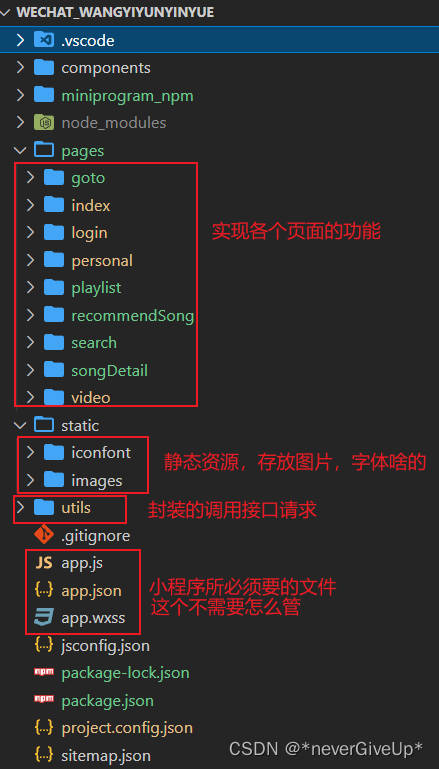

Imitation Netease cloud music applet

不同业务场景该如何选择缓存的读写策略?

(5) Web security | penetration testing | network security operating system database third-party security, with basic use of nmap and masscan

Nacos common configuration

Tidb's initial experience of ticdc6.0

随机推荐

Link aggregation based on team mechanism

Global and Chinese market of AC induction motors 2022-2028: Research Report on technology, participants, trends, market size and share

Service discovery and load balancing mechanism -service

JS three families

不同业务场景该如何选择缓存的读写策略?

内存分析器 (MAT)

[secretly kill little buddy pytorch20 days -day02- example of image data modeling process]

How to install sentinel console

Is it OK for fresh students to change careers to do software testing? The senior answered with his own experience

Visiontransformer (I) -- embedded patched and word embedded

全网都在疯传的《老板管理手册》(转)

请教大家一个问题,用人用过flink sql的异步io关联MySQL中的维表吗?我按照官网设置了各种

Nmap and masscan have their own advantages and disadvantages. The basic commands are often mixed to increase output

JVM JNI and PVM pybind11 mass data transmission and optimization

Global and Chinese market of gallic acid 2022-2028: Research Report on technology, participants, trends, market size and share

Ask and answer: dispel your doubts about the virtual function mechanism

@Scenario of transactional annotation invalidation

Remember the experience of automatically jumping to spinach station when the home page was tampered with

MySQL - idea connects to MySQL

Capture de paquets et tri du contenu externe - - autoresponder, composer, statistiques [3]