
Detailed explanation of the output end (head) of YOLOv5 | CSDN creation check-in

2022-07-03 05:06:00 【TT ya】

I'm a beginner in deep learning. I'm writing this as study notes to record what I've learned, and I hope it also helps others who are just getting started. Corrections from more experienced readers are welcome. If anything here infringes on your rights, let me know and I will remove it.

Note: some readers prefer to go through code analysis statement by statement, so I have prepared two versions. One analyzes the code piece by piece; the other is the complete code with all of the analysis in the comments (it reads more comfortably if you copy it into VS Code). The content of both is the same.

Catalog

One 、Bounding box Loss function

2、The loss function used by YOLOv5 -- CIOU_Loss

Two 、NMS (non-maximum suppression)

Three 、Source code analysis (class Detect in yolo.py)

2、Complete annotated code

One 、Bounding box Loss function

1、IOU_Loss

IOU stands for intersection over union: the ratio of the area of the intersection of the predicted box and the ground-truth box to the area of their union.

IOU_Loss is the loss function based on IOU: IOU_Loss = 1 - IOU

But it has some disadvantages:

(1) If the predicted box does not overlap the ground-truth box at all, IOU is 0 no matter how far apart the two boxes are. The loss then carries no information about that distance and its gradient is zero, so the prediction cannot be optimized.

(2) Two predictions can have the same IOU with the same ground-truth box (the boxes have the same areas and the same intersection area) while overlapping it in completely different ways. IOU_Loss cannot tell these cases apart.
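Disadvantage (1) is easy to check numerically. The following is a minimal sketch in plain Python, assuming boxes are given as (x1, y1, x2, y2) corners; the function names iou and iou_loss are my own and are not taken from the YOLOv5 code.

```python
def iou(box_a, box_b):
    """IoU of two boxes given as (x1, y1, x2, y2) corner coordinates."""
    # Corners of the intersection rectangle (may be empty).
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def iou_loss(pred, target):
    """IOU_Loss = 1 - IOU."""
    return 1.0 - iou(pred, target)


gt = (0, 0, 10, 10)
near = (11, 0, 21, 10)    # just next to the ground-truth box
far = (100, 0, 110, 10)   # far away from it

# Both predictions miss the ground truth entirely, so both losses are 1.0:
# the loss says nothing about which one is closer.
print(iou_loss(near, gt), iou_loss(far, gt))
```

The two disjoint boxes get the same loss even though one is almost touching the target, which is the behaviour CIOU_Loss is meant to fix.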

2、The loss function used by YOLOv5 -- CIOU_Loss

Because of these defects of IOU_Loss, YOLOv5 uses CIOU_Loss. (There are several other IOU-based loss functions as well: GIOU_Loss and DIOU_Loss.)

C: the minimum enclosing rectangle of the predicted box and the ground-truth box

Distance_2: the Euclidean distance between the center of the predicted box and the center of the ground-truth box

Distance_C: the diagonal length of C

v: the aspect-ratio influence factor (where w is the width, h is the height, gt denotes the ground-truth box, and p denotes the predicted box)

CIOU_Loss takes the overlap area, the aspect ratio, and the center-point distance into account.
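As I understand the CIoU formulation, these quantities combine as CIOU_Loss = 1 - IOU + Distance_2^2 / Distance_C^2 + alpha * v, with v = (4 / pi^2) * (arctan(w_gt / h_gt) - arctan(w_p / h_p))^2 and alpha = v / ((1 - IOU) + v). The sketch below is a minimal plain-Python version under that assumption. The function name ciou_loss, the corner-format boxes and the eps guard are illustrative choices, not YOLOv5's own implementation.

```python
import math


def ciou_loss(pred, gt, eps=1e-9):
    """CIoU loss for two boxes given as (x1, y1, x2, y2) corners."""
    px1, py1, px2, py2 = pred
    gx1, gy1, gx2, gy2 = gt

    # Overlap term: plain IoU.
    inter_w = max(0.0, min(px2, gx2) - max(px1, gx1))
    inter_h = max(0.0, min(py2, gy2) - max(py1, gy1))
    inter = inter_w * inter_h
    pw, ph = px2 - px1, py2 - py1
    gw, gh = gx2 - gx1, gy2 - gy1
    iou = inter / (pw * ph + gw * gh - inter + eps)

    # Distance_2 squared: squared distance between the two box centers.
    d2 = ((px1 + px2) / 2 - (gx1 + gx2) / 2) ** 2 \
        + ((py1 + py2) / 2 - (gy1 + gy2) / 2) ** 2

    # Distance_C squared: squared diagonal of the minimum enclosing rectangle C.
    cw = max(px2, gx2) - min(px1, gx1)
    ch = max(py2, gy2) - min(py1, gy1)
    c2 = cw ** 2 + ch ** 2 + eps

    # Aspect-ratio factor v and its weight alpha.
    v = (4 / math.pi ** 2) * (math.atan(gw / (gh + eps)) - math.atan(pw / (ph + eps))) ** 2
    alpha = v / (1 - iou + v + eps)

    return 1 - iou + d2 / c2 + alpha * v


gt = (0, 0, 10, 10)
near = (11, 0, 21, 10)
far = (100, 0, 110, 10)
print(ciou_loss(near, gt), ciou_loss(far, gt))
```

Unlike IOU_Loss, the two non-overlapping boxes now receive different losses, and the farther box is penalized more because of the center-distance term.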

Two 、NMS (non-maximum suppression)

1、Why it is needed

(1) If the object is large and the grid cells are small, one object may be detected by several grid cells.

(2) How do we tell whether these cells have detected the same object rather than several objects of the same class?

2、How YOLO detects objects

To explain what NMS is, we first have to cover how YOLO detects objects.

YOLO splits the image into s^2 grid cells (s per side), all of the same size, and each of the s^2 cells predicts B bounding boxes (prediction boxes). Every predicted bounding box carries 5 values: the center position of the object (x, y), the height h, the width w, and the confidence of the prediction (the confidence that the cell contains a target). Besides its B bounding boxes, each cell also predicts which class it belongs to. If there are C classes to predict, that adds C class confidences (one per class). In total the prediction therefore contains s*s*(5*B+C) values.
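To make the count s*s*(5*B+C) concrete, here is a tiny sketch. The values s=7, B=2 and C=20 are only an illustrative assumption (they are the settings I recall from the original YOLO paper on Pascal VOC) and the function name is hypothetical.

```python
def yolo_output_size(s, b, c):
    """Number of predicted values for an s x s grid, b boxes per cell and c classes."""
    per_cell = 5 * b + c  # 5 values per box plus one confidence per class
    return s * s * per_cell


# 7 * 7 cells, each with 5 * 2 + 20 = 30 values
print(yolo_output_size(7, 2, 20))  # 1470
```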

3、What is NMS?

Scheme 1: keep only the prediction with the highest confidence (the confidence that the cell contains a target) and delete all the others. But this approach cannot solve problem (2) above.

Scheme 2: take the bounding box of the cell with the highest confidence (the confidence that the cell contains a target) as the maximum bounding box, and compute the IOU between it and the bounding boxes of the other cells. If the IOU exceeds a threshold, for example 0.5, the two cells are considered to have predicted the same object, and the box with the lower confidence is deleted. Nice~
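Scheme 2 can be sketched in a few lines of plain Python. This is a minimal single-class version under my own assumptions (corner-format boxes, a greedy loop, hypothetical function names); it is not the batched, per-class routine that YOLOv5 actually calls.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Return the indices of the boxes kept by greedy non-maximum suppression."""
    # Visit boxes from the highest confidence to the lowest.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)  # the current maximum bounding box
        keep.append(best)
        # Drop every remaining box that overlaps it too much (same object).
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores)
print(kept)  # [0, 2]
```

The first two boxes overlap heavily, so only the more confident one survives. The third box does not overlap them and is kept, which is how scheme 2 answers question (2): a second object of the same class has a low IOU with the first and is not suppressed.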

Three 、Source code analysis (class Detect in yolo.py)

1、Piece-by-piece analysis

The class has 3 methods, so the explanation is split into three parts, one per method.

def __init__

First come some parameter settings.

def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
    # anchors: YOLOv5 uses 3 groups of anchors, one for each of the 3 outputs of the Neck.
    # Each initial anchor is a (w, h) pair measured in pixels of the original image.
    # Each layer has 3 anchors, so there are 3 * 3 = 9 in total.
    super().__init__()
    self.nc = nc  # number of classes to predict
    self.no = nc + 5  # number of outputs per anchor: nc class confidences plus x, y, w, h and objectness
    self.nl = len(anchors)  # number of detection layers
    self.na = len(anchors[0]) // 2  # number of anchors per detection layer

nc: the number of classes to predict

no: the number of outputs per prediction box (anchor): one confidence per class (nc), plus the width and height of the box, its center coordinates, and the confidence that the box contains an object, for a total of 5 + 80 = 85 values.

nl: the number of detection layers

na: the number of anchors (prediction boxes) per detection layer

    self.grid = [torch.zeros(1)] * self.nl  # initial grid, one placeholder per detection layer
    self.anchor_grid = [torch.zeros(1)] * self.nl  # initial anchor grid
    self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))  # shape(nl,na,2)
    self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)  # output conv
    self.inplace = inplace  # use in-place ops (e.g. slice assignment)

grid: the initial grid; each detection layer gets its own placeholder grid

anchor_grid: the initial anchor grid

self.m: the output convolutions, one per detection layer with kernel size 1, each producing (outputs per anchor) * (number of anchors) output channels
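The bookkeeping in __init__ can be reproduced without torch. The sketch below assumes the anchor values commonly shipped in yolov5s.yaml for COCO (an assumption on my part, they do not appear in the text above) and only redoes the integer arithmetic.

```python
# Assumed default anchors: one tuple per detection layer, flattened (w, h) pairs in pixels.
anchors = (
    (10, 13, 16, 30, 33, 23),
    (30, 61, 62, 45, 59, 119),
    (116, 90, 156, 198, 373, 326),
)
nc = 80                     # number of classes

no = nc + 5                 # outputs per anchor: x, y, w, h, objectness + nc class confidences
nl = len(anchors)           # number of detection layers
na = len(anchors[0]) // 2   # anchors per layer (each anchor is a (w, h) pair)
out_channels = no * na      # output channels of each conv in self.m

# The same regrouping that .view(self.nl, -1, 2) performs on the anchor tensor.
anchor_pairs = [[layer[j:j + 2] for j in range(0, len(layer), 2)] for layer in anchors]

print(no, nl, na, out_channels)  # 85 3 3 255
print(anchor_pairs[0])           # [(10, 13), (16, 30), (33, 23)]
```

This is where the 255 in x(bs,255,20,20) comes from: 3 anchors times 85 outputs each.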

def _make_grid

Builds the grid. All predictions are expressed in grid-cell units rather than in pixels of the original image. The grid size differs from layer to layer and equals the width and height of that layer's output.

def _make_grid(self, nx=20, ny=20, i=0):
    # Build the grid. Predictions are in grid-cell units, not original-image pixels;
    # each layer has its own grid size, equal to the w, h of that layer's output.
    d = self.anchors[i].device
    if check_version(torch.__version__, '1.10.0'):  # torch>=1.10.0 meshgrid workaround for torch>=0.7 compatibility
        yv, xv = torch.meshgrid([torch.arange(ny, device=d), torch.arange(nx, device=d)], indexing='ij')
    else:
        yv, xv = torch.meshgrid([torch.arange(ny, device=d), torch.arange(nx, device=d)])
    grid = torch.stack((xv, yv), 2).expand((1, self.na, ny, nx, 2)).float()
    anchor_grid = (self.anchors[i].clone() * self.stride[i]) \
        .view((1, self.na, 1, 1, 2)).expand((1, self.na, ny, nx, 2)).float()
    return grid, anchor_grid  # build the two grids and return them

The basic structure is a version check first: the same operation is performed with a slightly different call depending on the PyTorch version.

torch.meshgrid() generates the coordinate grid of size ny * nx. The range over ny gives the vertical (row) coordinates and the range over nx gives the horizontal (column) coordinates.
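To see what the grid contains, here is a small plain-Python stand-in for the meshgrid and stack calls. It is only a sketch: make_grid is a hypothetical helper, and nested lists replace the tensor of shape (ny, nx, 2).

```python
def make_grid(nx, ny):
    """Return grid such that grid[y][x] == (x, y), i.e. (column index, row index)."""
    # With indexing='ij', yv[y][x] == y and xv[y][x] == x;
    # stacking (xv, yv) on the last axis stores (x, y) at position [y][x].
    return [[(x, y) for x in range(nx)] for y in range(ny)]


grid = make_grid(nx=3, ny=2)
for row in grid:
    print(row)
# [(0, 0), (1, 0), (2, 0)]
# [(0, 1), (1, 1), (2, 1)]
```

Each entry is the top-left corner of a cell in grid units, which is exactly the offset that forward later adds to the predicted x, y.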

def forward

The main structure is a loop that processes each detection layer in turn.

def forward(self, x):
    z = []  # inference output
    for i in range(self.nl):  # loop over the detection layers; the core work happens inside this loop
        x[i] = self.m[i](x[i])  # conv
        bs, _, ny, nx = x[i].shape  # bs is the batch size; ny, nx are the height and width of the feature map
        x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()

view() changes the shape without changing the data: x(bs,255,20,20) to x(bs,3,85,20,20). This separates the information of the 3 anchors in a detection layer; each prediction box carries self.no values (85 here).

permute(0, 1, 3, 4, 2): x[i] has 5 dimensions, and dims (2,3,4) are reordered to (3,4,2), so x(bs,3,85,20,20) becomes x(bs,3,20,20,85).

contiguous() returns a tensor that is laid out contiguously in memory, copying the data if necessary.
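The effect of view() and permute() on indices and shapes can be checked without torch. The helpers below are hypothetical and only mimic the index arithmetic, assuming the usual row-major layout.

```python
def split_channel(c, no=85):
    """Map a flat conv output channel to (anchor index, output index),
    which is how view(bs, na, no, ny, nx) splits the channel axis."""
    return divmod(c, no)


def permute_shape(shape, dims):
    """Shape of a tensor after permute(*dims)."""
    return tuple(shape[d] for d in dims)


print(split_channel(0))    # (0, 0)  -> anchor 0, output 0 (x)
print(split_channel(85))   # (1, 0)  -> anchor 1, output 0 (x)
print(split_channel(254))  # (2, 84) -> anchor 2, last class confidence
print(permute_shape((1, 3, 85, 20, 20), (0, 1, 3, 4, 2)))  # (1, 3, 20, 20, 85)
```

After the permute, the 85 values of one prediction box sit next to each other on the last axis, which is what makes the slicing y[..., 0:2] and y[..., 2:4] in the next step possible.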

Next comes a branch on whether the model is in training mode (if not self.training:). The code inside it runs only at inference time.

        if not self.training:  # inference
            if self.onnx_dynamic or self.grid[i].shape[2:4] != x[i].shape[2:4]:
                # decide whether the grid of detection layer i has to be (re)built
                self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)
            y = x[i].sigmoid()

The sigmoid activation is the S-shaped function used for the soft decision in logistic regression. It maps every value into (0, 1), so each entry of y is a fraction (the center x, y, the w, h and the confidences are all normalized).

            if self.inplace:
                y[..., 0:2] = (y[..., 0:2] * 2 - 0.5 + self.grid[i]) * self.stride[i]  # xy
                y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
            else:  # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953
                xy = (y[..., 0:2] * 2 - 0.5 + self.grid[i]) * self.stride[i]  # xy
                wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                y = torch.cat((xy, wh, y[..., 4:]), -1)

Here is the analysis of the self.inplace branch:

The first statement operates on the center point x, y.

(1) The x, y of the box center is multiplied by 2 and reduced by 0.5, so its range becomes (-0.5, 1.5). This lets a prediction reach half a cell beyond its own grid cell.

(2) Then self.grid[i] is added, i.e. the column and row index of the grid cell, which turns the in-cell offset into a position on the grid.

(3) Finally the result is multiplied by self.stride[i], the stride of this layer, which maps the position back to pixels in the input image.

The second statement operates on the width and height of the prediction box: the sigmoid output is multiplied by 2 and squared, giving a factor in (0, 4) that scales the anchor size in self.anchor_grid[i].
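The two statements can be followed by hand for a single prediction. The sketch below applies the same formulas in plain Python; the function decode, the cell (3, 4), the stride 32 and the anchor (116, 90) are illustrative assumptions, not values taken from the code above.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def decode(raw_xywh, cell_xy, anchor_wh, stride):
    """Decode one raw (x, y, w, h) output into pixel coordinates.

    cell_xy   -- (column, row) of the grid cell, i.e. the entry of self.grid[i]
    anchor_wh -- anchor size in pixels, i.e. the entry of self.anchor_grid[i]
    """
    sx, sy, sw, sh = (sigmoid(t) for t in raw_xywh)
    cx = (sx * 2 - 0.5 + cell_xy[0]) * stride   # xy statement, x component
    cy = (sy * 2 - 0.5 + cell_xy[1]) * stride   # xy statement, y component
    w = (sw * 2) ** 2 * anchor_wh[0]            # wh statement, width
    h = (sh * 2) ** 2 * anchor_wh[1]            # wh statement, height
    return cx, cy, w, h


# Raw outputs of 0 give sigmoid = 0.5: the box sits at the cell center
# and has exactly the anchor's size.
print(decode((0.0, 0.0, 0.0, 0.0), cell_xy=(3, 4), anchor_wh=(116, 90), stride=32))
# (112.0, 144.0, 116.0, 90.0)
```

Very negative or very positive raw values push the center towards -0.5 or 1.5 cells from the cell corner and the size factor towards 0 or 4, which matches the ranges described in (1) and in the width/height statement.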

            z.append(y.view(bs, -1, self.no))

The result is appended to z with shape (bs, na * ny * nx, no): the batch, then every prediction box on this layer, then the 85 values of each box (center x, y, width w, height h, object confidence, and the 80 class confidences).

    return x if self.training else (torch.cat(z, 1), x)

Finally the return value depends on the mode: in training mode it returns x, otherwise it returns (torch.cat(z, 1), x).
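To get a feel for the size of torch.cat(z, 1), the number of prediction boxes can be counted by hand. The sketch assumes a square 640 x 640 input and strides of 8, 16 and 32 for the three detection layers (both are my assumptions, although stride 32 is consistent with the 20 x 20 grid used in the examples above).

```python
def num_predictions(img_size=640, strides=(8, 16, 32), na=3):
    """Total number of prediction boxes over all detection layers."""
    # Each layer has an (img_size // stride) x (img_size // stride) grid with na boxes per cell.
    return sum(na * (img_size // s) ** 2 for s in strides)


# 3 * (80*80 + 40*40 + 20*20) boxes, each with 85 values
print(num_predictions())  # 25200
```

Under these assumptions the concatenated inference output has shape (bs, 25200, 85), and it is this set of boxes that NMS then filters.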

2、Complete annotated code

import torch
import torch.nn as nn

from utils.general import check_version  # helper from the YOLOv5 repository


class Detect(nn.Module):
    stride = None  # strides computed during build
    onnx_dynamic = False  # ONNX export parameter

    def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
        # anchors: YOLOv5 uses 3 groups of anchors, one for each of the 3 outputs of the Neck.
        # Each initial anchor is a (w, h) pair measured in pixels of the original image.
        # Each layer has 3 anchors, so there are 3 * 3 = 9 in total.
        super().__init__()
        self.nc = nc  # number of classes to predict
        self.no = nc + 5
        # Number of outputs per prediction box (anchor): one confidence per class (nc), plus the
        # width and height of the box, its center coordinates, and the object confidence (5 values).
        self.nl = len(anchors)  # number of detection layers
        self.na = len(anchors[0]) // 2  # number of anchors per detection layer
        self.grid = [torch.zeros(1)] * self.nl  # initial grid, one placeholder per detection layer
        self.anchor_grid = [torch.zeros(1)] * self.nl  # initial anchor grid
        self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))
        # shape(nl,na,2)
        self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)
        # output conv: (outputs per prediction box) * (number of anchors) output channels
        self.inplace = inplace  # use in-place ops (e.g. slice assignment)

    def forward(self, x):
        z = []  # inference output
        for i in range(self.nl):  # loop over the detection layers
            x[i] = self.m[i](x[i])  # conv
            bs, _, ny, nx = x[i].shape
            # bs is the batch size; ny, nx are the height and width of the feature map
            x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()
            # view() changes the shape without changing the data: x(bs,255,20,20) to x(bs,3,85,20,20).
            # It separates the 3 anchors of a detection layer; each box carries self.no values (85 here).
            # permute(0, 1, 3, 4, 2): x[i] has 5 dims, (2,3,4) are reordered to (3,4,2),
            # so x(bs,3,85,20,20) becomes x(bs,3,20,20,85).
            # contiguous() returns a tensor laid out contiguously in memory, copying if necessary.

            if not self.training:  # inference
                if self.onnx_dynamic or self.grid[i].shape[2:4] != x[i].shape[2:4]:
                    self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)
                    # (re)build the grid of this detection layer

                y = x[i].sigmoid()
                # Sigmoid activation: the S-shaped function used for the soft decision in logistic
                # regression. It maps every value into (0, 1), so each entry of y is a fraction
                # (the center x, y, the w, h and the confidences are all normalized).
                if self.inplace:
                    y[..., 0:2] = (y[..., 0:2] * 2 - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    # The x, y of the box center is multiplied by 2 and reduced by 0.5, so its range
                    # becomes (-0.5, 1.5) and a prediction can reach half a cell beyond its own cell.
                    # Adding self.grid[i] adds the column and row index of the grid cell.
                    # Multiplying by self.stride[i] maps the position back to input-image pixels.
                    y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                    # Width and height: a factor in (0, 4) that scales the anchor size.
                else:  # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953
                    xy = (y[..., 0:2] * 2 - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                    y = torch.cat((xy, wh, y[..., 4:]), -1)
                z.append(y.view(bs, -1, self.no))
                # Appended with shape (bs, na * ny * nx, no): the batch, every prediction box on this
                # layer, and the 85 values per box (x, y, w, h, object confidence, 80 class confidences).

        return x if self.training else (torch.cat(z, 1), x)

    def _make_grid(self, nx=20, ny=20, i=0):
        # Build the grid. Predictions are in grid-cell units, not original-image pixels;
        # each layer has its own grid size, equal to the w, h of that layer's output.
        d = self.anchors[i].device
        if check_version(torch.__version__, '1.10.0'):  # torch>=1.10.0 meshgrid workaround for torch>=0.7 compatibility
            yv, xv = torch.meshgrid([torch.arange(ny, device=d), torch.arange(nx, device=d)], indexing='ij')
            # torch.meshgrid() generates the coordinate grid of size ny * nx; the range over ny gives
            # the vertical coordinates and the range over nx gives the horizontal coordinates.
        else:
            yv, xv = torch.meshgrid([torch.arange(ny, device=d), torch.arange(nx, device=d)])
        grid = torch.stack((xv, yv), 2).expand((1, self.na, ny, nx, 2)).float()
        anchor_grid = (self.anchors[i].clone() * self.stride[i]) \
            .view((1, self.na, 1, 1, 2)).expand((1, self.na, ny, nx, 2)).float()
        return grid, anchor_grid  # build the two grids and return them

Criticism and corrections are welcome in the comment area. Thank you~

边栏推荐

- Class loading mechanism (detailed explanation of the whole process)

- Objects. Requirenonnull method description

- leetcode860. Lemonade change

- RT thread flow notes I startup, schedule, thread

- Shell script Basics - basic grammar knowledge

- Notes | numpy-07 Slice and index

- Market status and development prospect forecast of global button dropper industry in 2022

- [backtrader source code analysis 5] rewrite several time number conversion functions in utils with Python

- Current market situation and development prospect forecast of the global fire boots industry in 2022

- Without 50W bride price, my girlfriend was forcibly dragged away. What should I do

猜你喜欢

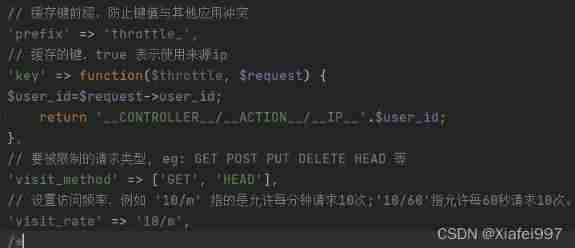

Interface frequency limit access

Actual combat 8051 drives 8-bit nixie tube

Retirement plan fails, 64 year old programmer starts work again

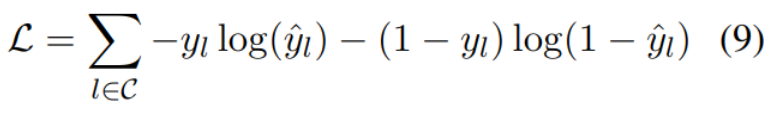

Thesis reading_ Chinese NLP_ ELECTRA

Thesis reading_ Chinese medical model_ eHealth

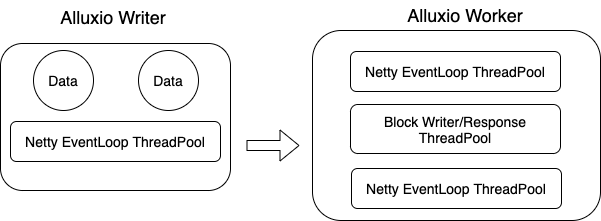

Shuttle + Alluxio 加速内存Shuffle起飞

论文阅读_ICD编码_MSMN

Valentine's day limited withdrawal guide: for one in 200 million of you

Career planning of counter attacking College Students

Use Sqlalchemy module to obtain the table name and field name of the existing table in the database

随机推荐

Gbase8s composite index (I)

编译GCC遇到的“pthread.h” not found问题

[develop wechat applet local storage with uni app]

Notes | numpy-10 Iterative array

Wechat applet waterfall flow and pull up to the bottom

Current market situation and development prospect forecast of the global fire boots industry in 2022

Common methods of JS array

Learn to use the idea breakpoint debugging tool

JDBC database operation

SSM framework integration

Silent authorization login and registration of wechat applet

[backtrader source code analysis 4] use Python to rewrite the first function of backtrader: time2num, which improves the efficiency by 2.2 times

1111 online map (30 points)

Do you know UVs in modeling?

Wechat applet distance and map

Interface frequency limit access

@RequestMapping

appium1.22. Appium inspector after X version needs to be installed separately

Market status and development prospects of the global autonomous marine glider industry in 2022

Force GCC to compile 32-bit programs on 64 bit platform