
Notes on Creating and Manipulating PyTorch Tensors

2022-08-02 19:51:00 【此何人哉tan】

Contents

Indexing, Slicing, Joining, Mutating Ops

I. Tensor

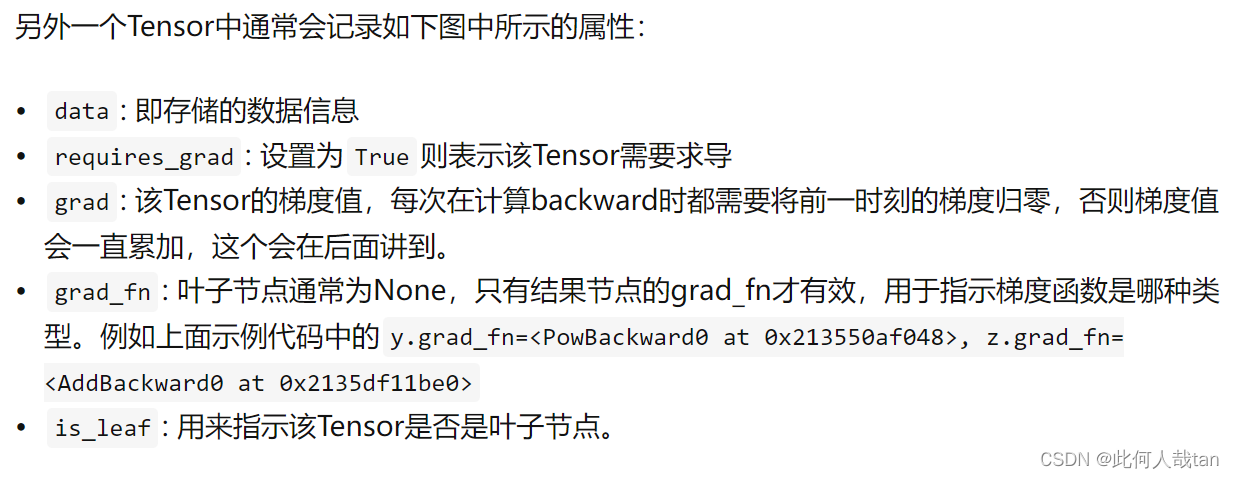

1. Tensor attributes

numel # Returns the total number of elements in the input tensor.

repeat_interleave # Repeats each element of a tensor a given number of times.

>>> a = torch.randn(1, 2, 3, 4, 5)

>>> torch.numel(a)

120

>>> a = torch.zeros(4,4)

>>> torch.numel(a)

16

>>> x = torch.tensor([1, 2, 3])

>>> x.repeat_interleave(2)

tensor([1, 1, 2, 2, 3, 3])

>>> y = torch.tensor([[1, 2], [3, 4]])

>>> torch.repeat_interleave(y, 2)

tensor([1, 1, 2, 2, 3, 3, 4, 4])

>>> torch.repeat_interleave(y, 3, dim=1)

tensor([[1, 1, 1, 2, 2, 2],

[3, 3, 3, 4, 4, 4]])

>>> torch.repeat_interleave(y, torch.tensor([1, 2]), dim=0)

tensor([[1, 2],

[3, 4],

[3, 4]])

>>> torch.repeat_interleave(y, torch.tensor([1, 2]), dim=0, output_size=3)

tensor([[1, 2],

[3, 4],

[3, 4]])

2. Creation

(1)
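This item is left empty in the notes. As a minimal sketch, assuming it was meant to cover the most common creation functions, the block below builds tensors from Python data, constant fills, ranges, and the `*_like` helpers. All variable names are illustrative.

```python
import torch

# Build a tensor from nested Python lists; the dtype is inferred (int64 here).
from_list = torch.tensor([[1, 2, 3], [4, 5, 6]])

# Constant-filled tensors of a given shape.
zeros = torch.zeros(2, 3)
ones = torch.ones(2, 3)
sevens = torch.full((2, 3), 7.0)

# Evenly spaced values: arange excludes the end point, linspace includes it.
steps = torch.arange(0, 10, 2)
grid = torch.linspace(0.0, 1.0, steps=5)

# The "*_like" helpers copy shape and dtype from an existing tensor.
zeros_like = torch.zeros_like(from_list)

print(from_list.shape, from_list.dtype)
print(steps.tolist())
print(grid.tolist())
```

Passing an explicit `dtype=` argument to any of these functions overrides the inferred type when that matters.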

(2) Other

>>> torch.eye(3)

tensor([[ 1., 0., 0.],

[ 0., 1., 0.],

[ 0., 0., 1.]])

II. Basic Tensor Operations

Indexing, Slicing, Joining, Mutating Ops

torch.stack()

torch.cat()

torch.squeeze()

torch.unsqueeze()
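The four functions listed above differ mainly in what they do to the shape. The following sketch, with illustrative tensors `a` and `b`, contrasts `torch.cat` (joins along an existing dimension) with `torch.stack` (adds a new one), and shows `torch.unsqueeze` as the inverse of `torch.squeeze` on a given dimension.

```python
import torch

a = torch.tensor([[1, 2, 3], [4, 5, 6]])       # shape (2, 3)
b = torch.tensor([[7, 8, 9], [10, 11, 12]])    # shape (2, 3)

# cat joins along an existing dimension, so that dimension grows.
cat_rows = torch.cat([a, b], dim=0)    # shape (4, 3)
cat_cols = torch.cat([a, b], dim=1)    # shape (2, 6)

# stack inserts a new dimension, so all inputs must have identical shapes.
stacked = torch.stack([a, b], dim=0)   # shape (2, 2, 3)

# unsqueeze adds a size-1 dimension; squeeze on the same dim removes it again.
expanded = torch.unsqueeze(a, 0)       # shape (1, 2, 3)
restored = torch.squeeze(expanded, 0)  # shape (2, 3)

# Stacking is equivalent to unsqueezing every input and then concatenating.
same = torch.equal(stacked, torch.cat([a.unsqueeze(0), b.unsqueeze(0)], dim=0))

print(cat_rows.shape, cat_cols.shape, stacked.shape)
print(expanded.shape, restored.shape, same)
```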

>>> x = torch.zeros(2, 1, 2, 1, 2)

>>> x.size()

torch.Size([2, 1, 2, 1, 2])

>>> y = torch.squeeze(x)

>>> y.size()

torch.Size([2, 2, 2])

>>> y = torch.squeeze(x, 0)

>>> y.size()

torch.Size([2, 1, 2, 1, 2])

>>> y = torch.squeeze(x, 1)

>>> y.size()

torch.Size([2, 2, 1, 2])

III. Random sampling

bernoulli

multinomial

normal

poisson

rand

rand_like

randint

randint_like

randn

randn_like

randperm
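A short sketch of how several of the sampling functions listed above are called. The seed value, shapes, and parameter values are arbitrary choices for illustration; the drawn numbers depend on the PyTorch version and device, so no outputs are shown.

```python
import torch

torch.manual_seed(0)  # fix the seed so repeated runs draw the same values

u = torch.rand(2, 3)               # uniform on [0, 1)
n = torch.randn(2, 3)              # standard normal
k = torch.randint(0, 10, (2, 3))   # integers in [0, 10)
perm = torch.randperm(5)           # random permutation of 0..4
like = torch.rand_like(n)          # uniform, same shape and dtype as n

# bernoulli: each entry of the input is the probability of drawing a 1.
coin = torch.bernoulli(torch.full((2, 3), 0.5))

# normal: per-element means with a shared standard deviation.
g = torch.normal(mean=torch.zeros(4), std=1.0)

# poisson: each entry of the input is the rate parameter.
p = torch.poisson(torch.full((4,), 3.0))

# multinomial: sample indices according to (possibly unnormalized) weights.
weights = torch.tensor([0.1, 0.2, 0.7])
idx = torch.multinomial(weights, num_samples=2, replacement=False)

print(u.shape, k.dtype, sorted(perm.tolist()), idx.tolist())
```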

# In-place random sampling

torch.Tensor.bernoulli_()

torch.Tensor.cauchy_()

torch.Tensor.exponential_()

torch.Tensor.geometric_()

torch.Tensor.log_normal_()

torch.Tensor.normal_()

torch.Tensor.random_()

torch.Tensor.uniform_()

IV. Math operations

1. Pointwise (element-wise) operations

torch — PyTorch 1.12 documentation https://pytorch.org/docs/stable/torch.html#pointwise-ops


(1) Addition, subtraction, multiplication, division, absolute value

add

sub # torch.subtract() is an alias for torch.sub().

mul # torch.multiply() is an alias for torch.mul().

div # torch.divide() is an alias for torch.div().

abs # torch.absolute() is an alias for torch.abs().

Addition example:

import torch

a_list = [[1, -2, 3], [-4, 5, -6]]

a_tensor = torch.tensor(a_list)

print(a_tensor)

# output:

tensor([[ 1, -2, 3],

[-4, 5, -6]])

# Addition

# ops 1

a_tensor.add(10)

# output1

tensor([[11, 8, 13],

[ 6, 15, 4]])

# ops 2

b_tensor = torch.ones_like(a_tensor)

a_tensor.add(b_tensor, alpha=19) # alpha defaults to 1; returns a_tensor + alpha * b_tensor without modifying a_tensor

# output2

tensor([[20, 17, 22],

[15, 24, 13]])

(2) Exponential, logarithm, power, and square-root operations
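No example is given for the exponential, logarithm, power, and square-root item, so here is a minimal sketch using the corresponding pointwise functions from `torch`. The input values are chosen only so the results are easy to check by hand.

```python
import torch

x = torch.tensor([1.0, 4.0, 9.0])

exp_x = torch.exp(x)        # e ** x, element by element
log_x = torch.log(x)        # natural logarithm
log2_x = torch.log2(x)      # base-2 logarithm
squared = torch.pow(x, 2)   # same result as x ** 2
roots = torch.sqrt(x)       # same result as x ** 0.5

print(squared.tolist())     # values 1, 16, 81
print(roots.tolist())       # values 1, 2, 3

# exp and log are inverses of each other, up to floating-point error.
round_trip_ok = torch.allclose(torch.log(exp_x), x)
print(round_trip_ok)
```

These functions expect floating-point input for meaningful results; `torch.sqrt` and `torch.log` return `nan` for negative real inputs.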

(3) Trigonometric and inverse trigonometric functions
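A brief sketch of the trigonometric functions and their inverses. Angles are in radians; the specific angles are illustrative.

```python
import math
import torch

angles = torch.tensor([0.0, math.pi / 6, math.pi / 3])

s = torch.sin(angles)   # approximately 0, 0.5, 0.866
c = torch.cos(angles)   # approximately 1, 0.866, 0.5
t = torch.tan(angles)   # equals s / c where c is non-zero

# asin recovers the original angles because they lie in [-pi/2, pi/2].
back = torch.asin(s)

# atan2(y, x) is quadrant-aware, unlike atan(y / x).
y = torch.tensor([1.0, -1.0])
x = torch.tensor([-1.0, -1.0])
theta = torch.atan2(y, x)   # approximately 3*pi/4 and -3*pi/4

print(s.tolist(), c.tolist())
print(theta.tolist())
```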

(4) Hyperbolic and inverse hyperbolic functions
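The hyperbolic functions follow the same calling pattern. This sketch, with illustrative inputs, checks the identity cosh²(x) − sinh²(x) = 1 and round-trips values through the inverse functions.

```python
import torch

x = torch.tensor([-1.0, 0.0, 1.0])

sh = torch.sinh(x)
ch = torch.cosh(x)
th = torch.tanh(x)   # equals sh / ch

# The identity cosh^2(x) - sinh^2(x) = 1 holds up to floating-point error.
identity = ch ** 2 - sh ** 2

# asinh and atanh invert sinh and tanh over the whole input range used here.
back_from_sinh = torch.asinh(sh)
back_from_tanh = torch.atanh(th)

print(identity.tolist())
print(back_from_sinh.tolist(), back_from_tanh.tolist())
```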

(5) Other common operations

clamp

reshape

view
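The `reshape` and `view` entries above are easy to confuse. As a sketch of the difference: `view` never copies and therefore requires a compatible memory layout, while `reshape` returns a view when it can and falls back to a copy otherwise. The tensors below are illustrative.

```python
import torch

x = torch.arange(6)        # values 0..5, contiguous

v = x.view(2, 3)           # shares storage with x
v[0, 0] = 100              # so the write is visible through x as well
print(x[0].item())         # 100

t = v.t()                  # transpose: shape (3, 2), no longer contiguous
print(t.is_contiguous())   # False

# view cannot flatten the transposed tensor without copying, so it raises.
try:
    t.view(6)
    view_failed = False
except RuntimeError:
    view_failed = True

# reshape succeeds by making a copy when a view is not possible.
r = t.reshape(6)
r[0] = -1                  # modifies the copy only; x is unaffected

# A dimension given as -1 is inferred from the remaining ones.
inferred = x.reshape(-1, 2)

print(view_failed, r.tolist(), x.tolist(), inferred.shape)
```

Calling `t.contiguous().view(6)` is another way to get the flattened result when an explicit copy is acceptable.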

clamp # torch.clip() is an alias for torch.clamp().

a = torch.randn(4)

# output1

tensor([-1.7120, 0.1734, -0.0478, -0.0922])

torch.clamp(a, min=-0.5, max=0.5)

# output2

tensor([-0.5000, 0.1734, -0.0478, -0.0922])

(6)

# torch.bmm

>>> input = torch.randn(10, 3, 4)

>>> mat2 = torch.randn(10, 4, 5)

>>> res = torch.bmm(input, mat2)

>>> res.size()

torch.Size([10, 3, 5])

References: torch.clamp — PyTorch 1.12 documentation

PyTorch: a detailed explanation of the difference between view() and reshape() (blog of 地球被支点撬走啦, CSDN)

边栏推荐

猜你喜欢

随机推荐

【数据分析】:什么是数据分析?

ALV报表学习总结

服务器Centos7 静默安装Oracle Database 12.2

网络协议介绍

golang刷leetcode 经典(9)为运算表达式设计优先级

Kali命令ifconfig报错command not found

golang刷leetcode 经典(11) 朋友圈

J9数字货币论:识别Web3新的稀缺性:开源开发者

2022-07-28

Geoserver+mysql+openlayers2

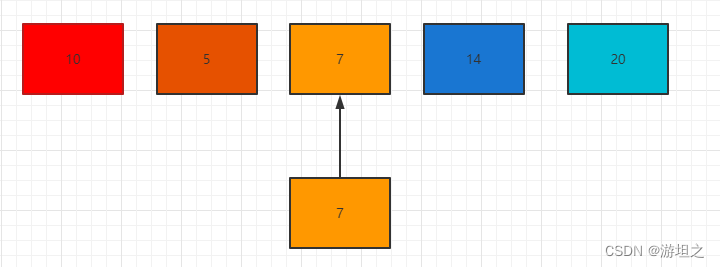

顺序查找和折半查找,看这篇就够了

我用这一招让团队的开发效率提升了 100%!

Thread线程类基本使用(上)

姑姑:给小学生出点口算题

Caldera(一)配置完成的虚拟机镜像及admin身份简单使用

LeetCode:622. 设计循环队列【模拟循环队列】

成为黑客不得不学的语言,看完觉得你们还可吗?

AI Scientist: Automatically discover hidden state variables of physical systems

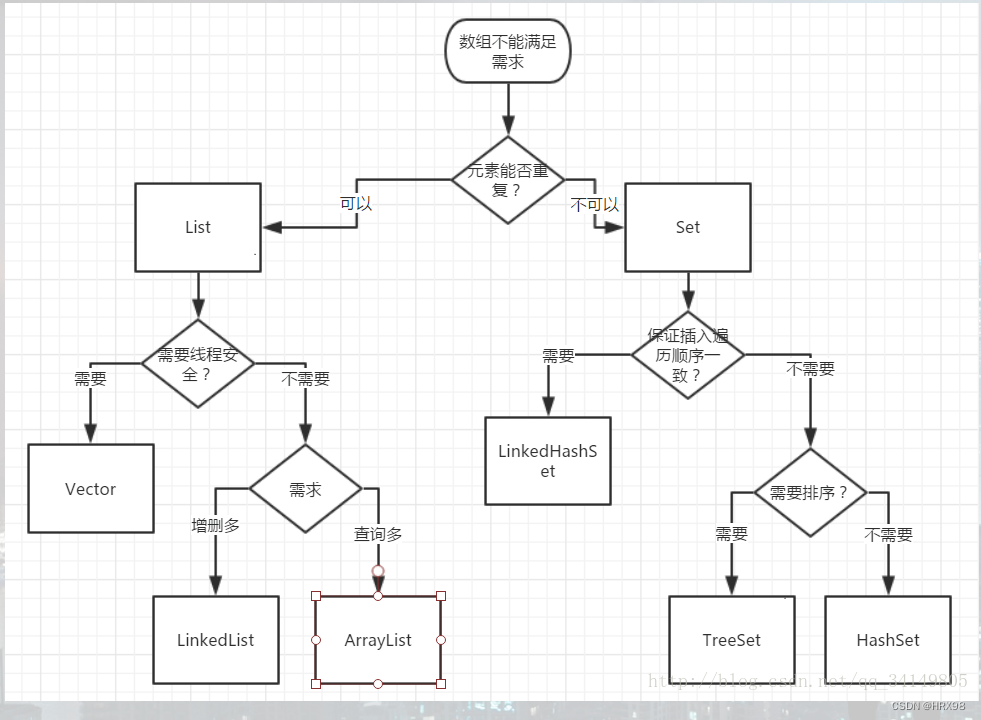

解析Collection接口中的常用的被实现子类重写的方法

AI科学家:自动发现物理系统的隐藏状态变量