
Unity Shader Essentials (入门精要): personal summary, Basics (I)

2022-07-07 08:54:00 【Zero if】

A summary of my study notes. References include, but are not limited to, Mao Xingyun's condensed summary of RTR3 (Real-Time Rendering, 3rd edition). The illustrations are mostly taken from Unity Shader Essentials and from that condensed summary.

Chapter 2: The rendering pipeline

The division into stages feels a little different from what I learned before, but Mao's condensed RTR3 summary divides the pipeline the same way, so I will go with this one.

Three major stages

The pipeline has three major stages: the application stage, the geometry stage, and the rasterization stage.

1. Application stage

Summary

This stage is mostly preparation. Rendering starts on the CPU, which hands the work over to the GPU. The CPU first prepares the scene data (including the camera position, the view frustum, and so on), then performs coarse-grained culling to discard invisible objects, and finally sets the render state (which material, textures, and shader to use). The output is a set of rendering primitives (points, lines, triangles) that is passed to the geometry stage.

Breakdown

- Load data into video memory

- Set the render state

- Issue a draw call

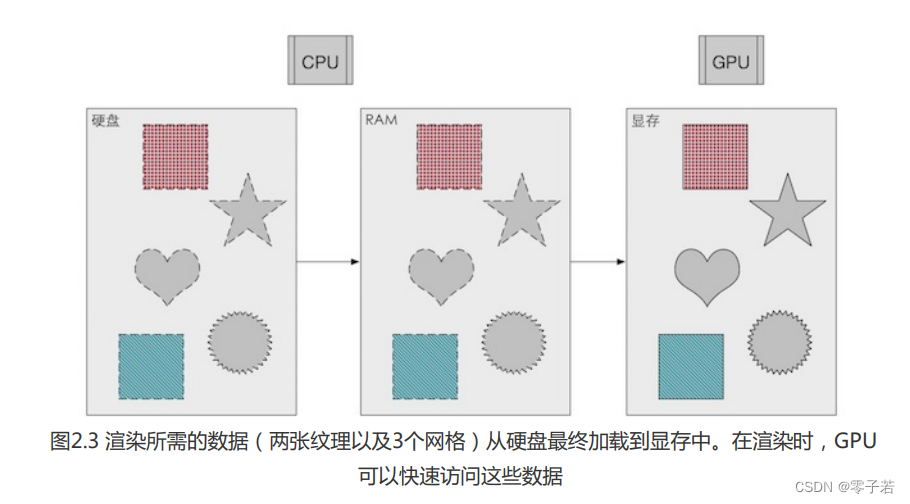

(1) Load data into video memory

Render data travels: hard disk --> system memory (RAM) --> video memory (VRAM)

Why load it into video memory (on the graphics card)? (I never learned operating systems well; skip this note if you did...)

First, the graphics card accesses data in video memory faster, and many graphics cards cannot access RAM directly at all, so the data is loaded into video memory.

So what does the graphics card actually do for rendering?

This comes down to the relationship between the GPU and the graphics card: the GPU (Graphics Processing Unit) is one of the core components of the graphics card, alongside the video memory, the circuit board, the heatsink, and so on.

So rendering starts on the CPU, and the data ends up with the GPU.

(2) Set the render state

In the words of the original book: the state defines how the meshes in the scene are rendered. This includes which shaders to use (some, such as the tessellation shader and the geometry shader, are optional), as well as the light sources, materials, and so on.

(3) Issue a draw call

A draw call is simply a rendering command sent from the CPU to the GPU (CPU: "Render this!" GPU: "OK!").

Why do draw calls affect performance, and how can they be reduced?

1. Every draw call requires the CPU to prepare data and commands for the GPU. The rendering work on the GPU is the same either way, but the more draw calls there are, the more time the CPU spends submitting them, which lowers overall efficiency.

2. The solution is batching: merge many small draw calls into one large one. This works best for static objects. Also avoid very small meshes and avoid using too many materials.
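The idea behind batching can be sketched in a few lines of Python. This is only a toy model under a stated assumption: objects that share a material can be submitted together as one draw call. The function name `batch_by_material` and the scene data are made up for illustration, and real engine batching has further constraints that are ignored here.

```python
from collections import defaultdict

def batch_by_material(objects):
    """Group (mesh, material) pairs so each material needs one submission.

    Toy assumption: meshes sharing a material can be merged into one draw call.
    """
    batches = defaultdict(list)
    for mesh, material in objects:
        batches[material].append(mesh)
    return dict(batches)

# Hypothetical scene: four objects, two materials.
scene = [
    ("crate_a", "wood"),
    ("crate_b", "wood"),
    ("barrel", "wood"),
    ("lamp", "metal"),
]

unbatched_draw_calls = len(scene)    # one draw call per object
batches = batch_by_material(scene)
batched_draw_calls = len(batches)    # one draw call per material
```

In this sketch, fewer materials means fewer batches, which matches the advice above to avoid using too many materials.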

Stages 2 and 3 below both run on the GPU.

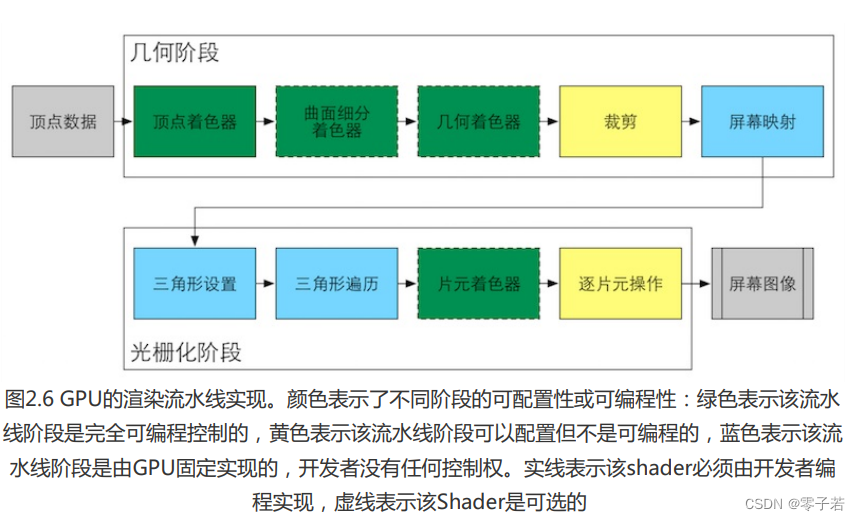

2. Geometry stage

Summary

This stage handles most per-vertex and per-polygon operations, that is, almost everything related to geometry: which primitives need to be drawn, how to draw them, and where to draw them.

Breakdown

- Vertex shader

- Projection

- Clipping

- Screen mapping

(1) Vertex shader

There are two main jobs here: coordinate transformation and vertex shading. Mao splits them into two stages, while Feng's book merges them into the vertex shader; they are essentially the same thing.

Coordinate transformation: transform the model into a space suitable for rendering.

This is the classic MVP (model, view, projection) transformation, which takes vertex coordinates from model space to homogeneous clip space.
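As a rough illustration of the MVP transformation, here is a minimal pure-Python sketch. The helper names (`mat_mul`, `mat_vec`, `translation`, `perspective`) and the scene values are assumptions made for this example. The projection matrix follows the OpenGL-style convention (camera looking down the negative z axis), which may differ from what a given engine uses internally.

```python
import math

def mat_mul(a, b):
    """Multiply two 4x4 matrices stored as nested lists (row-major)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def mat_vec(m, v):
    """Multiply a 4x4 matrix by a 4-component column vector."""
    return [sum(m[i][k] * v[k] for k in range(4)) for i in range(4)]

def translation(tx, ty, tz):
    return [[1, 0, 0, tx], [0, 1, 0, ty], [0, 0, 1, tz], [0, 0, 0, 1]]

def perspective(fov_y_deg, aspect, near, far):
    """OpenGL-style perspective matrix (assumed convention)."""
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    return [
        [f / aspect, 0, 0, 0],
        [0, f, 0, 0],
        [0, 0, (far + near) / (near - far), 2 * far * near / (near - far)],
        [0, 0, -1, 0],
    ]

model = translation(0, 0, -5)         # place the object 5 units in front of the camera
view = translation(0, 0, 0)           # camera at the origin, so the view matrix is identity
projection = perspective(90, 1.0, 1.0, 10.0)

mvp = mat_mul(projection, mat_mul(view, model))
clip = mat_vec(mvp, [1.0, 1.0, 0.0, 1.0])   # model-space vertex -> homogeneous clip space
```

The result `clip` is still a four-component homogeneous coordinate; its w component (5 here, the distance in front of the camera) is what the later divide uses.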

Per-vertex shading: the goal is to determine the lighting effect on the material at each vertex of the model.

This is where the name shader comes from: the operation of determining the effect of light on a material is called shading.

After the coordinate transformation we still need to know what each vertex will look like, so every vertex is shaded according to the shading equation. The results, including color, texture coordinates, and so on, are passed on to rasterization.

(2) Projection

The process of projecting the model from three-dimensional space to two-dimensional space.

Note one concept here: NDC, normalized device coordinates. The coordinates of whatever remains visible end up inside a [-1,1] cube; this is the normalization. The projection is usually either orthographic or perspective. For the details of the normalization, see Games101.
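For a perspective projection, the step from homogeneous clip coordinates to NDC is a division by w. A minimal sketch, where the function name `to_ndc` and the sample coordinate are assumptions for illustration:

```python
def to_ndc(clip):
    """Perspective divide: homogeneous clip coordinates (x, y, z, w) -> NDC (x, y, z)."""
    x, y, z, w = clip
    return (x / w, y / w, z / w)

# Hypothetical clip-space vertex with w = 5.
ndc = to_ndc((1.0, 1.0, 2.5, 5.0))
```

For an orthographic projection w stays 1, so the divide changes nothing.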

(3) Clipping

This one is easy to understand: only what lies inside the canonical cube is displayed. Everything else is invisible to the camera and does not need to be rendered, so it is clipped away.
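The decision made for each primitive can be sketched as below, assuming the [-1,1] cube described above. The names `inside` and `classify` are hypothetical, and the "clip" answer is deliberately conservative: it only says the primitive cannot be trivially kept or discarded, and the actual cutting of the primitive is not shown.

```python
def inside(vertex):
    """True if an NDC vertex lies inside the canonical [-1, 1] cube."""
    return all(-1.0 <= c <= 1.0 for c in vertex)

def classify(primitive):
    """Return 'keep', 'discard', or 'clip' for a list of NDC vertices."""
    if all(inside(v) for v in primitive):
        return "keep"                      # fully inside: pass through unchanged
    for axis in range(3):
        if all(v[axis] < -1.0 for v in primitive) or all(v[axis] > 1.0 for v in primitive):
            return "discard"               # entirely beyond one face of the cube
    return "clip"                          # may straddle the boundary: needs real clipping

visible = classify([(0.0, 0.0, 0.0), (0.5, 0.0, 0.0), (0.0, 0.5, 0.0)])
hidden = classify([(2.0, 0.0, 0.0), (3.0, 0.0, 0.0), (2.0, 1.0, 0.0)])
partial = classify([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 0.5, 0.0)])
```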

(4) Screen mapping

This is mainly the viewport transform: how to map the three-dimensional coordinates inside the cube onto the two-dimensional screen for display. It is essentially a scaling transform (plus a shift of the origin): x and y in [-1,1] are scaled to a screen that is width wide and height high.

Note that the z value is still there. This stage does nothing with it, but it is passed along because it is important depth information.
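A minimal sketch of the viewport transform, assuming the screen origin is in the bottom-left corner (some APIs put it in the top-left, which flips y). The function name `ndc_to_screen` is made up for this example.

```python
def ndc_to_screen(ndc, width, height):
    """Map NDC x, y in [-1, 1] to screen coordinates; z is passed through untouched."""
    x, y, z = ndc
    screen_x = (x + 1.0) * 0.5 * width    # shift [-1, 1] to [0, 2], then scale to [0, width]
    screen_y = (y + 1.0) * 0.5 * height   # same for the vertical axis
    return (screen_x, screen_y, z)

center = ndc_to_screen((0.0, 0.0, 0.5), 800, 600)
corner = ndc_to_screen((-1.0, -1.0, 0.0), 800, 600)
```

The z value comes out unchanged, ready to be used later as depth.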

3. Rasterization stage

Summary

Convert the points on the two-dimensional screen into pixels. Pixels that fall between vertices are filled in by interpolation.

The stage has two main steps: work out which pixels each primitive covers, then compute their final color.

Breakdown

- Triangle setup

- Triangle traversal

- Fragment shader

- Output merging (per-fragment operations)

(1) Triangle setup

Take the vertex data produced earlier and assemble it into the triangle mesh we need.

(2) Triangle traversal

Check whether each pixel is covered by a triangle; every covered pixel generates a fragment.

This stage includes interpolation: the information at the triangle's vertices is interpolated across the whole covered area.
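Coverage testing and interpolation can both be expressed with barycentric coordinates. The sketch below is a brute-force version that tests every pixel center of a tiny screen; real hardware is far smarter about which pixels it visits. All names (`edge`, `barycentric`, `rasterize`) and the sample triangle are assumptions for illustration.

```python
def edge(a, b, p):
    """Signed area term: which side of edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def barycentric(a, b, c, p):
    """Weights of vertices a, b, c at point p (they sum to 1)."""
    area = edge(a, b, c)
    return (edge(b, c, p) / area, edge(c, a, p) / area, edge(a, b, p) / area)

def rasterize(a, b, c, attrs, width, height):
    """Return {(x, y): interpolated attribute} for every covered pixel."""
    fragments = {}
    for y in range(height):
        for x in range(width):
            center = (x + 0.5, y + 0.5)              # sample at the pixel center
            weights = barycentric(a, b, c, center)
            if all(w >= 0 for w in weights):          # inside or on an edge: covered
                fragments[(x, y)] = sum(w * v for w, v in zip(weights, attrs))
    return fragments

# Triangle on a 4x4 screen; the attribute is 0 at a and c, 1 at b.
fragments = rasterize((0, 0), (4, 0), (0, 4), (0.0, 1.0, 0.0), 4, 4)
```

Each entry of `fragments` carries an interpolated value rather than a final color.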

Note the difference between fragments and pixels.

A fragment is not really a pixel. It is a collection of state, including depth, texture coordinates, and so on, and it only becomes a pixel after passing a series of tests.

(3) Fragment shader

Takes the interpolated results as input and outputs color values. The most important technique at this stage is texture mapping, which produces the pixel color.

But so far nothing has changed in the actual pixels on screen; all of this is preliminary data until output merging.

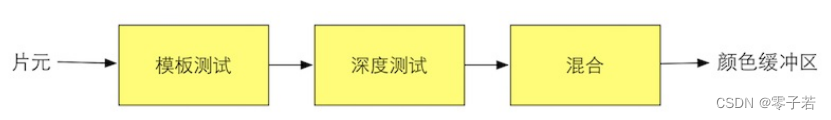

(4) Output merging (per-fragment operations)

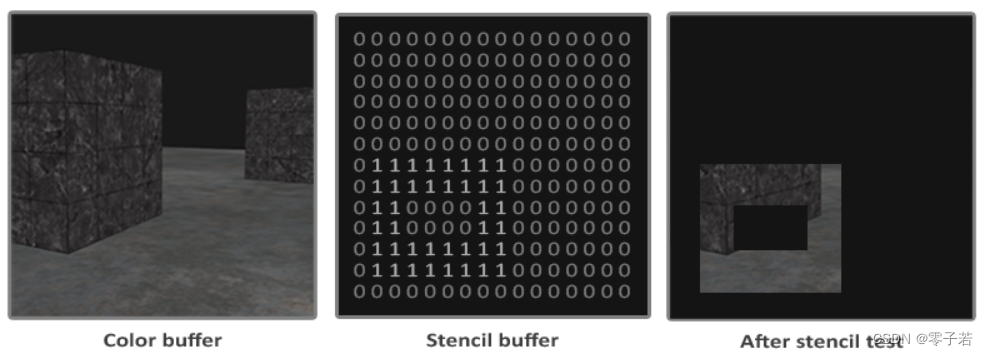

Stencil test: this requires a stencil buffer. It works much like the depth test but behaves more like a mask: a reference value is set, fragments that pass the comparison against the mask are displayed, and the rest are not.
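A minimal sketch of the mask idea, assuming a one-row screen and only three comparison modes. The names `stencil_test` and `draw_with_mask` and the buffer contents are hypothetical.

```python
def stencil_test(stored, ref, compare="equal"):
    """Compare the value in the stencil buffer with a reference value."""
    if compare == "equal":
        return stored == ref
    if compare == "not_equal":
        return stored != ref
    if compare == "always":
        return True
    raise ValueError(f"unknown compare mode: {compare}")

def draw_with_mask(color_buffer, stencil_buffer, color, ref=1):
    """Write color only where the stencil buffer matches the reference value."""
    for y, row in enumerate(stencil_buffer):
        for x, stored in enumerate(row):
            if stencil_test(stored, ref):
                color_buffer[y][x] = color

stencil_buffer = [[0, 1, 1, 0]]               # 1 marks the region we are allowed to draw into
color_buffer = [["bg", "bg", "bg", "bg"]]
draw_with_mask(color_buffer, stencil_buffer, "portal")
```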

Depth test: the camera only sees the nearest surface, so distant objects that are occluded and cannot be seen do not need to be rendered.

The most typical technique is the Z-buffer: only a fragment that is closer than what is already stored may update the frame buffer.
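A minimal Z-buffer sketch, assuming smaller depth means closer to the camera and a "less than" comparison. The function name and the fragment tuples are made up for illustration.

```python
def depth_test_and_write(color_buffer, depth_buffer, fragments):
    """Apply a 'less than' depth test to (x, y, depth, color) fragments."""
    for x, y, depth, color in fragments:
        if depth < depth_buffer[y][x]:      # closer than what is stored: passes the test
            depth_buffer[y][x] = depth      # remember the new nearest depth
            color_buffer[y][x] = color      # and update the visible color

color_buffer = [["background"]]
depth_buffer = [[1.0]]                       # 1.0 = farthest possible depth
fragments = [(0, 0, 0.8, "far"), (0, 0, 0.3, "near"), (0, 0, 0.5, "middle")]
depth_test_and_write(color_buffer, depth_buffer, fragments)
```

The order in which opaque fragments arrive does not matter here; the nearest one always wins.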

Blending

The book also gives some tips on rendering transparent objects:

For opaque objects, what you see is of course the nearest one. What about transparent ones? The color output by the fragment shader has to be blended with the color already in the color buffer. For example, put a piece of glass in front of you: you can see the glass, and you can also see what is behind it, so what you actually see is a mixture of the two colors.
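One common way to mix the two colors is standard alpha blending, where the source alpha weights the new color and (1 - alpha) weights what is already in the color buffer. This is a sketch of that one blend mode, not the only possibility; the function name `blend` and the colors are assumptions.

```python
def blend(src_rgb, src_alpha, dst_rgb):
    """Alpha blend: result = alpha * source + (1 - alpha) * destination."""
    return tuple(src_alpha * s + (1.0 - src_alpha) * d
                 for s, d in zip(src_rgb, dst_rgb))

glass = (1.0, 0.0, 0.0)         # faintly red glass from the fragment shader
behind = (0.0, 0.0, 1.0)        # blue object already in the color buffer
seen = blend(glass, 0.25, behind)
```

With an alpha of 0.25 the result is mostly the color behind the glass, tinted by the glass itself.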

In Unity, the depth test can run before the fragment shader, which is called Early-Z: first decide which surface is nearest, then compute only those pixels, which is more efficient. The catch is that this does not work for transparent objects, because the distant objects that should show through have already been discarded.

4. Wrapping up (double buffering)

Primitives that are still being rendered must not be shown on screen. It is like changing the set on a stage: once the set is ready, the red curtain is pulled back and the stage is revealed to the audience.

Double buffering uses a front buffer and a back buffer. The front buffer holds the image currently visible on screen, while the back buffer is the one being rendered into. The GPU keeps swapping the two so that the viewer always sees a continuous picture.
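The swap can be sketched as follows. The class `DoubleBuffer` and its methods are hypothetical names for this example, and the buffers are plain nested lists standing in for real frame buffers.

```python
class DoubleBuffer:
    """Toy front/back buffer pair: draw into the back, display the front."""

    def __init__(self, width, height, clear=0):
        self.front = [[clear] * width for _ in range(height)]
        self.back = [[clear] * width for _ in range(height)]

    def draw(self, x, y, value):
        self.back[y][x] = value          # rendering only ever touches the back buffer

    def swap(self):
        # Exchange the roles of the two buffers once the frame is complete.
        self.front, self.back = self.back, self.front

screen = DoubleBuffer(2, 1)
screen.draw(0, 0, 255)
visible_before_swap = [row[:] for row in screen.front]
screen.swap()
visible_after_swap = [row[:] for row in screen.front]
```

Until `swap` is called, nothing drawn so far is visible, which is exactly the curtain in the stage analogy.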

边栏推荐

- Explain Huawei's application market in detail, and gradually reduce 32-bit package applications and strategies in 2022

- Simple use of Xray

- A bug using module project in idea

- Quick sorting (detailed illustration of single way, double way, three way)

- 数据分析方法论与前人经验总结2【笔记干货】

- OpenGL帧缓冲

- Newly found yii2 excel processing plug-in

- leetcode134. gas station

- Problems encountered in the use of go micro

- Required String parameter ‘XXX‘ is not present

猜你喜欢

使用Typora编辑markdown上传CSDN时图片大小调整麻烦问题

![[step on the pit] Nacos registration has been connected to localhost:8848, no available server](/img/ee/ab4d62745929acec2f5ba57155b3fa.png)

[step on the pit] Nacos registration has been connected to localhost:8848, no available server

硬核分享:硬件工程师常用工具包

Calling the creation engine interface of Huawei game multimedia service returns error code 1002, error message: the params is error

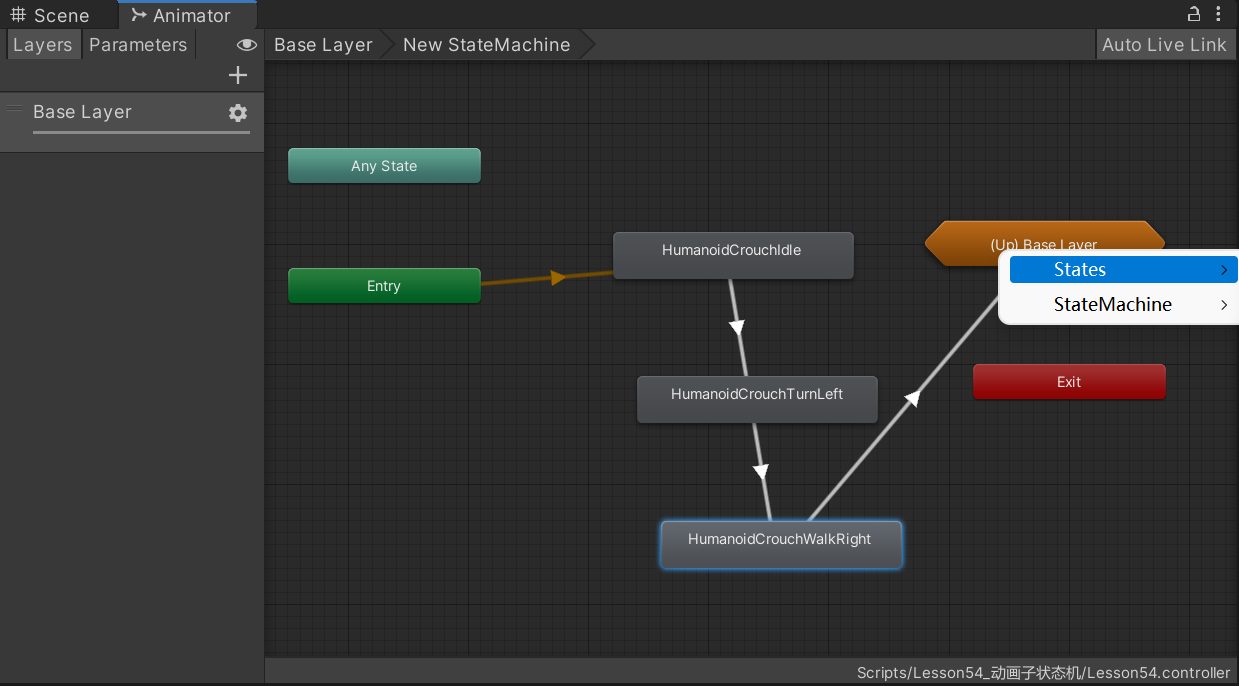

2022-07-06 Unity核心9——3D动画

Calf problem

平台化,强链补链的一个支点

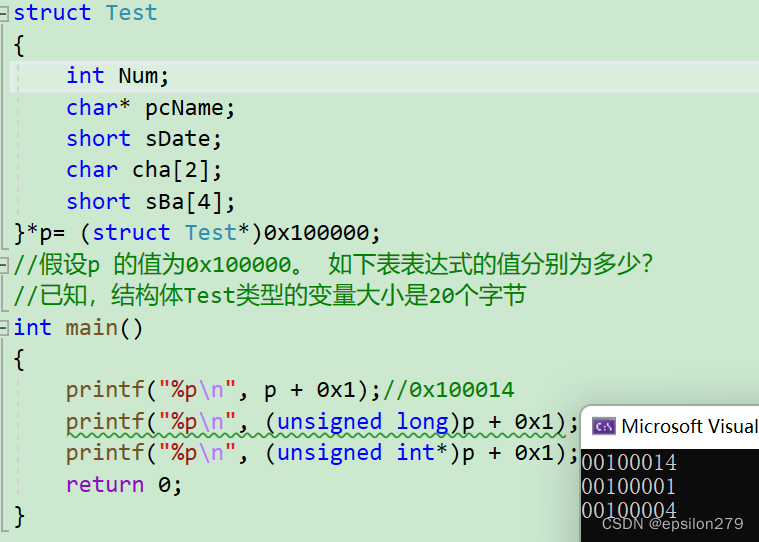

Pointer advanced, string function

![[Yugong series] February 2022 U3D full stack class 007 - production and setting skybox resources](/img/e3/3703bdace2d0ca47c1a585562dc15e.jpg)

[Yugong series] February 2022 U3D full stack class 007 - production and setting skybox resources

oracle一次性说清楚,多种分隔符的一个字段拆分多行,再多行多列多种分隔符拆多行,最终处理超亿亿。。亿级别数据量

随机推荐

数据分析方法论与前人经验总结2【笔记干货】

Platformization, a fulcrum of strong chain complementing chain

mysql分区讲解及操作语句

为不同类型设备构建应用的三大更新 | 2022 I/O 重点回顾

IP地址的类别

使用Typora编辑markdown上传CSDN时图片大小调整麻烦问题

Opencv converts 16 bit image data to 8 bits and 8 to 16

let const

Digital triangle model acwing 275 Pass a note

说一个软件创业项目,有谁愿意投资的吗?

Explain Huawei's application market in detail, and gradually reduce 32-bit package applications and strategies in 2022

Tronapi wave field interface - source code without encryption - can be opened twice - interface document attached - package based on thinkphp5 - detailed guidance of the author - July 6, 2022 - Novice

About using CDN based on Kangle and EP panel

【MySQL】数据库进阶之触发器内容详解

Calling the creation engine interface of Huawei game multimedia service returns error code 1002, error message: the params is error

Required String parameter ‘XXX‘ is not present

Lenovo hybrid cloud Lenovo xcloud: 4 major product lines +it service portal

Newly found yii2 excel processing plug-in

Unity Shader入门精要初级篇(一)-- 基础光照笔记

JS operation