
Mish: a new contender to succeed ReLU as deep learning's activation function

2022-07-02 11:51:00 【A ship that wants to learn】

Research on activation functions has never stopped, and ReLU still dominates deep learning. Mish may change that.

Diganta Misra's paper, "Mish: A Self Regularized Non-Monotonic Neural Activation Function", introduces a new deep learning activation function that improves accuracy over both Swish (+0.494%) and ReLU (+1.671%).

Our small FastAI team used Mish in place of ReLU and broke several of the previous accuracy records on the FastAI global leaderboard. Combining the Ranger optimizer, Mish activation, flat + cosine annealing, and a self-attention layer, we captured 12 new leaderboard records!

Six of our 12 leaderboard records. Every record was set using Mish instead of ReLU. (Highlighted in blue: the 400-epoch accuracy of 94.6 is only slightly higher than our 20-epoch accuracy of 93.8.)

In separate 5-epoch tests on the ImageWoof dataset, the reported result was:

Mish outperforms ReLU (P < 0.0001). (FastAI forum, @Seb)

Mish has already been tested on more than 70 benchmarks, including image classification, segmentation, and generation, and compared against 15 other activation functions.

What is Mish?

The simplest way to understand Mish is to look at the code, but in short: Mish(x) = x * tanh(ln(1 + e^x)).

For comparison, ReLU is max(0, x) and Swish is x * sigmoid(x).
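To make the three formulas concrete, here is a minimal pure-Python sketch that evaluates them on a few scalar inputs. The function names and the numerically stable forms of softplus and sigmoid are choices made for this illustration, not code taken from the paper.

```python
import math


def softplus(x: float) -> float:
    # ln(1 + e^x), rewritten so that large |x| does not overflow.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def sigmoid(x: float) -> float:
    # Logistic function, evaluated without overflowing for negative x.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def relu(x: float) -> float:
    return max(0.0, x)


def swish(x: float) -> float:
    return x * sigmoid(x)


def mish(x: float) -> float:
    return x * math.tanh(softplus(x))


# Print the three activations side by side for a handful of inputs.
for x in (-2.0, -1.0, 0.0, 1.0, 2.0):
    print(f"x={x:+.1f}  relu={relu(x):.4f}  swish={swish(x):.4f}  mish={mish(x):.4f}")
```

For negative inputs ReLU returns exactly zero, while Swish and Mish return small negative values.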

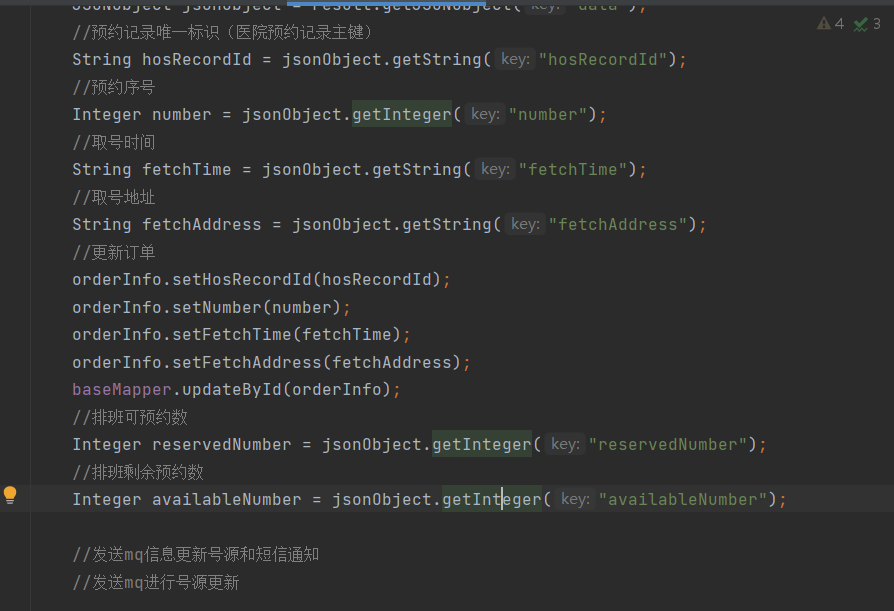

A PyTorch implementation of Mish:
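A minimal sketch of such a module, assuming `torch` is installed. The class name `Mish` and the sample tensor are illustrative, and the body simply applies the formula given above.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Mish(nn.Module):
    """Mish activation: x * tanh(softplus(x)), applied element-wise."""

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # F.softplus computes ln(1 + e^x) in a numerically stable way.
        return x * torch.tanh(F.softplus(x))


# Apply the activation to a small sample tensor.
activation = Mish()
sample = torch.tensor([-2.0, 0.0, 1.0])
output = activation(sample)
print(output)
```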

The Mish function in TensorFlow:

x = x * tf.math.tanh(tf.math.softplus(x))

How does Mish compare with other activation functions?

The image below shows test results for Mish against several other activation functions. It summarizes as many as 73 tests, across different architectures and different tasks:

Why does Mish perform so well?

Being unbounded above (positive values can grow arbitrarily large) avoids the saturation caused by capping. In theory, the slight allowance for negative values also permits better gradient flow than the hard zero boundary in ReLU.
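These two properties can be checked numerically. The sketch below is an illustration only: the grid over [-10, 0], the finite-difference step, and the helper names are assumptions made here. It finds the lowest value Mish takes and compares the slope of Mish and ReLU at a negative input.

```python
import math


def softplus(x: float) -> float:
    # ln(1 + e^x), rewritten so that large |x| does not overflow.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x: float) -> float:
    return x * math.tanh(softplus(x))


def relu(x: float) -> float:
    return max(0.0, x)


def numeric_grad(f, x: float, h: float = 1e-5) -> float:
    # Central finite difference, used here instead of a symbolic derivative.
    return (f(x + h) - f(x - h)) / (2 * h)


# Scan negative inputs to find how far below zero Mish dips.
grid = [i / 1000 for i in range(-10000, 1)]
lowest = min(mish(x) for x in grid)
print("lowest Mish value on [-10, 0]:", lowest)

# For large positive inputs Mish approaches the identity, so it is not capped.
print("mish(100.0):", mish(100.0))

# At a negative input ReLU passes no gradient, while Mish still does.
print("ReLU slope at -0.5:", numeric_grad(relu, -0.5))
print("Mish slope at -0.5:", numeric_grad(mish, -0.5))
```

On this grid Mish bottoms out at roughly -0.31, so it is bounded below while remaining unbounded above.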

Finally, and perhaps most importantly, the current thinking is that a smooth activation function lets information propagate better through the neural network, which yields better accuracy and generalization.

That said, I have tested many activation functions that also satisfy many of these ideas, and most of them fail to perform in practice. The main difference here may be the smoothness of the Mish function at almost every point on its curve.

This ability of Mish's smooth activation curve to push information through is illustrated in the following figure. In a simple test from the paper, more and more layers were added to a test neural network with no unity function. As the layer depth increases, ReLU's accuracy drops rapidly, followed by Swish. By comparison, Mish maintains accuracy much better, possibly because it propagates information more effectively:

Smoother activations allow information to flow more deeply. Note how quickly ReLU's accuracy drops as the number of layers increases.

How do you put Mish into your own network?

PyTorch and FastAI source code for Mish can be found in two places on GitHub:

1. Official Mish GitHub: https://github.com/digantamisra98/Mish

2. Unofficial Mish, using inline for speed: https://github.com/lessw2020/mish
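As a rough illustration of dropping Mish into an existing model, the sketch below swaps every `nn.ReLU` layer for Mish. It assumes PyTorch 1.9 or later, where `torch.nn.Mish` is built in, rather than either repository above. The helper `replace_relu_with_mish` and the toy network are hypothetical names made up for this example.

```python
import torch
import torch.nn as nn


def replace_relu_with_mish(module: nn.Module) -> nn.Module:
    """Recursively swap every nn.ReLU child module for nn.Mish, in place."""
    # Copy the children into a list so the module can be edited while looping.
    for name, child in list(module.named_children()):
        if isinstance(child, nn.ReLU):
            setattr(module, name, nn.Mish())
        else:
            replace_relu_with_mish(child)
    return module


# Toy network used only for illustration.
model = nn.Sequential(
    nn.Linear(8, 16),
    nn.ReLU(),
    nn.Linear(16, 2),
)
model = replace_relu_with_mish(model)

# Run a random batch through the modified network.
logits = model(torch.randn(4, 8))
print(model)
print(logits.shape)
```

This only touches ReLU layers registered as modules. Functional calls such as `F.relu` inside a `forward` method have to be changed by hand.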

Summary

ReLU has some known weaknesses, but it usually performs well and is computationally very light. Mish has a stronger theoretical pedigree, and in testing it outperforms ReLU on average in both training stability and accuracy.

The added cost is small (on a V100 GPU, Mish adds about 1 second per epoch relative to ReLU). Given the gains in training stability and final accuracy, the extra time seems worthwhile.

Finally, after testing a large number of new activation functions this year, Mish leads the pack, and many suspect it is likely to become the new ReLU for AI.

Original English article: https://medium.com/@lessw/meet-mish-new-state-of-the-art-ai-activation-function-the-successor-to-relu-846a6d93471f
