当前位置:网站首页>Convolution emotion analysis task4

Convolution emotion analysis task4

2022-07-03 13:14:00 【Levi Bebe】

# 1. Data preprocessing

import torch

from torchtext.legacy import data

from torchtext.legacy import datasets

import random

import numpy as np

SEED = 1234

random.seed(SEED)

np.random.seed(SEED)

torch.manual_seed(SEED)

torch.backends.cudnn.deterministic = True

TEXT = data.Field(tokenize = 'spacy',

tokenizer_language = 'en_core_web_sm',

batch_first = True)

LABEL = data.LabelField(dtype = torch.float)

train_data, test_data = datasets.IMDB.splits(TEXT, LABEL)

train_data, valid_data = train_data.split(random_state = random.seed(SEED))

# structure vocab, Load pre training words embedded

MAX_VOCAB_SIZE = 25_000

TEXT.build_vocab(train_data,

max_size = MAX_VOCAB_SIZE,

vectors = "glove.6B.100d",

unk_init = torch.Tensor.normal_)

LABEL.build_vocab(train_data)

# Create iterator

BATCH_SIZE = 64

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

train_iterator, valid_iterator, test_iterator = data.BucketIterator.splits(

(train_data, valid_data, test_data),

batch_size = BATCH_SIZE,

device = device)

# 2. Build the model

import torch.nn as nn

import torch.nn.functional as F

class CNN(nn.Module):

def __init__(self, vocab_size, embedding_dim, n_filters, filter_sizes, output_dim,

dropout, pad_idx):

super().__init__()

self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx = pad_idx)

self.conv_0 = nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (filter_sizes[0], embedding_dim))

self.conv_1 = nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (filter_sizes[1], embedding_dim))

self.conv_2 = nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (filter_sizes[2], embedding_dim))

self.fc = nn.Linear(len(filter_sizes) * n_filters, output_dim)

self.dropout = nn.Dropout(dropout)

def forward(self, text):

#text = [batch size, sent len]

embedded = self.embedding(text)

#embedded = [batch size, sent len, emb dim]

embedded = embedded.unsqueeze(1)

#embedded = [batch size, 1, sent len, emb dim]

conved_0 = F.relu(self.conv_0(embedded).squeeze(3))

conved_1 = F.relu(self.conv_1(embedded).squeeze(3))

conved_2 = F.relu(self.conv_2(embedded).squeeze(3))

#conved_n = [batch size, n_filters, sent len - filter_sizes[n] + 1]

pooled_0 = F.max_pool1d(conved_0, conved_0.shape[2]).squeeze(2)

pooled_1 = F.max_pool1d(conved_1, conved_1.shape[2]).squeeze(2)

pooled_2 = F.max_pool1d(conved_2, conved_2.shape[2]).squeeze(2)

#pooled_n = [batch size, n_filters]

cat = self.dropout(torch.cat((pooled_0, pooled_1, pooled_2), dim = 1))

#cat = [batch size, n_filters * len(filter_sizes)]

return self.fc(cat)

class CNN(nn.Module):

def __init__(self, vocab_size, embedding_dim, n_filters, filter_sizes, output_dim,

dropout, pad_idx):

super().__init__()

self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx = pad_idx)

self.convs = nn.ModuleList([

nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (fs, embedding_dim))

for fs in filter_sizes

])

self.fc = nn.Linear(len(filter_sizes) * n_filters, output_dim)

self.dropout = nn.Dropout(dropout)

def forward(self, text):

#text = [batch size, sent len]

embedded = self.embedding(text)

#embedded = [batch size, sent len, emb dim]

embedded = embedded.unsqueeze(1)

#embedded = [batch size, 1, sent len, emb dim]

conved = [F.relu(conv(embedded)).squeeze(3) for conv in self.convs]

#conved_n = [batch size, n_filters, sent len - filter_sizes[n] + 1]

pooled = [F.max_pool1d(conv, conv.shape[2]).squeeze(2) for conv in conved]

#pooled_n = [batch size, n_filters]

cat = self.dropout(torch.cat(pooled, dim = 1))

#cat = [batch size, n_filters * len(filter_sizes)]

return self.fc(cat)

class CNN1d(nn.Module):

def __init__(self, vocab_size, embedding_dim, n_filters, filter_sizes, output_dim,

dropout, pad_idx):

super().__init__()

self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx = pad_idx)

self.convs = nn.ModuleList([

nn.Conv1d(in_channels = embedding_dim,

out_channels = n_filters,

kernel_size = fs)

for fs in filter_sizes

])

self.fc = nn.Linear(len(filter_sizes) * n_filters, output_dim)

self.dropout = nn.Dropout(dropout)

def forward(self, text):

#text = [batch size, sent len]

embedded = self.embedding(text)

#embedded = [batch size, sent len, emb dim]

embedded = embedded.permute(0, 2, 1)

#embedded = [batch size, emb dim, sent len]

conved = [F.relu(conv(embedded)) for conv in self.convs]

#conved_n = [batch size, n_filters, sent len - filter_sizes[n] + 1]

pooled = [F.max_pool1d(conv, conv.shape[2]).squeeze(2) for conv in conved]

#pooled_n = [batch size, n_filters]

cat = self.dropout(torch.cat(pooled, dim = 1))

#cat = [batch size, n_filters * len(filter_sizes)]

return self.fc(cat)

INPUT_DIM = len(TEXT.vocab)

EMBEDDING_DIM = 100

N_FILTERS = 100

FILTER_SIZES = [3,4,5]

OUTPUT_DIM = 1

DROPOUT = 0.5

PAD_IDX = TEXT.vocab.stoi[TEXT.pad_token]

model = CNN(INPUT_DIM, EMBEDDING_DIM, N_FILTERS, FILTER_SIZES, OUTPUT_DIM, DROPOUT, PAD_IDX)

def count_parameters(model):

return sum(p.numel() for p in model.parameters() if p.requires_grad)

print(f'The model has {

count_parameters(model):,} trainable parameters')

pretrained_embeddings = TEXT.vocab.vectors

model.embedding.weight.data.copy_(pretrained_embeddings)

UNK_IDX = TEXT.vocab.stoi[TEXT.unk_token]

model.embedding.weight.data[UNK_IDX] = torch.zeros(EMBEDDING_DIM)

model.embedding.weight.data[PAD_IDX] = torch.zeros(EMBEDDING_DIM)

# 3. Training models

import torch.optim as optim

optimizer = optim.Adam(model.parameters())

criterion = nn.BCEWithLogitsLoss()

model = model.to(device)

criterion = criterion.to(device)

# Realize precision calculation

def binary_accuracy(preds, y):

""" Returns accuracy per batch, i.e. if you get 8/10 right, this returns 0.8, NOT 8 """

#round predictions to the closest integer

rounded_preds = torch.round(torch.sigmoid(preds))

correct = (rounded_preds == y).float() #convert into float for division

acc = correct.sum() / len(correct)

return acc

def train(model, iterator, optimizer, criterion):

epoch_loss = 0

epoch_acc = 0

model.train()

for batch in iterator:

optimizer.zero_grad()

predictions = model(batch.text).squeeze(1)

loss = criterion(predictions, batch.label)

acc = binary_accuracy(predictions, batch.label)

loss.backward()

optimizer.step()

epoch_loss += loss.item()

epoch_acc += acc.item()

return epoch_loss / len(iterator), epoch_acc / len(iterator)

def evaluate(model, iterator, criterion):

epoch_loss = 0

epoch_acc = 0

model.eval()

with torch.no_grad():

for batch in iterator:

predictions = model(batch.text).squeeze(1)

loss = criterion(predictions, batch.label)

acc = binary_accuracy(predictions, batch.label)

epoch_loss += loss.item()

epoch_acc += acc.item()

return epoch_loss / len(iterator), epoch_acc / len(iterator)

import time

def epoch_time(start_time, end_time):

elapsed_time = end_time - start_time

elapsed_mins = int(elapsed_time / 60)

elapsed_secs = int(elapsed_time - (elapsed_mins * 60))

return elapsed_mins, elapsed_secs

N_EPOCHS = 5

best_valid_loss = float('inf')

for epoch in range(N_EPOCHS):

start_time = time.time()

train_loss, train_acc = train(model, train_iterator, optimizer, criterion)

valid_loss, valid_acc = evaluate(model, valid_iterator, criterion)

end_time = time.time()

epoch_mins, epoch_secs = epoch_time(start_time, end_time)

if valid_loss < best_valid_loss:

best_valid_loss = valid_loss

torch.save(model.state_dict(), 'tut4-model.pt')

print(f'Epoch: {

epoch+1:02} | Epoch Time: {

epoch_mins}m {

epoch_secs}s')

print(f'\tTrain Loss: {

train_loss:.3f} | Train Acc: {

train_acc*100:.2f}%')

print(f'\t Val. Loss: {

valid_loss:.3f} | Val. Acc: {

valid_acc*100:.2f}%')

4. Model validation

import spacy

nlp = spacy.load('en_core_web_sm')

def predict_sentiment(model, sentence, min_len = 5):

model.eval()

tokenized = [tok.text for tok in nlp.tokenizer(sentence)]

if len(tokenized) < min_len:

tokenized += ['<pad>'] * (min_len - len(tokenized))

indexed = [TEXT.vocab.stoi[t] for t in tokenized]

tensor = torch.LongTensor(indexed).to(device)

tensor = tensor.unsqueeze(0)

prediction = torch.sigmoid(model(tensor))

return prediction.item()

predict_sentiment(model, "This film is terrible")

predict_sentiment(model, "This film is great")

Some don't understand , Later, I will review and understand !

边栏推荐

- 2022-02-13 plan for next week

- [Database Principle and Application Tutorial (4th Edition | wechat Edition) Chen Zhibo] [sqlserver2012 comprehensive exercise]

- Sword finger offer 14- I. cut rope

- 【习题五】【数据库原理】

- Sword finger offer 14- ii Cut rope II

- 关于CPU缓冲行的理解

- Useful blog links

- C graphical tutorial (Fourth Edition)_ Chapter 18 enumerator and iterator: enumerator samplep340

- 2022-01-27 redis cluster technology research

- 【判断题】【简答题】【数据库原理】

猜你喜欢

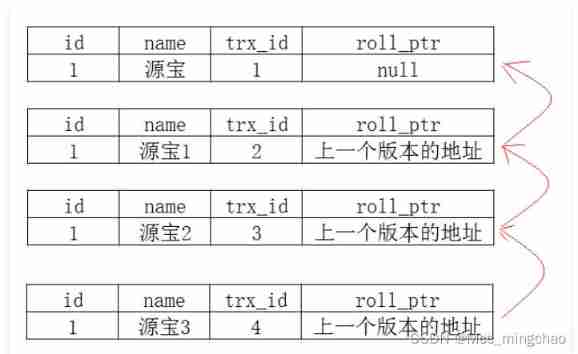

Deeply understand the mvcc mechanism of MySQL

![[combinatorics] permutation and combination (the combination number of multiple sets | the repetition of all elements is greater than the combination number | the derivation of the combination number](/img/9d/6118b699c0d90810638f9b08d4f80a.jpg)

[combinatorics] permutation and combination (the combination number of multiple sets | the repetition of all elements is greater than the combination number | the derivation of the combination number

February 14, 2022, incluxdb survey - mind map

显卡缺货终于到头了:4000多块可得3070Ti,比原价便宜2000块拿下3090Ti

Grid connection - Analysis of low voltage ride through and island coexistence

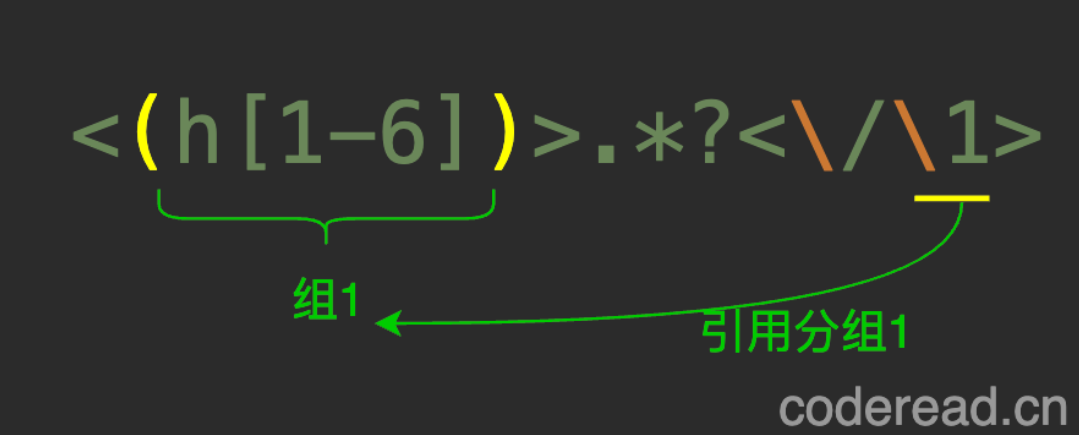

regular expression

人身变声器的原理

【Colab】【使用外部数据的7种方法】

高效能人士的七个习惯

【数据库原理及应用教程(第4版|微课版)陈志泊】【第三章习题】

随机推荐

Flink SQL knows why (XI): weight removal is not only count distinct, but also powerful duplication

Setting up Oracle datagurd environment

(first) the most complete way to become God of Flink SQL in history (full text 180000 words, 138 cases, 42 pictures)

正则表达式

2022-01-27 redis cluster cluster proxy predixy analysis

[combinatorics] permutation and combination (multiple set permutation | multiple set full permutation | multiple set incomplete permutation all elements have a repetition greater than the permutation

人身变声器的原理

剑指 Offer 14- II. 剪绳子 II

Analysis of the influence of voltage loop on PFC system performance

Method overloading and rewriting

luoguP3694邦邦的大合唱站队

Sitescms v3.1.0 release, launch wechat applet

The foreground uses RSA asymmetric security to encrypt user information

Node. Js: use of express + MySQL

Seven second order ladrc-pll structure design of active disturbance rejection controller

The difference between session and cookie

php:&nbsp; The document cannot be displayed in Chinese

[exercise 7] [Database Principle]

Useful blog links

剑指 Offer 15. 二进制中1的个数