当前位置:网站首页>机器人手眼标定Ax=xB(eye to hand和eye in hand)及平面九点法标定

机器人手眼标定Ax=xB(eye to hand和eye in hand)及平面九点法标定

2022-07-26 15:29:00 【华为云】

一、背景

Calibration是机器人开发者永远的痛。虽然说方法说起来几十年前就有,但每一个要用摄像头的人都还是要经过一番痛苦的踩坑,没有轻轻松松拿来就效果好的包。

机器人视觉应用中,手眼标定是一个非常基础且关键的问题。简单来说手眼标定的目的就是获取机器人坐标系和相机坐标系的关系,最后将视觉识别的结果转移到机器人坐标系下。

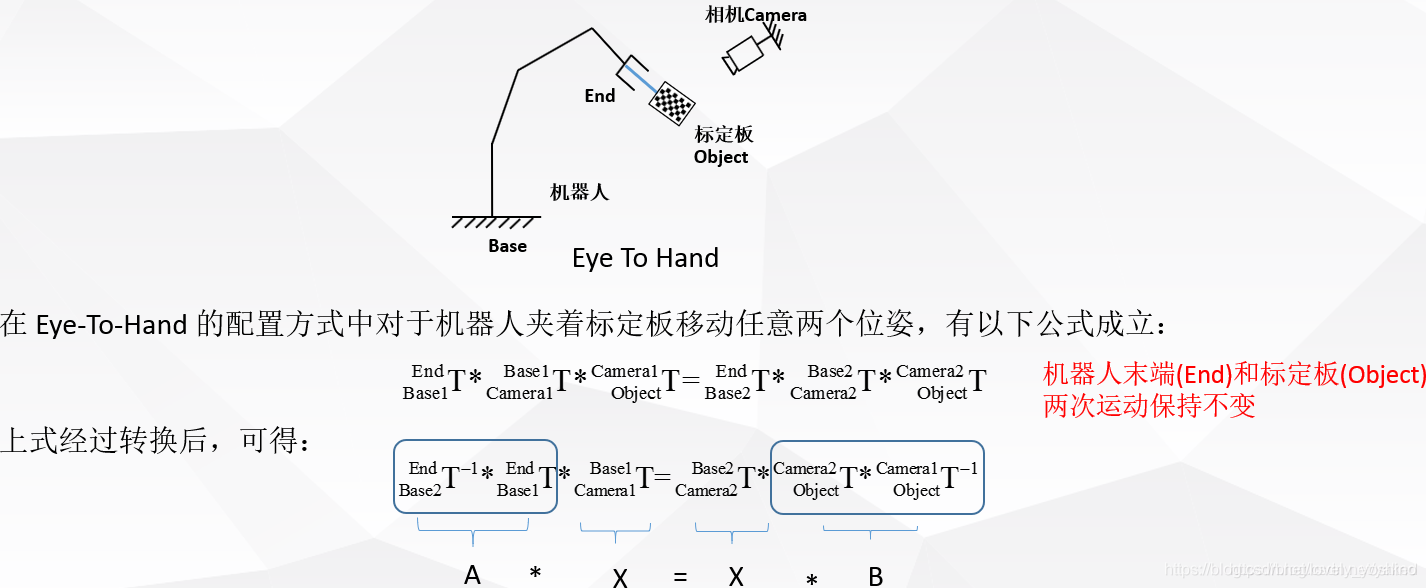

手眼标定行业内分为两种形式,根据相机固定的地方不同,如果相机和机器人末端固定在一起,就称之为“眼在手”(eye in hand),如果相机固定在机器人外面的底座上,则称之为“眼在外”(eye to hand)。

二、手眼关系的数学描述

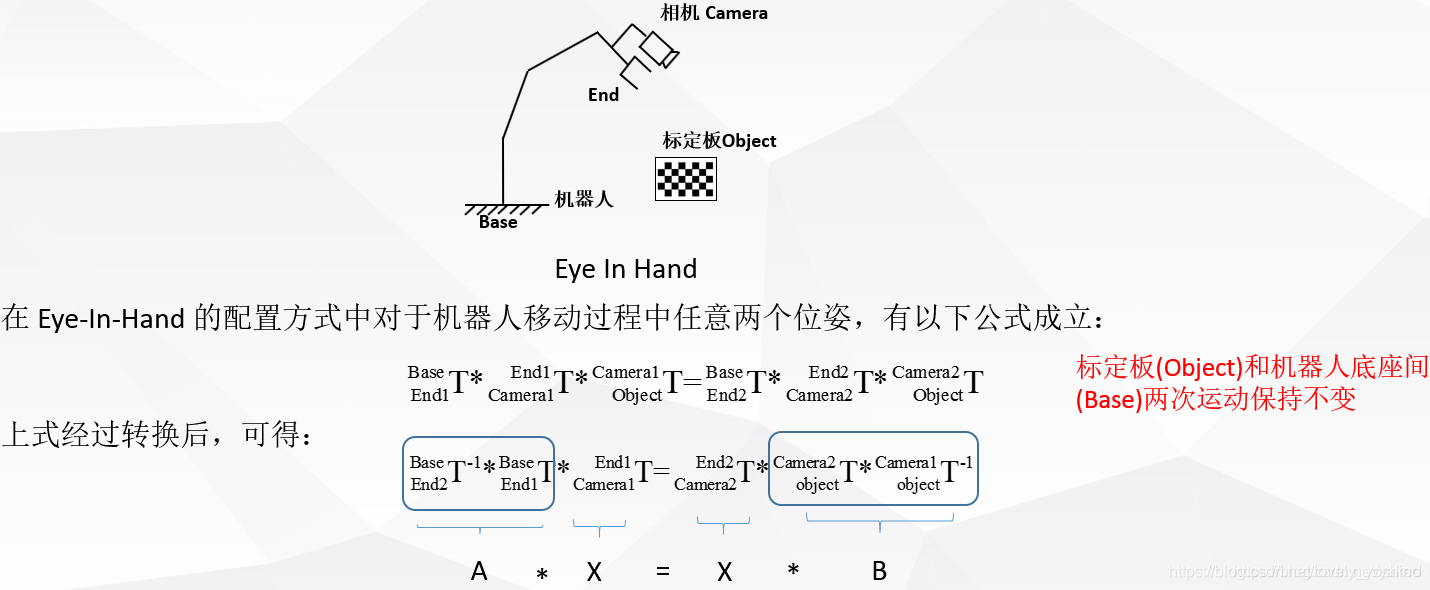

- eye in hand,这种关系下,两次运动,机器人底座和标定板的关系始终不变。求解的量为相机和机器人末端坐标系的位姿关系。

- eye to hand,这种关系下,两次运动,机器人末端和标定板的位姿关系始终不变。求解的量为相机和机器人底座坐标系之间的位姿关系。

三、AX = XB问题的求解

- Shiu Y C, Ahmad S. Calibration of wrist-mounted robotic sensors by

solving homogeneous transform equations of the form AX=XB[J]. IEEE

Transactions on Robotics & Automation, 1989, 5(1):16-29.

相关网上的英文教程 http://math.loyola.edu/~mili/Calibration/index.html其中也有一些AX= XB的matlab代码可以使用。

ROS 下也有相关的一些package可以利用

https://github.com/IFL-CAMP/easy_handeye

http://visp-doc.inria.fr/doxygen/visp-daily/calibrateTsai_8cpp-example.html#_a0

http://campar.in.tum.de/Chair/HandEyeCalibration TUM这里也有很多手眼标定求解的参考链接

四、其他参考资料

https://mp.weixin.qq.com/s/nJx1dlpBXaL2_iT_J4W5Kg 邱强Flyqq 微信文章

https://blog.csdn.net/u011089570/article/details/47945733 图不错

https://blog.csdn.net/qq_16481211/article/details/79764730 部分halocon代码

https://blog.csdn.net/qq_16481211/article/details/79767100 halocon代码

https://blog.csdn.net/happyjume/article/details/80847822 部分原理

https://blog.csdn.net/zhang970187013/article/details/81098175 UR5 与easy hand eye

一般用“两步法”求解基本方程,即先从基本方程上式求解出旋转部分,再代入求解出平移部分。

https://blog.csdn.net/yunlinwang/article/details/51622143

五、Matlab下手眼标定解算

我的Matlab版本R2013a,利用机器人工具箱的一些角度转换函数和显示,它安装和基本使用参考这里:https://blog.csdn.net/yaked/article/details/48933603

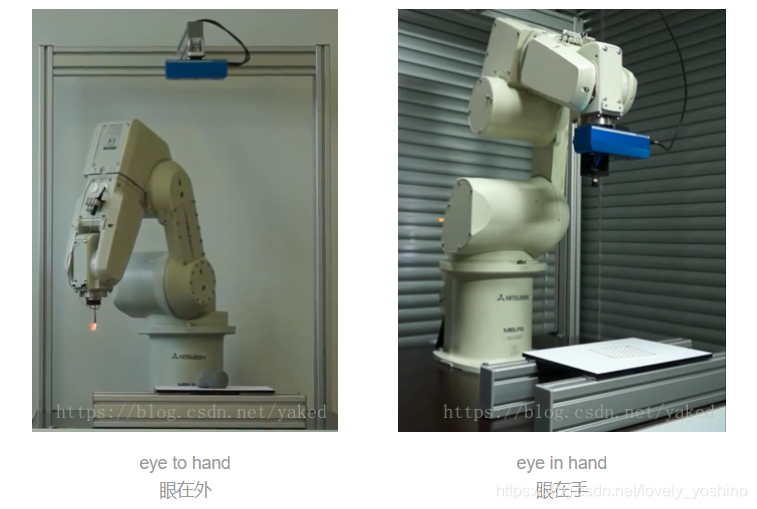

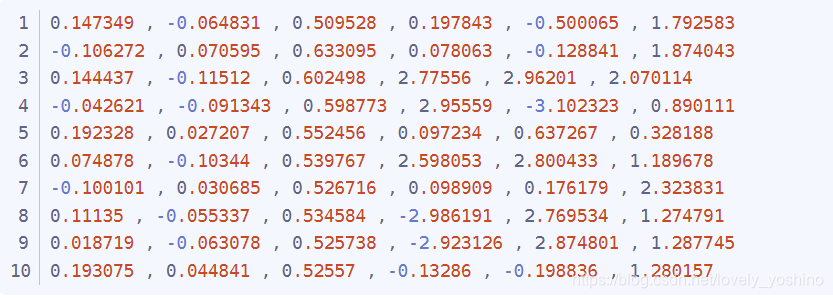

相机与机器人是eye-to-hand模式,机器人为加拿大Kinova 6轴机械臂,机器人pose为末端相对于基座的x,y,z,rx,ry,rz,单位为米和弧度

2017.08.29Kinova_pose_all_10_1.txt

pattern pose为标定板相对于相机的x,y,z,rx,ry,rz,单位为米和弧度

2017.08.29Pattern_pose_all_10_1.txt

此Matlab文件调用数据进行离线解算。http://math.loyola.edu/~mili/Calibration/index.html 的这部分Registering Two Sets of 6DoF Data with 1 Unknown,有code下载,下载好命名为shiu.m和tsai.m供下面程序调用就行

Jaco_handeye_rewrite_10.m

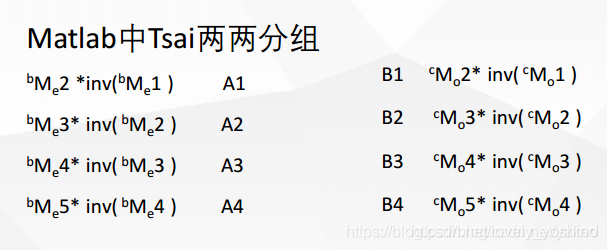

%% Eye to Hand calibration with Ensenso N20 and Kinova robotics arm.% input : Pattern pose to camera and arm cartesian pose in base coordiante.% output: The left eye of Ensenso N20 to the arm base coordiante.% % Robot Pose(Homogeneous) stored in cell A. -------------------10 pose% clear;close all;clc; JacoCartesianPose = importdata('D:\\jaco\\2017.08.29Kinova_pose_all_10_1.txt'); [m,n] = size(JacoCartesianPose); % 10* 6A = cell(1,m); % 1*10 for i = 1: 1: m A{1,i} = transl(JacoCartesianPose(i,1), JacoCartesianPose(i,2), JacoCartesianPose(i,3)) * trotx(JacoCartesianPose(i,4)) * troty(JacoCartesianPose(i,5))* trotz(JacoCartesianPose(i,6));end % Pattern Pose(Homogeneous) stored in cell B.patternInCamPose = importdata('D:\\jaco\\2017.08.29Pattern_pose_all_10_1.txt'); [melem,nelem] = size(patternInCamPose); % 10*6B=cell(1,melem);for x=1:1:melem B{1,x} = transl(patternInCamPose(x,1), patternInCamPose(x,2), patternInCamPose(x,3)) * trotx(patternInCamPose(x,4)) * troty(patternInCamPose(x,5))* trotz(patternInCamPose(x,6));end%%机器人位姿获取记得以五角星的形式获取,参照Tsai的论文n2=m;TA=cell(1,n2);TB=cell(1,n2); %--------------------- 10 ----------------------------------- for j=[1,6]% Only begin. TA{1,j}=A{1,j+1}*inv(A{1,j}); TA{1,j+1}=A{1,j+2}*inv(A{1,j+1}); TA{1,j+2}=A{1,j+3}*inv(A{1,j+2}); TA{1,j+3}=A{1,j+4}*inv(A{1,j+3}); TA{1,j+4}=A{1,j}*inv(A{1,j+4}); TB{1,j}=B{1,j+1}*inv(B{1,j}); TB{1,j+1}=B{1,j+2}*inv(B{1,j+1}); TB{1,j+2}=B{1,j+3}*inv(B{1,j+2}); TB{1,j+3}=B{1,j+4}*inv(B{1,j+3}); TB{1,j+4}=B{1,j}*inv(B{1,j+4}); end %M1=[TA{1,1} TA{1,2} TA{1,3} TA{1,4} TA{1,5} TA{1,6} TA{1,7} TA{1,8} TA{1,9}... TA{1,10} ]; %M2=[TB{1,1} TB{1,2} TB{1,3} TB{1,4} TB{1,5} TB{1,6} TB{1,7} TB{1,8} TB{1,9}... TB{1,10} ]; M1=[TA{1,1} TA{1,2} TA{1,3} TA{1,4} TA{1,5} TA{1,6} TA{1,7} TA{1,8} TA{1,9} ]; M2=[TB{1,1} TB{1,2} TB{1,3} TB{1,4} TB{1,5} TB{1,6} TB{1,7} TB{1,8} TB{1,9} ];%--------------------- 10 ----------------------------------- C_Tsai=tsai(M1,M2);T_Tsai = (transl(C_Tsai))';C_Tsai_rad = tr2rpy(C_Tsai);C_Tsai_rpy_rx_ry_rz =rad2deg(C_Tsai_rad);fprintf('Tsai(rad) = \n%f, %f, %f, %f, %f, %f\n',T_Tsai(1,1), T_Tsai(1,2), T_Tsai(1,3), C_Tsai_rad(1,1), C_Tsai_rad(1,2), C_Tsai_rad(1,3));fprintf('Tsai(deg) = \n%f, %f, %f, %f, %f, %f\n\n',T_Tsai(1,1), T_Tsai(1,2), T_Tsai(1,3), C_Tsai_rpy_rx_ry_rz(1,1), C_Tsai_rpy_rx_ry_rz(1,2), C_Tsai_rpy_rx_ry_rz(1,3));C_Shiu=shiu(M1,M2);T_Shiu= [C_Shiu(1,4) C_Shiu(2,4) C_Shiu(3,4)] ;C_Shiu_rad = tr2rpy(C_Shiu);C_Shiu_rpy_rx_ry_rz = rad2deg(C_Shiu_rad);fprintf('Shiu(rad) = \n%f, %f, %f, %f, %f, %f\n',T_Shiu(1,1), T_Shiu(1,2), T_Shiu(1,3), C_Shiu_rad(1,1), C_Shiu_rad(1,2), C_Shiu_rad(1,3));fprintf('Shiu(deg) = \n%f, %f, %f, %f, %f, %f\n\n',T_Shiu(1,1), T_Shiu(1,2), T_Shiu(1,3), C_Shiu_rpy_rx_ry_rz(1,1), C_Shiu_rpy_rx_ry_rz(1,2), C_Shiu_rpy_rx_ry_rz(1,3));C_Ijrr=ijrr1995(M1,M2);T_ijrr= [C_Ijrr(1,4) C_Ijrr(2,4) C_Ijrr(3,4)] ;C_ijrr_rad = tr2rpy(C_Ijrr);C_ijrr_rpy_rx_ry_rz = rad2deg(C_ijrr_rad);fprintf('Ijrr(rad) = \n%f, %f, %f, %f, %f, %f\n',C_Ijrr(1,1), C_Ijrr(1,2), C_Ijrr(1,3), C_ijrr_rad(1,1), C_ijrr_rad(1,2), C_ijrr_rad(1,3));fprintf('Ijrr(deg) = \n%f, %f, %f, %f, %f, %f\n\n',C_Ijrr(1,1), C_Ijrr(1,2), C_Ijrr(1,3), C_ijrr_rpy_rx_ry_rz(1,1), C_ijrr_rpy_rx_ry_rz(1,2), C_ijrr_rpy_rx_ry_rz(1,3));%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%% robotHcam =[ -0.076, -0.674, 0.760631455868699, 178.7221124879378, -0.0735038591212, -11.5304192925905 ];% robotHcam1 = transl(robotHcam(1,1), robotHcam(1,2), robotHcam(1,3)) * trotx(robotHcam(1,4),'deg') * troty(robotHcam(1,5), 'deg')* trotz(robotHcam(1,6), 'deg')* trotx(90,'deg'); % rotx 90robotHcam =[ 0.013, -0.94, 0.86, -90.0, 0.0, 0.0 ];% robotHcam =[ 0.25, 0.22, 1.1, 180.0, 0.0, 90.0 ]; % bind to the stack robotHcam1 = transl(robotHcam(1,1), robotHcam(1,2), robotHcam(1,3)) * trotx(robotHcam(1,4),'deg') * troty(robotHcam(1,5), 'deg')* trotz(robotHcam(1,6), 'deg');fprintf('robotHcam used in Program(rad) = \n%f, %f, %f, %f, %f, %f\n',robotHcam(1,1), robotHcam(1,2), robotHcam(1,3), deg2rad(robotHcam(1,4)), deg2rad(robotHcam(1,5)), deg2rad(robotHcam(1,6)));fprintf('robotHcam used in Program(deg) = \n%f, %f, %f, %f, %f, %f\n\n',robotHcam(1,1), robotHcam(1,2), robotHcam(1,3), robotHcam(1,4), robotHcam(1,5), robotHcam(1,6)); t1 = eye(4);trplot(t1,'frame','R','arrow','width', '1', 'color', 'r', 'text_opts', {'FontSize', 10, 'FontWeight', 'light'},'view', [-0.3 0.5 0.6],'thick',0.9,'dispar',0.8 );% Display Robot coordinatehold on;trplot(robotHcam1,'frame','C', 'color', 'black'); % Display Camera coordinate used in Programtrplot(C_Tsai,'frame','T', 'color', 'b'); % Display Tsaitrplot(C_Shiu,'frame','S', 'color', 'g'); % Display Shiu手眼标定(eye to hand) 构造A和B。eye in hand方式时,矩阵取反的地方不一样。也就是matlab代码的TA{1,j}=A{1,j+1}*inv(A{1,j});和TB{1,j}=B{1,j+1}*inv(B{1,j});取反的地方不同,千万注意。

clc;clear;close all;% D:\jaco\ConsoleApplication1/get_saveCartesian.cpp——Kinova_pose.txtrobotAeef = [-0.0860801, -0.641813, -0.0987199, 3.13316, 0.000389122, -0.297456];robotBeef = transl(robotAeef(1,1), robotAeef(1,2), robotAeef(1,3)) * trotx(robotAeef(1,4)) * troty(robotAeef(1,5))* trotz(robotAeef(1,6));% D:\jaco\C#\nxCsExamples.sln —— Pattern_pose_all.txtcamAobj = [0.011651 , -0.069043 , 0.857845 , -3.12825 , 3.137609 , 3.048224];camBobj =transl(camAobj(1,1), camAobj(1,2), camAobj(1,3)) * trotx(camAobj(1,4)) * troty(camAobj(1,5))* trotz(camAobj(1,6));robotAcam = robotBeef * inv(camBobj);robotAcam_Trans0 = (transl(robotAcam))'; fprintf('robotAcam_T = \n%f, %f, %f\n',robotAcam_Trans0(1,1), robotAcam_Trans0(1,2), robotAcam_Trans0(1,3));robotAcam_R_rad = tr2rpy((robotAcam));fprintf('robotAcam_R(rad) = \n%f, %f, %f\n',robotAcam_R_rad(1,1), robotAcam_R_rad(1,2), robotAcam_R_rad(1,3));R_degree0 = rad2deg(robotAcam_R_rad);fprintf('robotAcam_R(deg) = \n%f, %f, %f\n\n',R_degree0(1,1), R_degree0(1,2), R_degree0(1,3));% [theta, v] = tr2angvec(robotAcam)% robotAcam = [% robotAcam(1, 1) robotAcam(1, 2) robotAcam(1, 3) -0.097;% robotAcam(2, 1) robotAcam(2, 2) robotAcam(2, 3) -0.695;% robotAcam(3, 1) robotAcam(3,2) robotAcam(3, 3) robotAcam(3, 4);% 0 0 0 1;% ]% Trans1 = (transl(robotAcam))'% R_rad1 = tr2rpy((robotAcam))% R_degree1 = rad2deg(R_rad1)fprintf('===============Test point===============\n');% camAobj2= [ 0.011634 , -0.068815 , 0.858039 , -3.124779 , 3.139759 , 3.046957]; % Workspace home. camAobj2= [ -0.102468 , -0.059197 , 0.858 , -3.127464 , 3.136249 , 3.053341];camBobj2 = transl(camAobj2(1,1), camAobj2(1,2), camAobj2(1,3)) * trotx(camAobj2(1,4)) * troty(camAobj2(1,5))* trotz(camAobj2(1,6));robotAobj2 = robotAcam * camBobj2; robotAobj2_T = (transl(robotAobj2))'; fprintf('robotAobj2_T = \n%f, %f, %f\n',robotAobj2_T(1,1), robotAobj2_T(1,2), robotAobj2_T(1,3));robotAobj2_R_rad = tr2rpy((robotAobj2));fprintf('robotAobj2_R(rad) = \n%f, %f, %f\n',robotAobj2_R_rad(1,1), robotAobj2_R_rad(1,2), robotAobj2_R_rad(1,3));robotAobj2_R_degree = rad2deg(robotAobj2_R_rad);fprintf('robotAobj2_R(deg) = \n%f, %f, %f\n',robotAobj2_R_degree(1,1), robotAobj2_R_degree(1,2), robotAobj2_R_degree(1,3));==============平面九点标定法==============

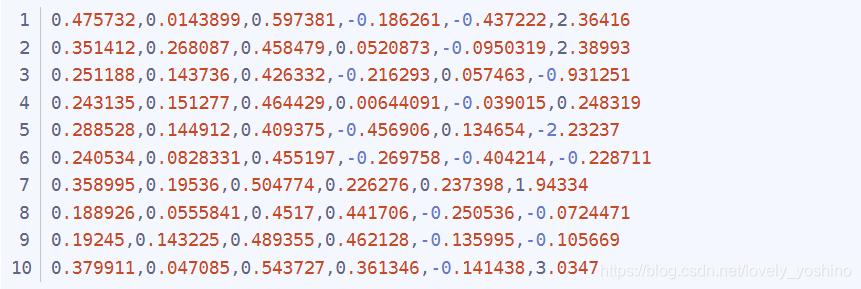

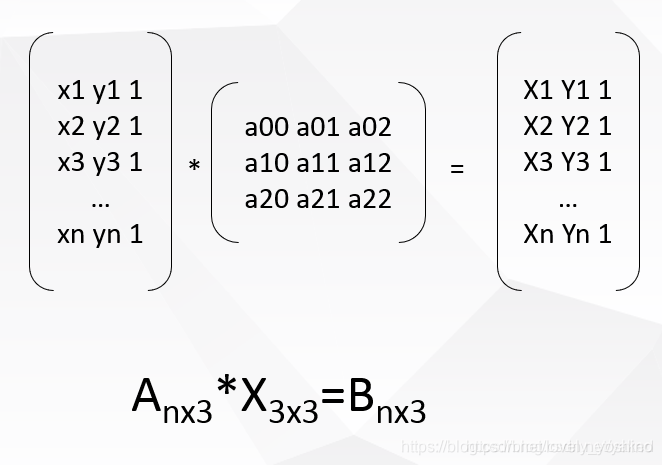

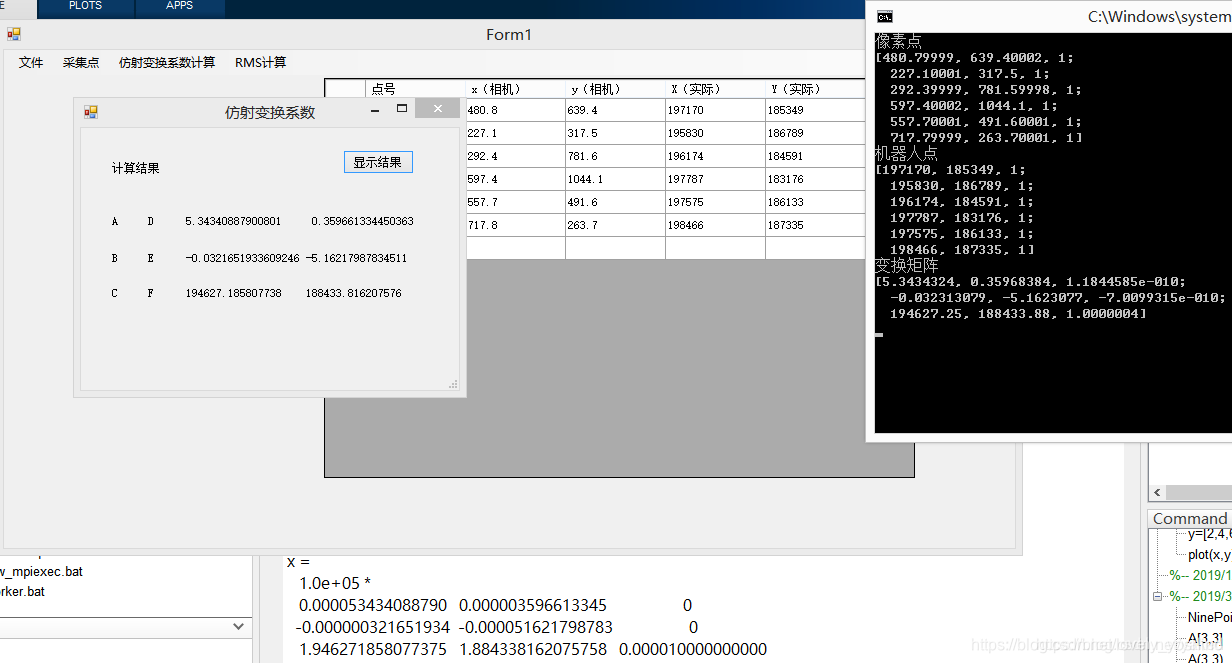

当利用RGB相机或者是机器人只进行平面抓取(也即固定姿态抓取,机器人六自由度位置和姿态简化为只考虑平移,姿态人为给定并且固定,这时机器人可以移动到目标点上方),问题可以简化为平面RGB图像的目标像素点集A(x1,y1)与机器人在平面(X1,Y1)的点对关系。具体做法是相机识别像素点给到A,然后利用示教器查看机器人在基座标系下的坐标,当做B。

相机坐标和机器人坐标写成齐次的形式,投影矩阵X是一个3x3的矩阵我们需要6组对应点来求解最小配置解。利用奇异值分解SVD来求取。

D:\opencv_work\cubeSolver\cv_solver\ConsoleApplication1\CV_SVD.cpp

D:\Matlab_work\handeye\NinePoints_Calibration.m

//Solve equation:AX=b #include <cv.h>#include<opencv2/opencv.hpp>using namespace std;using namespace cv; int main(int argc, char** argv){ printf("\nSolve equation:AX=b\n\n"); //Mat A = (Mat_<float>(6, 3) << //480.8, 639.4, 1, //227.1, 317.5, 1, //292.4, 781.6, 1, //597.4, 1044.1, 1, //557.7, 491.6, 1, //717.8, 263.7, 1 // );// 4x3 //Mat B = (Mat_<float>(6, 3) << //197170, 185349, 1, //195830, 186789, 1, //196174, 184591, 1, //197787, 183176, 1, //197575, 186133, 1, //198466, 187335, 1 // ); Mat A = (Mat_<float>(4, 3) << 2926.36, 2607.79, 1, 587.093, 2616.89, 1, 537.031, 250.311, 1, 1160.53, 1265.21, 1);// 4x3 Mat B = (Mat_<float>(4, 3) << 320.389, 208.197, 1, 247.77, 209.726, 1, 242.809, 283.182, 1, 263.152, 253.715, 1); Mat X; cout << "A=" << endl << A << endl; cout << "B=" << endl << B << endl; solve(A, B, X, CV_SVD); cout << "X=" << endl << X << endl; Mat a1 = (Mat_<float>(1, 3) << 1864, 1273, 1); Mat b1 = a1*X; cout << "b1=" << endl << b1 << endl; cout << "真实值为:" << "283.265, 253.049, 1" << endl; getchar(); return 0;}https://docs.opencv.org/3.1.0/d2/de8/group__core__array.html#ga12b43690dbd31fed96f213eefead2373

结果对比:左halcon C#(第三列为0,0,1,没做显示),右opencv c++,底下为Matlab结果,三者一致,算法检测通过。

https://blog.csdn.net/Stones1025/article/details/100085338

这种方法利用点对,求仿摄变换矩阵

#include "pch.h"#include <iostream> #include <cv.h>#include<opencv2/opencv.hpp> using namespace cv; int main(int argc, char** argv){ printf("\nSolve equation:AX=b\n\n"); Point2f srcTri[3]; srcTri[0] = Point2f(480.8f, 639.4f); srcTri[1] = Point2f(227.1f, 317.5f); srcTri[2] = Point2f(292.4f, 781.6f); Point2f dstTri[3]; dstTri[0] = Point2f(197170.0f, 185349.0f); dstTri[1] = Point2f(195830.0f, 186789.0f); dstTri[2] = Point2f(196174.0f, 184591.0f); Mat warp_mat = getAffineTransform(srcTri, dstTri); // 计算图像坐标 std::cout << "img_corners:\n" << srcTri << std::endl; std::cout << "robot_corners:\n" << dstTri << std::endl; std::cout << warp_mat << std::endl; // //[5.284835627018051, -0.00236967559639317, 194630.5662011061; //0.4056177440315953, -4.793119669651512, 188218.6997054448] return 0;}边栏推荐

- 筑牢生态安全防线,广州开展突发环境事件应急演练

- TI C6000 TMS320C6678 DSP+ Zynq-7045的PS + PL异构多核案例开发手册(3)

- PS + PL heterogeneous multicore case development manual for Ti C6000 tms320c6678 DSP + zynq-7045 (3)

- R language wilcox The test function compares whether there is a significant difference in the central position of the population of two nonparametric samples (if the two sample data are paired data, s

- FTP协议

- 【静态代码质量分析工具】上海道宁为您带来SonarSource/SonarQube下载、试用、教程

- Qt最基本的布局,创建window界面

- 什么是传输层协议TCP/UDP???

- 邻接矩阵的COO格式

- Deep packet inspection using cuckoo filter paper summary

猜你喜欢

信用卡数字识别(opencv,代码分析)

Unity URP entry practice

cs224w(图机器学习)2021冬季课程学习笔记5

【5分钟Paper】Pointer Network指针网络

PS + PL heterogeneous multicore case development manual for Ti C6000 tms320c6678 DSP + zynq-7045 (3)

VS添加作者信息和时间信息的设置

认识JS基础与浏览器引擎

ES6高级-查询商品案例

Continuous integration (I) brief introduction to basic concepts

Digital commerce cloud: lead the digital upgrading of chemical industry and see how Mobei can quickly open up the whole scene of mutual integration and interoperability

随机推荐

白话详解决策树模型之使用信息熵构建决策树

请问参数化视图可以根据传入参数的特点得到不同行数的SQL吗?比如这里我想根据传输参数@field中列

pytorch安装 CUDA对应

Chapter 7 supporting CORS in rest services

[leave some code] Apply transformer to target detection, and understand the model operation process of the model through debug

持续集成(二)Jenkins基本使用介绍

R language Visual scatter diagram, geom using ggrep package_ text_ The rep function avoids overlapping labels between data points (set the min.segment.length parameter to 0 to add line segments to the

什么是虚拟摄像头

撤回就看不到了?三步让你微信防撤回。

教程篇(7.0) 05. 通过FortiClient EMS发放FortiClient * FortiClient EMS * Fortinet 网络安全专家 NSE 5

[leetcode daily question] - 121. The best time to buy and sell stocks

FOC learning notes - coordinate transformation and simulation verification

VP video structured framework

04 callable and common auxiliary classes

USB转串口参数配置功能

FTP协议

Creation and traversal of binary tree

OSPF综合实验

R language uses LM function to build multiple linear regression model, writes regression equation according to model coefficient, and uses fitted function to calculate y value (response value) vector

线程和进程