当前位置:网站首页>GFS distributed file system

GFS distributed file system

2022-07-05 17:50:00 【Jinling town】

GFS System introduction

Any system has its own applicable scenarios , So when we talk about a system , The first thing to be clear is , Under what circumstances did this system come into being , For what purpose , What assumptions or limitations have been made .

about GFS, hypothesis :

The system is built on ordinary 、 On cheap machines , So the fault is normal, not unexpected

The system hopes to store a large number of large files ( Single file size It's big )

The system supports two types of read operations : A large number of sequential reads and small-scale random reads (large streaming reads and small random reads.)

The write operation of the system is mainly sequential additional write , Instead of overwriting writes

The system has a lot of optimization for a large number of concurrent additional writes on clients , To ensure the efficiency and consistency of writing , Mainly due to atomic operation record append

The system pays more attention to the continuous and stable bandwidth than the delay of a single read and write

GFS framework

Can see GFS The system consists of three parts :GFS master、GFS Client、GFS chunkserver. among ,GFS master There is only one at any time , and chunkserver and gfs client There may be more than one .

A document is divided into multiple fixed size chunk( Default 64M), Every chunk There is a globally unique file handle -- One 64 Bit chunk ID, Every one chunk Will be copied to multiple chunkserver( The default value is 3), To ensure availability and reliability .chunkserver take chunk As ordinary Linux Files are stored on local disk .

GFS master It is the metadata server of the system , The metadata maintained includes : Command space (GFS Manage files by hierarchical directory )、 File to chunk Mapping ,chunk The location of . among , The first two will persist , and chunk The location information of comes from Chunkserver Report of .

GFS master It is also responsible for the centralized scheduling of distributed systems :chunk lease management , Garbage collection ,chunk Migration and other important system control .master And chunkserver Keep a regular heartbeat , To determine chunkserver The state of .

GFS client It's for applications API, these API Interface and POSIX API similar .GFS Client Will cache from GFS master Read the chunk Information ( Metadata ), Try to minimize and GFS master Interaction .

The flow of a file reading operation is like this :

Application calls GFS client Provided interface , Indicates the file name to read 、 The offset 、 length .

GFS Client Translate the offset into chunk Serial number , Send to master

master take chunk id And chunk The copy location of tells GFS client

GFS client Send a copy to the nearest Chunkserver Send a read request , The request contains chunk id And scope

ChunkServer Read the corresponding file , Then send the contents of the document to GFS client.

GFS Replica Control Protocol

stay 《 Study the central replication set of distributed systems with problems 》 In the article , Introduced the common copy control protocol in distributed system .GFS For availability and reliability , And they use ordinary and cheap machines , Therefore, redundant copy mechanism is also adopted , About the same data (chunk) Copy to physical machine (chunkserver).

Centralized replica control protocol

GFS It adopts centralized copy control protocol , That is, there is a central node to coordinate and manage the update operation of the replica set , Transform distributed concurrent operations into single point concurrent operations , So as to ensure the consistency of all nodes in the replica set . stay GFS in , The central node is called Primary, Non central nodes become Secondary. The central node is GFS Master adopt lease Elected .

Granularity of data redundancy

GFS in , Data redundancy is based on Chunk In basic units , Not files or machines . This is in Liu Jie's 《 Introduction to distributed principle 》 There is a detailed description of , Redundancy granularity is generally divided into : In machine units , In data segment .

In machine units , That is, several machines are copies of each other , The data between the replica machines is exactly the same . A little bit is very simple , Less metadata . The disadvantage is that the efficiency of replying to data is not high 、 Poor scalability , Can't make full use of resources .

Above picture ,o p q Data segment , Compared with machine granular copies , Take data segment as independent copy mechanism , Although the metadata maintained is more , But the system has better scalability , Faster fault recovery , Resource utilization is more uniform . Like in the picture above , When the machine 1 After permanent failure , Need data o p q Add a copy each , Respectively, it can be from the machine 2 3 4 Read the data .

stay GFS in , The data segment is Chunk, above-mentioned , In this way, there will be more metadata , And GFS master Itself is a single point , Is there a problem with this .GFS say , No big problem , because GFS in , One Chunk Information about ,64byte That's enough , And Chunk The particle size itself is very large (64M), So the amount of data will not be too large , and , stay GFS master in ,chunk The location information of is not persistent .

stay MongoDB in , The redundancy of replicas is based on machine granularity .

Data writing process

stay GFS in , Data flow and control flow are separated , As shown in the figure

step1 Client towards master request Chunk Copy information for , And which copy (Replica) yes Primary

step2 maste reply client,client Cache this information locally

step2 client Put the data (Data) Chain push to all copies

step4 Client notice Primary Submit

step5 primary After you successfully submit , Inform all Secondary Submit

step6 Secondary towards Primary Reply to submit results

step7 primary reply client Submit results

First , Why separate data flow from control messages , And use the chain push method , The goal is Maximize the network bandwidth of each machine , Avoid network bottlenecks and high latency connections , Minimize push delay .

Another push mode is master-slave mode :

Client First, push the data to Primary, Again by Primary Push to all secodnary. Obviously ,Primary There will be a lot of pressure , stay GFS in , Since it is to maximize the balanced use of network bandwidth , Then you don't want a bottleneck . and , Whether it's Client still replica We all know which node is closer to us , So we can choose the best path .

and ,GFS Use TCP Streaming data , To minimize latency . once chunkserver Receive the data , Immediately start pushing , That is, a replica Don't send the complete data to the next one replica.

Synchronous data writing

In the above process, the first step is 3 The three step , Just write the data to the temporary cache , To really take effect, you need to control the message ( The first 4 5 Step ). stay GFS in , The writing of control messages is synchronous , namely Primary Need to wait until all Secondary The reply of is returned to the client . This is it. write all, Ensure the consistency of data between replicas , So you can read data from any copy when you can read . About synchronous writing 、 Asynchronous write , May refer to 《Distributed systems for fun and profit》.

Copy consistency guarantee

The biggest problem of replica redundancy is replica consistency : The data read from different copies is inconsistent .

Here are two terms :consistent, defined

consistent: For file areas A, If all clients read the same data from any replica , that A It's the same .

defined: If A It's consistent , And the client can see the variation (mutation) Complete data written , that A Namely defined, That is, the result is predictable .

obviously ,defined Is based on consistent Of , And have higher requirements .

surface 1 in , For write operations (write, Write at the user specified file offset ), If it's written in sequence , Then it must be defined; If it is written concurrently , Then the copies must be consistent , But the result is undefined Of , There may be mutual coverage . While using GFS Provided record append This atomic operation ( About append, Can operate linux Of O_APPEND Options , That is, the declaration is written at the end of the file ), The content must also be defined. But on the watch 1 in , Is written “interspersed with inconsistent”, This is because if someone chunkserver Failed to write data , Will be written into the process step3 Start trying again , This leads to chunk Some of the data in are inconsistent in different copies .

record append Atomic writing is guaranteed , And it's at least once, But there is no guarantee that it is written only once , It is possible to write a part (padding) It's just unusual , Then you need to try again ; It may also be due to other copy write failures , Even if I write it successfully , Also write another one .

GFS The consistency guarantee provided is called “relaxed consistency”,relaxed Refer to , In some cases, the system does not guarantee consistency , Such as reading data that has not been completely written ( In the database Dirty Read); As mentioned above padding( have access to checksum Mechanism solution ); For example, the repetition mentioned above append data ( The application that reads data guarantees idempotency by itself ). Under these abnormal conditions ,GFS There is no guarantee of consistency , Applications are needed to handle .

Personally feel , Writing multiple copies is also a distributed transaction , Or write it all , Or don't write , If you use something like 2PC Methods , Then it won't show up padding Or repeat , however 2PC It's expensive , Very affecting performance , therefore GFS Try again to deal with exceptions , Throw the problem to the application .

High performance 、 Highly available GFS master

stay GFS in ,master It's a single point , Anytime , only one master be in active state . Single point simplifies the design , Centralized scheduling is much more convenient , Don't worry about the bad “ Split brain ” problem . But the throughput of a single point to system 、 Usability presents challenges . So how to avoid a single point becoming a bottleneck ? Two possible ways : Reduce interaction , fast failover.

master Metadata needs to be maintained in memory , At the same time GFS client,chunkserver Interaction . As for memory , It's not a big problem , because GFS The system usually deals with large files (GB In units of )、 Large block ( Default 64M). Every 64M Of chunk, The corresponding metadata information does not exceed 64byte. And for files , Used file command space , Using prefix compression , The metadata information of a single file is also less than 64byte.

GFS client Try to have less contact with GFS master Interaction : Cache and batch read ( Pre read ). First , allow Chunk Of size The larger , This reduces client thinking master Probability of requesting data . in addition ,client Will chunk The information is cached locally for a period of time , Until the cache expires or the application reopens the file , and ,GFS by chunk An incremental version number is assigned (version),client visit chunk Will bring their own cache when version, It solves the problem of cache inconsistency .

GFS Client In the request chunk When , Generally, I will ask for more follow-up chunk Information about :

In fact, the client typically asks for multiple chunks in the same request and the master can also include the informationfor chunks immediately following those requested. This extra information sidesteps several future client-master interactionsat practically no extra cost.

master The high availability of is through the redundancy of operation logs + Fast failover To achieve .

master Data that needs to be persisted ( File command space 、 File to chunk Mapping ) Through the operation log and checkpoint Storage to multiple machines , Only when the metadata operation log has been successful flush To local disk and all master The copy will be considered successful . This is a write all The operation of , Theoretically, it will have a certain impact on the performance of write operations , therefore GFS Will merge some write operations , Together flush, Minimize the impact on system throughput .

about chunk Location information for ,master It's not persistent , It starts from chunkserver Inquire about , And with chunkserver Get from the regular heartbeat message of . although chunk Which one is created chunkserver On is master designated , But only chunkserver Yes chunk Responsible for location information ,chunkserver The information on is real-time and accurate , For example when chunkserver When it goes down . If in master Also maintain chunk Location information for , In order to maintain a consistent view, you have to add a lot of messages and mechanisms .

a chunkserver has the final word over what chunks it does or does not have on its own disks.

There is no point in trying to maintain a consistent view of this information on the master because errors on a chunkserver may cause chunks to vanish spontaneously (e.g., a disk may go bad and be disabled) or an operator may rename a chunkserver.

If master fault , It can be restarted almost instantaneously , If master Machine fault , Then the new one will be started on another redundant machine master process , Of course , This new machine is holding all the operation logs with checkpoint The information of .

master After reboot ( Whether the original physical machine is restarted , Or a new physical machine ), Need to restore the memory state , Part comes from checkpoint And operation log , Another part ( namely chunk Copy location information of ) It comes from chunkserver Report of .

System scalability 、 Usability 、 reliability

As a distributed storage system , We need good scalability to face the growth of storage business ; It is necessary to ensure high availability when faults become normal ; The most important , It is necessary to ensure the reliability of data , In this way, the application can safely store the data in the system .

Scalability

GFS Good scalability , Add... Directly to the system Chunkserver that will do , And as mentioned earlier , Because it is with chunk Replica redundancy for granularity , It is allowed to add one at a time ChunkServer. The bottleneck of system theory lies in master, therefore master It's a single point , A lot of metadata needs to be maintained in memory , Need and GFS client、GFS chunkserver Interaction , But based on the above analysis ,master It is also difficult to become a de facto bottleneck . System to chunk Replica redundancy for granularity , In this way, when adding 、 When deleting the machine , Not one chunkserver、 A certain file has great influence .

Usability

metadata server (GFS master) The availability guarantee of has been mentioned above , Here's the user data ( file ) The usability of .

The data to chunk Redundancy for units in multiple chunkserver On , and , The default is cross rack (rack) Redundancy of , In this way, faults affecting the whole rack occur in time ( If the switch fails 、 Power failure ) It will not affect availability . and , The read operation of data can also be better distributed across racks , Make full use of network bandwidth , Make the application more likely to find the nearest copy location .

When Master I found some chunk When the number of redundant copies of cannot meet the requirements ( For example, a certain chunkserver Break down ), For this chunk Add a new copy ; When there is a new chunkserver When added to the system , Copy migration will also be carried out -- take chunk For higher load chunkserver Migrate to low load chunkserver On , Achieve the effect of dynamic load balancing .

When you need to choose one chunkserver To persist a chunk when , The following factors will be considered :

- Choose one with reduced disk utilization chunkserver;

- I don't want one chunkserver Create a lot in a short time chunk;

- chunk Cross rack

reliability

Reliability means that data is not lost 、 Do not damage (data corruption). Replica redundancy mechanism ensures that data will not be lost ; and GFS It also provides checksum Mechanism , Ensure that the data will not be damaged due to disk .

About checksum, One chunk It's broken down into multiple 64KB The block , Each block has a corresponding 32 Bit checksum.checksum Stored in memory , And use log persistence to save , It is isolated from user data , Of course , The memory and persistence here are in chunkserver On . When chunkserver When receiving a request to read data , Will compare the file data with the corresponding checksum, If a mismatch is found , Will inform client,client Read from others ; meanwhile , You'll also tell master,master Choose a new chunkserver Come on restore This is damaged chunk

other

First of all :chunk Lazy allocation of storage space

second : Use copy on write To create a snapshot (snapshot)

Third : The best way to solve the problem is not to solve , Leave it to the user to solve :

The first point ,GFS For the concurrent reading and writing of files, consistency is not guaranteed , First, standard documents API There is no guarantee , Second, it also greatly simplifies the design of the system by leaving this problem to the users themselves

Second point , because Chunk size more , Then when the file is small, there is only one chunk, If the file is read frequently , Corresponding chunkserver It may become pressure . The solution is for users to increase the redundancy level , Then don't concentrate on reading files at one time , Share the chunkserver The pressure of the .

Fourth : Methods to prevent file namespace deadlock :

An operation must apply for locks in a specific order : First, sort by the hierarchy of the namespace tree , At the same level, in lexicographic order .

they are first ordered by level in the namespace tree and lexicographically within the same level

边栏推荐

- Cartoon: interesting [pirate] question

- Ten top automation and orchestration tools

- 哈趣K1和哈趣H1哪个性价比更高?谁更值得入手?

- 世界上最难的5种编程语言

- 神经网络自我认知模型

- What are the requirements for PMP certification? How much is it?

- Action avant ou après l'enregistrement du message teamcenter

- 統計php程序運行時間及設置PHP最長運行時間

- 2022新版PMP考试有哪些变化?

- 论文阅读_医疗NLP模型_ EMBERT

猜你喜欢

Oracle Recovery Tools ----oracle数据库恢复利器

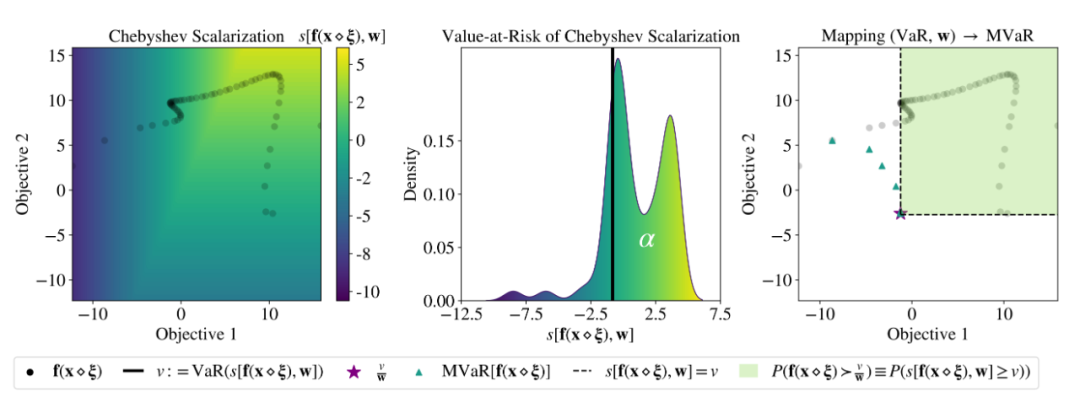

ICML 2022 | Meta提出鲁棒的多目标贝叶斯优化方法,有效应对输入噪声

LeetCode 练习——206. 反转链表

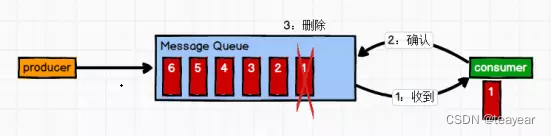

Kafaka technology lesson 1

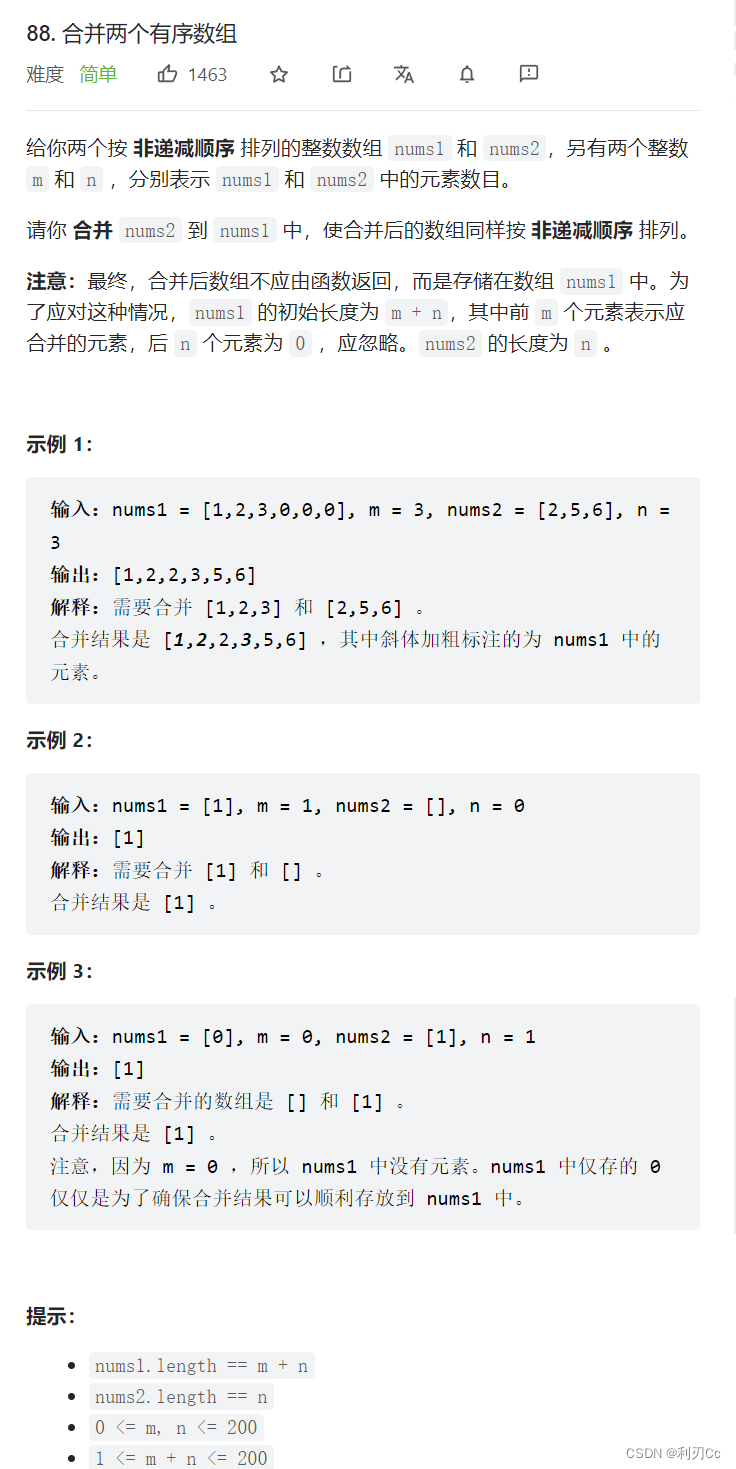

Leetcode daily question: merge two ordered arrays

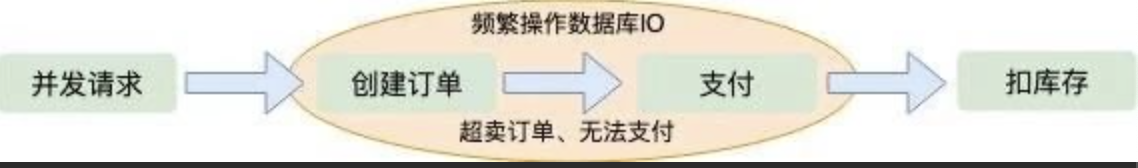

“12306” 的架构到底有多牛逼?

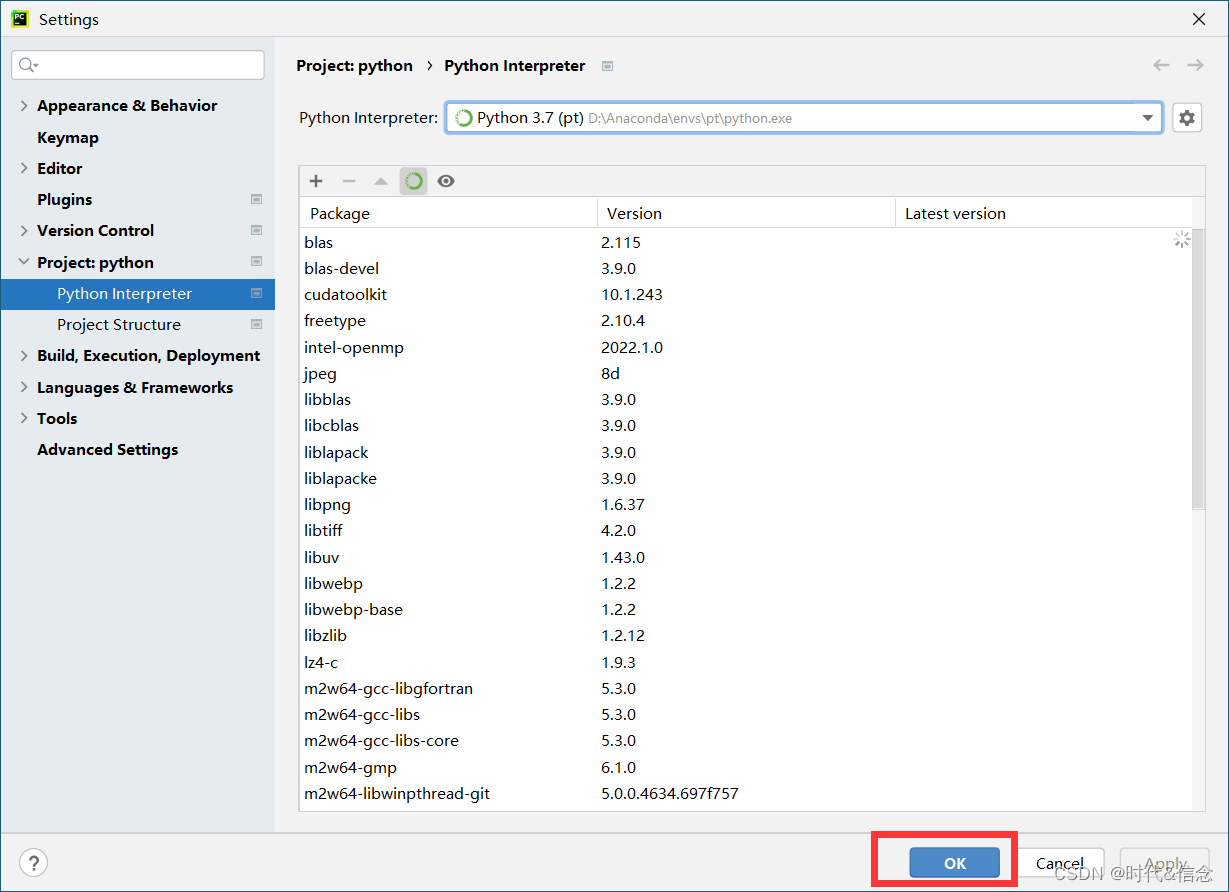

Configure pytorch environment in Anaconda - win10 system (small white packet meeting)

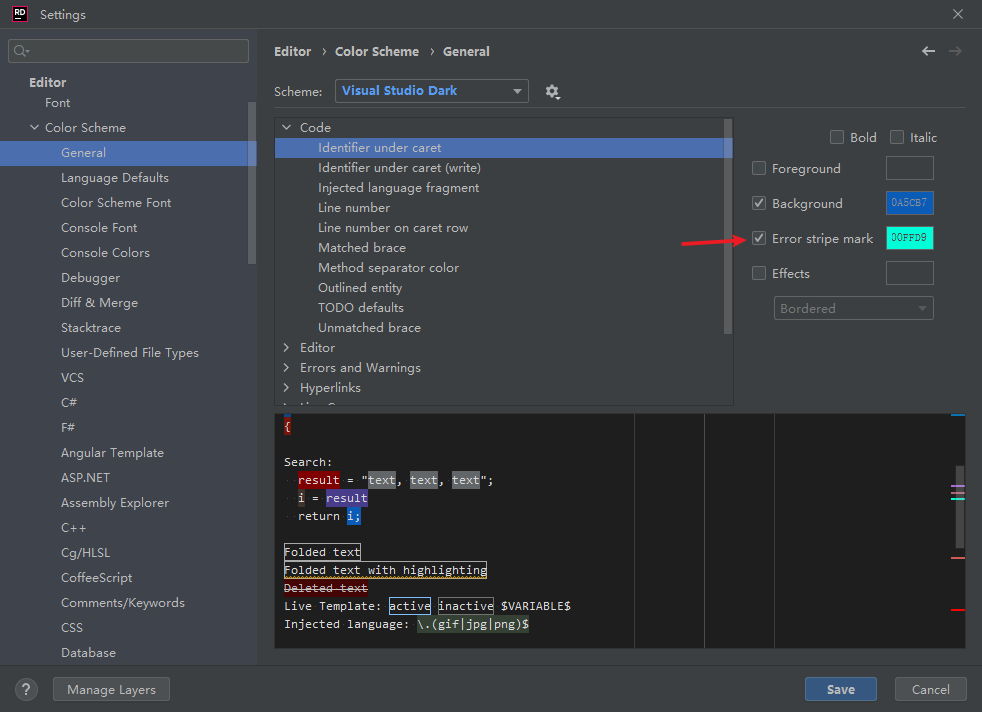

Rider set the highlighted side of the selected word, remove the warning and suggest highlighting

Six bad safety habits in the development of enterprise digitalization, each of which is very dangerous!

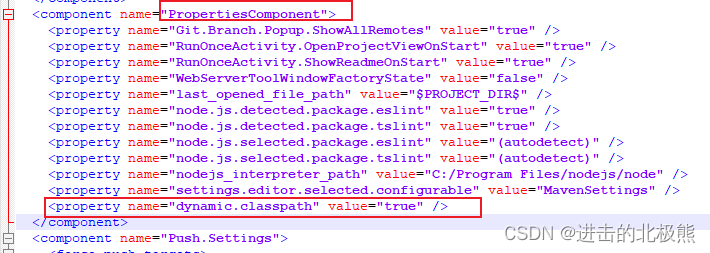

IDEA 项目启动报错 Shorten the command line via JAR manifest or via a classpath file and rerun.

随机推荐

Humi analysis: the integrated application of industrial Internet identity analysis and enterprise information system

Compter le temps d'exécution du programme PHP et définir le temps d'exécution maximum de PHP

How to modify MySQL fields as self growing fields

What are the changes in the 2022 PMP Exam?

Webapp development - Google official tutorial

Cartoon: interesting [pirate] question

Knowing that his daughter was molested, the 35 year old man beat the other party to minor injury level 2, and the court decided not to sue

Cmake tutorial Step3 (requirements for adding libraries)

c#图文混合,以二进制方式写入数据库

云主机oracle异常恢复----惜分飞

哈趣K1和哈趣H1哪个性价比更高?谁更值得入手?

Anaconda中配置PyTorch环境——win10系统(小白包会)

Sentinel-流量防卫兵

这个17岁的黑客天才,破解了第一代iPhone!

Oracle recovery tools -- Oracle database recovery tool

Use QT designer interface class to create two interfaces, and switch from interface 1 to interface 2 by pressing the key

Disabling and enabling inspections pycharm

中国银河证券开户安全吗 开户后多久能买股票

外盘黄金哪个平台正规安全,怎么辨别?

Kafaka technology lesson 1