
PyTorch linear regression

2022-07-05 11:42:00 【My abyss, my abyss】

1、 Import the tabular data

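```python
import pandas as pd

filename = "./data.csv"
data = pd.read_csv(filename)
features = data.iloc[:, 1:]  # every column after the first is a feature
labels = data.iloc[:, 0]     # the first column is the label
```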
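The post does not ship `data.csv`. To try the code, a minimal sketch like the one below can write a synthetic file in the layout the code expects: label in the first column, two feature columns after it. The column names, the true weights `[2.0, -3.4]`, the bias `4.2` and the noise level are all assumptions made for illustration, not the author's data.

```python
import csv
import random

# Hypothetical ground truth, used only to generate illustrative data
TRUE_W = [2.0, -3.4]
TRUE_B = 4.2

def make_synthetic_csv(path="data.csv", n=1000, noise=0.01, seed=0):
    """Write n rows of the form y, x1, x2 with y = w·x + b + Gaussian noise."""
    rng = random.Random(seed)
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["y", "x1", "x2"])  # label first, features after
        for _ in range(n):
            x = [rng.gauss(0, 1) for _ in TRUE_W]
            y = sum(w * xi for w, xi in zip(TRUE_W, x)) + TRUE_B + rng.gauss(0, noise)
            writer.writerow([y] + x)

make_synthetic_csv()
```

Because `pd.read_csv` treats the first row as a header by default, `data.iloc[:, 0]` then selects `y` and `data.iloc[:, 1:]` selects `x1` and `x2`.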

2、 Convert to tensors

```python
import torch

# DataFrame ----> Tensor
features = torch.tensor(features.values, dtype=torch.float32)
labels = torch.tensor(labels.values, dtype=torch.float32)
labels = torch.reshape(labels, (-1, 1))  # shape (n,) -> (n, 1), matching the model output
```
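The reshape to `(-1, 1)` matters because a linear layer with one output returns predictions of shape `(batch, 1)`. If the labels kept shape `(batch,)`, `nn.MSELoss` would broadcast the two into a `(batch, batch)` matrix and average the wrong differences; PyTorch only emits a UserWarning and the code keeps running. The small check below uses made-up numbers to show the effect.

```python
import warnings

import torch
from torch import nn

mse = nn.MSELoss()
y_hat = torch.tensor([[1.0], [2.0], [3.0]])  # model-style output, shape (3, 1)
y_flat = torch.tensor([1.0, 2.0, 3.0])       # labels without reshape, shape (3,)

# Correct: shapes match, so each prediction is compared with its own label
good = mse(y_hat, torch.reshape(y_flat, (-1, 1)))

# Wrong: (3, 1) vs (3,) broadcasts to (3, 3), so every prediction meets every label
with warnings.catch_warnings():
    warnings.simplefilter("ignore")  # silence PyTorch's size-mismatch warning
    bad = mse(y_hat, y_flat)

print(good.item(), bad.item())  # good is 0.0, bad is about 1.333 (= 12 / 9)
```

The predictions here are perfect, yet the broadcast version reports a non-zero loss, which is why the labels are reshaped before training.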

3、 Create an iterator to read the data in batches

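```python
# Note: this import rebinds the name `data`; the DataFrame from step 1 is no longer needed
from torch.utils import data

def load_array(data_arrays, batch_size, is_train=True):
    """Wrap the tensors in a DataLoader that yields mini-batches."""
    dataset = data.TensorDataset(*data_arrays)
    return data.DataLoader(dataset, batch_size, shuffle=is_train)

batch_size = 10
data_iter = load_array((features, labels), batch_size)
next(iter(data_iter))  # peek at the first batch to check the shapes
```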
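With `shuffle=True`, `DataLoader` draws a new random sample order on every pass over the data and then yields consecutive slices of `batch_size` samples; by default the last batch may be smaller. The pure-Python generator below is a rough sketch of that behaviour for intuition only. The name `iter_minibatches` is hypothetical, and the sketch ignores DataLoader features such as worker processes and collation into tensors.

```python
import random

def iter_minibatches(features, labels, batch_size, shuffle=True, rng=random):
    """Yield (X_batch, y_batch) lists, roughly like DataLoader over a TensorDataset."""
    indices = list(range(len(features)))
    if shuffle:
        rng.shuffle(indices)  # new random order on every call, i.e. every epoch
    for start in range(0, len(indices), batch_size):
        batch = indices[start:start + batch_size]  # the last batch may be shorter
        yield [features[i] for i in batch], [labels[i] for i in batch]

# Usage: 23 samples in batches of 10 give batch sizes 10, 10, 3
X = [[float(i), float(-i)] for i in range(23)]
y = [float(i) for i in range(23)]
sizes = [len(xb) for xb, _ in iter_minibatches(X, y, 10)]
print(sizes)  # [10, 10, 3]
```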

4、 Define the neural network

```python
from torch import nn

class LinearRegression(nn.Module):
    def __init__(self):
        super(LinearRegression, self).__init__()
        self.layer1 = nn.Linear(2, 1)  # 2 input features -> 1 output

    def forward(self, x):
        return self.layer1(x)
```
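`nn.Linear(2, 1)` stores a weight of shape `(1, 2)` and a bias of shape `(1,)` and computes `y_hat = x @ W.T + b`, which for a single output is exactly the linear regression model `y_hat = w1*x1 + w2*x2 + b`. The check below uses random inputs (not data from the post) to confirm that the layer and the hand-written formula agree.

```python
import torch
from torch import nn

torch.manual_seed(0)
layer = nn.Linear(2, 1)
x = torch.randn(5, 2)  # a batch of 5 samples with 2 features each

by_layer = layer(x)
by_hand = x @ layer.weight.T + layer.bias  # y_hat = x W^T + b

print(layer.weight.shape, layer.bias.shape)  # torch.Size([1, 2]) torch.Size([1])
print(torch.allclose(by_layer, by_hand))     # True
```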

5、 Train the network

```python
import matplotlib.pyplot as plt
from torch import optim

model = LinearRegression()
mse_loss = nn.MSELoss()
optimizer = optim.Adam(model.parameters(), lr=0.003)
Loss = []      # loss of every mini-batch, for plotting
epochs = 1000

def train():
    for i in range(epochs):
        for X, y in data_iter:
            y_hat = model(X)           # Compute the model output
            loss = mse_loss(y_hat, y)  # Mean squared error loss
            Loss.append(loss.item())   # Record the batch loss as a Python float
            optimizer.zero_grad()      # Clear gradients left from the previous batch
            loss.backward()            # Compute the gradients of the loss
            optimizer.step()           # Update the weights
        print(i, loss.item(), sep='\t')  # Epoch number and last batch loss

train()  # Training

for parameter in model.parameters():
    print(parameter)

plt.plot(Loss)
plt.show()
```
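The three calls at the end of the inner loop split one update into stages: `zero_grad()` clears the gradients from the previous batch (PyTorch accumulates them otherwise), `backward()` computes the gradient of the loss with respect to every parameter, and `step()` uses those gradients to change the parameters. The stdlib-only sketch below writes that cycle by hand for mini-batch gradient descent on the MSE loss. It swaps in plain gradient descent for Adam, whose update rule is more involved, and the data, learning rate and epoch count are illustrative assumptions.

```python
import random

def train_linear_gd(X, y, lr=0.1, epochs=200, batch_size=10, seed=0):
    """Mini-batch gradient descent on MSE for y_hat = w·x + b, without autograd."""
    rng = random.Random(seed)
    n_features = len(X[0])
    w, b = [0.0] * n_features, 0.0
    idx = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(idx)
        for start in range(0, len(idx), batch_size):
            batch = idx[start:start + batch_size]
            # "zero_grad": start each batch with fresh gradient accumulators
            grad_w, grad_b = [0.0] * n_features, 0.0
            # "backward": gradient of mean((y_hat - y)^2) with respect to w and b
            for i in batch:
                err = sum(wj * xj for wj, xj in zip(w, X[i])) + b - y[i]
                for j in range(n_features):
                    grad_w[j] += 2 * err * X[i][j] / len(batch)
                grad_b += 2 * err / len(batch)
            # "step": move each parameter against its gradient
            w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
            b -= lr * grad_b
    return w, b

# Usage on noiseless synthetic data with assumed true w = [2.0, -3.4], b = 4.2
gen = random.Random(1)
X = [[gen.gauss(0, 1), gen.gauss(0, 1)] for _ in range(200)]
y = [2.0 * x1 - 3.4 * x2 + 4.2 for x1, x2 in X]
w, b = train_linear_gd(X, y)
print(w, b)  # close to [2.0, -3.4] and 4.2
```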

6、 The complete code

```python
import matplotlib.pyplot as plt
import pandas as pd
import torch
from torch import nn, optim
from torch.utils import data


class LinearRegression(nn.Module):
    def __init__(self, feature_nums):
        super().__init__()
        self.layer1 = nn.Linear(feature_nums, 1)

    def forward(self, x):
        return self.layer1(x)


def load_array(data_arrays, batch_size, is_train=True):
    dataset = data.TensorDataset(*data_arrays)
    return data.DataLoader(dataset, batch_size, shuffle=is_train)


def Linear_Regression_pytorch(filepath, feature_nums, batch_size, learning_rate, epochs):
    # Read the table: the first column is the label, the rest are features.
    # Named df so it does not shadow the torch.utils.data module.
    df = pd.read_csv(filepath)
    features = torch.tensor(df.iloc[:, 1:].values, dtype=torch.float32)
    labels = torch.tensor(df.iloc[:, 0].values, dtype=torch.float32).reshape(-1, 1)

    data_iter = load_array((features, labels), batch_size)

    model = LinearRegression(feature_nums)
    mse_loss = nn.MSELoss()
    optimizer = optim.Adam(model.parameters(), lr=learning_rate)
    Loss = []

    for i in range(epochs):
        for X, y in data_iter:
            y_hat = model(X)           # Compute the model output
            loss = mse_loss(y_hat, y)  # Mean squared error loss
            optimizer.zero_grad()      # Clear gradients left from the previous batch
            loss.backward()            # Compute the gradients of the loss
            optimizer.step()           # Update the weights
        print(i, loss.item(), sep='\t')
        Loss.append(loss.item())       # Last batch loss of each epoch

    for parameter in model.parameters():
        print(parameter)

    plt.plot(Loss)
    plt.title("Loss")
    plt.show()


if __name__ == "__main__":
    Linear_Regression_pytorch(filepath="data.csv",
                              feature_nums=2,
                              batch_size=10,
                              learning_rate=0.05,
                              epochs=1000)
```
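Neither training loop above moves the model or the batches to a GPU, so both run on the CPU. A minimal sketch of how that could be done, falling back to the CPU when CUDA is unavailable, follows. It uses tiny random data and a bare `nn.Linear(2, 1)` instead of the CSV file and the `LinearRegression` class (assumptions made so the snippet runs on its own). Note that `loss.item()` works for GPU tensors, whereas `loss.detach().numpy()` would need a `.cpu()` call first.

```python
import torch
from torch import nn, optim
from torch.utils import data

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Tiny random stand-in for the CSV data: 2 features, 1 label column
torch.manual_seed(0)
features = torch.randn(100, 2)
labels = features @ torch.tensor([[2.0], [-3.4]]) + 4.2
data_iter = data.DataLoader(data.TensorDataset(features, labels), batch_size=10, shuffle=True)

model = nn.Linear(2, 1).to(device)  # the parameters now live on `device`
mse_loss = nn.MSELoss()
optimizer = optim.Adam(model.parameters(), lr=0.05)

losses = []
for epoch in range(5):
    for X, y in data_iter:
        X, y = X.to(device), y.to(device)  # batches must sit on the same device as the model
        loss = mse_loss(model(X), y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    losses.append(loss.item())  # .item() copies the scalar back to a Python float
print(device, losses)
```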

边栏推荐

- [office] eight usages of if function in Excel

- Open3D 网格(曲面)赋色

- Redis如何实现多可用区?

- 基于Lucene3.5.0怎样从TokenStream获得Token

- PHP中Array的hash函数实现

- How to protect user privacy without password authentication?

- ZCMU--1390: 队列问题(1)

- SET XACT_ABORT ON

- 项目总结笔记系列 wsTax KT Session2 代码分析

- Question and answer 45: application of performance probe monitoring principle node JS probe

猜你喜欢

11.(地图数据篇)OSM数据如何下载使用

The ninth Operation Committee meeting of dragon lizard community was successfully held

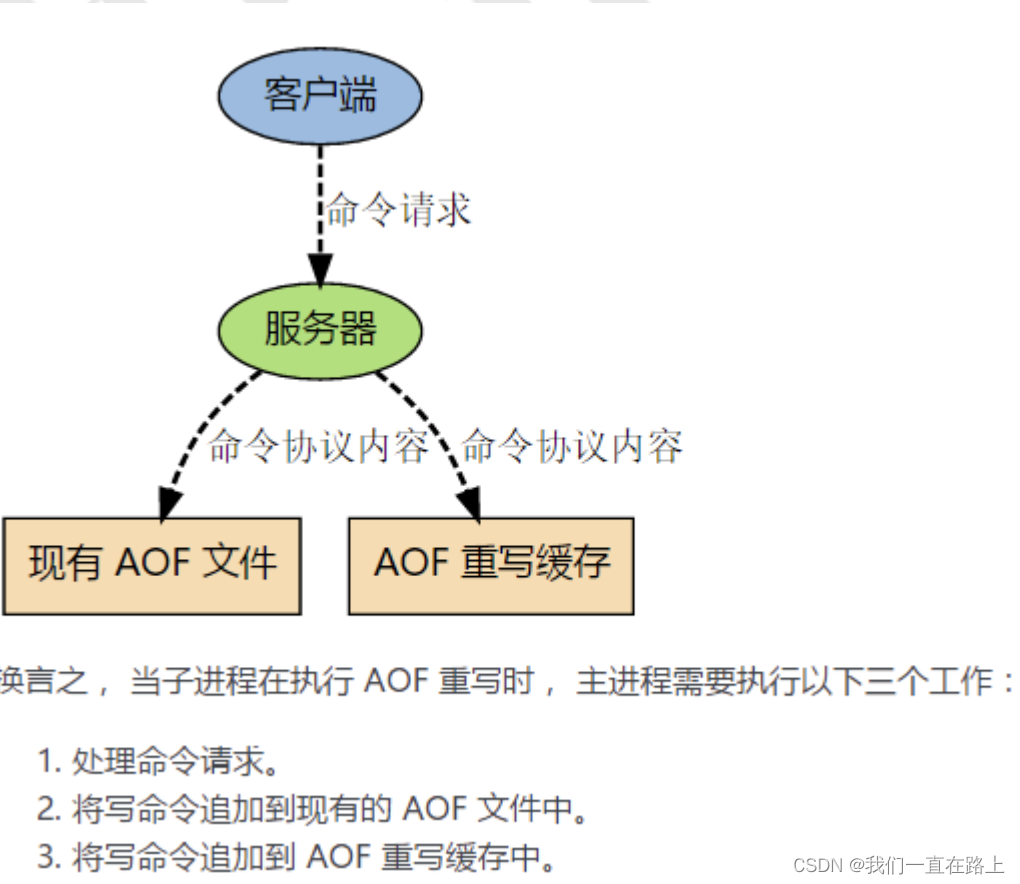

redis的持久化机制原理

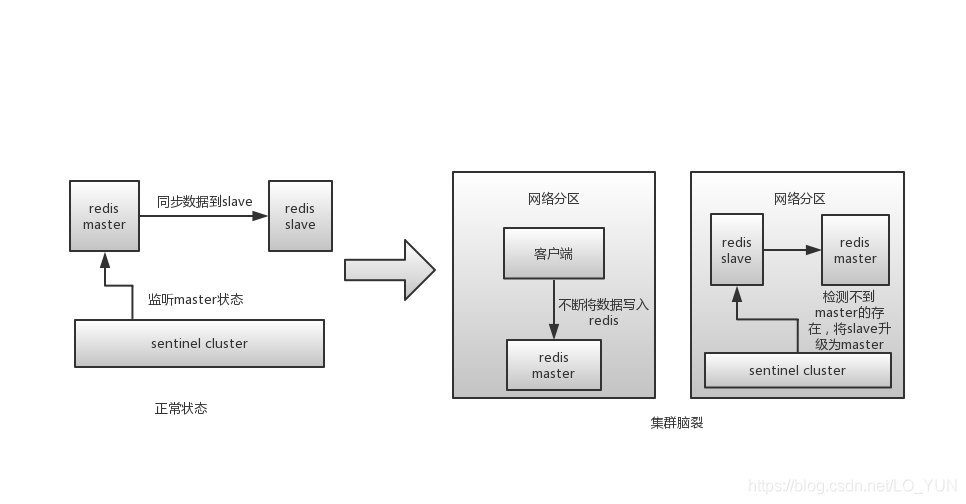

Redis集群(主从)脑裂及解决方案

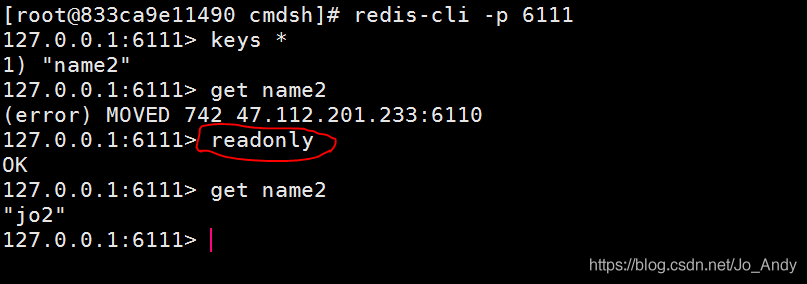

简单解决redis cluster中从节点读取不了数据(error) MOVED

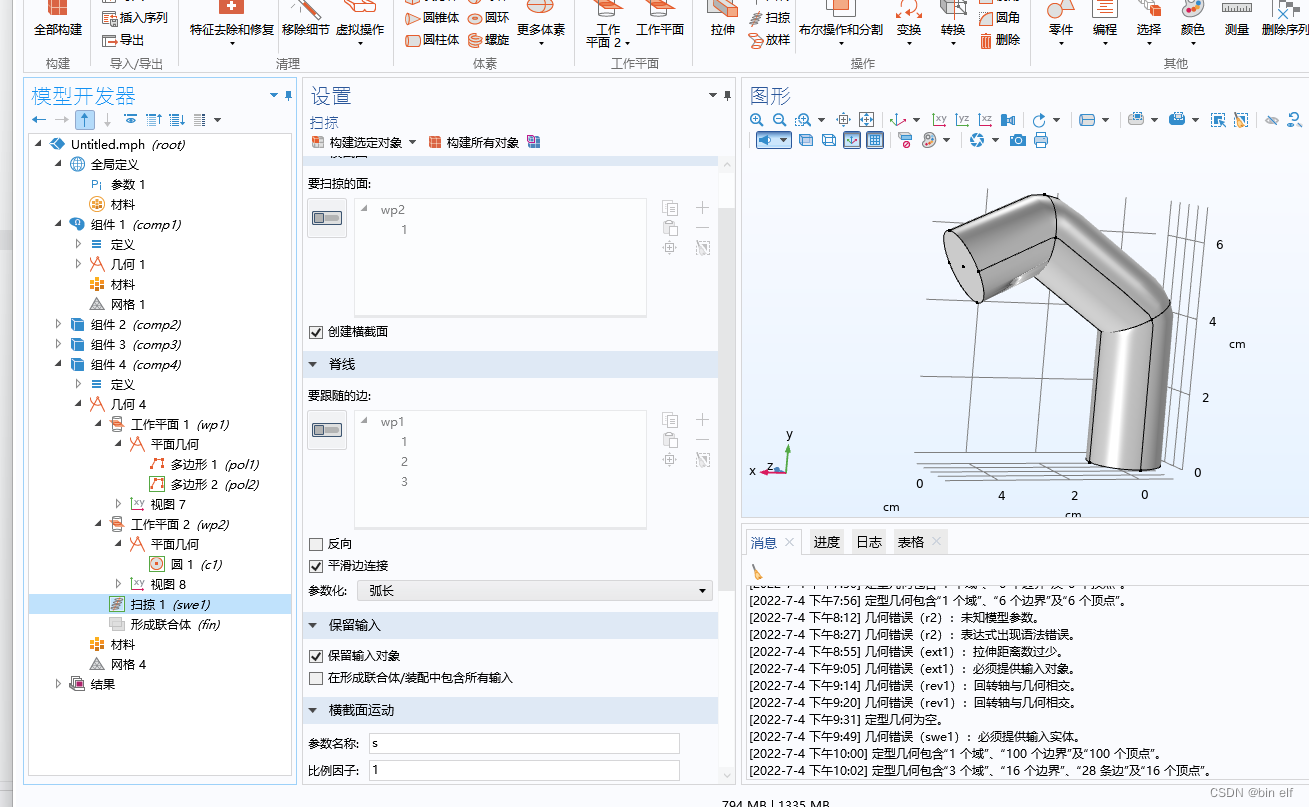

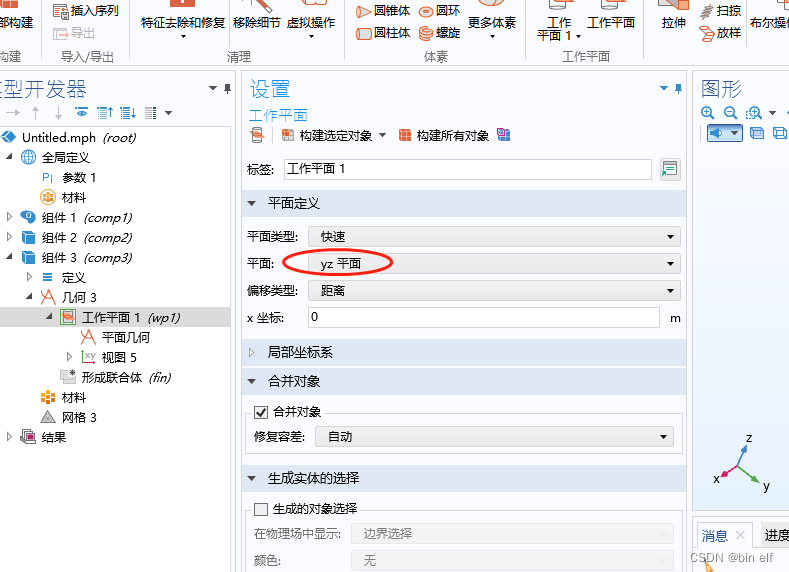

COMSOL--三维随便画--扫掠

comsol--三维图形随便画----回转

How can China Africa diamond accessory stones be inlaid to be safe and beautiful?

![[mainstream nivida graphics card deep learning / reinforcement learning /ai computing power summary]](/img/1a/dd7453bc5afc6458334ea08aed7998.png)

[mainstream nivida graphics card deep learning / reinforcement learning /ai computing power summary]

CDGA|数据治理不得不坚持的六个原则

随机推荐

How can China Africa diamond accessory stones be inlaid to be safe and beautiful?

Project summary notes series wstax kt session2 code analysis

Advanced technology management - what is the physical, mental and mental strength of managers

高校毕业求职难?“百日千万”网络招聘活动解决你的难题

以交互方式安装ESXi 6.0

【爬虫】charles unknown错误

Solve readobjectstart: expect {or N, but found n, error found in 1 byte of

如何让你的产品越贵越好卖

Evolution of multi-objective sorting model for classified tab commodity flow

【load dataset】

解决readObjectStart: expect { or n, but found N, error found in #1 byte of ...||..., bigger context ..

Ncp1342 chip substitute pn8213 65W gallium nitride charger scheme

Redis如何实现多可用区?

Spark Tuning (I): from HQL to code

解决grpc连接问题Dial成功状态为TransientFailure

Programmers are involved and maintain industry competitiveness

C operation XML file

12. (map data) cesium city building map

1个插件搞定网页中的广告

How to make your products as expensive as possible