Indoor user time series data classification baseline for the 2020 CCF BDCI training competition

2020-11-10 11:27:00 【osc-u 2koojuzp】

Indoor user time series data classification

Competition overview

Competition title: Indoor user movement time series data classification

Track: Training track

Background: As data accumulates, the demand for processing massive amounts of time series information is becoming increasingly prominent. Time series classification is one of the key tasks in time series analysis and has wide and varied applications. Its goal is to assign a discrete label to a time series. Traditional feature extraction algorithms use statistical information from the series as the basis for classification. In recent years, time series classification based on deep learning has made great progress: with end-to-end feature extraction, deep learning avoids tedious manual feature design. Classifying time series effectively, so that sequences sharing a certain pattern within a complex dataset are assigned to the same group, matters for both academic research and industrial applications.

Task: Given the practical needs above and the progress of deep learning, this training competition aims to build a general time series classification algorithm. By building an accurate time series classification model for this problem, participants are encouraged to explore more robust representations of time series features.

Competition link: https://www.datafountain.cn/competitions/484

Data overview

The data is compiled from the public UCI datasets available online (anonymized). It covers 2 classes of time series. Datasets of this kind are widely used in business scenarios involving time series classification.

| File category | File name | Contents |
|---|---|---|
| Training set | train.csv | Training data file; the label column is CLASS |
| Test set | test.csv | Test data file; no label |
| Field description | Field description .xlsx | Detailed description of the XXX fields in the training and test sets |
| Submission sample | Ssample_submission.csv | Contains only two fields, ID and CLASS |

Data analysis

This is a binary classification problem. Inspecting the training set shows that it is very small (210 samples) yet has many features (240), and that the labels 0 and 1 are evenly distributed (about half each). Given this, applying a neural network directly would leave too many parameters to train for so little data, and the results would likely be unsatisfactory. A tree model would require tuning several hyperparameters to fit the data, which is also cumbersome. This baseline therefore takes the simplest option, a support vector machine, which turns out to give good results.
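The checks described above (sample count, feature count, label balance) can be sketched as follows. This is a minimal illustration, not the author's code: the helper name `summarize` is hypothetical, and the DataFrame is a synthetic stand-in built to the sizes quoted in the text, since `train.csv` itself is not reproduced here.

```python
import numpy as np
import pandas as pd


def summarize(df, label_col="CLASS", id_col="ID"):
    """Return sample count, feature count, and the share of each class."""
    features = df.drop(columns=[id_col, label_col])
    shares = df[label_col].value_counts(normalize=True).sort_index()
    return {
        "n_samples": len(df),
        "n_features": features.shape[1],
        "class_share": shares.to_dict(),
    }


# Synthetic stand-in (assumption): 210 rows and 240 feature columns,
# matching the sizes quoted in the text, with exactly balanced labels.
rng = np.random.default_rng(0)
demo = pd.DataFrame(
    rng.normal(size=(210, 240)),
    columns=[f"f{i}" for i in range(240)],
)
demo.insert(0, "ID", np.arange(210))
demo["CLASS"] = np.arange(210) % 2

summary = summarize(demo)
print(summary["n_samples"], summary["n_features"], summary["class_share"])
```

On the real data, `summarize(pd.read_csv('train.csv'))` would be the equivalent call, assuming the columns are named `ID` and `CLASS` as in the baseline.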

Baseline Program

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import KFold
from sklearn.svm import SVR

train = pd.read_csv('train.csv')
test = pd.read_csv('test.csv')

# Separate features and labels
X_train_c = train.drop(['ID', 'CLASS'], axis=1).values
y_train_c = train['CLASS'].values
X_test_c = test.drop(['ID'], axis=1).values

nfold = 5
kf = KFold(n_splits=nfold, shuffle=True, random_state=2020)
prediction1 = np.zeros((len(X_test_c), ))

for i, (train_index, valid_index) in enumerate(kf.split(X_train_c, y_train_c)):
    print("\nFold {}".format(i + 1))
    X_train, label_train = X_train_c[train_index], y_train_c[train_index]
    X_valid, label_valid = X_train_c[valid_index], y_train_c[valid_index]

    # SVR regresses on the 0/1 labels; its output is rounded to a class later
    clf = SVR(kernel='rbf', C=1, gamma='scale')
    clf.fit(X_train, label_train)

    # Accuracy on the held-out fold
    x1 = clf.predict(X_valid)
    print("valid accuracy: {:.4f}".format(np.mean(np.round(x1) == label_valid)))

    # Accumulate the fold-averaged prediction on the test set
    y1 = clf.predict(X_test_c)
    prediction1 += y1 / nfold

# Round the averaged score to a label; clip in case SVR leaves [0, 1]
result1 = np.clip(np.round(prediction1), 0, 1).astype(int)

# Test-set IDs, hardcoded as 210..313 (104 rows)
id_ = range(210, 314)
df = pd.DataFrame({'ID': id_, 'CLASS': result1})
df.to_csv("baseline.csv", index=False)
```
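The baseline fits a regressor (`SVR`) to the 0/1 labels and rounds its output. As a point of comparison, here is a sketch of a variant that uses a classifier directly. It swaps in `SVC`, `StandardScaler`, and `StratifiedKFold`, none of which the baseline actually uses, and it runs on synthetic data from `make_classification` sized like the training set, so the scores it prints say nothing about the competition data.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in (assumption): 210 samples, 240 features, 2 classes.
X, y = make_classification(
    n_samples=210,
    n_features=240,
    n_informative=20,
    n_classes=2,
    random_state=2020,
)

# Scale features inside the pipeline so each fold is scaled on its own
# training part only, then classify with an RBF-kernel SVM.
model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1, gamma="scale"))

# Stratified folds keep the 0/1 ratio roughly equal in every fold.
skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=2020)
scores = cross_val_score(model, X, y, cv=skf, scoring="accuracy")

print("fold accuracies:", scores)
print("mean accuracy:", scores.mean())
```

To try it on the real data, `X` and `y` would be replaced by `X_train_c` and `y_train_c` from the baseline. Whether it beats the SVR approach there is untested.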

Submission results

Submitting the baseline gives a score of 0.83653846154.

Because the data is split into 5 folds, the score of a submission may fluctuate slightly.
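One way to see how much the fold split alone can move the result is to repeat the fold-averaged prediction with different shuffle seeds. The sketch below does this on synthetic data. The helper `fold_average_predict` and the choice of seeds are hypothetical, and the 210/104 split only mirrors the train and test sizes implied by the baseline.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import KFold, train_test_split
from sklearn.svm import SVR


def fold_average_predict(X, y, X_test, seed, n_splits=5):
    """Average SVR predictions over K folds, then round to a 0/1 label."""
    kf = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    pred = np.zeros(len(X_test))
    for train_idx, _ in kf.split(X):
        reg = SVR(kernel="rbf", C=1, gamma="scale")
        reg.fit(X[train_idx], y[train_idx])
        pred += reg.predict(X_test) / n_splits
    return np.clip(np.round(pred), 0, 1).astype(int)


# Synthetic stand-in (assumption): 314 samples split into 210 train / 104 test.
X_all, y_all = make_classification(
    n_samples=314, n_features=240, n_informative=20, random_state=0
)
X_tr, X_te, y_tr, y_te = train_test_split(
    X_all, y_all, test_size=104, random_state=0
)

# Same model and data, different fold shuffles.
accuracies = {}
for seed in (0, 1, 2020):
    labels = fold_average_predict(X_tr, y_tr, X_te, seed)
    accuracies[seed] = float(np.mean(labels == y_te))

print(accuracies)
```

With `random_state` fixed, as in the baseline, a rerun on the same data reproduces the same folds.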

Copyright notice

This article was written by [osc-u 2koojuzp]. Please include a link to the original when reposting. Thank you.

边栏推荐

- 计算机专业的学生要怎样做才能避免成为低级的码农?

- Q & A and book donation activities of harbor project are in hot progress

- Alibaba cloud ECS server is not understood by DDoS. Where should I go?

- Processing of one to many, many to many relations and functional interface in ABP framework (1)

- Imook nodejs personal learning notes (log)

- Express learning notes (MOOC)

- 子线程调用invalidate()产生“Only the original thread that created a view hierarchy can touch its views.”原因分析

- Idea submit SVN ignore file settings

- Call the open source video streaming media platform dawinffc

- 如何看待阿里云成立新零售事业部?

猜你喜欢

一不小心画了 24 张图剖析计网应用层协议!

Imook nodejs personal learning notes (log)

带劲!饿了么软件测试python自动化岗位核心面试题出炉,你全程下来会几个?

Mcp4725 driver based on FPGA

Production practice | Flink + live broadcast (1) | requirements and architecture

【CCPC】2020CCPC长春 F - Strange Memory | 树上启发式合并(dsu on a tree)、主席树

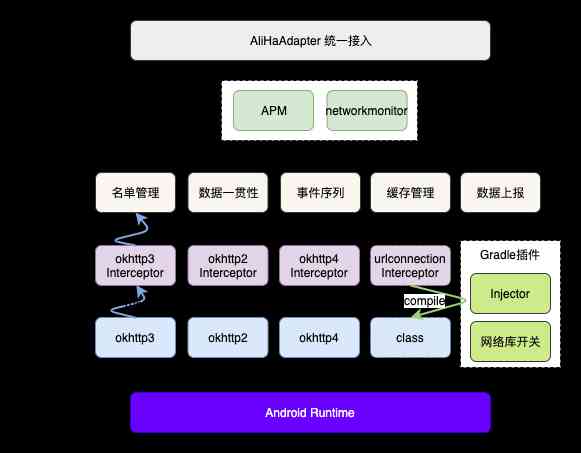

Android network performance monitoring scheme

今日数据行业日报-2020.11.9 - 知乎

![[C#.NET 拾遗补漏]11:最基础的线程知识](/img/5f/3db6e0620191da4893ad7b4e2ba064.jpg)

[C#.NET 拾遗补漏]11:最基础的线程知识

C language uses random number to generate matrix to realize fast transposition of triples.

随机推荐

刷题到底有什么用?你这么刷题还真没用

Taulia launches international payment terms database

Express learning notes (MOOC)

js 基础算法题(一)

To speed up the process of forming a global partnership between lifech and Alibaba Group

python pip命令的使用

微服务授权应该怎么做?

[Senior Test Engineer] three sets of 18K automated test interviews (Netease, byte jump, meituan)

CentOS7本地源yum配置

Leetcode 5561. Get the maximum value in the generated array

C++ 标准库头文件

LeetCode 5561. 获取生成数组中的最大值

Why should small and medium sized enterprises use CRM system

中小企业为什么要用CRM系统

ElasticSearch 集群基本概念及常用操作汇总(建议收藏)

Centos7 Rsync + crontab scheduled backup

不用懂代码,会打字就可以建站?1111 元礼包帮你一站配齐!

阿里巴巴开发手册强制使用SLF4J作为门面担当的秘密,我搞清楚了

STATISTICS STATS 380

Use Python to guess which numbers in the set are added to get the sum of a number