Redis introduction complete tutorial: client case analysis

2022-07-07 02:49:00 【Gu Ge academic】

So far, the Redis client material has essentially been covered. This section analyzes two cases encountered in Redis development and operations to deepen readers' understanding of client-related knowledge.

4.6.1 Redis memory surge

1. Symptoms

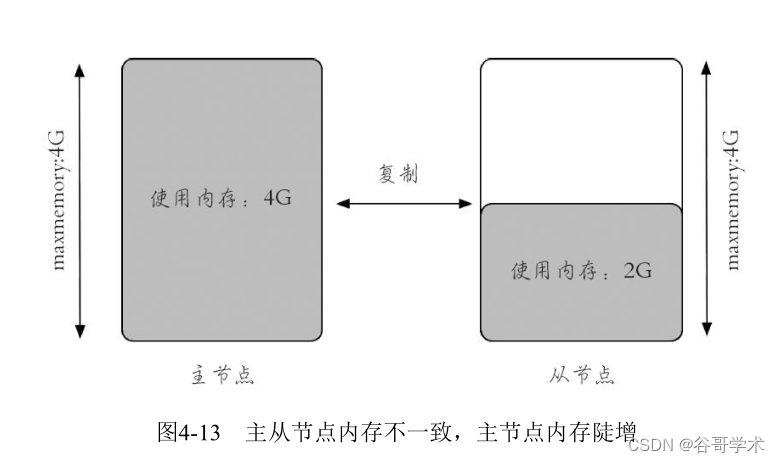

Server side: memory usage on the Redis master node rose sharply and almost reached maxmemory, while memory on the slave node did not change, as shown in Figure 4-13. Chapter 5 covers Redis replication. For now, it is enough to know that under normal conditions the master and slave use roughly the same amount of memory.

Client side: the client received OOM errors. This means the memory used by the Redis master had exceeded the maxmemory setting, so new data could not be written:

redis.clients.jedis.exceptions.JedisDataException: OOM command not allowed when used memory > 'maxmemory'

2. Analyzing the cause

Judging from the symptoms, there are two possible causes.

1) There really are a large number of writes, but master-slave replication has broken down. Checking the replication information showed that replication was normal and that the master and slave data were basically consistent.

Number of keys on the master node:

127.0.0.1:6379> dbsize

(integer) 2126870

Number of keys on the slave node:

127.0.0.1:6380> dbsize

(integer) 2126870

2) Master memory is being consumed by something else. To check whether the client buffers caused the surge in master memory, query the relevant information with the info clients command:

127.0.0.1:6379> info clients

# Clients

connected_clients:1891

client_longest_output_list:225698

client_biggest_input_buf:0

blocked_clients:0
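The judgment that follows rests on one field of this output. Below is a minimal Java sketch of that check. It assumes the raw text of info clients has already been fetched. The class name InfoClientsCheck and the 10,000-object alarm threshold are hypothetical choices for illustration, not Redis settings:

```java
import java.util.HashMap;
import java.util.Map;

/**
 * Sketch: parse the text returned by "info clients" and flag an abnormally
 * long client output list. The threshold is a hypothetical alarm value;
 * Redis itself has no such setting.
 */
final class InfoClientsCheck {

    /** Parses "key:value" lines, skipping blank lines and "# Section" headers. */
    static Map<String, String> parseInfo(String info) {
        Map<String, String> fields = new HashMap<>();
        for (String line : info.split("\\r?\\n")) {
            line = line.trim();
            if (line.isEmpty() || line.startsWith("#")) {
                continue;
            }
            int colon = line.indexOf(':');
            if (colon > 0) {
                fields.put(line.substring(0, colon), line.substring(colon + 1));
            }
        }
        return fields;
    }

    /** True when client_longest_output_list is above the given threshold. */
    static boolean outputListTooLong(Map<String, String> fields, long threshold) {
        String value = fields.getOrDefault("client_longest_output_list", "0");
        return Long.parseLong(value) > threshold;
    }

    public static void main(String[] args) {
        // The info clients output from this case.
        String info = "# Clients\n"
                + "connected_clients:1891\n"
                + "client_longest_output_list:225698\n"
                + "client_biggest_input_buf:0\n"
                + "blocked_clients:0\n";
        Map<String, String> fields = parseInfo(info);
        // Hypothetical alarm threshold of 10,000 queued reply objects.
        System.out.println(outputListTooLong(fields, 10_000)); // true
    }
}
```

Parsing the whole section into a map, rather than matching a single field, lets the same helper read the other info fields as well.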

The output buffers are clearly abnormal: the longest client output list already exceeds 200,000 objects. The next step is to use the client list command to find the connection with an abnormal omem value. Normally most clients have omem=0, because their replies are consumed quickly enough. So run the following command to find client connections whose omem is non-zero:

redis-cli client list | grep -v "omem=0"

The following record was found:

id=7 addr=10.10.xx.78:56358 fd=6 name= age=91 idle=0 flags=O db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=224869 omem=2129300608 events=rw cmd=monitor

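The cause is now obvious: a client executing the monitor command. Its flags=O marks a client in monitor mode. This single connection holds an output list of 224,869 objects and an omem of 2,129,300,608 bytes, about 2 GB. Because monitor pushes every command the server processes to this client, its output buffer grows faster than it can be consumed under heavy write traffic.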
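The grep filter above works on text. The sketch below applies the same idea in Java, assuming the raw client list text has already been retrieved. It parses each line into key=value fields, keeps the connections whose omem is numerically greater than 0, and sorts them so the largest output buffer comes first. The class name OmemFilter is hypothetical, and the first sample line in main is an invented normal client added for contrast:

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/**
 * Sketch: a numeric version of `redis-cli client list | grep -v "omem=0"`.
 * Returns connections whose output-buffer memory (omem) is non-zero,
 * largest first.
 */
final class OmemFilter {

    /** Splits one client list line such as "id=7 name= omem=0" into fields. */
    static Map<String, String> parseLine(String line) {
        Map<String, String> fields = new HashMap<>();
        for (String token : line.trim().split("\\s+")) {
            int eq = token.indexOf('=');
            if (eq > 0) {
                fields.put(token.substring(0, eq), token.substring(eq + 1));
            }
        }
        return fields;
    }

    /** Returns parsed clients with omem > 0, sorted by omem in descending order. */
    static List<Map<String, String>> nonZeroOmem(String clientList) {
        List<Map<String, String>> result = new ArrayList<>();
        for (String line : clientList.split("\\r?\\n")) {
            if (line.trim().isEmpty()) {
                continue;
            }
            Map<String, String> fields = parseLine(line);
            long omem = Long.parseLong(fields.getOrDefault("omem", "0"));
            if (omem > 0) {
                result.add(fields);
            }
        }
        result.sort(Comparator.comparingLong(
                (Map<String, String> f) -> Long.parseLong(f.get("omem"))).reversed());
        return result;
    }

    /** The O flag marks a client in monitor mode. */
    static boolean isMonitor(Map<String, String> fields) {
        return fields.getOrDefault("flags", "").contains("O");
    }

    public static void main(String[] args) {
        // First line: invented normal client; second line: the record from this case.
        String clientList =
                "id=5 addr=127.0.0.1:51000 fd=5 name= age=300 idle=0 flags=N db=0 omem=0 cmd=get\n"
              + "id=7 addr=10.10.xx.78:56358 fd=6 name= age=91 idle=0 flags=O db=0 oll=224869 omem=2129300608 cmd=monitor\n";
        for (Map<String, String> c : nonZeroOmem(clientList)) {
            System.out.println("id=" + c.get("id") + " omem=" + c.get("omem")
                    + " monitor=" + isMonitor(c));
        }
    }
}
```

Sorting by omem keeps the monitor connection at the top even when many clients have small non-zero buffers.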

3. Resolution and follow-up

The immediate fix is simple: use the client kill command to kill this connection so that the other clients can resume writing data normally. More important is how to detect and avoid this problem promptly in the future. The main measures are:

· At the operations level, disable the monitor command, for example by using rename-command to rename monitor to a random string. If monitor has not been renamed, monitor its use instead, for example with client list.

· At the development level, train developers not to use monitor in production. Because monitor is sometimes useful during testing, banning it completely is unrealistic.

· Limit the size of the client output buffer with the client-output-buffer-limit setting.

· Use a dedicated Redis operations tool such as CacheCloud, introduced in Chapter 13. With it, the problem above triggers an alarm in CacheCloud, so it can be identified and located quickly.
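To make the output-buffer measure concrete, the sketch below models the hard/soft rule that redis.conf documents for client-output-buffer-limit. A client is disconnected as soon as its output buffer reaches the hard limit. It is also disconnected when the buffer stays at or above the soft limit continuously for longer than the soft-seconds window. A value of 0 disables that check. This is a hypothetical teaching model, not Redis source code. The class name and the 64 MB / 32 MB / 60 s figures are assumptions:

```java
/**
 * Hypothetical model of the client-output-buffer-limit rule.
 * Hard limit: disconnect as soon as usage reaches it.
 * Soft limit: disconnect if usage stays at or above it continuously for
 * longer than softSeconds (modelled here as strictly greater).
 * A limit of 0 disables that check. Teaching sketch, not Redis code.
 */
final class OutputBufferLimit {
    private final long hardBytes;
    private final long softBytes;
    private final long softSeconds;
    /** Time (seconds) when usage first reached the soft limit, or -1 if below it. */
    private long softReachedAt = -1;

    OutputBufferLimit(long hardBytes, long softBytes, long softSeconds) {
        this.hardBytes = hardBytes;
        this.softBytes = softBytes;
        this.softSeconds = softSeconds;
    }

    /** Feeds one usage sample taken at nowSeconds; true means disconnect the client. */
    boolean shouldDisconnect(long usedBytes, long nowSeconds) {
        boolean hard = hardBytes > 0 && usedBytes >= hardBytes;
        boolean soft = softBytes > 0 && usedBytes >= softBytes;
        if (soft) {
            if (softReachedAt < 0) {
                softReachedAt = nowSeconds; // first sample over the soft limit
                soft = false;
            } else if (nowSeconds - softReachedAt <= softSeconds) {
                soft = false;               // still inside the grace window
            }
        } else {
            softReachedAt = -1;             // dropped below: restart the window
        }
        return hard || soft;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        // Hypothetical limits: hard 64 MB, soft 32 MB for 60 seconds.
        OutputBufferLimit limit = new OutputBufferLimit(64 * mb, 32 * mb, 60);
        System.out.println(limit.shouldDisconnect(40 * mb, 0));  // false: window starts
        System.out.println(limit.shouldDisconnect(40 * mb, 61)); // true: soft limit held > 60 s
        // The monitor client in this case had omem=2129300608 bytes (about 2 GB).
        OutputBufferLimit fresh = new OutputBufferLimit(64 * mb, 32 * mb, 60);
        System.out.println(fresh.shouldDisconnect(2129300608L, 0)); // true: hard limit
        // With "0 0 0" (no limits) the same client is never disconnected.
        OutputBufferLimit unlimited = new OutputBufferLimit(0, 0, 0);
        System.out.println(unlimited.shouldDisconnect(2129300608L, 0)); // false
    }
}
```

Suppose a hard limit had been configured for the class that covers this client. The monitor connection in this case would then have been dropped once its buffer reached that limit, instead of growing to about 2 GB.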

4.6.2 Periodic client timeouts

1. Symptoms

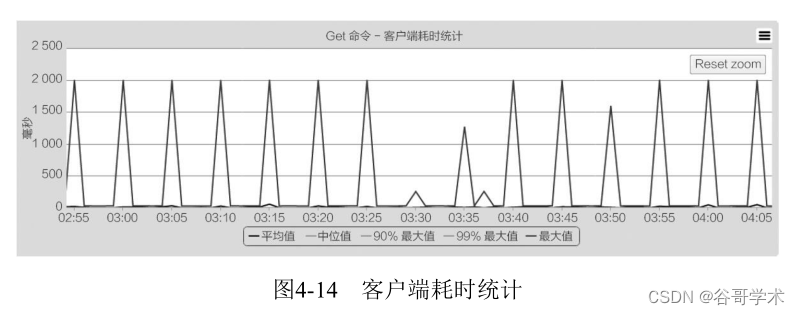

Client side: the client experienced a large number of timeouts. Analysis showed that they occurred periodically, which provided an important clue for locating the problem:

Caused by: redis.clients.jedis.exceptions.JedisConnectionException: java.net.SocketTimeoutException: connect timed out

Server side: there was no obvious anomaly on the server, only some slow queries.

2. Analysis

· Network: is there a periodic problem in the network between server and client? Observation showed that the network was normal.

· Redis itself: Redis log statistics were reviewed and no anomaly was found.

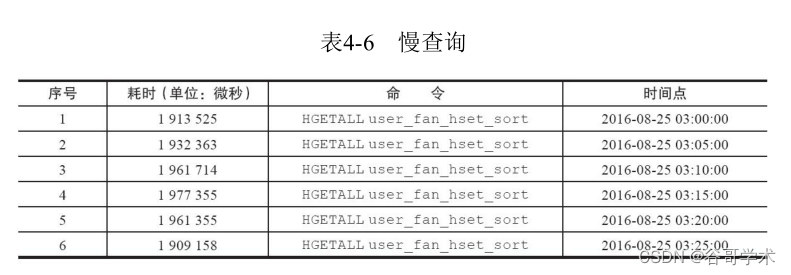

· Client: because the problem is periodic, the timeout times were compared with the history of the slow query log. Whenever a slow query appeared, the client produced a large number of connection timeouts. The two sets of time points basically coincide, as shown in Table 4-6 and Figure 4-14.
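The comparison in the last bullet can be sketched as a small Java helper. It takes the times of client timeouts and the times of slow log entries, both in seconds on the same clock. It then reports what fraction of timeouts fall within a window around some slow query. The class name, the 5-second window and the sample times (slow queries every 300 seconds, mimicking a periodic pattern) are hypothetical:

```java
import java.util.Arrays;

/** Sketch: how many client timeouts line up with slow log entries? */
final class TimeoutCorrelation {

    /**
     * Returns the fraction of timeouts that happened within windowSeconds of
     * at least one slow log entry. All times are seconds on the same clock.
     */
    static double fractionNearSlowQueries(long[] timeouts, long[] slowLogTimes, long windowSeconds) {
        if (timeouts.length == 0) {
            return 0.0;
        }
        long[] sorted = slowLogTimes.clone();
        Arrays.sort(sorted);
        int near = 0;
        for (long t : timeouts) {
            if (hasEntryWithin(sorted, t, windowSeconds)) {
                near++;
            }
        }
        return (double) near / timeouts.length;
    }

    /** True if some value of the sorted array lies in [t - window, t + window]. */
    private static boolean hasEntryWithin(long[] sorted, long t, long window) {
        int i = Arrays.binarySearch(sorted, t - window);
        // An exact match is already inside the range; otherwise -i - 1 is the
        // first index whose value exceeds t - window.
        int first = i >= 0 ? i : -i - 1;
        return first < sorted.length && sorted[first] <= t + window;
    }

    public static void main(String[] args) {
        // Hypothetical data: slow queries every 300 s, five observed timeouts.
        long[] slowLogTimes = {0, 300, 600};
        long[] timeouts = {1, 2, 301, 450, 602};
        System.out.println(fractionNearSlowQueries(timeouts, slowLogTimes, 5)); // 0.8
    }
}
```

A fraction close to 1 suggests, but does not prove, that the slow queries and the timeouts are related. Confirmation still requires finding the offending command, as the next step does.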

In the end, the problem was traced to a slow operation. Running hlen showed that the hash holds more than 2.8 million elements. Redis executes commands on a single thread, so reading the whole hash inevitably blocks it. Talking with the application team revealed that they had a scheduled task that ran hgetall on this hash every 5 minutes.

127.0.0.1:6399> hlen user_fan_hset_sort

(integer) 2883279

The problem could be located quickly because a client monitoring tool collected statistics, which made the problem much easier to see. If Redis were run as a black box, it would be hard to find this problem quickly. Speed matters when handling production problems.

3. Resolution and follow-up

The fix is simple: the business side only needs to deal with its slow queries promptly. More important is how to detect and avoid this problem promptly in the future. There are three main points:

· At the operations level, monitor slow queries and raise an alarm as soon as a threshold is exceeded.

· At the development level, deepen understanding of Redis to avoid using it incorrectly.

· Use a dedicated Redis operations tool such as CacheCloud, introduced in Chapter 13. With it, the problem above triggers an alarm in CacheCloud, so it can be identified and located quickly.
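For the operations-level point, the core of a slow-query alarm is comparing each slow log entry's execution time with a threshold. Below is a minimal sketch, assuming the entries have already been fetched with slowlog get. Each entry carries an id, a Unix timestamp, an execution time in microseconds and the command arguments. The SlowLogEntry type, the 100 ms threshold and the sample entries are hypothetical:

```java
import java.util.ArrayList;
import java.util.List;

/** Sketch: select slow log entries that should trigger an alarm. */
final class SlowLogAlarm {

    /** Hypothetical holder for the fields slowlog get reports per entry. */
    static final class SlowLogEntry {
        final long id;
        final long unixTime;       // when the entry was logged (Unix seconds)
        final long durationMicros; // execution time in microseconds
        final String command;      // command name and arguments

        SlowLogEntry(long id, long unixTime, long durationMicros, String command) {
            this.id = id;
            this.unixTime = unixTime;
            this.durationMicros = durationMicros;
            this.command = command;
        }
    }

    /** Returns the entries whose execution time is strictly above the threshold. */
    static List<SlowLogEntry> overThreshold(List<SlowLogEntry> entries, long thresholdMicros) {
        List<SlowLogEntry> alarms = new ArrayList<>();
        for (SlowLogEntry e : entries) {
            if (e.durationMicros > thresholdMicros) {
                alarms.add(e);
            }
        }
        return alarms;
    }

    public static void main(String[] args) {
        // Made-up sample entries; real ones would come from slowlog get.
        List<SlowLogEntry> entries = List.of(
                new SlowLogEntry(1, 1_000L, 12_000L, "hget some_hash field"),
                new SlowLogEntry(2, 1_300L, 1_500_000L, "hgetall big_hash"));
        long thresholdMicros = 100_000L; // hypothetical 100 ms alarm threshold
        for (SlowLogEntry e : overThreshold(entries, thresholdMicros)) {
            System.out.println("ALARM id=" + e.id + " duration=" + e.durationMicros
                    + "us command=" + e.command);
        }
    }
}
```

Keeping this alarm threshold separate from slowlog-log-slower-than lets Redis record moderately slow commands while only the severe ones raise an alarm.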

边栏推荐

- Unity webgl adaptive web page size

- Redis入门完整教程:问题定位与优化

- Redis入门完整教程:AOF持久化

- Niuke programming problem -- double pointer of 101 must be brushed

- Google Earth engine (GEE) -- 1975 dataset of Landsat global land survey

- C#/VB.NET 删除Word文檔中的水印

- Redis入門完整教程:問題定比特與優化

- wzoi 1~200

- Redis入门完整教程:RDB持久化

- PSINS中19维组合导航模块sinsgps详解(初始赋值部分)

猜你喜欢

Google Earth Engine(GEE)——Landsat 全球土地调查 1975年数据集

![[Mori city] random talk on GIS data (II)](/img/5a/dfa04e3edee5aa6afa56dfe614d59f.jpg)

[Mori city] random talk on GIS data (II)

换个姿势做运维!GOPS 2022 · 深圳站精彩内容抢先看!

Station B's June ranking list - feigua data up main growth ranking list (BiliBili platform) is released!

wireshark安装

Dotconnect for DB2 Data Provider

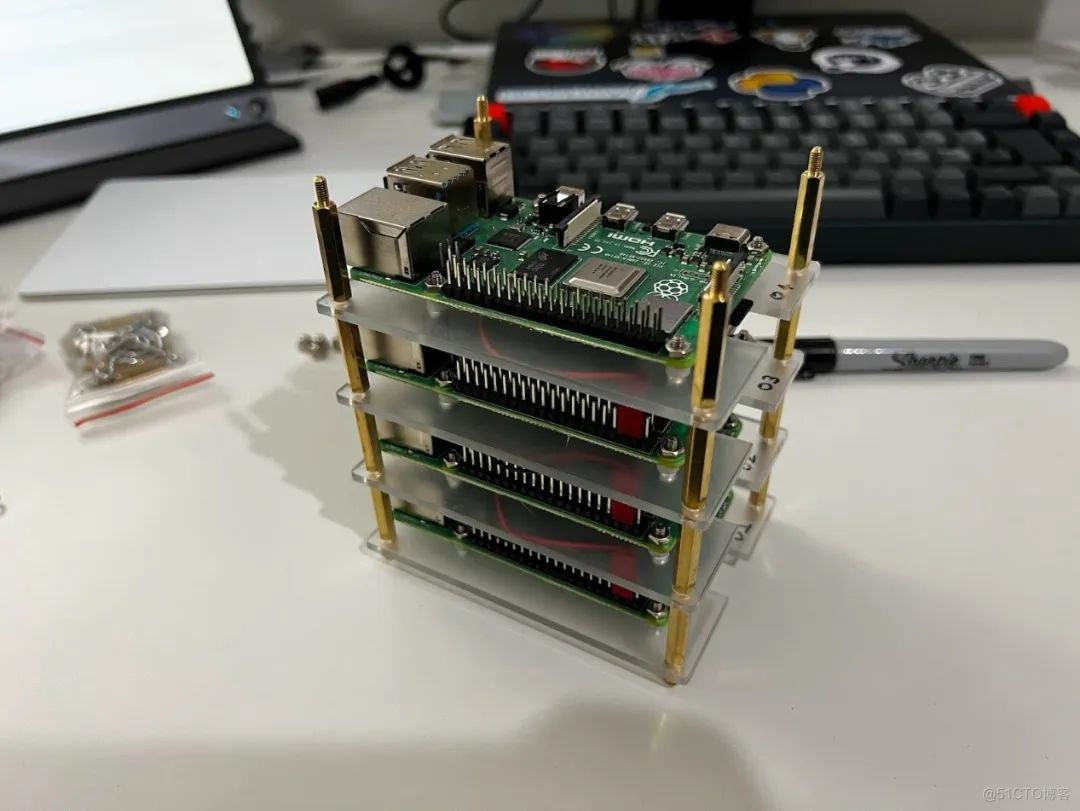

How to build a 32core raspberry pie cluster from 0 to 1

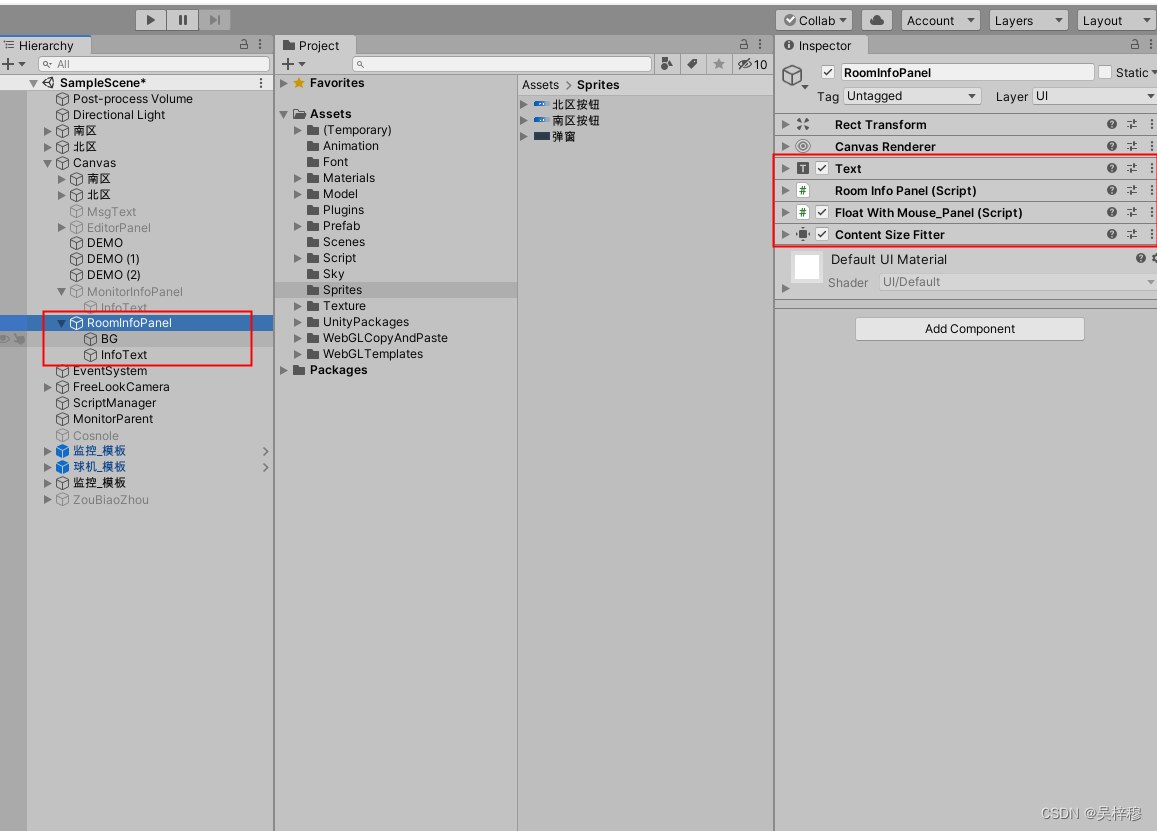

The panel floating with the mouse in unity can adapt to the size of text content

你不可不知道的Selenium 8种元素定位方法,简单且实用

The annual salary of general test is 15W, and the annual salary of test and development is 30w+. What is the difference between the two?

随机推荐

Dotconnect for DB2 Data Provider

基于ensp防火墙双击热备二层网络规划与设计

Examples of how to use dates in Oracle

牛客编程题--必刷101之双指针篇

What management points should be paid attention to when implementing MES management system

Redis入门完整教程:客户端常见异常

Summer Challenge database Xueba notes (Part 2)~

KYSL 海康摄像头 8247 h9 isapi测试

Work of safety inspection

Redis入门完整教程:客户端管理

Leetcode:minimum_ depth_ of_ binary_ Tree solutions

MySQL - common functions - string functions

Redis入门完整教程:复制拓扑

所谓的消费互联网仅仅只是做行业信息的撮合和对接,并不改变产业本身

ODBC database connection of MFC windows programming [147] (with source code)

[software test] the most complete interview questions and answers. I'm familiar with the full text. If I don't win the offer, I'll lose

Unity webgl adaptive web page size

Electrical engineering and automation

wzoi 1~200

Qpushbutton- "function refinement"