当前位置:网站首页>ICTCLAS用的字Lucene4.9捆绑

ICTCLAS用的字Lucene4.9捆绑

2022-07-05 19:52:00 【全栈程序员站长】

大家好,又见面了,我是全栈君,今天给大家准备了Idea注册码。

它一直喜欢的搜索方向,虽然无法做到。但仍保持了狂热的份额。记得那个夏天、这间实验室、这一群人,一切都随风而逝。踏上新征程。我以前没有自己。面对七三分技术的商业环境,我选择了沉淀。社会是一个大机器,我们只是一个小螺丝钉。我们不能容忍半点扭扭捏捏。

于一个时代的产物。也终将被时代所抛弃。言归正题,在lucene增加自己定义的分词器,须要继承Analyzer类。实现createComponents方法。同一时候定义Tokenzier类用于记录所需建立索引的词以及其在文章的位置,这里继承SegmentingTokenizerBase类,须要实现setNextSentence与incrementWord两个方法。当中。setNextSentence设置下一个句子,在多域(Filed)分词索引时,setNextSentence就是设置下一个域的内容,能够通过new String(buffer, sentenceStart, sentenceEnd – sentenceStart)获取。而incrementWord方法则是记录每一个单词以及它的位置。须要注意一点就是要在前面加clearAttributes(),否则可能出现first position increment must be > 0…错误。以ICTCLAS分词器为例,以下贴上个人代码,希望能给大家带来帮助,不足之处,多多拍砖。

import java.io.IOException;

import java.io.Reader;

import java.io.StringReader;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.analysis.TokenStream;

import org.apache.lucene.analysis.Tokenizer;

import org.apache.lucene.analysis.core.LowerCaseFilter;

import org.apache.lucene.analysis.en.PorterStemFilter;

import org.apache.lucene.analysis.tokenattributes.CharTermAttribute;

import org.apache.lucene.util.Version;

/**

* 中科院分词器 继承Analyzer类。实现其 tokenStream方法

*

* @author ckm

*

*/

public class ICTCLASAnalyzer extends Analyzer {

/**

* 该方法主要是将文档转变成lucene建立索 引所需的TokenStream对象

*

* @param fieldName

* 文件名称

* @param reader

* 文件的输入流

*/

@Override

protected TokenStreamComponents createComponents(String fieldName, Reader reader) {

try {

System.out.println(fieldName);

final Tokenizer tokenizer = new ICTCLASTokenzier(reader);

TokenStream stream = new PorterStemFilter(tokenizer);

stream = new LowerCaseFilter(Version.LUCENE_4_9, stream);

stream = new PorterStemFilter(stream);

return new TokenStreamComponents(tokenizer,stream);

} catch (IOException e) {

// TODO Auto-generated catch block

e.printStackTrace();

}

return null;

}

public static void main(String[] args) throws Exception {

Analyzer analyzer = new ICTCLASAnalyzer();

String str = "黑客技术";

TokenStream ts = analyzer.tokenStream("field", new StringReader(str));

CharTermAttribute c = ts.addAttribute(CharTermAttribute.class);

ts.reset();

while (ts.incrementToken()) {

System.out.println(c.toString());

}

ts.end();

ts.close();

}

}import java.io.IOException;

import java.io.Reader;

import java.text.BreakIterator;

import java.util.Arrays;

import java.util.Iterator;

import java.util.List;

import java.util.Locale;

import org.apache.lucene.analysis.tokenattributes.CharTermAttribute;

import org.apache.lucene.analysis.tokenattributes.OffsetAttribute;

import org.apache.lucene.analysis.util.SegmentingTokenizerBase;

import org.apache.lucene.util.AttributeFactory;

/**

*

* 继承lucene的SegmentingTokenizerBase,重载其setNextSentence与

* incrementWord方 法,记录所需建立索引的词以及其在文章的位置

*

* @author ckm

*

*/

public class ICTCLASTokenzier extends SegmentingTokenizerBase {

private static final BreakIterator sentenceProto = BreakIterator.getSentenceInstance(Locale.ROOT);

private final CharTermAttribute termAttr= addAttribute(CharTermAttribute.class);// 记录所需建立索引的词

private final OffsetAttribute offAttr = addAttribute(OffsetAttribute.class);// 记录所需建立索引的词在文章中的位置

private ICTCLASDelegate ictclas;// 分词系统的托付对象

private Iterator<String> words;// 文章分词后形成的单词

private int offSet= 0;// 记录最后一个词元的结束位置

/**

* 构造函数

*

* @param segmented 分词后的结果

* @throws IOException

*/

protected ICTCLASTokenzier(Reader reader) throws IOException {

this(DEFAULT_TOKEN_ATTRIBUTE_FACTORY, reader);

}

protected ICTCLASTokenzier(AttributeFactory factory, Reader reader) throws IOException {

super(factory, reader,sentenceProto);

ictclas = ICTCLASDelegate.getDelegate();

}

@Override

protected void setNextSentence(int sentenceStart, int sentenceEnd) {

// TODO Auto-generated method stub

String sentence = new String(buffer, sentenceStart, sentenceEnd - sentenceStart);

String result=ictclas.process(sentence);

String[] array = result.split("\\s");

if(array!=null){

List<String> list = Arrays.asList(array);

words=list.iterator();

}

offSet= 0;

}

@Override

protected boolean incrementWord() {

// TODO Auto-generated method stub

if (words == null || !words.hasNext()) {

return false;

} else {

String t = words.next();

while(t.equals("")||StopWordFilter.filter(t)){ //这里主要是为了过滤空白字符以及停用词

//StopWordFilter为自己定义停用词过滤类

if (t.length() == 0)

offSet++;

else

offSet+= t.length();

t =words.next();

}

if (!t.equals("") && !StopWordFilter.filter(t)) {

clearAttributes();

termAttr.copyBuffer(t.toCharArray(), 0, t.length());

offAttr.setOffset(correctOffset(offSet), correctOffset(offSet=offSet+ t.length()));

return true;

}

return false;

}

}

/**

* 重置

*/

public void reset() throws IOException {

super.reset();

offSet= 0;

}

public static void main(String[] args) throws IOException {

String content = "宝剑锋从磨砺出,梅花香自苦寒来!"; String seg = ICTCLASDelegate.getDelegate().process(content); //ICTCLASTokenzier test = new ICTCLASTokenzier(seg); //while (test.incrementToken()); } }import java.io.File;

import java.nio.ByteBuffer;

import java.nio.CharBuffer;

import java.nio.charset.Charset;

import ICTCLAS.I3S.AC.ICTCLAS50;

/**

* 中科院分词系统代理类

*

* @author ckm

*

*/

public class ICTCLASDelegate {

private static final String userDict = "userDict.txt";// 用户词典

private final static Charset charset = Charset.forName("gb2312");// 默认的编码格式

private static String ictclasPath =System.getProperty("user.dir");

private static String dirConfigurate = "ICTCLASConf";// 配置文件所在文件夹名

private static String configurate = ictclasPath + File.separator+ dirConfigurate;// 配置文件所在文件夹的绝对路径

private static int wordLabel = 2;// 词性标注类型(北大二级标注集)

private static ICTCLAS50 ictclas;// 中科院分词系统的jni接口对象

private static ICTCLASDelegate instance = null;

private ICTCLASDelegate(){ }

/**

* 初始化ICTCLAS50对象

*

* @return ICTCLAS50对象初始化化是否成功

*/

public boolean init() {

ictclas = new ICTCLAS50();

boolean bool = ictclas.ICTCLAS_Init(configurate

.getBytes(charset));

if (bool == false) {

System.out.println("Init Fail!");

return false;

}

// 设置词性标注集(0 计算所二级标注集。1 计算所一级标注集,2 北大二级标注集,3 北大一级标注集)

ictclas.ICTCLAS_SetPOSmap(wordLabel);

importUserDictFile(configurate + File.separator + userDict);// 导入用户词典

ictclas.ICTCLAS_SaveTheUsrDic();// 保存用户字典

return true;

}

/**

* 将编码格式转换为分词系统识别的类型

*

* @param charset

* 编码格式

* @return 编码格式相应的数字

**/

public static int getECode(Charset charset) {

String name = charset.name();

if (name.equalsIgnoreCase("ascii"))

return 1;

if (name.equalsIgnoreCase("gb2312"))

return 2;

if (name.equalsIgnoreCase("gbk"))

return 2;

if (name.equalsIgnoreCase("utf8"))

return 3;

if (name.equalsIgnoreCase("utf-8"))

return 3;

if (name.equalsIgnoreCase("big5"))

return 4;

return 0;

}

/**

* 该方法的作用是导入用户字典

*

* @param path

* 用户词典的绝对路径

* @return 返回导入的词典的单词个数

*/

public int importUserDictFile(String path) {

System.out.println("导入用户词典");

return ictclas.ICTCLAS_ImportUserDictFile(

path.getBytes(charset), getECode(charset));

}

/**

* 该方法的作用是对字符串进行分词

*

* @param source

* 所要分词的源数据

* @return 分词后的结果

*/

public String process(String source) {

return process(source.getBytes(charset));

}

public String process(char[] chars){

CharBuffer cb = CharBuffer.allocate (chars.length);

cb.put (chars);

cb.flip ();

ByteBuffer bb = charset.encode (cb);

return process(bb.array());

}

public String process(byte[] bytes){

if(bytes==null||bytes.length<1)

return null;

byte nativeBytes[] = ictclas.ICTCLAS_ParagraphProcess(bytes, 2, 0);

String nativeStr = new String(nativeBytes, 0,

nativeBytes.length-1, charset);

return nativeStr;

}

/**

* 获取分词系统代理对象

*

* @return 分词系统代理对象

*/

public static ICTCLASDelegate getDelegate() {

if (instance == null) {

synchronized (ICTCLASDelegate.class) {

instance = new ICTCLASDelegate();

instance.init();

}

}

return instance;

}

/**

* 退出分词系统

*

* @return 返回操作是否成功

*/

public boolean exit() {

return ictclas.ICTCLAS_Exit();

}

public static void main(String[] args) {

String str="结婚的和尚未结婚的";

ICTCLASDelegate id = ICTCLASDelegate.getDelegate();

String result = id.process(str.toCharArray());

System.out.println(result.replaceAll(" ", "-"));

}

}import java.util.Iterator;

import java.util.Set;

import java.util.regex.Matcher;

import java.util.regex.Pattern;

/**

* 停用词过滤器

*

* @author ckm

*

*/

public class StopWordFilter {

private static Set<String> chineseStopWords = null;// 中文停用词集

private static Set<String> englishStopWords = null;// 英文停用词集

static {

init();

}

/**

* 初始化中英文停用词集

*/

public static void init() {

LoadStopWords lsw = new LoadStopWords();

chineseStopWords = lsw.getChineseStopWords();

englishStopWords = lsw.getEnglishStopWords();

}

/**

* 推断keyword的类型以及推断其是否为停用词 注意:临时仅仅考虑中文,英文。中英混合, 中数混合,英数混合这五种类型。当中中英 混合,

* 中数混合,英数混合还没特定的停用 词库或语法规则对其进行判别

*

* @param word

* keyword

* @return true表示是停用词

*/

public static boolean filter(String word) {

Pattern chinese = Pattern.compile("^[\u4e00-\u9fa5]+$");// 中文匹配

Matcher m1 = chinese.matcher(word);

if (m1.find())

return chineseFilter(word);

Pattern english = Pattern.compile("^[A-Za-z]+$");// 英文匹配

Matcher m2 = english.matcher(word);

if (m2.find())

return englishFilter(word);

Pattern chineseDigit = Pattern.compile("^[\u4e00-\u9fa50-9]+$");// 中数匹配

Matcher m3 = chineseDigit.matcher(word);

if (m3.find())

return chineseDigitFilter(word);

Pattern englishDigit = Pattern.compile("^[A-Za-z0-9]+$");// 英数匹配

Matcher m4 = englishDigit.matcher(word);

if (m4.find())

return englishDigitFilter(word);

Pattern englishChinese = Pattern.compile("^[A-Za-z\u4e00-\u9fa5]+$");// 中英匹配,这个必须在中文匹配与英文匹配之后

Matcher m5 = englishChinese.matcher(word);

if (m5.find())

return englishChineseFilter(word);

return true;

}

/**

* 推断keyword是否为中文停用词

*

* @param word

* keyword

* @return true表示是停用词

*/

public static boolean chineseFilter(String word) {

// System.out.println("中文停用词推断");

if (chineseStopWords == null || chineseStopWords.size() == 0)

return false;

Iterator<String> iterator = chineseStopWords.iterator();

while (iterator.hasNext()) {

if (iterator.next().equals(word))

return true;

}

return false;

}

/**

* 推断keyword是否为英文停用词

*

* @param word

* keyword

* @return true表示是停用词

*/

public static boolean englishFilter(String word) {

// System.out.println("英文停用词推断");

if (word.length() <= 2)

return true;

if (englishStopWords == null || englishStopWords.size() == 0)

return false;

Iterator<String> iterator = englishStopWords.iterator();

while (iterator.hasNext()) {

if (iterator.next().equals(word))

return true;

}

return false;

}

/**

* 推断keyword是否为英数停用词

*

* @param word

* keyword

* @return true表示是停用词

*/

public static boolean englishDigitFilter(String word) {

return false;

}

/**

* 推断keyword是否为中数停用词

*

* @param word

* keyword

* @return true表示是停用词

*/

public static boolean chineseDigitFilter(String word) {

return false;

}

/**

* 推断keyword是否为英中停用词

*

* @param word

* keyword

* @return true表示是停用词

*/

public static boolean englishChineseFilter(String word) {

return false;

}

public static void main(String[] args) {

/*

* Iterator<String> iterator=

* StopWordFilter.chineseStopWords.iterator(); int n=0;

* while(iterator.hasNext()){ System.out.println(iterator.next()); n++;

* } System.out.println("总单词量:"+n);

*/

boolean bool = StopWordFilter.filter("宝剑");

System.out.println(bool);

}

}import java.io.BufferedReader;

import java.io.IOException;

import java.io.InputStream;

import java.io.InputStreamReader;

import java.util.HashSet;

import java.util.Iterator;

import java.util.Set;

/**

* 载入停用词文件

*

* @author ckm

*

*/

public class LoadStopWords {

private Set<String> chineseStopWords = null;// 中文停用词集

private Set<String> englishStopWords = null;// 英文停用词集

/**

* 获取中文停用词集

*

* @return 中文停用词集Set<String>类型

*/

public Set<String> getChineseStopWords() {

return chineseStopWords;

}

/**

* 设置中文停用词集

*

* @param chineseStopWords

* 中文停用词集Set<String>类型

*/

public void setChineseStopWords(Set<String> chineseStopWords) {

this.chineseStopWords = chineseStopWords;

}

/**

* 获取英文停用词集

*

* @return 英文停用词集Set<String>类型

*/

public Set<String> getEnglishStopWords() {

return englishStopWords;

}

/**

* 设置英文停用词集

*

* @param englishStopWords

* 英文停用词集Set<String>类型

*/

public void setEnglishStopWords(Set<String> englishStopWords) {

this.englishStopWords = englishStopWords;

}

/**

* 载入停用词库

*/

public LoadStopWords() {

chineseStopWords = loadStopWords(this.getClass().getResourceAsStream(

"ChineseStopWords.txt"));

englishStopWords = loadStopWords(this.getClass().getResourceAsStream(

"EnglishStopWords.txt"));

}

/**

* 从停用词文件里载入停用词, 停用词文件是普通GBK编码的文本文件, 每一行 是一个停用词。凝视利用“//”, 停用词中包含中文标点符号,

* 中文空格, 以及使用率太高而对索引意义不大的词。

*

* @param input

* 停用词文件流

* @return 停用词组成的HashSet

*/

public static Set<String> loadStopWords(InputStream input) {

String line;

Set<String> stopWords = new HashSet<String>();

try {

BufferedReader br = new BufferedReader(new InputStreamReader(input,

"GBK"));

while ((line = br.readLine()) != null) {

if (line.indexOf("//") != -1) {

line = line.substring(0, line.indexOf("//"));

}

line = line.trim();

if (line.length() != 0)

stopWords.add(line.toLowerCase());

}

br.close();

} catch (IOException e) {

System.err.println("不能打开停用词库!。");

}

return stopWords;

}

public static void main(String[] args) {

LoadStopWords lsw = new LoadStopWords();

Iterator<String> iterator = lsw.getEnglishStopWords().iterator();

int n = 0;

while (iterator.hasNext()) {

System.out.println(iterator.next());

n++;

}

System.out.println("总单词量:" + n);

}

}这里须要ChineseStopWords.txt 与EnglishStopWords.txt中国和英国都存储停用词,在这里,我们不知道如何上传,有ICTCLAS基本的文件。

下载完整的项目:http://download.csdn.net/detail/km1218/7754907

发布者:全栈程序员栈长,转载请注明出处:https://javaforall.cn/117733.html原文链接:https://javaforall.cn

边栏推荐

- Postman核心功能解析-参数化和测试报告

- C - sequential structure

- Bitcoinwin (BCW)受邀参加Hanoi Traders Fair 2022

- Force buckle 1200 Minimum absolute difference

- 【obs】libobs-winrt :CreateDispatcherQueueController

- id选择器和类选择器的区别

- CADD课程学习(7)-- 模拟靶点和小分子相互作用 (半柔性对接 AutoDock)

- [hard core dry goods] which company is better in data analysis? Choose pandas or SQL

- Bitcoinwin (BCW) was invited to attend Hanoi traders fair 2022

- [AI framework basic technology] automatic derivation mechanism (autograd)

猜你喜欢

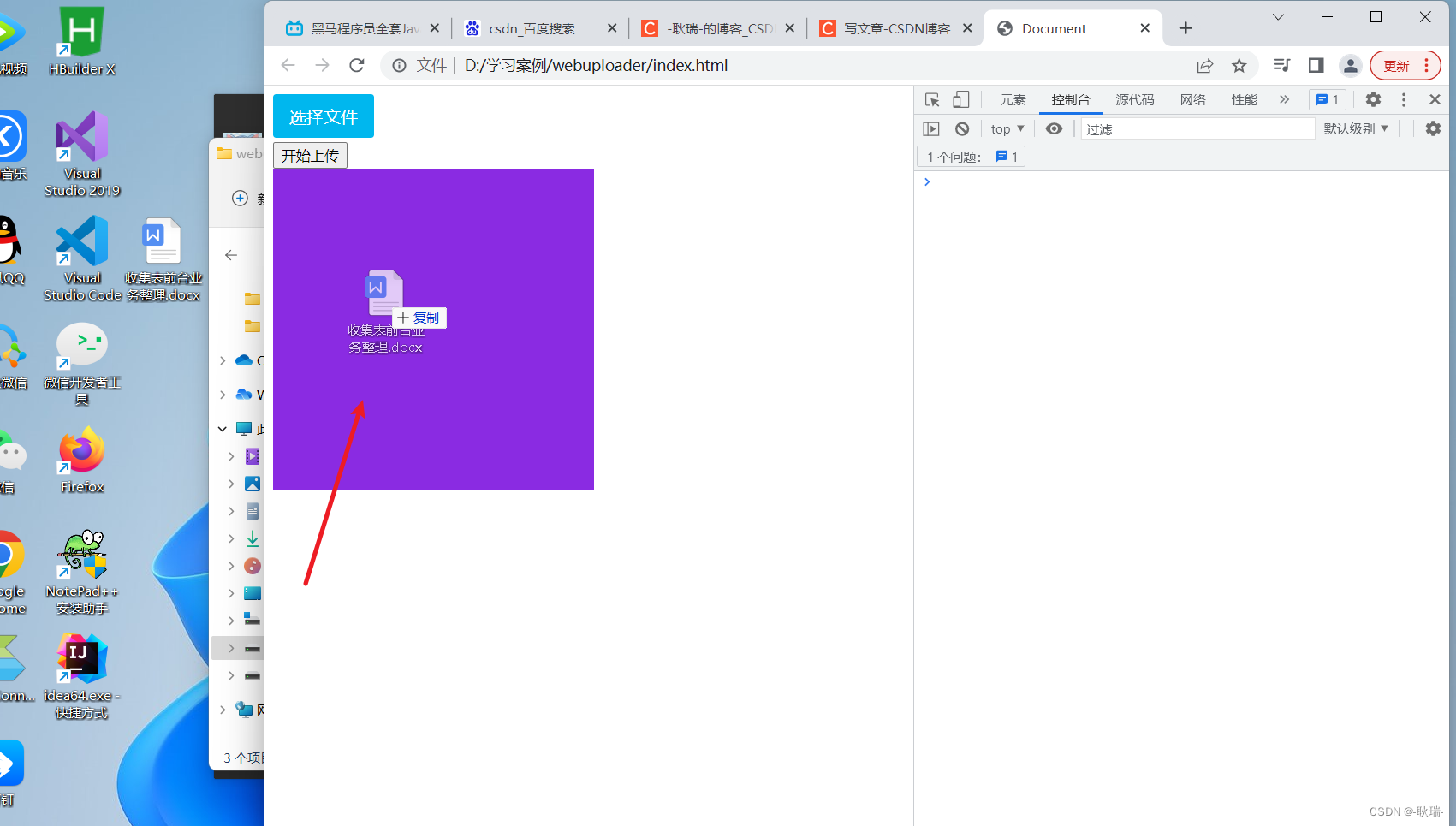

Webuploader file upload drag upload progress monitoring type control upload result monitoring control

建立自己的网站(16)

![[untitled]](/img/51/c89d35c855e299b02137d676790eb6.png)

[untitled]

Worthy of being a boss, byte Daniel spent eight months on another masterpiece

UWB ultra wideband positioning technology, real-time centimeter level high-precision positioning application, ultra wideband transmission technology

![[C language] string function and Simulation Implementation strlen & strcpy & strcat & StrCmp](/img/32/738df44b6005fd84b4a9037464e61e.jpg)

[C language] string function and Simulation Implementation strlen & strcpy & strcat & StrCmp

Bitcoinwin (BCW) was invited to attend Hanoi traders fair 2022

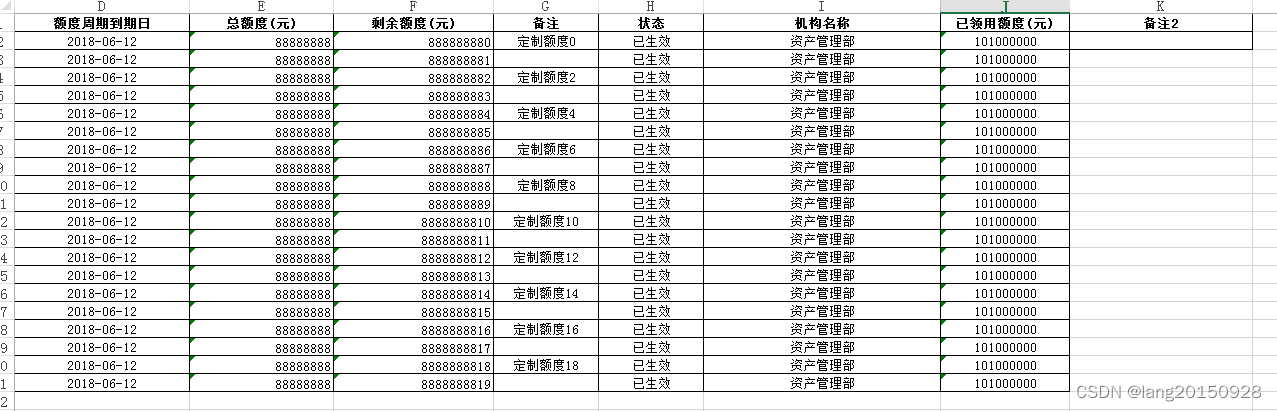

使用easyexcel模板导出的两个坑(Map空数据列错乱和不支持嵌套对象)

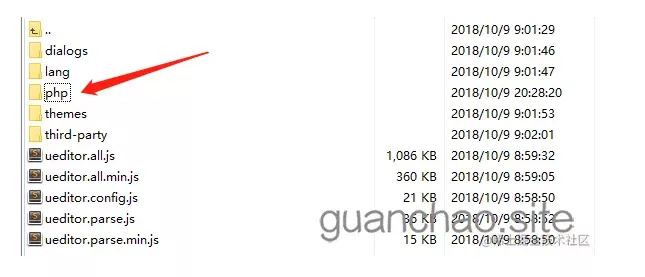

PHP uses ueditor to upload pictures and add watermarks

After 95, Alibaba P7 published the payroll: it's really fragrant to make up this

随机推荐

[untitled]

信息/数据

Zhongang Mining: analysis of the current market supply situation of the global fluorite industry in 2022

Which securities company is better and which platform is safer for mobile account opening

【C语言】字符串函数及模拟实现strlen&&strcpy&&strcat&&strcmp

Win10 x64环境下基于VS2017和cmake-gui配置使用zxing以及opencv,并实现data metrix码的简单检测

UWB ultra wideband positioning technology, real-time centimeter level high-precision positioning application, ultra wideband transmission technology

What is the core value of testing?

PHP uses ueditor to upload pictures and add watermarks

Fundamentals of shell programming (Chapter 9: loop)

淺淺的談一下ThreadLocalInsecureRandom

SecureRandom那些事|真伪随机数

Parler de threadlocal insecurerandom

Reptile exercises (II)

acm入门day1

【FAQ】华为帐号服务报错 907135701的常见原因总结和解决方法

【合集- 行业解决方案】如何搭建高性能的数据加速与数据编排平台

How to apply smart contracts more wisely in 2022?

通配符选择器

太牛了,看这篇足矣了