当前位置:网站首页>Mongodb meets spark (for integration)

Mongodb meets spark (for integration)

2022-07-07 13:12:00 【cui_ yonghua】

The basic chapter ( Can solve the problem of 80% The problem of ):

MongoDB data type 、 Key concepts and shell Commonly used instructions

MongoDB Various additions to documents 、 to update 、 Delete operation summary

Advanced :

Other :

One . And HDFS comparison ,MongoDB The advantages of

1、 In terms of storage mode ,HDFS In documents , The size of each file is 64M~128M, and mongo The performance is more fine grained ;

2、MongoDB Support HDFS There is no index concept , So it is faster in reading speed ;

3、MongoDB It is easier to modify data ;

4、HDFS The response level is minutes , and MongoDB The response category is milliseconds ;

5、 You can use MongoDB Powerful Aggregate Function for data filtering or preprocessing ;

6、 If you use MongoDB, There is no need to be like the traditional mode , To Redis After memory database calculation , Then save it to HDFS On .

Two . Hierarchical architecture of big data

MongoDB Can replace HDFS, As the core part of big data platform , It can be layered as follows :

The first 1 layer :MongoDB perhaps HDFS;

The first 2 layer : Resource management Such as YARN、Mesos、K8S;

The first 3 layer : Calculation engine Such as MapReduce、Spark;

The first 4 layer : Program interface Such as Pig、Hive、Spark SQL、Spark Streaming、Data Frame etc.

Reference resources :

mongo-python-driver: https://github.com/mongodb/mongo-python-driver/

Official documents :https://www.mongodb.com/docs/spark-connector/current/

3、 ... and . The source code is introduced

mongo-spark/examples/src/test/python/introduction.py

# -*- coding: UTF-8 -*-

#

# Copyright 2016 MongoDB, Inc.

#

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# To run this example use:

# ./bin/spark-submit --master "local[4]" \

# --conf "spark.mongodb.input.uri=mongodb://127.0.0.1/test.coll?readPreference=primaryPreferred" \

# --conf "spark.mongodb.output.uri=mongodb://127.0.0.1/test.coll" \

# --packages org.mongodb.spark:mongo-spark-connector_2.11:2.0.0 \

# introduction.py

from pyspark.sql import SparkSession

if __name__ == "__main__":

spark = SparkSession.builder.appName("Python Spark SQL basic example").getOrCreate()

logger = spark._jvm.org.apache.log4j

logger.LogManager.getRootLogger().setLevel(logger.Level.FATAL)

# Save some data

characters = spark.createDataFrame([("Bilbo Baggins", 50), ("Gandalf", 1000), ("Thorin", 195), ("Balin", 178), ("Kili", 77), ("Dwalin", 169), ("Oin", 167), ("Gloin", 158), ("Fili", 82), ("Bombur", None)], ["name", "age"])

characters.write.format("com.mongodb.spark.sql").mode("overwrite").save()

# print the schema

print("Schema:")

characters.printSchema()

# read from MongoDB collection

df = spark.read.format("com.mongodb.spark.sql").load()

# SQL

df.registerTempTable("temp")

centenarians = spark.sql("SELECT name, age FROM temp WHERE age >= 100")

print("Centenarians:")

centenarians.show()

边栏推荐

- ESP32系列专栏

- JS缓动动画原理教学(超细节)

- Pay close attention to the work of safety production and make every effort to ensure the safety of people's lives and property

- 关于 appium 如何关闭 app (已解决)

- MongoDB 遇见 spark(进行整合)

- [learning notes] zkw segment tree

- Smart cloud health listed: with a market value of HK $15billion, SIG Jingwei and Jingxin fund are shareholders

- 测试下摘要

- About how appium closes apps (resolved)

- 环境配置篇

猜你喜欢

centso7 openssl 报错Verify return code: 20 (unable to get local issuer certificate)

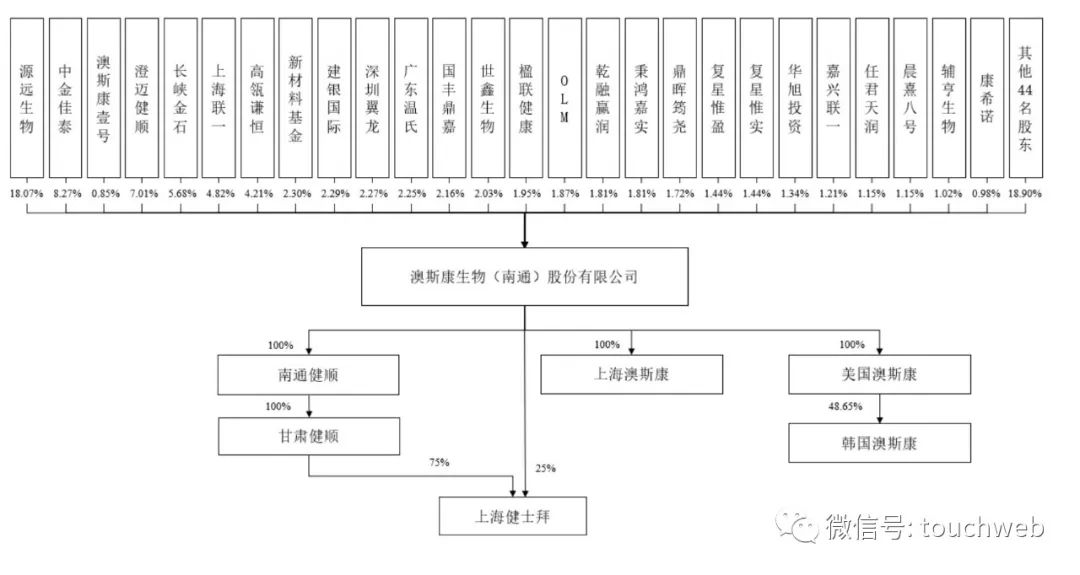

Aosikang biological sprint scientific innovation board of Hillhouse Investment: annual revenue of 450million yuan, lost cooperation with kangxinuo

日本政企员工喝醉丢失46万信息U盘,公开道歉又透露密码规则

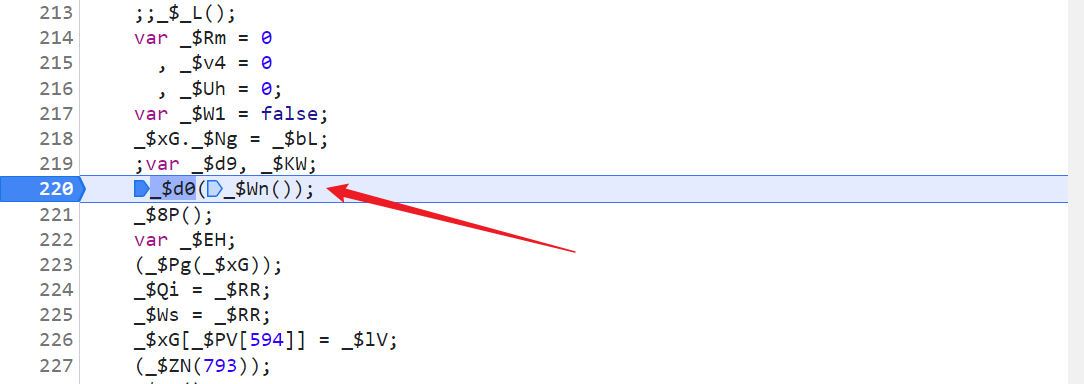

人均瑞数系列,瑞数 4 代 JS 逆向分析

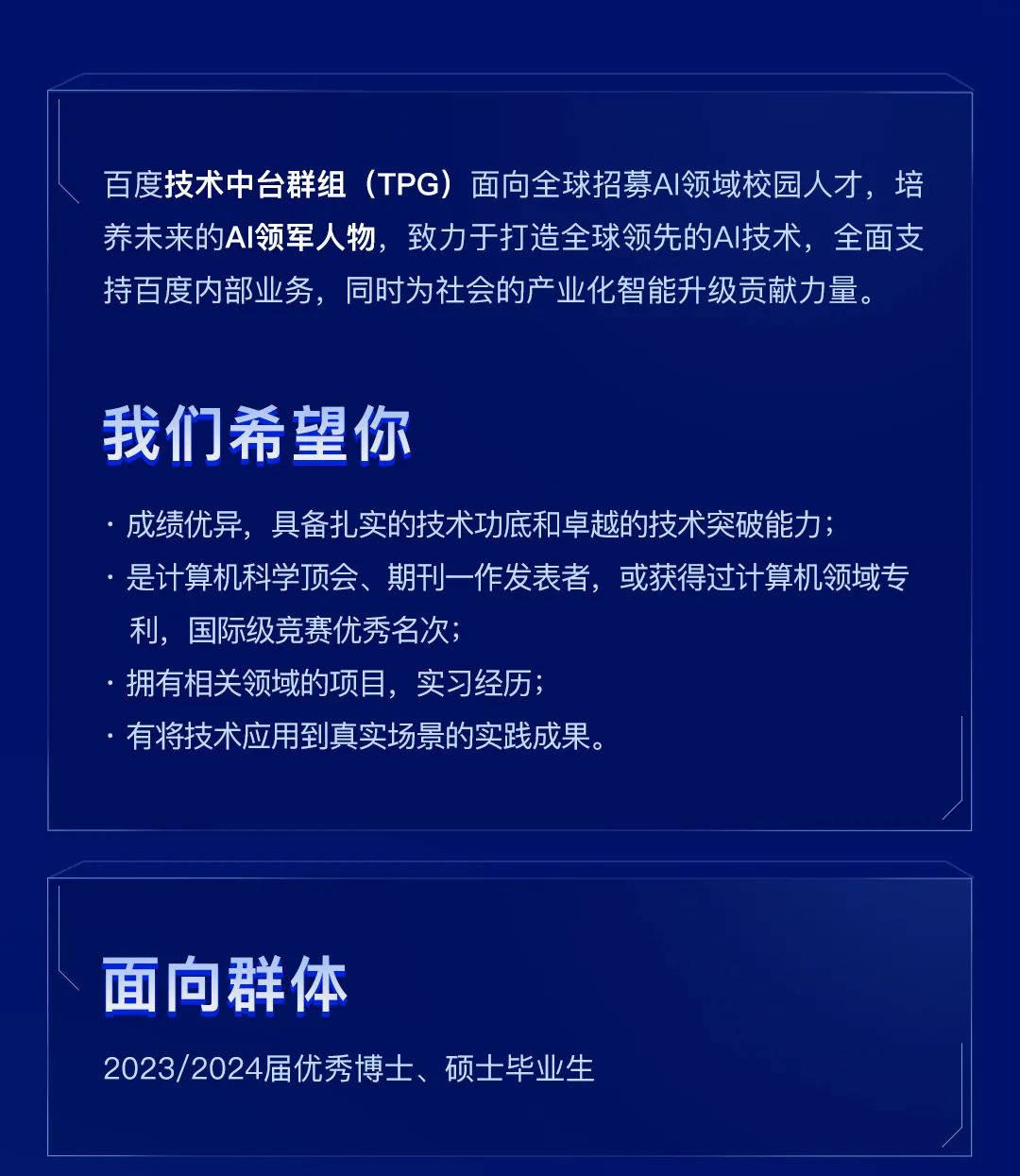

TPG x AIDU|AI领军人才招募计划进行中!

LIS 最长上升子序列问题(动态规划、贪心+二分)

COSCon'22 社区召集令来啦!Open the World,邀请所有社区一起拥抱开源,打开新世界~

Cinnamon 任务栏网速

【无标题】

ISPRS2021/遥感影像云检测:一种地理信息驱动的方法和一种新的大规模遥感云/雪检测数据集

随机推荐

事务的七种传播行为

JS判断一个对象是否为空

Pcap learning notes II: pcap4j source code Notes

共创软硬件协同生态:Graphcore IPU与百度飞桨的“联合提交”亮相MLPerf

国泰君安证券开户怎么开的?开户安全吗?

基于鲲鹏原生安全,打造安全可信的计算平台

About how appium closes apps (resolved)

. Net ultimate productivity of efcore sub table sub database fully automated migration codefirst

File operation command

Awk of three swordsmen in text processing

Go语言学习笔记-结构体(Struct)

初学XML

PHP calls the pure IP database to return the specific address

服务器到服务器 (S2S) 事件 (Adjust)

JS中为什么基础数据类型可以调用方法

Smart cloud health listed: with a market value of HK $15billion, SIG Jingwei and Jingxin fund are shareholders

shell 批量文件名(不含扩展名)小写改大写

PAcP learning note 3: pcap method description

飞桨EasyDL实操范例:工业零件划痕自动识别

Introduce six open source protocols in detail (instructions for programmers)