【Wing Loss】《Wing Loss for Robust Facial Landmark Localisation with Convolutional Neural Networks》

2022-07-02 07:45:00 【bryant_ meng】

CVPR-2018

1 Background and Motivation

Facial landmarks, such as the nose tip and the eye centres, provide rich geometric information for other face analysis tasks such as face recognition and 3D face reconstruction

The development of deep learning has also greatly improved facial landmark localisation in unconstrained face scenes

One crucial aspect of deep learning is to define a loss function leading to better-learnt representation from underlying data.

The authors analyse how different loss functions behave on the facial landmark detection task and propose a new loss, Wing loss

2 Related Work

- Network Architectures: direct coordinate regression, or heatmap prediction

- Dealing with Pose Variations: multiview models, 3D face models, multi-task learning (the tasks reinforce each other)

- Cascaded Networks

3 Advantages / Contributions

- Analyses the strengths and weaknesses of different regression losses and proposes Wing loss for facial landmark localisation

- Proposes pose-based data balancing

- Proposes a new network structure for facial landmark detection

4 Method

1) Loss function

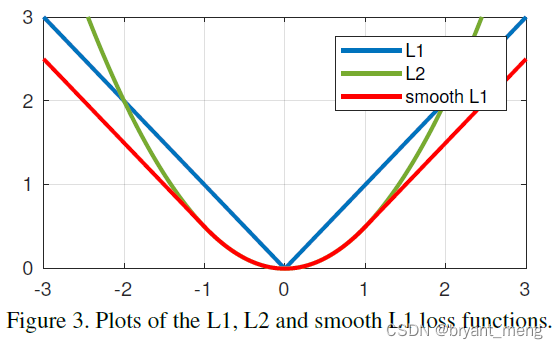

The loss functions commonly used in regression tasks are as follows

L1 / L2 / smooth L1

L2 loss is sensitive to outliers

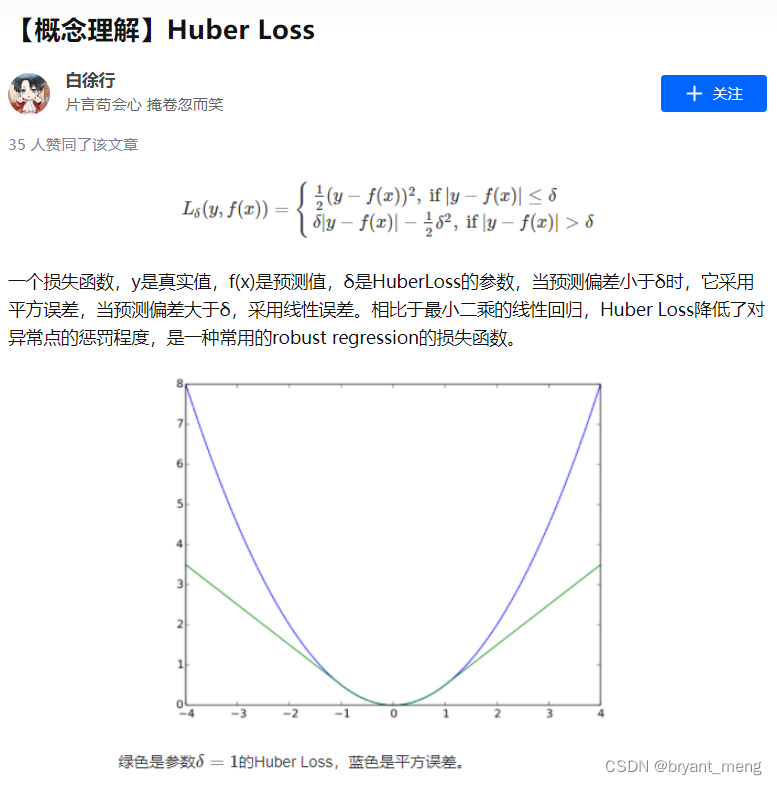

Smooth L1 loss combines L1 and L2; it is also a special case of the Huber loss
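The three losses above can be written out as a minimal sketch. The formulas in this post appear only as images, so the `0.5` scaling of L2 and the switch point at `|x| = 1` for smooth L1 are the usual conventions and are assumed here; the function names are illustrative.

```python
def l1_loss(x):
    """Absolute error: constant gradient magnitude of 1."""
    return abs(x)


def l2_loss(x):
    """Squared error (with the common 1/2 factor): grows quadratically."""
    return 0.5 * x * x


def smooth_l1_loss(x):
    """Quadratic for |x| < 1, linear beyond that (assumed threshold of 1)."""
    ax = abs(x)
    if ax < 1.0:
        return 0.5 * ax * ax
    return ax - 0.5


# Compare how each loss weighs a small error and an outlier.
for err in (0.5, 10.0):
    print(err, l1_loss(err), l2_loss(err), smooth_l1_loss(err))
```

With these definitions, an outlier with error 10 costs 50 under L2 but only 9.5 under smooth L1, which is why L2 is the most sensitive of the three to outliers.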

From the post "[Face key point detection] Wing loss: an interpretation of the paper"

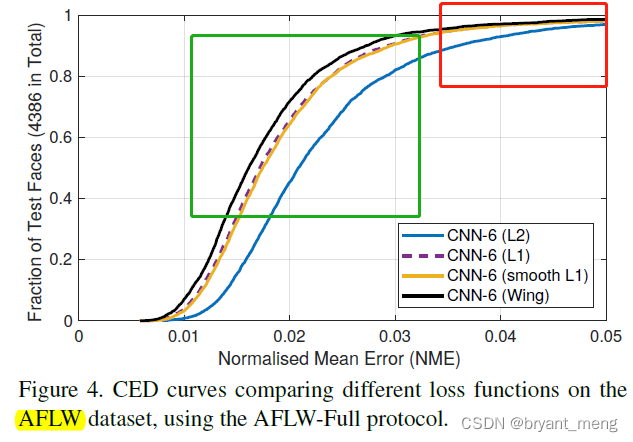

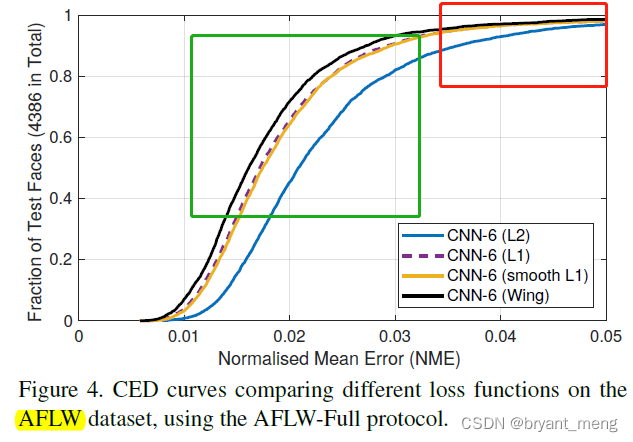

Analysing the cumulative distribution curve of the NME (Normalised Mean Error) on the AFLW dataset shows the following

The loss functions analysed in the last section (L1 / L2 / smooth L1) perform well for large errors (roughly the red-boxed region: the errors are large, but relatively few samples fall there)

More attention should be paid to small and medium range errors (roughly the green-boxed region: the NME is small to medium and many samples fall there, which is why the curve is steep)

The authors analyse this further

For an error of $x$:

L1: the magnitude of the loss gradient is 1 and the optimal step size is $|x|$; the larger the error, the larger the step

L2: the magnitude of the loss gradient is $|x|$ and the optimal step size is 1; the larger the error, the larger the gradient

In both cases the update towards the solution will be dominated by larger errors

That is to say, it is hard to correct relatively small displacements

The authors introduce a $\ln x$ loss to alleviate this drawback of L1 / L2 loss being dominated by large errors

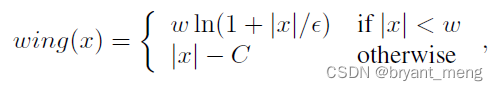

For $\ln x$ the gradient magnitude is $\frac{1}{x}$ and the optimal step size is $x^2$: when the error is small, the gradient is large and the step is small; when the error is large, the gradient is small and the step is large

Across both large-error and small-error cases, gradient and step size are well balanced
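The gradient and step-size figures quoted above can be checked numerically. The sketch below assumes that "optimal step size" means the step that cancels the error in a single update, i.e. `step * gradient = |x|`; the function name and the loss labels are illustrative only.

```python
def grad_and_step(loss_name, x):
    """Return (gradient magnitude, step size cancelling error x in one update).

    x must be non-zero. The step is defined so that step * gradient == |x|.
    """
    ax = abs(x)
    if loss_name == "l1":
        grad = 1.0          # d|x|/dx has magnitude 1
    elif loss_name == "l2":
        grad = ax           # d(x^2 / 2)/dx has magnitude |x|
    elif loss_name == "ln":
        grad = 1.0 / ax     # d(ln x)/dx has magnitude 1/|x|
    else:
        raise ValueError(f"unknown loss: {loss_name}")
    return grad, ax / grad


# Small, medium and large errors under each loss.
for err in (0.1, 1.0, 10.0):
    for name in ("l1", "l2", "ln"):
        grad, step = grad_and_step(name, err)
        print(f"x={err:<5} {name:<3} grad={grad:<8.3f} step={step:.3f}")
```

Only the `ln` row lets small errors keep a large gradient while large errors get a small one, which is the balance described above.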

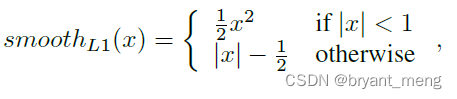

Since the initial errors in facial landmark localisation are large, the authors use L1 loss for large errors to speed up convergence (L2 is sensitive to outliers). The overall design is as follows

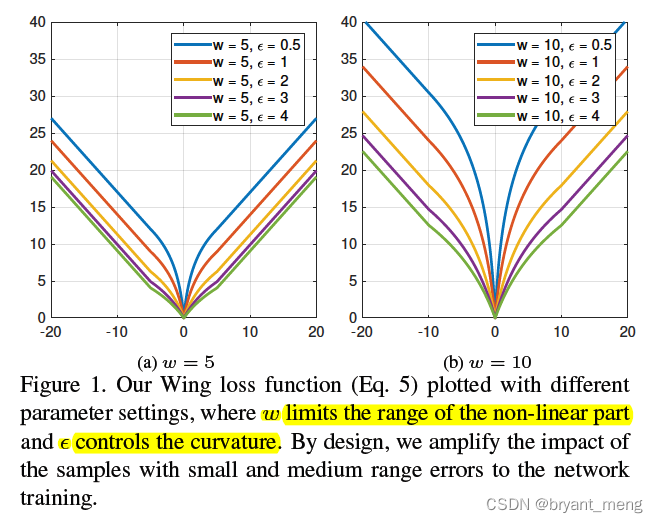

$C = w - w\ln(1 + |w|/\varepsilon)$ is a constant that joins the two pieces of the function, making it continuous at $|x| = w$

$\varepsilon$ should not be set too small: from the gradient magnitude $\frac{w}{\varepsilon + |x|}$ it can be seen that, when $\varepsilon$ is very small, the gradient may explode for small errors
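A minimal scalar sketch of the piecewise design described above: $w\ln(1 + |x|/\varepsilon)$ for $|x| < w$ and $|x| - C$ otherwise. The defaults `w=10.0` and `eps=2.0` are placeholder values chosen for illustration, not settings taken from this post, and the function names are hypothetical.

```python
import math


def wing_loss(x, w=10.0, eps=2.0):
    """Wing loss for a single error value x (w and eps are illustrative)."""
    ax = abs(x)
    # Constant that makes the log part and the linear part meet at |x| = w.
    c = w - w * math.log(1.0 + w / eps)
    if ax < w:
        return w * math.log(1.0 + ax / eps)
    return ax - c


def wing_grad_magnitude(x, w=10.0, eps=2.0):
    """Gradient magnitude: w / (eps + |x|) inside the wing, 1 outside."""
    ax = abs(x)
    return w / (eps + ax) if ax < w else 1.0


# A tiny eps makes the gradient near x = 0 very large.
print(wing_grad_magnitude(0.0, eps=2.0))
print(wing_grad_magnitude(0.0, eps=0.01))
```

In a training loop the loss would be summed over all landmark coordinates; the scalar form is kept here only to make the two branches and the role of `eps` easy to inspect.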

2)Pose-based data balancing

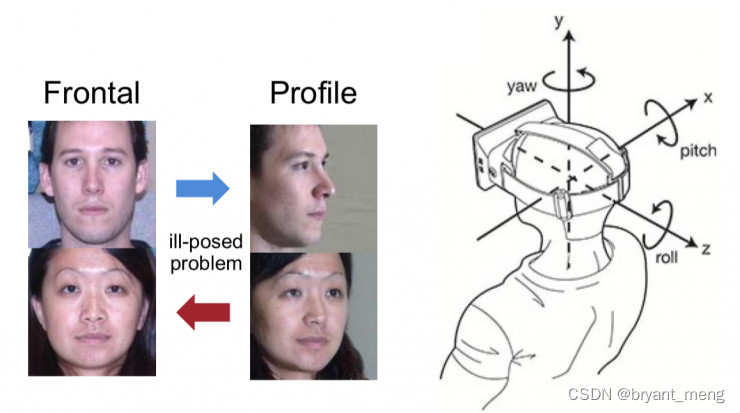

This is introduced to handle extreme pose variations

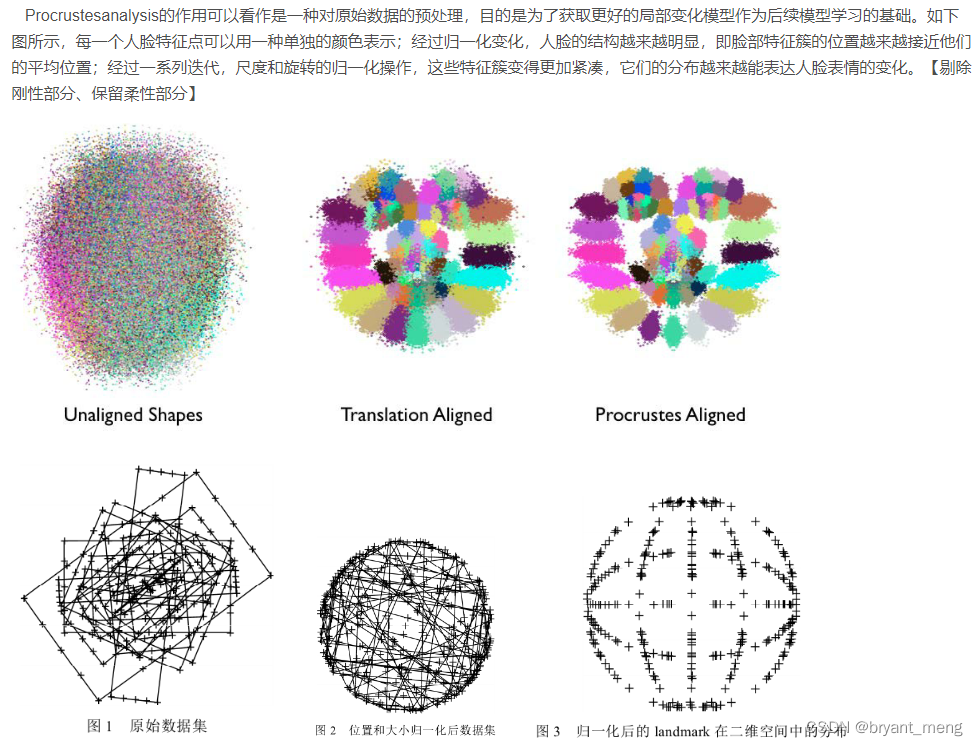

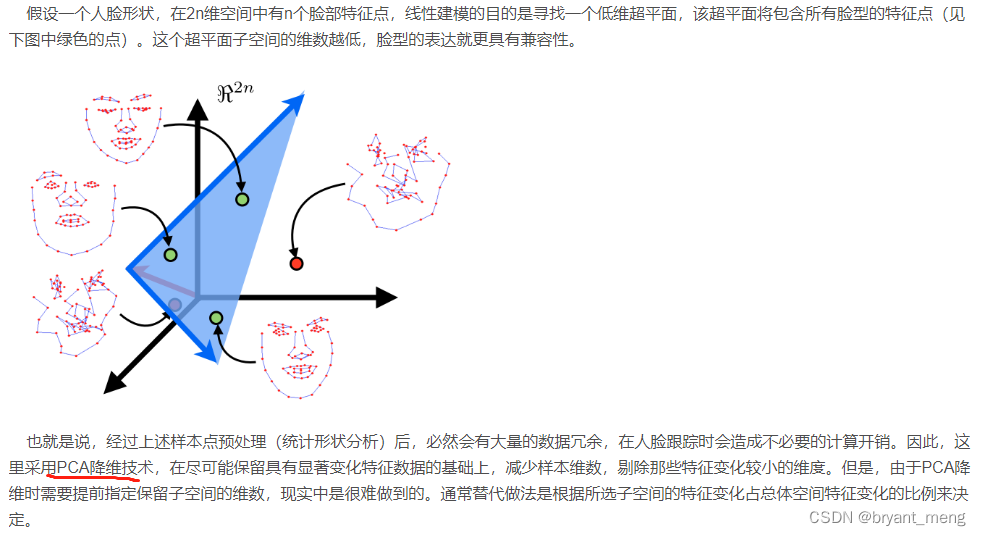

Procrustes Analysis + PCA are used to analyse the shape distribution of the faces in the dataset

The principle is covered in 《Master Opencv… Reading notes 》 Non rigid face tracking II

The distribution of the projection coefficients of the training samples is represented by a histogram with K bins; the result is as follows

Horizontal axis: face angle; vertical axis: number of samples

The pose-based data balancing strategy re-samples (duplicates) the face samples that fall into sparsely populated bins, so that the distribution becomes more balanced
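The re-sampling step can be sketched as follows, assuming the per-sample projection coefficients have already been computed by Procrustes Analysis + PCA. The function name, the equal-width binning and the "duplicate up to the fullest bin" target are assumptions made for this illustration.

```python
import random
from collections import defaultdict


def balance_by_pose(coeffs, num_bins=5, seed=0):
    """Return sample indices with sparse pose bins oversampled by duplication.

    coeffs: one projection coefficient per training sample (assumed given).
    """
    rng = random.Random(seed)
    lo, hi = min(coeffs), max(coeffs)
    width = (hi - lo) / num_bins
    if width == 0.0:
        width = 1.0  # all samples share one pose value; everything lands in bin 0

    # Histogram with K equal-width bins over the coefficient range.
    bins = defaultdict(list)
    for idx, c in enumerate(coeffs):
        b = min(int((c - lo) / width), num_bins - 1)
        bins[b].append(idx)

    # Duplicate samples in low-occupancy bins until they match the fullest bin.
    target = max(len(members) for members in bins.values())
    balanced = []
    for b in sorted(bins):
        members = bins[b]
        balanced.extend(members)
        balanced.extend(rng.choice(members) for _ in range(target - len(members)))
    return balanced


coeffs = [-0.9, -0.1, 0.0, 0.05, 0.1, 0.12, 0.95]  # mostly near-frontal faces
indices = balance_by_pose(coeffs, num_bins=3)
print(len(indices))
```

The returned indices can be used to build the training set for one epoch; every original sample is kept, and only the rare poses are repeated.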

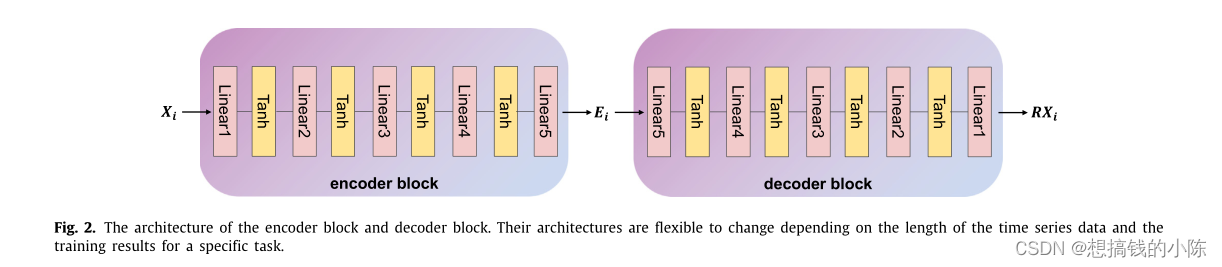

3) Network structure

With the focus on the loss and the sampling strategy, the network design is relatively simple

Pose-based data balancing alleviates the out-of-plane head rotation problem

Other factors also affect the final facial landmark localisation, for example

in-plane head rotations and inaccurate bounding boxes output from a poor face detector etc.

So the authors cascade two networks

The first stage is CNN6: input 64x64x3, output landmarks + refinement offsets for the face box? (refine the input image for the second network by removing the in-plane head rotation and correcting the bounding box; the paper gives little detail on this)

The second stage is CNN7: input 128x128x3, output landmarks
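Because the exact output of CNN6 is unclear, the following is only one plausible way the first-stage landmarks could be used to remove in-plane rotation and correct the bounding box. Estimating roll from the two eye centres, rotating about the landmark centroid and the `margin` parameter are all assumptions of this sketch, not details confirmed by the paper or this post.

```python
import math


def refine_from_coarse(landmarks, left_eye_idx, right_eye_idx, margin=0.1):
    """Return (roll in degrees, de-rotated landmarks, corrected box).

    landmarks: list of (x, y) predicted by the first stage (hypothetical input).
    """
    (lx, ly), (rx, ry) = landmarks[left_eye_idx], landmarks[right_eye_idx]
    roll = math.atan2(ry - ly, rx - lx)  # in-plane rotation of the eye line

    # Rotate every point by -roll around the landmark centroid.
    cx = sum(x for x, _ in landmarks) / len(landmarks)
    cy = sum(y for _, y in landmarks) / len(landmarks)
    cos_r, sin_r = math.cos(-roll), math.sin(-roll)
    upright = [
        (cx + (x - cx) * cos_r - (y - cy) * sin_r,
         cy + (x - cx) * sin_r + (y - cy) * cos_r)
        for x, y in landmarks
    ]

    # Tight box around the upright landmarks, enlarged by a relative margin.
    xs = [x for x, _ in upright]
    ys = [y for _, y in upright]
    bw, bh = max(xs) - min(xs), max(ys) - min(ys)
    box = (min(xs) - margin * bw, min(ys) - margin * bh,
           max(xs) + margin * bw, max(ys) + margin * bh)
    return math.degrees(roll), upright, box


coarse = [(0.0, 0.0), (2.0, 2.0), (1.0, 3.0)]  # eyes at index 0 and 1
roll_deg, upright, box = refine_from_coarse(coarse, 0, 1)
print(roll_deg, box)
```

The second-stage input would then be the image rotated by the same angle and cropped to `box`, resized to the second network's input resolution.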

5 Experiments

5.1 Datasets and Metrics

AFLW: 19 landmarks; evaluated under the AFLW protocol, with NME normalised by the face box width

300W: 68 landmarks; NME normalised by the inter-pupil distance
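For reference, NME for one face can be computed as the mean point-to-point Euclidean distance divided by a normalising length. This is a minimal sketch with an illustrative function name; `norm` is the face box width for AFLW or the inter-pupil distance for 300W, as listed above.

```python
import math


def nme(pred, gt, norm):
    """Normalised Mean Error for one face.

    pred, gt: lists of (x, y) landmarks in the same order.
    norm: normalising length (face box width or inter-pupil distance).
    """
    if len(pred) != len(gt) or not gt:
        raise ValueError("pred and gt must be non-empty and of equal length")
    total = sum(math.hypot(px - gx, py - gy)
                for (px, py), (gx, gy) in zip(pred, gt))
    return total / (len(gt) * norm)


pred = [(0.0, 0.0), (3.0, 4.0)]
gt = [(0.0, 0.0), (0.0, 0.0)]
print(nme(pred, gt, norm=10.0))  # prints 0.25
```

The dataset-level figure is the average of this value over all test faces.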

5.2 Experiments

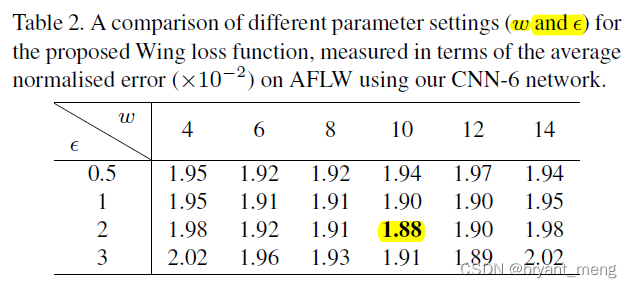

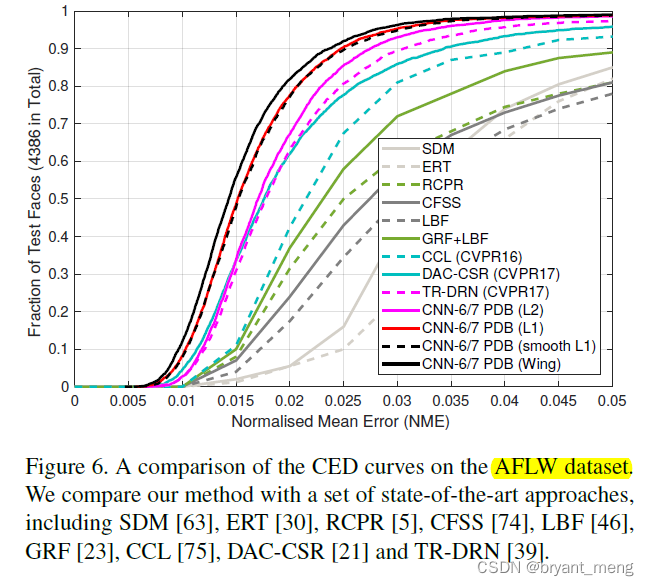

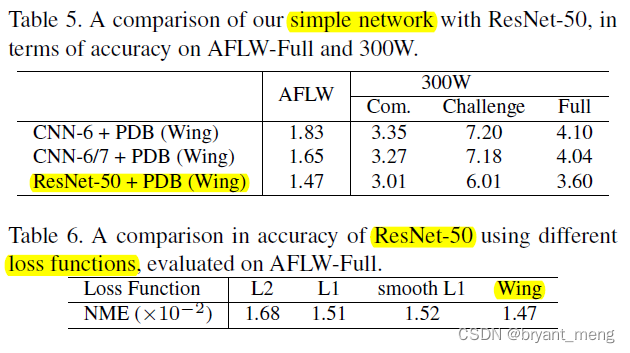

1) Loss

Comparison of different losses on the AFLW dataset

Exploring the settings of the Wing loss hyperparameters $w$ and $\varepsilon$

2) Pose-based data balancing strategy

Even the CNN trained with L1 or L2 loss performs very well, which shows that the pose-based data balancing strategy is very effective

300W

3)Run time and network architectures

6 Conclusion(own) / Future work

What exactly does CNN6 output? I still have to check the code

Wing loss: Figure 4 is the core source of inspiration

Pose-based data balancing

Definition of the angles:

The picture is from "How to rotate the face in the image? | CVPR 2018"

in-plane / out-of-plane: quoting the following two answers

When stress or deformation occurs within a plane, say the xoy plane, it is called in-plane; a force component or displacement along the z direction is called out-of-plane

Deformation is defined relative to a plane: some deformation lies within this plane and some lies outside it, and these correspond to in-plane and out-of-plane

Noting down other people's explanations here for reference

边栏推荐

- 【MnasNet】《MnasNet:Platform-Aware Neural Architecture Search for Mobile》

- [mixup] mixup: Beyond Imperial Risk Minimization

- Calculate the total in the tree structure data in PHP

- parser. parse_ Args boolean type resolves false to true

- [CVPR‘22 Oral2] TAN: Temporal Alignment Networks for Long-term Video

- Conversion of numerical amount into capital figures in PHP

- 【Programming】

- [introduction to information retrieval] Chapter 6 term weight and vector space model

- Installation and use of image data crawling tool Image Downloader

- Thesis tips

猜你喜欢

TimeCLR: A self-supervised contrastive learning framework for univariate time series representation

Cognitive science popularization of middle-aged people

![[mixup] mixup: Beyond Imperial Risk Minimization](/img/14/8d6a76b79a2317fa619e6b7bf87f88.png)

[mixup] mixup: Beyond Imperial Risk Minimization

Machine learning theory learning: perceptron

Regular expressions in MySQL

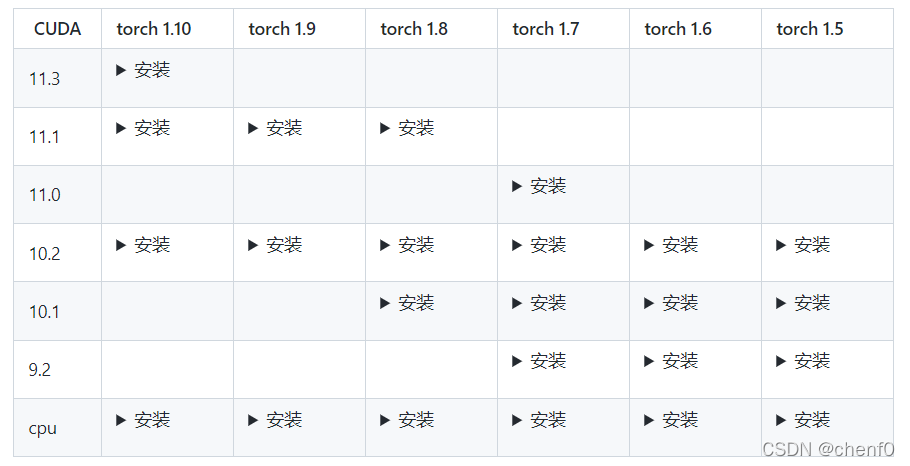

MMDetection安装问题

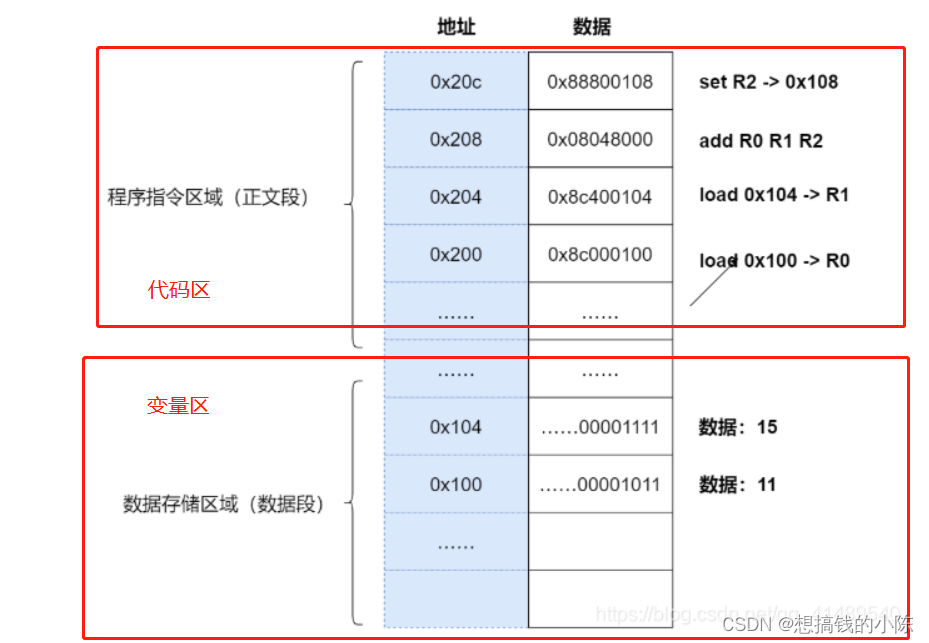

Execution of procedures

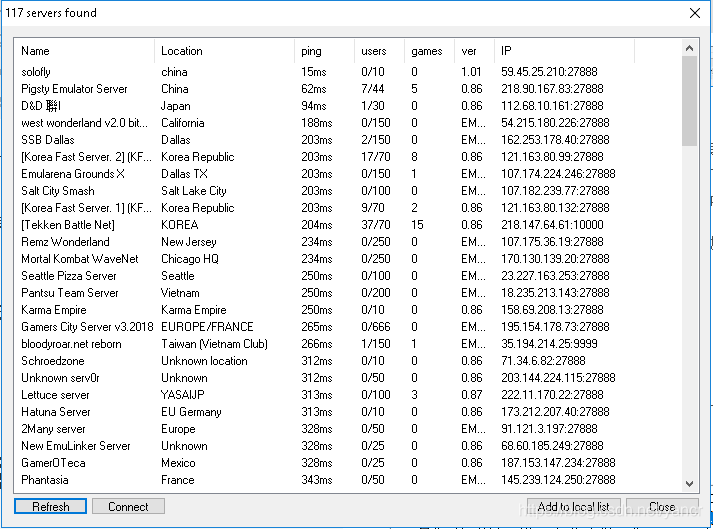

Play online games with mame32k

【FastDepth】《FastDepth:Fast Monocular Depth Estimation on Embedded Systems》

SSM personnel management system

随机推荐

[torch] some ideas to solve the problem that the tensor parameters have gradients and the weight is not updated

[torch] the most concise logging User Guide

Thesis tips

Implement interface Iterable & lt; T>

Drawing mechanism of view (3)

CONDA common commands

Semi supervised mixpatch

基于pytorch的YOLOv5单张图片检测实现

【Random Erasing】《Random Erasing Data Augmentation》

Play online games with mame32k

Timeout docking video generation

解决latex图片浮动的问题

MySQL has no collation factor of order by

深度学习分类优化实战

Transform the tree structure into array in PHP (flatten the tree structure and keep the sorting of upper and lower levels)

使用百度网盘上传数据到服务器上

Traditional target detection notes 1__ Viola Jones

Common machine learning related evaluation indicators

【MnasNet】《MnasNet:Platform-Aware Neural Architecture Search for Mobile》

Implementation of yolov5 single image detection based on onnxruntime