当前位置:网站首页>How to save the trained neural network model (pytorch version)

How to save the trained neural network model (pytorch version)

2022-07-05 17:33:00 【Chasing young feather】

One 、 Save and load models

After training the model with data, an ideal model is obtained , But in practical application, it is impossible to train first and then use , So you have to save the trained model first , Then load it when you need it and use it directly . The essence of a model is a pile of parameters stored in some structure , So there are two ways to save , One way is to save the whole model directly , Then directly load the whole model , But this will consume more memory ; The other is to save only the parameters of the model , When used later, create a new model with the same structure , Then import the saved parameters into the new model .

Two 、 Implementation methods of two cases

(1) Save only the model parameter dictionary ( recommend )

# preservation

torch.save(the_model.state_dict(), PATH)

# Read

the_model = TheModelClass(*args, **kwargs)

the_model.load_state_dict(torch.load(PATH))(2) Save the entire model

# preservation

torch.save(the_model, PATH)

# Read

the_model = torch.load(PATH)3、 ... and 、 Save only model parameters ( Example )

pytorch Will put the parameters of the model in a dictionary , And all we have to do is save this dictionary , Then call .

For example, design a single layer LSTM Network of , And then training , After training, save the parameter Dictionary of the model , Save as... Under the same folder rnn.pt file :

class LSTM(nn.Module):

def __init__(self, input_size, hidden_size, num_layers):

super(LSTM, self).__init__()

self.hidden_size = hidden_size

self.num_layers = num_layers

self.lstm = nn.LSTM(input_size, hidden_size, num_layers, batch_first=True)

self.fc = nn.Linear(hidden_size, 1)

def forward(self, x):

# Set initial states

h0 = torch.zeros(self.num_layers, x.size(0), self.hidden_size).to(device)

# 2 for bidirection

c0 = torch.zeros(self.num_layers, x.size(0), self.hidden_size).to(device)

# Forward propagate LSTM

out, _ = self.lstm(x, (h0, c0))

# out: tensor of shape (batch_size, seq_length, hidden_size*2)

out = self.fc(out)

return out

rnn = LSTM(input_size=1, hidden_size=10, num_layers=2).to(device)

# optimize all cnn parameters

optimizer = torch.optim.Adam(rnn.parameters(), lr=0.001)

# the target label is not one-hotted

loss_func = nn.MSELoss()

for epoch in range(1000):

output = rnn(train_tensor) # cnn output`

loss = loss_func(output, train_labels_tensor) # cross entropy loss

optimizer.zero_grad() # clear gradients for this training step

loss.backward() # backpropagation, compute gradients

optimizer.step() # apply gradients

output_sum = output

# Save the model

torch.save(rnn.state_dict(), 'rnn.pt')After saving, use the trained model to process the data :

# Test the saved model

m_state_dict = torch.load('rnn.pt')

new_m = LSTM(input_size=1, hidden_size=10, num_layers=2).to(device)

new_m.load_state_dict(m_state_dict)

predict = new_m(test_tensor) Here's an explanation , When you save the model rnn.state_dict() Express rnn The parameter Dictionary of this model , When testing the saved model, first load the parameter Dictionary m_state_dict = torch.load('rnn.pt');

Then instantiate one LSTM Antithetic image , Here, we need to ensure that the parameters passed in are consistent with the instantiation rnn Is the same as when the object is passed in , That is, the structure is the same new_m = LSTM(input_size=1, hidden_size=10, num_layers=2).to(device);

Here are the parameters loaded before passing in the new model new_m.load_state_dict(m_state_dict);

Finally, we can use this model to process the data predict = new_m(test_tensor)

Four 、 Save the whole model ( Example )

class LSTM(nn.Module):

def __init__(self, input_size, hidden_size, num_layers):

super(LSTM, self).__init__()

self.hidden_size = hidden_size

self.num_layers = num_layers

self.lstm = nn.LSTM(input_size, hidden_size, num_layers, batch_first=True)

self.fc = nn.Linear(hidden_size, 1)

def forward(self, x):

# Set initial states

h0 = torch.zeros(self.num_layers, x.size(0), self.hidden_size).to(device) # 2 for bidirection

c0 = torch.zeros(self.num_layers, x.size(0), self.hidden_size).to(device)

# Forward propagate LSTM

out, _ = self.lstm(x, (h0, c0)) # out: tensor of shape (batch_size, seq_length, hidden_size*2)

# print("output_in=", out.shape)

# print("fc_in_shape=", out[:, -1, :].shape)

# Decode the hidden state of the last time step

# out = torch.cat((out[:, 0, :], out[-1, :, :]), axis=0)

# out = self.fc(out[:, -1, :]) # Take the last column as out

out = self.fc(out)

return out

rnn = LSTM(input_size=1, hidden_size=10, num_layers=2).to(device)

print(rnn)

optimizer = torch.optim.Adam(rnn.parameters(), lr=0.001) # optimize all cnn parameters

loss_func = nn.MSELoss() # the target label is not one-hotted

for epoch in range(1000):

output = rnn(train_tensor) # cnn output`

loss = loss_func(output, train_labels_tensor) # cross entropy loss

optimizer.zero_grad() # clear gradients for this training step

loss.backward() # backpropagation, compute gradients

optimizer.step() # apply gradients

output_sum = output

# Save the model

torch.save(rnn, 'rnn1.pt')After saving, use the trained model to process the data :

new_m = torch.load('rnn1.pt')

predict = new_m(test_tensor)边栏推荐

- IDEA 项目启动报错 Shorten the command line via JAR manifest or via a classpath file and rerun.

- Knowledge points of MySQL (7)

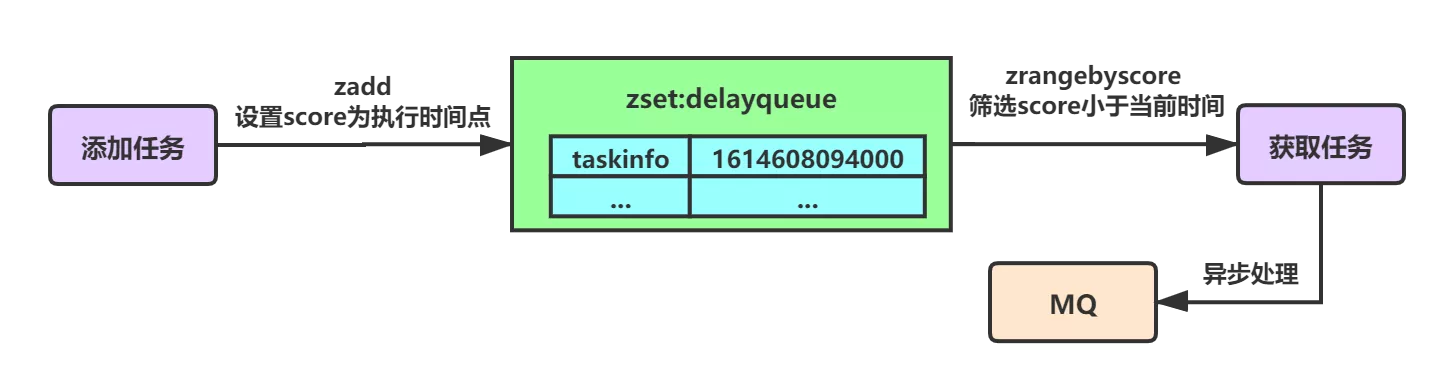

- 基于Redis实现延时队列的优化方案小结

- MySQL之知识点(六)

- WebApp开发-Google官方教程

- Mongodb (quick start) (I)

- CMake教程Step4(安装和测试)

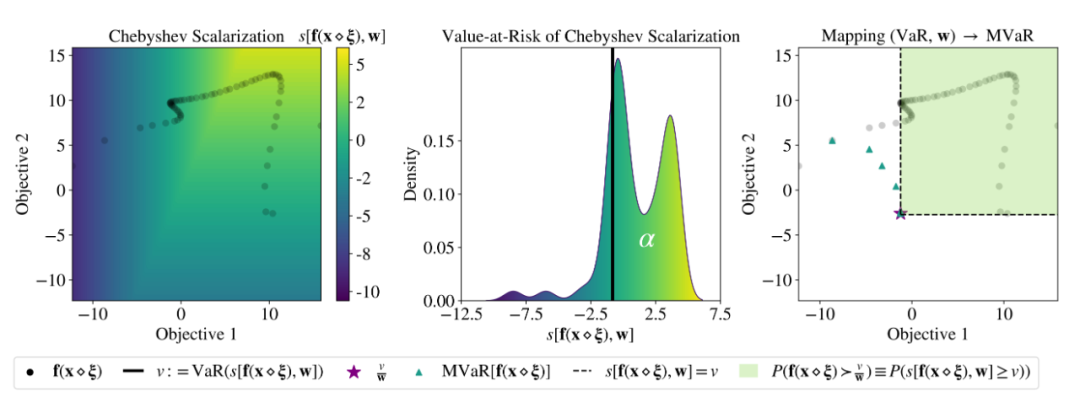

- ICML 2022 | Meta propose une méthode robuste d'optimisation bayésienne Multi - objectifs pour faire face efficacement au bruit d'entrée

- Beijing internal promotion | the machine learning group of Microsoft Research Asia recruits full-time researchers in nlp/ speech synthesis and other directions

- Judge whether a number is a prime number (prime number)

猜你喜欢

7. Scala class

MySQL queries the latest qualified data rows

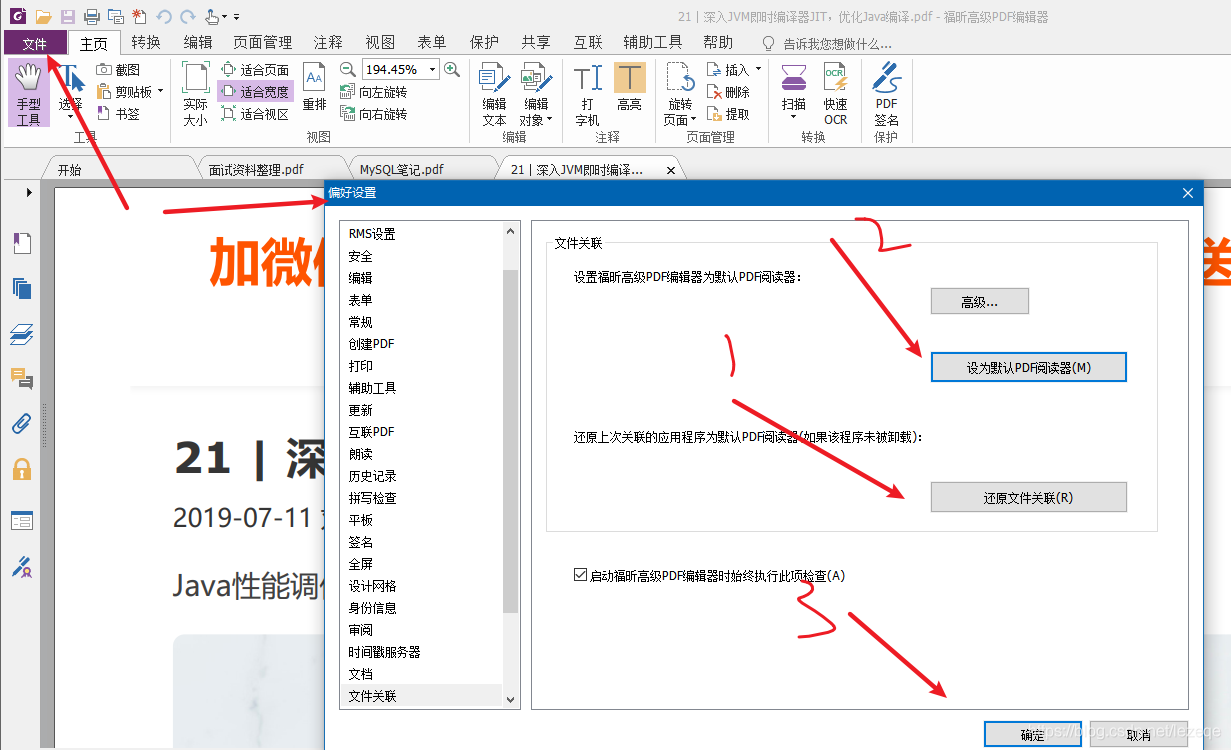

解决“双击pdf文件,弹出”请安装evernote程序

基于Redis实现延时队列的优化方案小结

winedt常用快捷键 修改快捷键latex编译按钮

ICML 2022 | Meta propose une méthode robuste d'optimisation bayésienne Multi - objectifs pour faire face efficacement au bruit d'entrée

Which is more cost-effective, haqu K1 or haqu H1? Who is more worth starting with?

北京内推 | 微软亚洲研究院机器学习组招聘NLP/语音合成等方向全职研究员

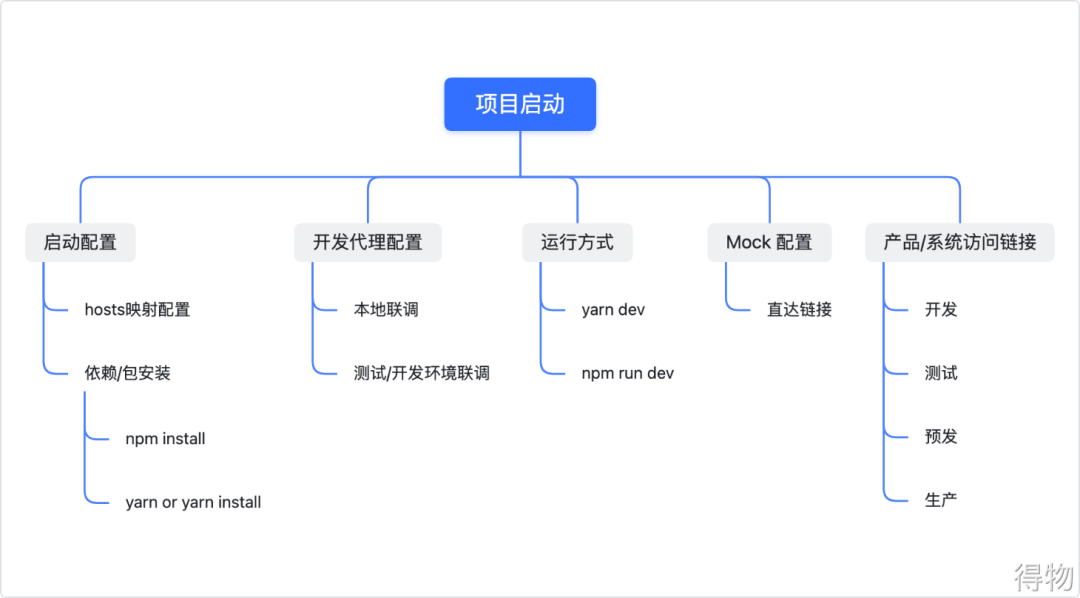

How to write a full score project document | acquisition technology

Compter le temps d'exécution du programme PHP et définir le temps d'exécution maximum de PHP

随机推荐

IDC报告:腾讯云数据库稳居关系型数据库市场TOP 2!

ICML 2022 | meta proposes a robust multi-objective Bayesian optimization method to effectively deal with input noise

How to write a full score project document | acquisition technology

mysql5.6解析JSON字符串方式(支持复杂的嵌套格式)

Flask solves the problem of CORS err

How MySQL uses JSON_ Extract() takes JSON value

Cartoon: how to multiply large integers? (integrated version)

Judge whether a number is a prime number (prime number)

华为云云原生容器综合竞争力,中国第一!

Knowledge points of MySQL (7)

MYSQL group by 有哪些注意事项

一口气读懂 IT发展史

IDEA 项目启动报错 Shorten the command line via JAR manifest or via a classpath file and rerun.

Short the command line via jar manifest or via a classpath file and rerun

漫画:如何实现大整数相乘?(上) 修订版

叩富网开期货账户安全可靠吗?怎么分辨平台是否安全?

統計php程序運行時間及設置PHP最長運行時間

Read the history of it development in one breath

Database design in multi tenant mode

C#(Winform) 当前线程不在单线程单元中,因此无法实例化 ActiveX 控件