
Heating up the data in your data lake?

2022-07-06 11:21:00 【Jiedao jdon】

Data lakes: end data silos with a single repository for big data analytics. Imagine having one place to store all your data for analysis, supporting product-led growth and business insight. Sadly, the data lake idea was written off for a while, because early attempts were built on Hadoop repositories that ran on-premises and lacked resources and scalability. We were left with a "Hadoop hangover".

Data lakes used to be known for their management challenges and slow time to value. But the accelerating adoption of cloud object storage, together with the exponential growth of data, has made them attractive again.

In fact, we need data lakes to support data analytics now more than ever. Cloud object storage first became popular as a cost-effective way to temporarily store or archive data, but it has since caught on more broadly because it is cheap, secure, durable, and elastic. It is not only cost-effective; it also makes it easy to stream data in.

Data lake or data swamp ?

The economics, built-in security, and scalability of cloud object storage encourage enterprises to store more and more data, creating huge data lakes with seemingly unlimited potential for analytics. Enterprises understand that having more data, not less, can be a strategic advantage. Unfortunately, many data lake projects have failed in recent years because the lake turned into a data swamp: a pool of cold data that is hard to access or use. Many organizations have found that sending data to the cloud is easy, but giving users across the organization access to that data, and the ability to draw insight from it, is hard. These data lakes have become dumping grounds for multi-structured data sets that pile up and gather digital dust, delivering none of the promised strategic advantage.

In short, cloud object storage was not built for general-purpose analytics, and neither was Hadoop. To gain insight, data must be transformed and moved out of the lake into an analytics database such as Splunk, MySQL, or Oracle, depending on the use case. This process is complex, slow, and expensive. It is also a challenge because the industry currently faces a shortage of data engineers, who are needed to clean and transform the data and to build the pipelines that feed it into these analytics systems.

Gartner has found that, despite these well-known challenges, more than half of enterprises plan to invest in a data lake within the next two years. Data lakes have a surprising number of use cases, from investigating network intrusions through security logs to researching and improving the customer experience. It is no wonder that enterprises still hold on to the promise of the data lake. So how can we drain the swamp and make sure these efforts do not fail? And, crucially, how do we unlock and provide access to data stored in the cloud, which is the biggest obstacle of all?

Turning up the heat on cold cloud storage

It is possible (and preferable) to make cloud object storage hot for data analytics, but doing so requires rethinking the architecture. We need to give the storage the look and feel of a database, in essence turning cloud object storage into a high-performance analytics database or warehouse. Having "hot data" means fast, easy access within minutes, not weeks or months, even when processing tens of megabytes every day. This level of performance requires a different approach to data pipelines, one that avoids transformation and movement. The required architecture should be as simple as compressing, indexing, and publishing data to tools such as Kibana and/or Looker through well-known APIs, so that data is stored once and movement and processing are kept to a minimum.
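The "compress, index, query in place" idea can be illustrated with a small sketch. This is not the implementation of any particular data lake platform: the function names `build_index` and `search` are hypothetical, an in-memory list of gzip blocks stands in for objects in a bucket, and the word-level index is only one simple way such an index might be built.

```python
import gzip
import json
from collections import defaultdict


def build_index(records, block_size=2):
    """Compress records into blocks and map each token to the blocks that contain it."""
    blocks = []                # compressed blocks; a stand-in for objects in a bucket
    index = defaultdict(set)   # token -> set of block ids
    for start in range(0, len(records), block_size):
        chunk = records[start:start + block_size]
        block_id = len(blocks)
        blocks.append(gzip.compress(json.dumps(chunk).encode("utf-8")))
        for record in chunk:
            for token in record["message"].lower().split():
                index[token].add(block_id)
    return blocks, index


def search(blocks, index, term):
    """Return matching records, decompressing only the blocks the index points to."""
    term = term.lower()
    hits = []
    for block_id in sorted(index.get(term, ())):
        chunk = json.loads(gzip.decompress(blocks[block_id]).decode("utf-8"))
        hits.extend(r for r in chunk if term in r["message"].lower().split())
    return hits


# Hypothetical sample log records, invented for illustration.
logs = [
    {"host": "a", "message": "login failed for admin"},
    {"host": "b", "message": "disk usage normal"},
    {"host": "a", "message": "login ok for alice"},
    {"host": "c", "message": "intrusion detected on port 22"},
]
blocks, index = build_index(logs)
failed = search(blocks, index, "failed")
```

The point of the sketch is that the data is written once, in compressed form, and a query touches only the blocks the index identifies, rather than copying everything into a separate analytics database first.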

One of the most important ways to heat up data for analytics is to make it searchable. Specifically, search is the ultimate democratizer of data: it allows self-service selection and publishing of data streams without the need for an IT administrator or database engineer. All data should be fully searchable and available for analysis with existing data tools. Imagine giving users the ability to search and query at will, ask questions easily, and analyze data with little effort. Most well-known data warehouse and data lake platforms do not provide this key capability.

But some forward-looking companies have found a way. Take BAI Communications, whose data lake strategy follows this type of architecture. In major commuter cities, BAI provides state-of-the-art communications infrastructure (cellular, Wi-Fi, broadcast, radio, and IP networks). BAI streams its data into a centralized data lake built on Amazon S3 cloud object storage, where it is secure and compliant with numerous government regulations. With a data lake built on cloud object storage and activated for analytics through a multi-API data lake platform, BAI can find, access, and analyze its data faster and more easily than before, and with better cost control. The company is using insights generated by its global networks over the years to help rail operators keep traffic flowing and optimize routes, turning data insight into business value. This approach proved particularly valuable during the pandemic, because BAI was able to learn more about the impact of COVID-19 on regional public transport networks around the world, so that they could continue to provide critical connectivity for citizens.

边栏推荐

- When you open the browser, you will also open mango TV, Tiktok and other websites outside the home page

- Error reporting solution - io UnsupportedOperation: can‘t do nonzero end-relative seeks

- [蓝桥杯2021初赛] 砝码称重

- Some problems in the development of unity3d upgraded 2020 VR

- Learning question 1:127.0.0.1 refused our visit

- Neo4j installation tutorial

- Rhcsa certification exam exercise (configured on the first host)

- 【博主推荐】C# Winform定时发送邮箱(附源码)

- Asp access Shaoxing tourism graduation design website

- Ansible实战系列一 _ 入门

猜你喜欢

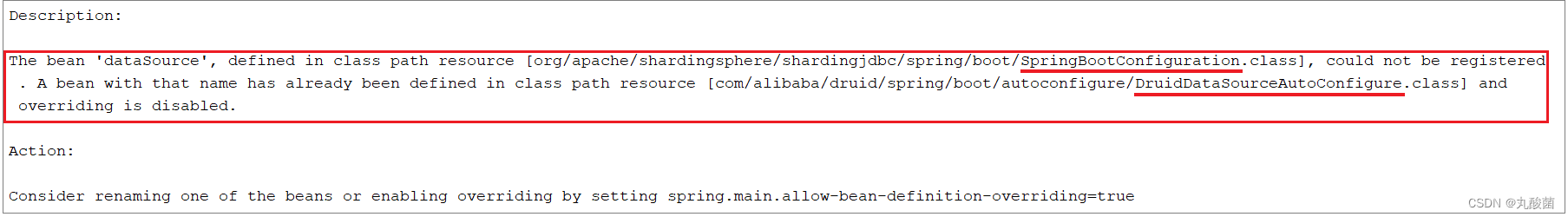

MySQL master-slave replication, read-write separation

![[recommended by bloggers] background management system of SSM framework (with source code)](/img/7f/a6b7a8663a2e410520df75fed368e2.png)

[recommended by bloggers] background management system of SSM framework (with source code)

Invalid global search in idea/pychar, etc. (win10)

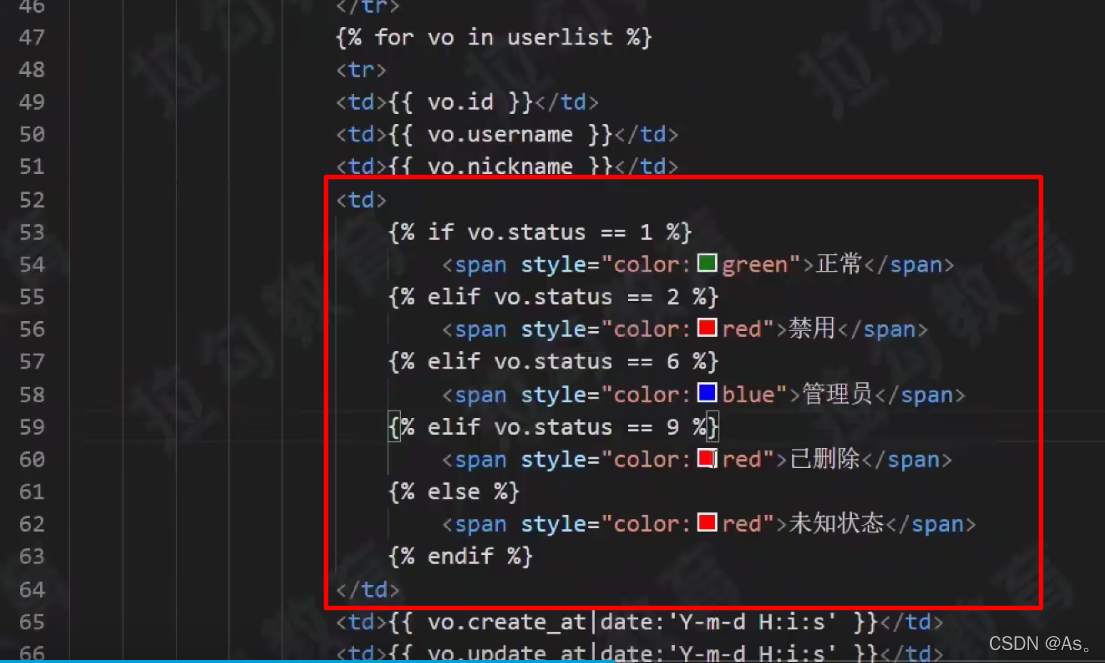

02-项目实战之后台员工信息管理

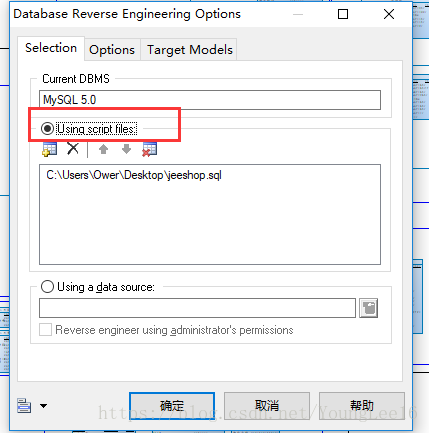

Generate PDM file from Navicat export table

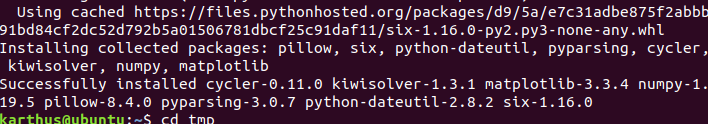

Solve the problem of installing failed building wheel for pilot

MySQL主从复制、读写分离

![[recommended by bloggers] C WinForm regularly sends email (with source code)](/img/5d/57f8599a4f02c569c6c3f4bcb8b739.png)

[recommended by bloggers] C WinForm regularly sends email (with source code)

QT creator runs the Valgrind tool on external applications

QT creator shape

随机推荐

Error reporting solution - io UnsupportedOperation: can‘t do nonzero end-relative seeks

Solution: log4j:warn please initialize the log4j system properly

数数字游戏

Django运行报错:Error loading MySQLdb module解决方法

[recommended by bloggers] C # generate a good-looking QR code (with source code)

Antlr4 uses keywords as identifiers

PyCharm中无法调用numpy,报错ModuleNotFoundError: No module named ‘numpy‘

Image recognition - pyteseract TesseractNotFoundError: tesseract is not installed or it‘s not in your path

QT creator specifies dependencies

基于apache-jena的知识问答

[C language foundation] 04 judgment and circulation

Ansible实战系列一 _ 入门

图像识别问题 — pytesseract.TesseractNotFoundError: tesseract is not installed or it‘s not in your path

How to build a new project for keil5mdk (with super detailed drawings)

QT creator test

[蓝桥杯2020初赛] 平面切分

[蓝桥杯2017初赛]包子凑数

[recommended by bloggers] C MVC list realizes the function of adding, deleting, modifying, checking, importing and exporting curves (with source code)

图片上色项目 —— Deoldify

自动机器学习框架介绍与使用(flaml、h2o)