NLP model BERT: from introduction to mastery (1)

2020-11-06 01:22:00 【Elementary school students in IT field】

First things first: basic BERT resources

Basic resources

TensorFlow version: follow the link

PyTorch version (note that this is a third-party implementation): follow the link

The paper: follow the link

From 0 to 1: understanding the model's strengths and weaknesses

Judging by the current trend, pre-training a language model with some model architecture looks like a reliable approach. From AI2's ELMo, to OpenAI's fine-tuned Transformer, to Google's BERT, all of them apply pre-trained language models.

BERT differs from the other two models in two ways:

- 1. When training the bidirectional language model, it replaces a small fraction of the words with [MASK] or with another random word. The aim is to force the model to rely more heavily on context. As for the replacement probabilities, they seem to have been chosen by feel.

**Interpretation:** Task 1: Masked LM

Intuitively, the research team had reason to believe that a deep bidirectional model is more powerful than either a left-to-right model or a shallow concatenation of a left-to-right and a right-to-left model. Unfortunately, standard conditional language models can only be trained left-to-right or right-to-left, because bidirectional conditioning would allow each word to indirectly "see itself" in a multi-layered context.

To train a deep bidirectional representation, the team took a simple approach: mask a random subset of the input tokens, then predict only those masked tokens. The paper calls this procedure "masked LM" (MLM), although in the literature it is often called a Cloze task (Taylor, 1953).

In this case, the final hidden vectors corresponding to the masked tokens are fed into an output softmax over the vocabulary, as in a standard LM. In all of the team's experiments, 15% of the WordPiece tokens in each sequence are masked at random. In contrast to denoising autoencoders (Vincent et al., 2008), only the masked words are predicted, rather than reconstructing the entire input.
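
The idea that the softmax output is scored only at the masked positions can be sketched as follows. This is a minimal, pure-Python illustration, not the BERT implementation: the function names `softmax` and `masked_lm_loss`, the toy 4-token vocabulary, and the plain-list logits are all assumptions made for the example.

```python
import math

def softmax(logits):
    """Numerically stable softmax over one position's vocabulary logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def masked_lm_loss(logits_per_position, target_ids, masked_positions):
    """Average cross-entropy computed at the masked positions only.

    Hypothetical helper: unmasked positions contribute nothing to the loss.
    """
    if not masked_positions:
        return 0.0
    total = 0.0
    for pos in masked_positions:
        probs = softmax(logits_per_position[pos])
        total += -math.log(probs[target_ids[pos]])
    return total / len(masked_positions)

# Toy example: sequence of 3 positions, vocabulary of 4 tokens.
logits = [[0.0] * 4, [5.0, 0.0, 0.0, 0.0], [0.0] * 4]
targets = [1, 0, 3]
# Only position 2 is masked; its logits are uniform, so the loss is ln(4).
print(masked_lm_loss(logits, targets, masked_positions=[2]))
```

Because positions 0 and 1 are not in `masked_positions`, their logits have no effect on the result.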

Although this does give the team a bidirectional pre-trained model, the method has two drawbacks. First, pre-training and fine-tuning are mismatched, because the [MASK] token is never seen during fine-tuning. To mitigate this, the team does not always replace the "masked" words with the actual [MASK] token. Instead, the training data generator chooses 15% of the tokens at random. For example, in the sentence "my dog is hairy", it chooses the token "hairy".

Rather than always replacing the selected word with [MASK], the data generator then does the following:

80% of the time: replace the word with the [MASK] token, e.g. my dog is hairy → my dog is [MASK]

10% of the time: replace the word with a random word, e.g. my dog is hairy → my dog is apple

10% of the time: keep the word unchanged, e.g. my dog is hairy → my dog is hairy. The purpose of this is to bias the representation towards the actually observed word.

The Transformer encoder does not know which words it will be asked to predict or which words have been replaced by random words, so it is forced to keep a distributional contextual representation of every input token. In addition, because random replacement affects only 1.5% of all tokens (i.e. 10% of 15%), it does not seem to harm the model's language understanding ability.
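
The 80% / 10% / 10% procedure above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the code from the BERT repository: `mask_tokens`, the `SPECIAL` set, the toy vocabulary, and the rule of always selecting at least one token are all choices made for this example.

```python
import random

MASK = "[MASK]"
SPECIAL = {"[CLS]", "[SEP]"}  # assumption: special tokens are never selected

def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Return (masked_tokens, labels) where labels maps position -> original token."""
    rng = rng or random.Random()
    candidates = [i for i, t in enumerate(tokens) if t not in SPECIAL]
    # Assumption: select at least one token so short sentences still yield a target.
    num_to_predict = min(len(candidates), max(1, round(len(candidates) * mask_prob)))
    chosen = sorted(rng.sample(candidates, num_to_predict))

    output = list(tokens)
    labels = {}
    for i in chosen:
        labels[i] = tokens[i]          # the model must predict the original token
        roll = rng.random()
        if roll < 0.8:                 # 80%: replace with [MASK]
            output[i] = MASK
        elif roll < 0.9:               # 10%: replace with a random vocabulary word
            output[i] = rng.choice(vocab)
        # remaining 10%: keep the original token unchanged
    return output, labels

tokens = ["[CLS]", "my", "dog", "is", "hairy", "[SEP]"]
vocab = ["apple", "my", "dog", "is", "hairy"]
masked, labels = mask_tokens(tokens, vocab, rng=random.Random(0))
print(masked, labels)
```

Note that a selected position is a prediction target in all three cases, including the one where the token is left unchanged, which is why the encoder cannot tell which inputs are trustworthy.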

The second drawback of MLM is that only 15% of the tokens in each batch are predicted, which suggests that the model may need more pre-training steps to converge. The team shows that MLM converges slightly more slowly than a left-to-right model (which predicts every token), but the empirical improvements of the MLM model far outweigh the increased training cost.

- 2. It adds a next-sentence prediction loss. This part is relatively novel.

**Interpretation:** Task 2: Next Sentence Prediction

Many important downstream tasks, such as question answering (QA) and natural language inference (NLI), are based on understanding the relationship between two sentences, which is not directly captured by language modeling.

To train a model that understands sentence relationships, the team pre-trains a binarized next-sentence prediction task that can be generated from any monolingual corpus. Specifically, when sentences A and B are chosen for a pre-training example, 50% of the time B is the actual next sentence after A, and 50% of the time it is a random sentence from the corpus. For example:

Input = [CLS] the man went to [MASK] store [SEP] he bought a gallon [MASK] milk [SEP]

Label = IsNext

Input = [CLS] the man [MASK] to the store [SEP] penguin [MASK] are flight ##less birds [SEP]

Label = NotNext

The team chose the NotNext sentences completely at random, and the final pre-trained model achieved 97%-98% accuracy on this task.
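
The 50/50 sampling of sentence pairs can be sketched as below. This is a hedged illustration rather than the original data pipeline: `make_nsp_example` and `pack_pair` are hypothetical helpers, the corpus is assumed to be a list of documents (each a list of sentences), and drawing the random sentence from a different document is an assumption of this sketch.

```python
import random

def make_nsp_example(documents, doc_index, sent_index, rng):
    """Return (sentence_a, sentence_b, label) for next-sentence prediction.

    Assumes documents[doc_index] has a sentence at sent_index + 1 and that
    the corpus holds at least two documents.
    """
    doc = documents[doc_index]
    sentence_a = doc[sent_index]
    if rng.random() < 0.5:
        # 50%: B is the sentence that actually follows A
        return sentence_a, doc[sent_index + 1], "IsNext"
    # 50%: B is a random sentence (here: from another document, by assumption)
    other_indices = [i for i in range(len(documents)) if i != doc_index]
    other_doc = documents[rng.choice(other_indices)]
    return sentence_a, rng.choice(other_doc), "NotNext"

def pack_pair(tokens_a, tokens_b):
    """Pack two token lists into one sequence with [CLS] and [SEP] markers."""
    return ["[CLS]"] + tokens_a + ["[SEP]"] + tokens_b + ["[SEP]"]

docs = [
    ["the man went to the store", "he bought a gallon of milk"],
    ["penguins are flightless birds", "they live in the southern hemisphere"],
]
a, b, label = make_nsp_example(docs, doc_index=0, sent_index=0, rng=random.Random(1))
print(pack_pair(a.split(), b.split()), label)
```

In real pre-training the packed sequence would additionally go through WordPiece tokenization and the masking step described earlier; whitespace splitting is used here only to keep the sketch short.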

The BERT model has the following two characteristics:

First, it is a very deep model (12 layers) but not a wide one: the intermediate layer is only 1024, whereas the earlier Transformer model had an intermediate layer of 2048. (For reference, the paper's BERT-Base has 12 layers with hidden size 768, and BERT-Large has 24 layers with hidden size 1024.) This seems to confirm a view from computer vision: deep and narrow models work better than shallow and wide ones.

Second, MLM (Masked Language Model) uses the words on both the left and the right at the same time. This already appeared in ELMo and is by no means original. Moreover, the use of masking in language models had already been proposed by Ziang Xie (I was fortunate to be involved in that paper): [1703.02573] Data Noising as Smoothing in Neural Network Language Models. It is also a paper with star authors: Sida Wang, Jiwei Li (a founder and CEO, and the NLP scholar with the most published papers in history), Andrew Ng, and Dan Jurafsky are all coauthors. Unfortunately, the BERT authors seem to have overlooked this paper. I believe that using its method for masking could improve BERT further.

Model input

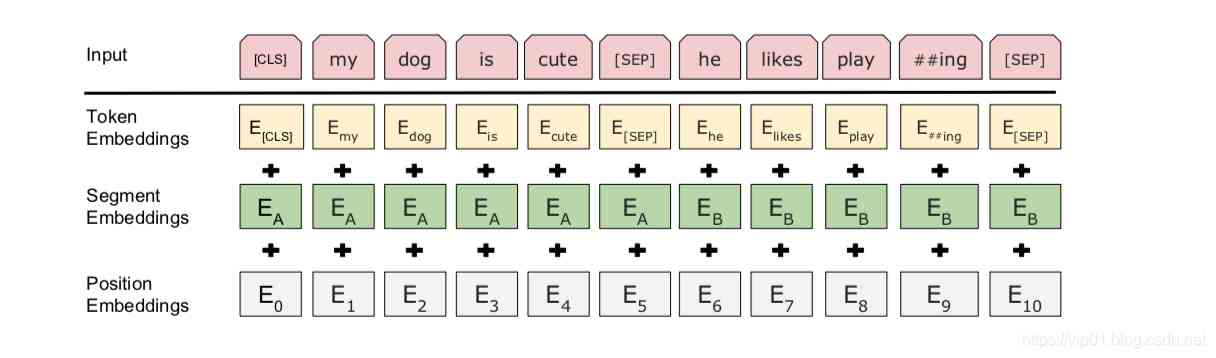

BERT input representation. The input embeddings are the sum of the token embeddings, the segmentation embeddings, and the position embeddings.

The details are as follows:

(1) WordPiece embeddings (Wu et al., 2016) are used with a 30,000-token vocabulary. Split word pieces are marked with ##.

(2) Learned positional embeddings are used, supporting sequence lengths of up to 512 tokens.

The first token of every sequence is always the special classification embedding ([CLS]). The final hidden state corresponding to this token (i.e. the Transformer output) is used as the aggregate sequence representation for classification tasks. For non-classification tasks, this vector is ignored.

(3) Sentence pairs are packed together into a single sequence. The sentences are distinguished in two ways. First, they are separated by a special token ([SEP]). Second, a learned sentence A embedding is added to every token of the first sentence, and a sentence B embedding to every token of the second sentence.

(4) For single-sentence inputs, only the sentence A embedding is used.
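
To make the input construction concrete, the sketch below builds the token, segment, and position ids for a sentence pair and sums the three embeddings element-wise. It is a toy illustration, not BERT's code: `build_inputs` and `embed` are hypothetical names, the 2-dimensional embedding tables are made up, and assigning the first [SEP] to segment A and the final [SEP] to segment B is an assumption of this sketch.

```python
def build_inputs(tokens_a, tokens_b=None, max_len=512):
    """Return (tokens, segment_ids, position_ids) for one or two sentences."""
    tokens = ["[CLS]"] + tokens_a + ["[SEP]"]
    segment_ids = [0] * len(tokens)               # sentence A embedding index
    if tokens_b:
        tokens += tokens_b + ["[SEP]"]
        segment_ids += [1] * (len(tokens_b) + 1)  # sentence B embedding index
    if len(tokens) > max_len:
        raise ValueError(f"sequence length {len(tokens)} exceeds {max_len}")
    position_ids = list(range(len(tokens)))
    return tokens, segment_ids, position_ids

def embed(tokens, segment_ids, position_ids,
          token_table, segment_table, position_table):
    """Sum token, segment, and position embeddings for each input position."""
    return [
        [t + s + p for t, s, p in zip(token_table[tok],
                                      segment_table[seg],
                                      position_table[pos])]
        for tok, seg, pos in zip(tokens, segment_ids, position_ids)
    ]

tokens, segment_ids, position_ids = build_inputs(["my", "dog"], ["he", "play", "##s"])

# Made-up 2-dimensional tables, only to show the element-wise sum.
token_table = {tok: [1.0, 0.0] for tok in tokens}
segment_table = [[0.1, 0.1], [0.2, 0.2]]
position_table = [[0.01 * i, 0.0] for i in range(len(tokens))]

vectors = embed(tokens, segment_ids, position_ids,
                token_table, segment_table, position_table)
print(tokens)
print(segment_ids)
```

For a single sentence, `build_inputs(["my", "dog"])` yields segment ids that are all 0, matching point (4).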

版权声明

本文为[Elementary school students in IT field]所创,转载请带上原文链接,感谢

边栏推荐

- 助力金融科技创新发展,ATFX走在行业最前列

- Classical dynamic programming: complete knapsack problem

- vue-codemirror基本用法:实现搜索功能、代码折叠功能、获取编辑器值及时验证

- Brief introduction of TF flags

- After brushing leetcode's linked list topic, I found a secret!

- 一篇文章带你了解CSS 渐变知识

- 從小公司進入大廠,我都做對了哪些事?

- 加速「全民直播」洪流,如何攻克延时、卡顿、高并发难题?

- 教你轻松搞懂vue-codemirror的基本用法:主要实现代码编辑、验证提示、代码格式化

- This article will introduce you to jest unit test

猜你喜欢

Python自动化测试学习哪些知识?

Summary of common string algorithms

CCR炒币机器人:“比特币”数字货币的大佬,你不得不了解的知识

IPFS/Filecoin合法性:保护个人隐私不被泄露

PHP应用对接Justswap专用开发包【JustSwap.PHP】

PHPSHE 短信插件说明

TRON智能钱包PHP开发包【零TRX归集】

Mongodb (from 0 to 1), 11 days mongodb primary to intermediate advanced secret

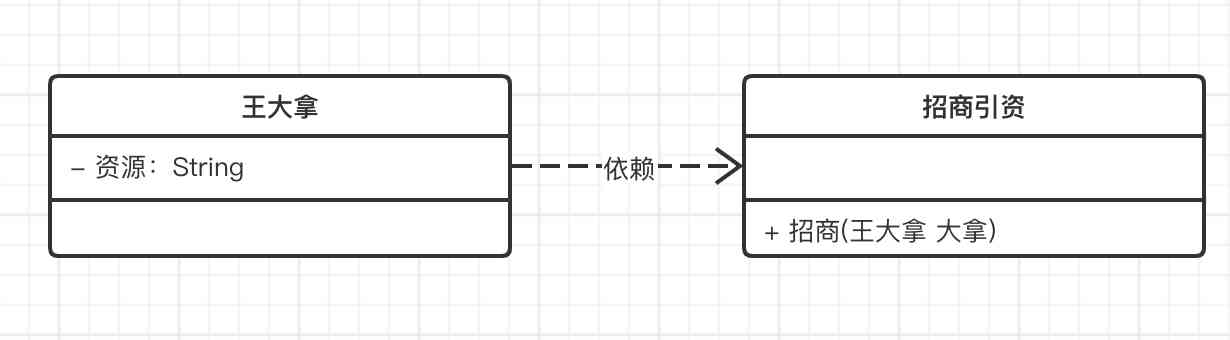

Do not understand UML class diagram? Take a look at this edition of rural love class diagram, a learn!

快快使用ModelArts,零基础小白也能玩转AI!

随机推荐

Vue 3 responsive Foundation

Programmer introspection checklist

“颜值经济”的野望:华熙生物净利率六连降,收购案遭上交所问询

Just now, I popularized two unique skills of login to Xuemei

OPTIMIZER_ Trace details

DevOps是什么

What is the side effect free method? How to name it? - Mario

How do the general bottom buried points do?

In depth understanding of the construction of Intelligent Recommendation System

Existence judgment in structured data

PHPSHE 短信插件说明

Elasticsearch 第六篇:聚合統計查詢

至联云解析:IPFS/Filecoin挖矿为什么这么难?

hadoop 命令总结

Keyboard entry lottery random draw

Group count - word length

6.4 viewresolver view parser (in-depth analysis of SSM and project practice)

业内首发车道级导航背后——详解高精定位技术演进与场景应用

JVM memory area and garbage collection

Using consult to realize service discovery: instance ID customization