当前位置:网站首页>Paging of a scratch (page turning processing)

Paging of a scratch (page turning processing)

2022-07-06 01:07:00 【Keep a low profile】

import scrapy

from bs4 import BeautifulSoup

class BookSpiderSpider(scrapy.Spider):

name = 'book_spider'

allowed_domains = ['17k.com']

start_urls = ['https://www.17k.com/all/book/2_0_0_0_0_0_0_0_1.html']

""" This will be explained later start_requests Is the method swollen or fat """

def start_requests(self):

for url in self.start_urls:

yield scrapy.Request(

url=url,

callback=self.parse

)

def parse(self, response, **kwargs):

print(response.url)

soup = BeautifulSoup(response.text, 'lxml')

trs = soup.find('div', attrs={

'class': 'alltable'}).find('tbody').find_all('tr')[1:]

for tr in trs:

book_type = tr.find('td', attrs={

'class': 'td2'}).find('a').text

book_name = tr.find('td', attrs={

'class': 'td3'}).find('a').text

book_words = tr.find('td', attrs={

'class': 'td5'}).text

book_author = tr.find('td', attrs={

'class': 'td6'}).find('a').text

print(book_type, book_name, book_words, book_author)

#

break

""" This is xpath The way of parsing """

# trs = response.xpath("//div[@class='alltable']/table/tbody/tr")[1:]

# for tr in trs:

# type = tr.xpath("./td[2]/a/text()").extract_first()

# name = tr.xpath("./td[3]/span/a/text()").extract_first()

# words = tr.xpath("./td[5]/text()").extract_first()

# author = tr.xpath("./td[6]/a/text()").extract_first()

# print(type, name, words, author)

""" 1 Find... On the next page url, Request to the next page Pagination logic This logic is the simplest kind Keep climbing linearly """

# next_page_url = soup.find('a', text=' The next page ')['href']

# if 'javascript' not in next_page_url:

# yield scrapy.Request(

# url=response.urljoin(next_page_url),

# method='get',

# callback=self.parse

# )

# 2 Brutal paging logic

""" Get all the url, Just send the request Will pass the engine 、 Scheduler ( aggregate queue ) Finish the heavy work here , Give it to the downloader , Then return to the reptile , But that start_url It will be repeated , The reason for repetition is inheritance Spider This class The methods of the parent class are dont_filter=True This thing So here we need to rewrite Spider The inside of the class start_requests Methods , Filter by default That's it start_url Repetitive questions """

#

#

a_list = soup.find('div', attrs={

'class': 'page'}).find_all('a')

for a in a_list:

if 'javascript' not in a['href']:

yield scrapy.Request(

url=response.urljoin(a['href']),

method='get',

callback=self.parse

)

边栏推荐

- Dynamic programming -- linear DP

- How to extract MP3 audio from MP4 video files?

- [groovy] JSON string deserialization (use jsonslurper to deserialize JSON strings | construct related classes according to the map set)

- Study diary: February 13, 2022

- Intensive learning weekly, issue 52: depth cuprl, distspectrl & double deep q-network

- 面试必刷算法TOP101之回溯篇 TOP34

- Convert binary search tree into cumulative tree (reverse middle order traversal)

- Kotlin core programming - algebraic data types and pattern matching (3)

- Building core knowledge points

- cf:C. The Third Problem【关于排列这件事】

猜你喜欢

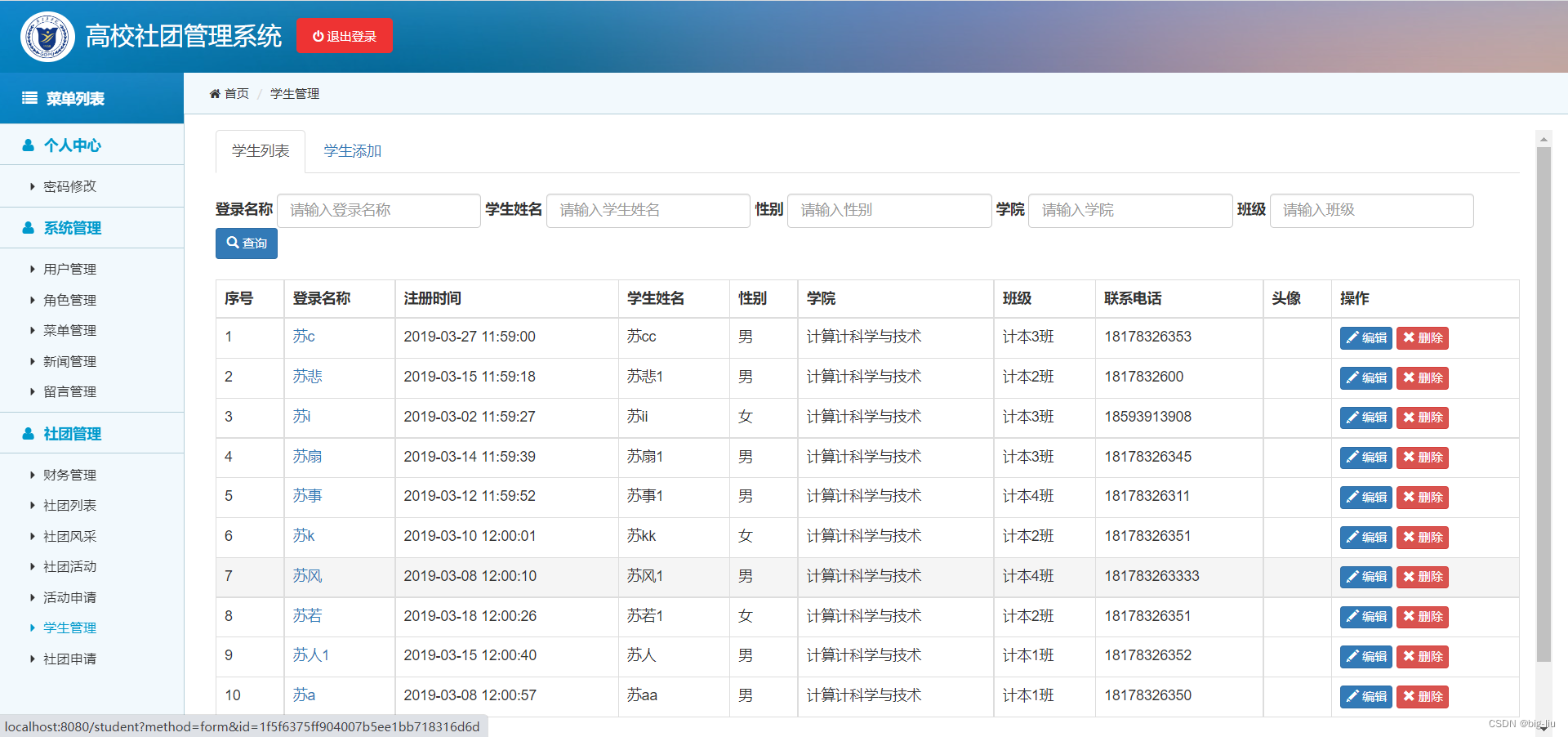

毕设-基于SSM高校学生社团管理系统

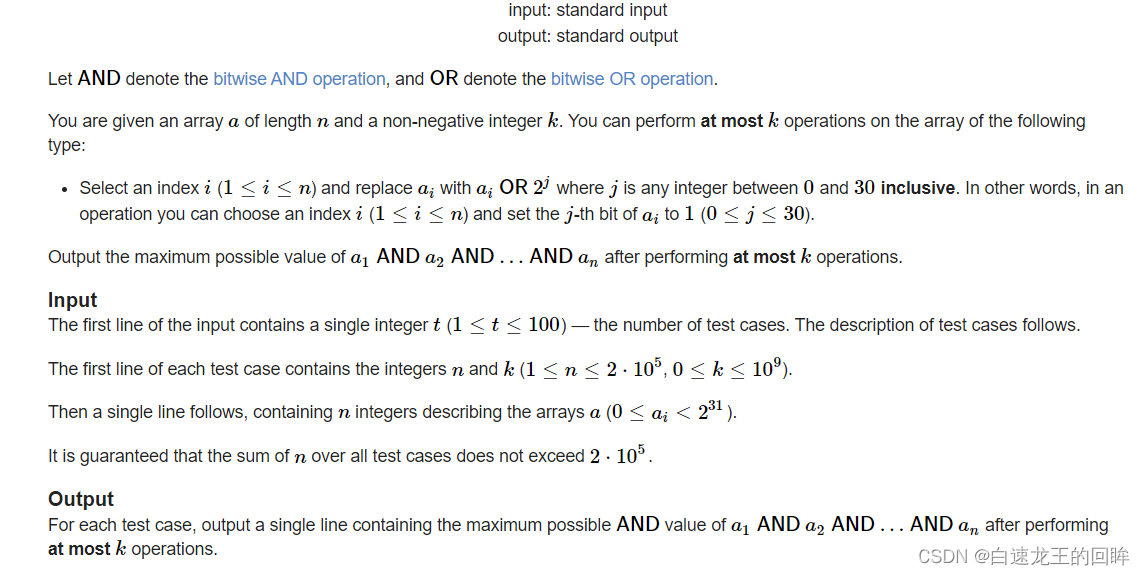

cf:H. Maximal AND【位运算练习 + k次操作 + 最大And】

![[groovy] XML serialization (use markupbuilder to generate XML data | create sub tags under tag closures | use markupbuilderhelper to add XML comments)](/img/d4/4a33e7f077db4d135c8f38d4af57fa.jpg)

[groovy] XML serialization (use markupbuilder to generate XML data | create sub tags under tag closures | use markupbuilderhelper to add XML comments)

程序员搞开源,读什么书最合适?

![[groovy] compile time metaprogramming (compile time method injection | method injection using buildfromspec, buildfromstring, buildfromcode)](/img/e4/a41fe26efe389351780b322917d721.jpg)

[groovy] compile time metaprogramming (compile time method injection | method injection using buildfromspec, buildfromstring, buildfromcode)

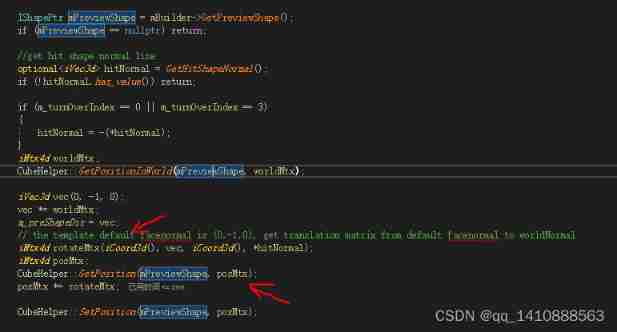

Four dimensional matrix, flip (including mirror image), rotation, world coordinates and local coordinates

![[pat (basic level) practice] - [simple mathematics] 1062 simplest fraction](/img/b4/3d46a33fa780e5fb32bbfe5ab26a7f.jpg)

[pat (basic level) practice] - [simple mathematics] 1062 simplest fraction

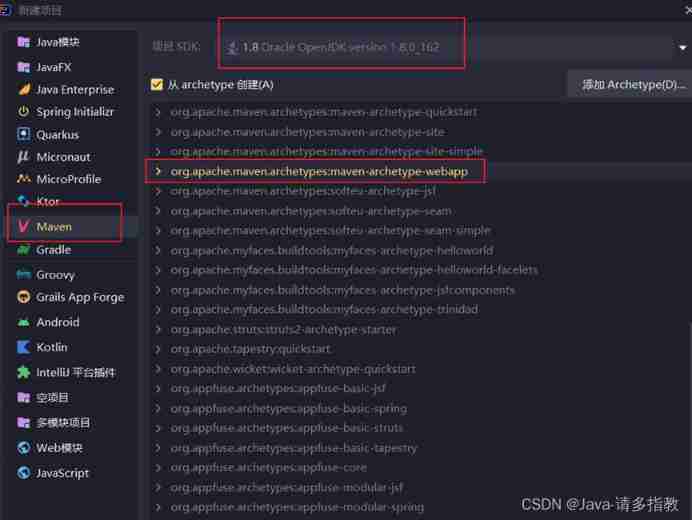

servlet(1)

Folding and sinking sand -- weekly record of ETF

Vulhub vulnerability recurrence 74_ Wordpress

随机推荐

[groovy] JSON string deserialization (use jsonslurper to deserialize JSON strings | construct related classes according to the map set)

How spark gets columns in dataframe --column, $, column, apply

devkit入门

Getting started with devkit

NLP text processing: lemma [English] [put the deformation of various types of words into one form] [wet- > go; are- > be]

logstash清除sincedb_path上传记录,重传日志数据

Browser reflow and redraw

Finding the nearest common ancestor of binary tree by recursion

程序员成长第九篇:真实项目中的注意事项

[groovy] XML serialization (use markupbuilder to generate XML data | set XML tag content | set XML tag attributes)

VSphere implements virtual machine migration

Is chaozhaojin safe? Will it lose its principal

STM32 key chattering elimination - entry state machine thinking

关于softmax函数的见解

[groovy] JSON serialization (convert class objects to JSON strings | convert using jsonbuilder | convert using jsonoutput | format JSON strings for output)

After Luke zettlemoyer, head of meta AI Seattle research | trillion parameters, will the large model continue to grow?

Daily practice - February 13, 2022

[groovy] compile time meta programming (compile time method interception | method interception in myasttransformation visit method)

[groovy] compile time metaprogramming (compile time method interception | find the method to be intercepted in the myasttransformation visit method)

Vulhub vulnerability recurrence 75_ XStream