
MTCNN face detection

2022-07-06 20:44:00 【gmHappy】

demo.py

```python
import cv2

from detection.mtcnn import MTCNN


# Detect the faces in a single image
def test_image(imgpath):
    mtcnn = MTCNN('./mtcnn.pb')
    img = cv2.imread(imgpath)
    if img is None:
        raise FileNotFoundError(imgpath)

    bbox, landmarks, scores = mtcnn.detect_faces(img)
    print('total box:', len(bbox))

    for box, pts in zip(bbox, landmarks):
        # box is [y1, x1, y2, x2]; OpenCV points are (x, y) Python ints
        box = box.astype('int32').tolist()
        img = cv2.rectangle(img, (box[1], box[0]), (box[3], box[2]), (255, 0, 0), 3)
        # pts holds 5 y-coordinates followed by 5 x-coordinates
        pts = pts.astype('int32').tolist()
        for i in range(5):
            img = cv2.circle(img, (pts[i + 5], pts[i]), 1, (0, 255, 0), 2)

    cv2.imshow('image', img)
    cv2.waitKey()
    cv2.destroyAllWindows()


# Detect faces in a video stream
def test_camera():
    mtcnn = MTCNN('./mtcnn.pb')
    # Placeholder credentials and address (the original URL was garbled);
    # cv2.VideoCapture(0) opens a local webcam instead
    cap = cv2.VideoCapture('rtsp://admin:<password>@<camera-ip>/Streaming/Channels/1')

    while True:
        ret, img = cap.read()
        if not ret:
            break

        bbox, landmarks, scores = mtcnn.detect_faces(img)
        print('total box:', len(bbox), scores)

        for box, pts in zip(bbox, landmarks):
            box = box.astype('int32').tolist()
            img = cv2.rectangle(img, (box[1], box[0]), (box[3], box[2]), (255, 0, 0), 3)
            pts = pts.astype('int32').tolist()
            for i in range(5):
                # Same (x, y) order as in test_image
                img = cv2.circle(img, (pts[i + 5], pts[i]), 1, (0, 255, 0), 2)

        cv2.imshow('img', img)
        # Press q to stop
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    cap.release()
    cv2.destroyAllWindows()


if __name__ == '__main__':
    # test_image('./test.jpg')  # hypothetical image path
    test_camera()
```
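Both drawing loops depend on the output layout of `mtcnn.pb`: as the `cv2.rectangle` call and the reordering in `align_face` (in mtcnn.py) show, each box is `[y1, x1, y2, x2]` and each landmark row holds five y-coordinates followed by five x-coordinates, while OpenCV takes points as `(x, y)`. Here is a small numpy sketch of that conversion; `box_to_xyxy` and `landmarks_to_points` are hypothetical helper names, not part of the post's code:

```python
import numpy as np


def box_to_xyxy(box):
    """Hypothetical helper: MTCNN box [y1, x1, y2, x2] -> OpenCV order [x1, y1, x2, y2]."""
    return np.asarray(box)[[1, 0, 3, 2]]


def landmarks_to_points(landmarks):
    """Hypothetical helper: one row [y0..y4, x0..x4] -> (5, 2) array of (x, y) points."""
    landmarks = np.asarray(landmarks)
    return np.stack([landmarks[5:10], landmarks[0:5]], axis=1)


# Example detection in the model's layout
box = np.array([20, 10, 120, 90])                        # y1, x1, y2, x2
lm = np.array([40, 41, 60, 80, 81, 30, 70, 50, 35, 65])  # y0..y4, x0..x4

x1, y1, x2, y2 = box_to_xyxy(box).tolist()   # corners for cv2.rectangle
points = landmarks_to_points(lm)             # centers for cv2.circle
print((x1, y1), (x2, y2))                    # (10, 20) (90, 120)
print(points.tolist())                       # [[30, 40], [70, 41], [50, 60], [35, 80], [65, 81]]
```

Swapping the two halves of a landmark row and applying `reshape((2, 5)).T`, as `align_face` does, yields the same `(x, y)` points.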


mtcnn.py

```python
import cv2
import numpy as np
import tensorflow as tf

import detection.face_preprocess as face_preprocess
from detection.align_trans import get_reference_facial_points, warp_and_crop_face

# The frozen graph uses the TF1 graph/session API; tf.compat.v1 keeps it
# working under TensorFlow 2.x as well
tf1 = tf.compat.v1


class MTCNN:
    def __init__(self, model_path, min_size=40, factor=0.709, thresholds=(0.7, 0.8, 0.8)):
        self.min_size = min_size
        self.factor = factor
        self.thresholds = list(thresholds)

        # Load the frozen MTCNN graph
        graph = tf.Graph()
        with graph.as_default():
            with open(model_path, 'rb') as f:
                graph_def = tf1.GraphDef.FromString(f.read())
            tf1.import_graph_def(graph_def, name='')
        self.graph = graph

        config = tf1.ConfigProto(
            allow_soft_placement=True,
            intra_op_parallelism_threads=4,
            inter_op_parallelism_threads=4)
        config.gpu_options.allow_growth = True
        self.sess = tf1.Session(graph=graph, config=config)

        # Reference points for the alternative warp_and_crop_face alignment
        self.reference = get_reference_facial_points(default_square=True)

    # Face detection: returns boxes [y1, x1, y2, x2], landmarks
    # [y0..y4, x0..x4] and confidence scores
    def detect_faces(self, img):
        feeds = {
            self.graph.get_operation_by_name('input').outputs[0]: img,
            self.graph.get_operation_by_name('min_size').outputs[0]: self.min_size,
            self.graph.get_operation_by_name('thresholds').outputs[0]: self.thresholds,
            self.graph.get_operation_by_name('factor').outputs[0]: self.factor,
        }
        fetches = [self.graph.get_operation_by_name('prob').outputs[0],
                   self.graph.get_operation_by_name('landmarks').outputs[0],
                   self.graph.get_operation_by_name('box').outputs[0]]
        prob, landmarks, box = self.sess.run(fetches, feeds)
        return box, landmarks, prob

    # Align and crop a single face (the first detection) to 112x112
    def align_face(self, img):
        bbox, landmarks, prob = self.detect_faces(img)
        if bbox.shape[0] == 0:
            return None

        # Reorder landmarks from [y0..y4, x0..x4] to [x0..x4, y0..y4]
        landmarks = np.concatenate([landmarks[:, 5:10], landmarks[:, 0:5]], axis=1)
        # Reorder the box from [y1, x1, y2, x2] to [x1, y1, x2, y2]
        bbox = bbox[0, 0:4].astype(int)[[1, 0, 3, 2]]
        # (5, 2) array of (x, y) landmark points
        points = landmarks[0, :].reshape((2, 5)).T

        warped_face = face_preprocess.preprocess(img, bbox, points, image_size='112,112')

        # For a model that expects RGB, channels-first input:
        # warped_face = cv2.cvtColor(warped_face, cv2.COLOR_BGR2RGB)
        # aligned = np.transpose(warped_face, (2, 0, 1))
        return warped_face

    # Align and crop every detected face (optionally only the first `limit`)
    def align_multi_faces(self, img, limit=None):
        boxes, landmarks, _ = self.detect_faces(img)
        if limit:
            boxes = boxes[:limit]
            landmarks = landmarks[:limit]

        # Reorder landmarks to [x0..x4, y0..y4]
        landmarks = np.concatenate([landmarks[:, 5:10], landmarks[:, 0:5]], axis=1)

        faces = []
        for idx in range(len(landmarks)):
            # Alternative alignment with align_trans:
            # facial5points = [[landmarks[idx, j], landmarks[idx, j + 5]] for j in range(5)]
            # warped_face = warp_and_crop_face(np.array(img), facial5points,
            #                                  self.reference, crop_size=(112, 112))
            bbox = boxes[idx, 0:4].astype(int)[[1, 0, 3, 2]]
            points = landmarks[idx, :].reshape((2, 5)).T
            warped_face = face_preprocess.preprocess(img, bbox, points, image_size='112,112')
            faces.append(warped_face)

        return faces, boxes, landmarks
```
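`min_size` and `factor` are not used in Python; they are fed straight into the graph. In the standard MTCNN pipeline (for example FaceNet's `detect_face`) they define the image pyramid: the image is first scaled by `12 / min_size`, so that a face of `min_size` pixels fits P-Net's 12x12 window, and is then repeatedly shrunk by `factor` until its shorter side drops below 12 pixels. Assuming `mtcnn.pb` follows the same scheme (the post does not confirm this), the scales can be sketched as follows; `pyramid_scales` is a hypothetical name:

```python
def pyramid_scales(height, width, min_size=40, factor=0.709, window=12):
    """Scales of an MTCNN-style image pyramid (sketch, assuming the standard scheme)."""
    scale = window / min_size          # maps a min_size face onto the 12x12 window
    min_side = min(height, width) * scale
    scales = []
    while min_side >= window:          # stop once the image is smaller than the window
        scales.append(scale)
        scale *= factor
        min_side *= factor
    return scales


scales = pyramid_scales(480, 640)
print(len(scales), [round(s, 4) for s in scales])
```

For a 640x480 frame with the defaults this gives 8 scales. Raising `min_size` drops the largest scales and speeds up detection at the cost of missing small faces, while a `factor` closer to 1 adds more scales.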

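The post does not show `detection/face_preprocess.py`. If it is the InsightFace version (an assumption based on the `preprocess(img, bbox, points, image_size='112,112')` call), it estimates a similarity transform (rotation, uniform scale, translation) from the five detected landmarks to a fixed 112x112 template and then warps the image with that matrix. Below is a numpy-only sketch of that estimation using the Umeyama least-squares method; `estimate_similarity`, `REFERENCE_112` and the sample landmarks are hypothetical, and the template values are the widely used ArcFace points, not taken from this post:

```python
import numpy as np

# Assumed 112x112 template (left eye, right eye, nose tip, left and right mouth
# corners): the values commonly used for ArcFace/InsightFace alignment
REFERENCE_112 = np.array([
    [38.2946, 51.6963],
    [73.5318, 51.5014],
    [56.0252, 71.7366],
    [41.5493, 92.3655],
    [70.7299, 92.2041],
])


def estimate_similarity(src, dst):
    """Least-squares similarity transform (Umeyama) taking src points onto dst points.

    Returns a 2x3 matrix [s*R | t] in the layout cv2.warpAffine expects.
    """
    src = np.asarray(src, dtype=np.float64)
    dst = np.asarray(dst, dtype=np.float64)
    n = src.shape[0]
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    src_c, dst_c = src - mu_src, dst - mu_dst

    cov = dst_c.T @ src_c / n            # 2x2 cross-covariance
    U, S, Vt = np.linalg.svd(cov)
    d = np.ones(2)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        d[-1] = -1.0                     # force a rotation, not a reflection
    R = U @ np.diag(d) @ Vt

    var_src = (src_c ** 2).sum() / n
    scale = (S * d).sum() / var_src
    t = mu_dst - scale * R @ mu_src
    return np.hstack([scale * R, t[:, None]])


# Hypothetical detected landmarks (x, y) in a larger frame
landmarks = np.array([[210.0, 180.0], [270.0, 178.0], [240.0, 215.0],
                      [218.0, 250.0], [265.0, 248.0]])
M = estimate_similarity(landmarks, REFERENCE_112)
# The mapped landmarks land close to the template positions in the crop
mapped = landmarks @ M[:, :2].T + M[:, 2]
print(np.round(mapped, 1))
```

With OpenCV, the aligned crop would then be `cv2.warpAffine(img, M, (112, 112))`. A similarity transform, rather than a full affine one, keeps the face's proportions intact while normalizing its position, size and in-plane rotation.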

边栏推荐

- 为什么新手在编程社区提问经常得不到回答,甚至还会被嘲讽?

- Extraction rules and test objectives of performance test points

- PG基础篇--逻辑结构管理(事务)

- Leetcode question 448 Find all missing numbers in the array

- JS implementation force deduction 71 question simplified path

- Special topic of rotor position estimation of permanent magnet synchronous motor -- fundamental wave model and rotor position angle

- 逻辑是个好东西

- 1500萬員工輕松管理,雲原生數據庫GaussDB讓HR辦公更高效

- (work record) March 11, 2020 to March 15, 2021

- Catch ball game 1

猜你喜欢

##无yum源安装spug监控

![[weekly pit] information encryption + [answer] positive integer factorization prime factor](/img/d8/a367c26b51d9dbaf53bf4fe2a13917.png)

[weekly pit] information encryption + [answer] positive integer factorization prime factor

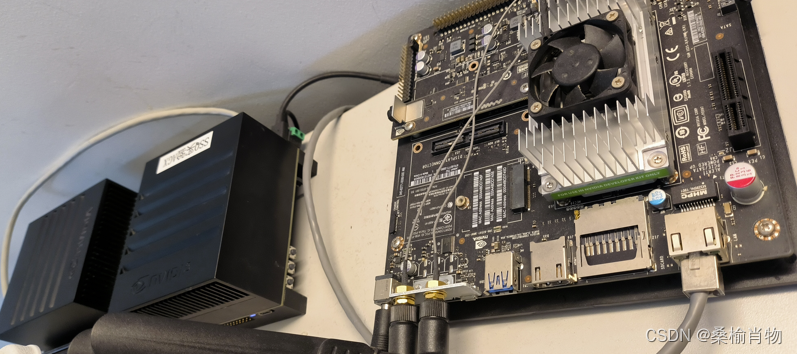

使用.Net驱动Jetson Nano的OLED显示屏

小孩子学什么编程?

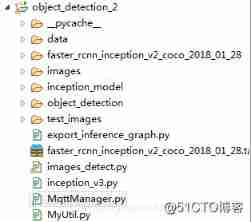

Build your own application based on Google's open source tensorflow object detection API video object recognition system (IV)

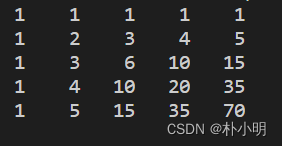

棋盘左上角到右下角方案数(2)

Use of OLED screen

02 basic introduction - data package expansion

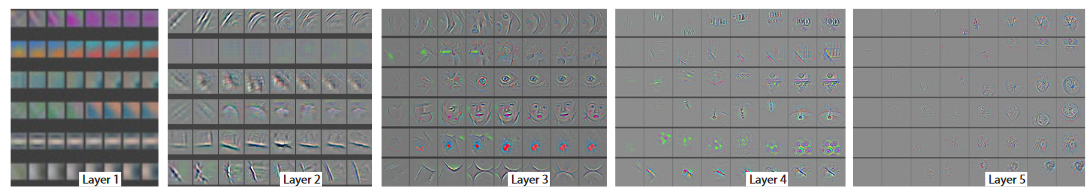

Deep learning classification network -- zfnet

Rhcsa Road

随机推荐

What key progress has been made in deep learning in 2021?

知识图谱之实体对齐二

Pinduoduo lost the lawsuit, and the case of bargain price difference of 0.9% was sentenced; Wechat internal test, the same mobile phone number can register two account functions; 2022 fields Awards an

Common doubts about the introduction of APS by enterprises

Yyds dry goods count re comb this of arrow function

全网最全的新型数据库、多维表格平台盘点 Notion、FlowUs、Airtable、SeaTable、维格表 Vika、飞书多维表格、黑帕云、织信 Informat、语雀

7、数据权限注解

2022 nurse (primary) examination questions and new nurse (primary) examination questions

C language operators

Pycharm remote execution

强化学习-学习笔记5 | AlphaGo

Notes on beagleboneblack

Trends of "software" in robotics Engineering

Intel 48 core new Xeon run point exposure: unexpected results against AMD zen3 in 3D cache

Build your own application based on Google's open source tensorflow object detection API video object recognition system (IV)

SSO single sign on

Quel genre de programmation les enfants apprennent - ils?

APS taps home appliance industry into new growth points

Use of OLED screen

Tencent T4 architect, Android interview Foundation