Four Redis Caching Patterns

2022-07-04 15:15:00 【1024 questions】

Overview

Selection of caching strategy

Cache Aside

Read Through

Write Through

Write-Behind

Summary

Overview

In system architecture, caching is one of the easiest ways to improve system performance. Anyone with a little development experience will inevitably have worked with caching, or at least tried it out.

Used properly, caching can reduce response time, lower database load, and save cost. Used improperly, it can cause problems that are hard to explain.

Different scenarios call for different caching strategies. If, in your experience, caching has only ever meant simple query and update operations, this article is worth your time.

This article systematically explains four caching patterns, together with their usage scenarios, workflows, and advantages and disadvantages.

Selection of caching strategy

In essence, the choice of caching strategy depends on the data and its access pattern. In other words, it depends on how the data is written and read.

For example:

Does the system write a lot and read little? (For example, time-based logging.)

Is the data written once and read many times? (For example, user profiles.)

Is the returned data always unique? (For example, search.)

Choosing the right caching strategy is the key to improving performance.

There are four common caching strategies:

Cache-Aside pattern: the cache sits beside the database (side cache)

Read-Through cache pattern: reads pass through the cache

Write-Through cache pattern: writes pass through the cache

Write-Behind pattern: also called Write-Back; cache writes reach the database asynchronously

The strategies above are divided according to how data is read and written. Under some strategies the application interacts only with the cache; under others it interacts with both the cache and the database. Because this is an important dimension of the classification, pay special attention to it in the workflows that follow.

Cache Aside

Cache Aside is the most common caching pattern. The application talks directly to both the cache and the database. Cache Aside can be used for both read and write operations.

[Figure: flow chart of the read operation]

The read process:

The application receives a data query (read) request;

The application checks whether the requested data is in the cache. If it is (cache hit), the data is read from the cache and returned directly;

If it is not (cache miss), the data is retrieved from the database, stored in the cache, and returned.

Note the operation boundary here: both the database and the cache are operated on directly by the application.
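The read steps above can be sketched as follows. This is a minimal illustration, not a production implementation: plain dictionaries stand in for Redis and the database so that the example runs anywhere, and the names `cache`, `database`, and `read` are assumptions made for this sketch. With a real Redis client the dictionary operations would become GET and SET commands.

```python
from typing import Dict, Optional

# Assumption: plain dicts stand in for Redis and the database.
cache: Dict[str, str] = {}
database: Dict[str, str] = {"user:1": "Alice"}


def read(key: str) -> Optional[str]:
    """Cache-Aside read: the application itself talks to both stores."""
    value = cache.get(key)
    if value is not None:
        return value               # cache hit: return straight from the cache
    value = database.get(key)      # cache miss: fall back to the database
    if value is not None:
        cache[key] = value         # populate the cache for later requests
    return value
```

After the first call for a key, later calls are served from `cache` and no longer touch `database`.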

[Figure: flow chart of the write operation]

Write operations here include create, update, and delete. On a write, the Cache Aside pattern updates the database first (insert, delete, or update) and then deletes the cache entry directly.
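A minimal sketch of that write path, again with dictionaries standing in for Redis and the database. The function name `write` and the convention that `None` means "delete" are assumptions made for this illustration.

```python
from typing import Dict, Optional

# Assumption: plain dicts stand in for Redis and the database.
cache: Dict[str, str] = {"user:1": "Alice"}
database: Dict[str, str] = {"user:1": "Alice"}


def write(key: str, value: Optional[str]) -> None:
    """Cache-Aside write: change the database first, then delete the cache entry."""
    if value is None:
        database.pop(key, None)    # delete
    else:
        database[key] = value      # create or update
    cache.pop(key, None)           # invalidate; the next read reloads the entry
```

Deleting the entry rather than rewriting it leaves the next read to repopulate the cache from the database.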

The Cache Aside pattern fits most scenarios. To handle different types of data, there are usually two strategies for loading the cache:

Load on use: when cached data is needed, it is queried from the database; after that first query, subsequent requests get the data from the cache;

Preload: the program loads the cache at or after project startup, for data that does not change often, such as country information, currency information, user information, and news.
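The preload strategy can be sketched as a small startup routine. The key names and the `preload` function are hypothetical, and dictionaries again stand in for Redis and the database.

```python
from typing import Dict, Iterable

# Assumption: plain dicts stand in for Redis and the database.
cache: Dict[str, str] = {}
database: Dict[str, str] = {
    "country:CN": "China",
    "currency:USD": "US Dollar",
}


def preload(keys: Iterable[str]) -> int:
    """Copy rarely changing rows into the cache at startup; return how many were loaded."""
    loaded = 0
    for key in keys:
        if key in database:
            cache[key] = database[key]
            loaded += 1
    return loaded
```

A service would call `preload` once during startup with the keys it expects to be read often.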

Cache Aside suits read-heavy, write-light workloads, such as user information and news reports, which are almost never modified once written to the cache. The disadvantage of this pattern is that the double write to cache and database may leave the two inconsistent.

Cache Aside is also a standard pattern; Facebook, for example, uses it.

Read Through

Read-Through is very similar to Cache-Aside. The difference is that the program does not need to care where the data is read from (cache or database); it only reads from the cache. Where the cached data comes from is decided by the cache itself.

With Cache Aside, the caller is responsible for loading data into the cache, whereas with Read Through the cache service itself does the loading, so it is transparent to the application. The advantage of Read-Through is more concise program code.

This involves the application operation boundary mentioned above:

[Figure: Read-Through flow chart; a dotted box marks the operations handled by the cache]

In the flow chart, focus on the operations in the dotted box: they are no longer handled by the application but by the cache itself. In other words, when the application queries data from the cache and the data does not exist, the cache loads it and then returns the result to the application.
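The shift in responsibility can be sketched with a small wrapper. `ReadThroughCache` and `load_from_db` are hypothetical names for this illustration; the point is only that the application calls `get` and never touches the database itself.

```python
from typing import Callable, Dict, List, Optional


class ReadThroughCache:
    """Hypothetical cache layer that loads missing entries by itself."""

    def __init__(self, loader: Callable[[str], Optional[str]]) -> None:
        self._store: Dict[str, str] = {}
        self._loader = loader      # supplied once, when the cache is configured

    def get(self, key: str) -> Optional[str]:
        if key in self._store:
            return self._store[key]        # hit
        value = self._loader(key)          # miss: the cache, not the caller, loads
        if value is not None:
            self._store[key] = value
        return value


# Assumption: a dict stands in for the database.
database: Dict[str, str] = {"user:1": "Alice"}
loader_calls: List[str] = []


def load_from_db(key: str) -> Optional[str]:
    loader_calls.append(key)       # recorded only to show how often the database is hit
    return database.get(key)


users = ReadThroughCache(load_from_db)
```

Calling `users.get("user:1")` twice invokes the loader only once; the second call is answered from the cache.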

Write Through

In Cache Aside, the application has to maintain two data stores: a cache and a database. For the application, this is somewhat cumbersome.

In Write-Through mode, all writes go through the cache. Each time data is written to the cache, the cache persists it to the corresponding database, and the two operations are completed in one transaction. The write therefore succeeds only if both writes succeed. The downside is write latency; the benefit is data consistency.

In other words, the application sees the back end as a single store, and that store maintains its own cache.

Because the program interacts only with the cache, the code becomes simpler and cleaner, which is especially noticeable when the same logic has to be reused in several places.
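A minimal sketch of the Write-Through idea, assuming a hypothetical `WriteThroughCache` wrapper and a dictionary in place of the database. A real transaction is approximated here by undoing the cache write when the database write fails, so the two writes succeed or fail together.

```python
from typing import Dict, MutableMapping, Optional


class WriteThroughCache:
    """Hypothetical cache layer that owns every write to the database."""

    def __init__(self, database: MutableMapping[str, str]) -> None:
        self._store: Dict[str, str] = {}
        self._db = database

    def put(self, key: str, value: str) -> None:
        had_old = key in self._store
        old = self._store.get(key)
        self._store[key] = value           # 1. write the cache
        try:
            self._db[key] = value          # 2. persist synchronously
        except Exception:
            # Undo the cache write so both writes succeed or fail together.
            if had_old:
                self._store[key] = old
            else:
                del self._store[key]
            raise

    def get(self, key: str) -> Optional[str]:
        return self._store.get(key)


# Assumption: a dict stands in for the database.
database: Dict[str, str] = {}
accounts = WriteThroughCache(database)
accounts.put("account:1", "100")
```

The caller waits for both writes on every `put`, which is the write latency the pattern trades for consistency.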

Write-Through is generally used together with Read-Through. A potential use case for Write-Through is a banking system.

Write-Through is suitable when:

You need to read the same data frequently

You cannot tolerate data loss (compared with Write-Behind) or data inconsistency

When using Write-Through, pay special attention to managing cache validity; otherwise a large amount of cached data will occupy memory, and valid cache entries may even be evicted by stale ones.

Write-Behind

Write-Behind and Write-Through are very similar in that the program interacts only with the cache and can write data only through the cache. The difference is that Write-Through writes the data to the database immediately, whereas Write-Behind writes the data to the database in a batch after a while (or when triggered in some other way). This asynchronous write is the defining feature of Write-Behind.

Database writes can be done in different ways. One way is to collect all write operations and write them in a batch at a certain point in time (for example, when the database load is low). Another way is to merge several write operations into a small batch, which the cache then collects and writes together.

Asynchronous writes greatly reduce request latency and relieve the database. They also amplify data inconsistency. For example, if someone queries the database directly before the updated data has been written to it, the result is not the latest data.
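The batching and the resulting inconsistency window can be sketched as follows. `WriteBehindCache` and its `batch_size` parameter are hypothetical; a real implementation would typically flush from a timer or background worker, whereas this sketch flushes when enough distinct keys are pending or when `flush` is called explicitly.

```python
from typing import Dict, Optional


class WriteBehindCache:
    """Hypothetical cache layer that defers database writes and batches them."""

    def __init__(self, database: Dict[str, str], batch_size: int = 3) -> None:
        self._store: Dict[str, str] = {}
        self._pending: Dict[str, str] = {}   # writes not yet in the database
        self._db = database
        self._batch_size = batch_size

    def put(self, key: str, value: str) -> None:
        self._store[key] = value
        self._pending[key] = value           # repeated writes to one key are merged
        if len(self._pending) >= self._batch_size:
            self.flush()

    def get(self, key: str) -> Optional[str]:
        return self._store.get(key)

    def flush(self) -> int:
        """Write all pending entries in one batch; return how many were written."""
        count = len(self._pending)
        self._db.update(self._pending)
        self._pending.clear()
        return count


# Assumption: a dict stands in for the database.
database: Dict[str, str] = {}
counters = WriteBehindCache(database, batch_size=3)
counters.put("views:1", "10")
counters.put("views:1", "11")   # merged with the previous pending write
```

At this point the cache returns "11" for `views:1` while `database` is still empty, which is exactly the window in which a direct database query sees stale data.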

Summary

Different caching patterns have different considerations and characteristics. Choose the pattern that fits the application's requirements and scenarios; in practice, several patterns are often combined.

This is about Redis Of 4 This is the end of the article on sharing caching modes , More about Redis Please search the previous articles of SDN or continue to browse the related articles below. I hope you will support SDN more in the future !

边栏推荐

- 压力、焦虑还是抑郁? 正确诊断再治疗

- Summer Review, we must avoid stepping on these holes!

- LeetCode 58. 最后一个单词的长度

- Guitar Pro 8win10最新版吉他学习 / 打谱 / 创作

- 暑期复习,一定要避免踩这些坑!

- MP3是如何诞生的?

- What are the concepts of union, intersection, difference and complement?

- Partial modification - progressive development

- 怎么判断外盘期货平台正规,资金安全?

- LNX efficient search engine, fastdeploy reasoning deployment toolbox, AI frontier paper | showmeai information daily # 07.04

猜你喜欢

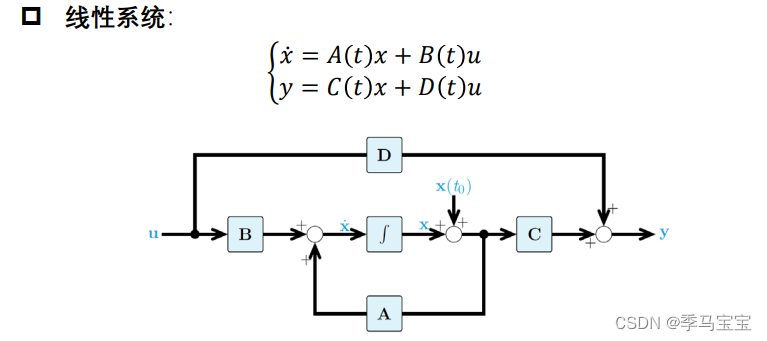

Introduction to modern control theory + understanding

Halcon knowledge: NCC_ Model template matching

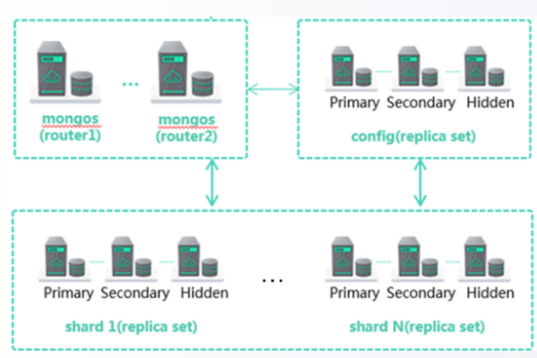

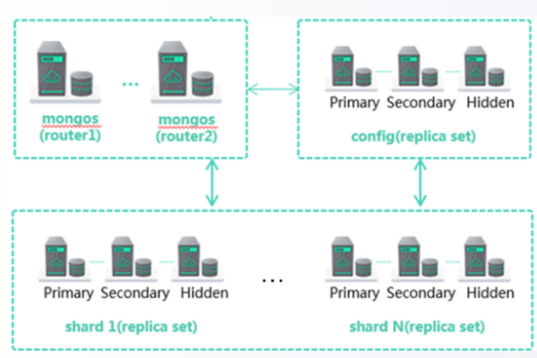

华为云数据库DDS产品深度赋能

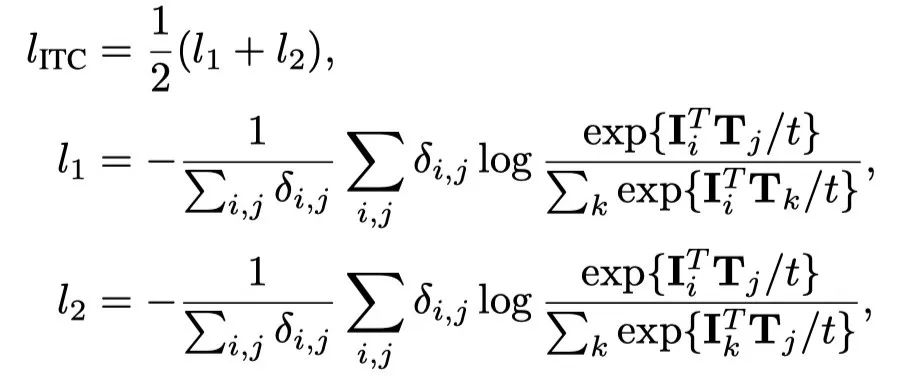

UFO: Microsoft scholars have proposed a unified transformer for visual language representation learning to achieve SOTA performance on multiple multimodal tasks

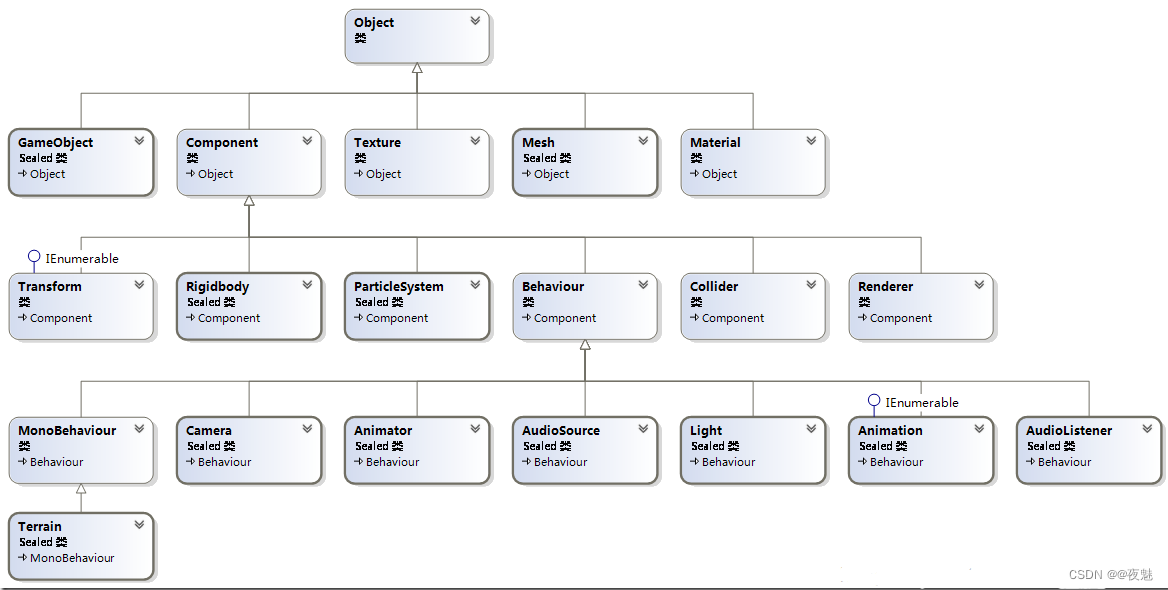

Unity脚本常用API Day03

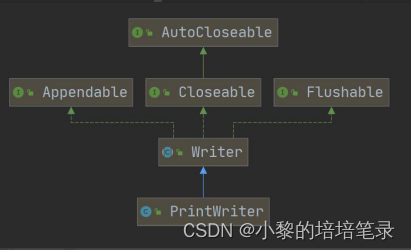

IO流:节点流和处理流详细归纳。

Huawei cloud database DDS products are deeply enabled

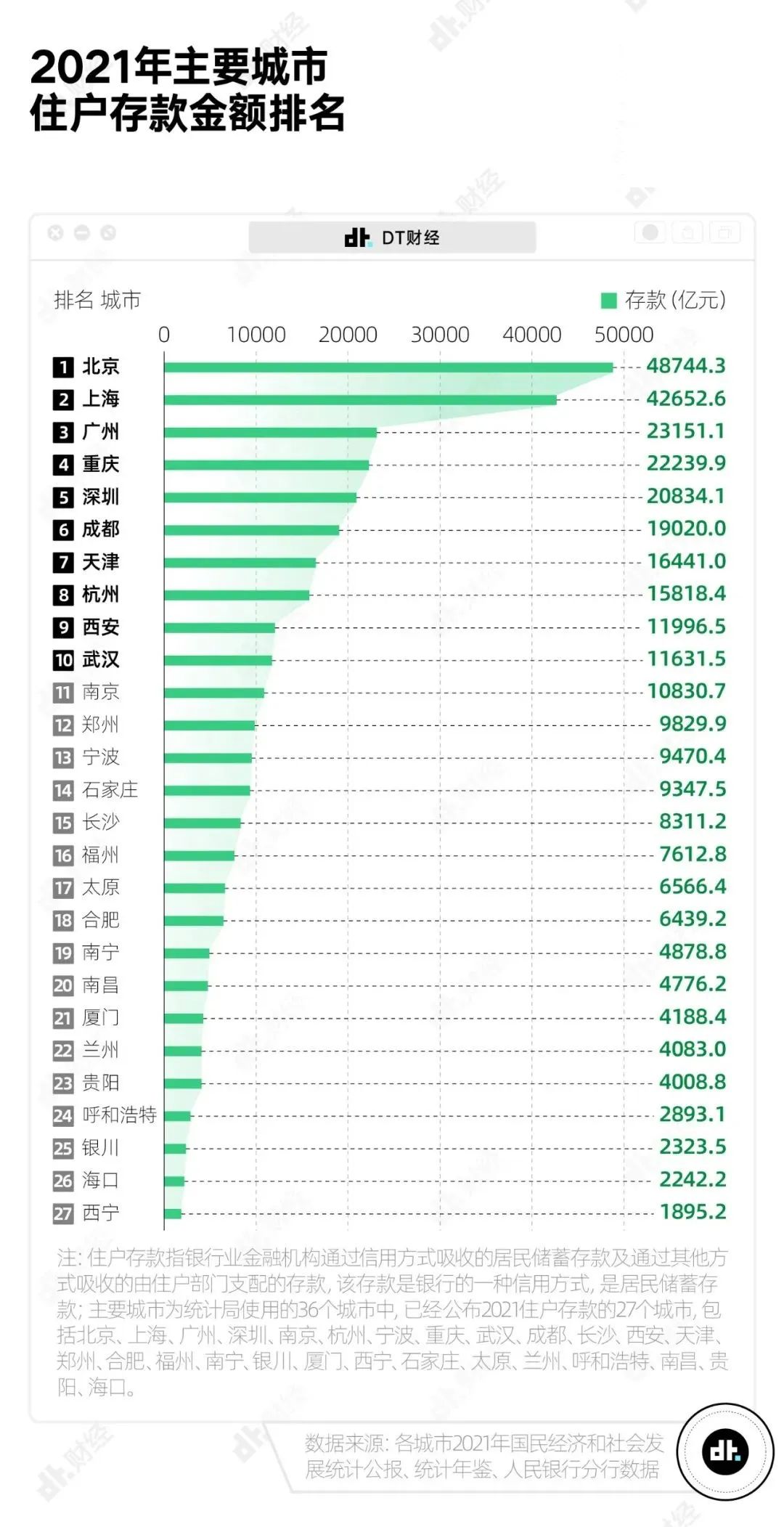

中国主要城市人均存款出炉,你达标了吗?

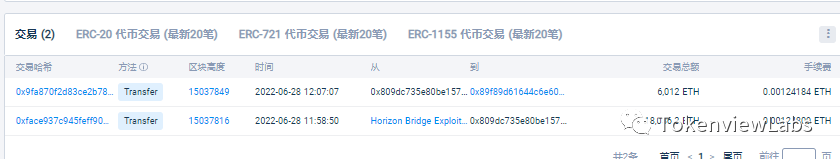

近一亿美元失窃,Horizon跨链桥被攻击事件分析

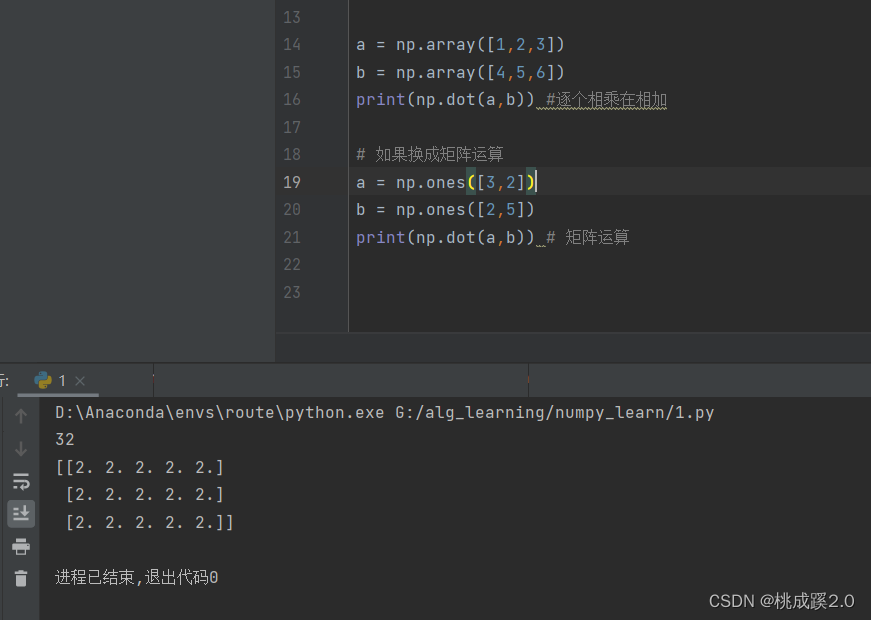

numpy笔记

随机推荐

暑期复习,一定要避免踩这些坑!

【读书会第十三期】FFmpeg 查看媒体信息和处理音视频文件的常用方法

LeetCode 1200 最小絕對差[排序] HERODING的LeetCode之路

夜天之书 #53 Apache 开源社群的“石头汤”

Numpy notes

flutter 报错 No MediaQuery widget ancestor found.

Unity脚本常用API Day03

干货 | fMRI标准报告指南新鲜出炉啦,快来涨知识吧

Unity脚本API—Component组件

浮点数如何与0进行比较?

lnx 高效搜索引擎、FastDeploy 推理部署工具箱、AI前沿论文 | ShowMeAI资讯日报 #07.04

一篇文章搞懂Go语言中的Context

TechSmith Camtasia studio 2022.0.2 screen recording software

Building intelligent gray-scale data system from 0 to 1: Taking vivo game center as an example

数据库函数的用法「建议收藏」

go-zero微服务实战系列(九、极致优化秒杀性能)

Weekly recruitment | senior DBA annual salary 49+, the more opportunities, the closer success!

Is BigDecimal safe to calculate the amount? Look at these five pits~~

近一亿美元失窃,Horizon跨链桥被攻击事件分析

I plan to teach myself some programming and want to work as a part-time programmer. I want to ask which programmer has a simple part-time platform list and doesn't investigate the degree of the receiv