当前位置:网站首页>2019 Alibaba cluster dataset Usage Summary

2019 Alibaba cluster dataset Usage Summary

2022-07-06 18:03:00 【5-StarrySky】

2019 Summary of Alibaba cluster dataset usage

Some details in the dataset

In the offline load instance table (batch_instance) in , There is a problem with the start time and end time of the instance , If you check carefully , You will find that the start time of the instance is actually larger than the end time of the instance , So the subtraction is negative , At the beginning, I adjusted BUG Why didn't you find it , I know that one day I noticed that my workload was negative , I just found out what happened , Here is also the definition of my task quantity :

cloudlet_total = self.cpu_avg * (self.finished_time_data_set - self.start_time_data_set) cloudmem_total = self.mem_avg * (self.finished_time_data_set - self.start_time_data_set)The second pit , It's up there CPU The number of , For offline loads ,instance Inside CPU The quantity is multiplied by 100 The number of , So you need to be standardized , Because in the machine node CPU The quantity of is not divided by 100 Of , Namely 96, Empathy container_mate Of CPU Quantity also needs attention , If it is 400 And so on. , You need to divide by 100

Create a JOB and APP

# Put together APP One APP Include multiple containers

def create_app(self):

container_dao = ContainerDao(self.a)

container_data_list = container_dao.get_container_list()

container_data_groupby_list = container_dao.get_container_data_groupby()

for app_du,count in container_data_groupby_list:

self.app_num += 1

num = 0

app = Apps(app_du)

index_temp = -1

for container_data in container_data_list:

if container_data.app_du == app_du:

index_temp += 1

container = Container(app_du)

container.set_container(container_data)

container.index = index_temp

app.add_container(container)

num += 1

if num == count:

# print(app.app_du + "")

# print(num)

app.set_container_num(num)

self.App_list.append(app)

break

# Create JOB

def create_job(self):

instance_dao = InstanceDao(self.b)

task_dao = TaskDao(self.b)

task_data_list = task_dao.get_task_list()

job_group_by = task_dao.get_job_group_by()

instance_data_list = instance_dao.get_instance_list()

# instance_data_list = instance_dao.get_instance_list()

for job_name,count in job_group_by:

self.job_num += 1

job = Job(job_name)

for task_data in task_data_list:

if task_data.job_name == job_name:

task = Task(job_name)

task.set_task(task_data)

for instance_data in instance_data_list:

if instance_data.job_name == job_name and instance_data.task_name == task_data.task_name:

instance = Instance(task_data.task_name,job_name)

instance.set_instance(instance_data)

task.add_instance(instance)

pass

pass

task.prepared()

job.add_task(task)

pass

# The following statement can be deleted , Removing means removing DAG Relationship

job.DAG()

self.job_list.append(job)

# establish DAG Relationship

def DAG(self):

for task in self.task_list:

str_list = task.get_task_name().replace(task.get_task_name()[0],"")

num_list = str_list.split('_')

# Serial number of the current task M1

start = num_list[0]

del num_list[0]

# The back points to the front M2_1 1 Point to 2 1 yes 2 The precursor of 2 yes 1 In the subsequent

for task_1 in self.task_list:

str_list_1 = task_1.get_task_name().replace(task_1.get_task_name()[0], "")

num_list_1 = str_list_1.split('_')

del num_list_1[0]

# Current task

for num in num_list_1:

if num == start:

# Save successors

task.add_subsequent(task_1.get_task_name())

# Save precursor

task_1.add_precursor(task.get_task_name())

# task_1.add_subsequent(task.get_task_name())

pass

pass

pass

pass

pass

How to read data and create corresponding instances

import pandas as pd

from data.containerData import ContainerData

""" Read container data in data set attribute : a: Choose which dataset , = 1 : Represents a dataset 1 Containers 124 individual Yes 20 Group = 2 : Represents a dataset 2 Containers 500 individual Yes 66 Group = 3 : Represents a dataset 3 Containers 1002 individual Yes 122 Group """

# Here is just contaienr For example

class ContainerDao:

def __init__(self, a):

# Row number

self.a = a

# Read the container data from the data set , And deposit in contaianer_list

def get_container_list(self):

# Extract data from a dataset : 0 Containers ID 1 machine ID 2 Time stamp 3 Deployment domain 4 state 5 Needed cpu Number 6cpu Limited quantity 7 Memory size

columns = ['container_id','time_stamp','app_du','status','cpu_request','cpu_limit','mem_size','container_type','finished_time']

filepath_or_buffer = "G:\\experiment\\data_set\\container_meta\\app_"+str(self.a)+".csv"

container_dao = pd.read_csv(filepath_or_buffer, names=columns)

temp_list = container_dao.values.tolist()

# print(temp_list)

container_data_list = list()

for data in temp_list:

# print(data)

temp_container = ContainerData()

temp_container.set_container_data(data)

container_data_list.append(temp_container)

# print(temp_container)

return container_data_list

def get_container_data_groupby(self):

""" columns = ['container_id', 'machine_id ', 'time_stamp', 'app_du', 'status', 'cpu_request', 'cpu_limit', 'mem_size'] filepath_or_buffer = "D:\\experiment\\data_set\\container_meta\\app_" + str(self.a) + ".csv" container_dao = pd.read_csv(filepath_or_buffer, names=columns) temp_container_dao = container_dao temp_container_dao['count'] = 0 temp_container_dao.groupby(['app_du']).count()['count'] :return: """

temp_filepath = "G:\\experiment\\data_set\\container_meta\\app_" + str(self.a) + "_groupby.csv"

container_dao_groupby = pd.read_csv(temp_filepath)

container_data_groupby_list = container_dao_groupby.values.tolist()

return container_data_groupby_list

Corresponding container_data class :

class ContainerData:

def __init__(self):

pass

# Containers in data sets ID

def set_container_id(self, container_id):

self.container_id = container_id

# Machines in data sets ID

def set_machine_id(self, machine_id):

self.machine_id = machine_id

# Deployment container group in data set ( Used to build clusters )

def set_deploy_unit(self, app_du):

self.app_du = app_du

# Timestamp in data set

def set_time_stamp(self, time_stamp):

self.time_stamp = time_stamp

# In dataset cpu Limit requests

def set_cpu_limit(self, cpu_limit):

self.cpu_limit = cpu_limit

# In dataset cpu request

def set_cpu_request(self, cpu_request):

self.cpu_request = cpu_request

# The size of memory requested in the dataset

def set_mem_size(self, mem_size):

self.mem_request = mem_size

# Container status in the dataset

def set_state(self, state):

self.state = state

pass

def set_container_type(self,container_type):

self.container_type = container_type

pass

def set_finished_time(self,finished_time):

self.finished_time = finished_time

# Initialize objects with datasets

def set_container_data(self, data):

# Containers ID

self.set_container_id(data[0])

# self.set_machine_id(data[1])

# Container timestamp ( Arrival time )

self.set_time_stamp(data[1])

# Container group

self.set_deploy_unit(data[2])

# state

self.set_state(data[3])

# cpu demand

self.set_cpu_request(data[4])

# cpu Limit

self.set_cpu_limit(data[5])

# Memory size

self.set_mem_size(data[6])

# Container type

self.set_container_type(data[7])

# Completion time

self.set_finished_time(data[8])

Last

边栏推荐

- I want to say more about this communication failure

- IP, subnet mask, gateway, default gateway

- 二分(整数二分、实数二分)

- Jielizhi obtains the currently used dial information [chapter]

- Interview shock 62: what are the precautions for group by?

- 偷窃他人漏洞报告变卖成副业,漏洞赏金平台出“内鬼”

- OpenCV中如何使用滚动条动态调整参数

- Transfer data to event object in wechat applet

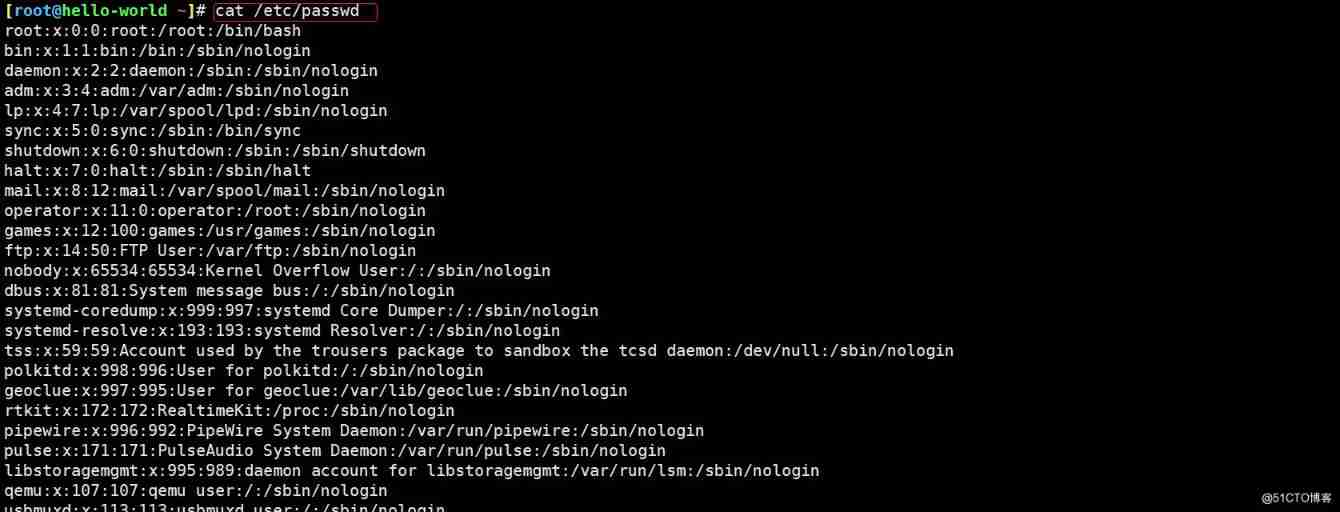

- Running the service with systemctl in the container reports an error: failed to get D-Bus connection: operation not permitted (solution)

- 带你穿越古罗马,元宇宙巴士来啦 #Invisible Cities

猜你喜欢

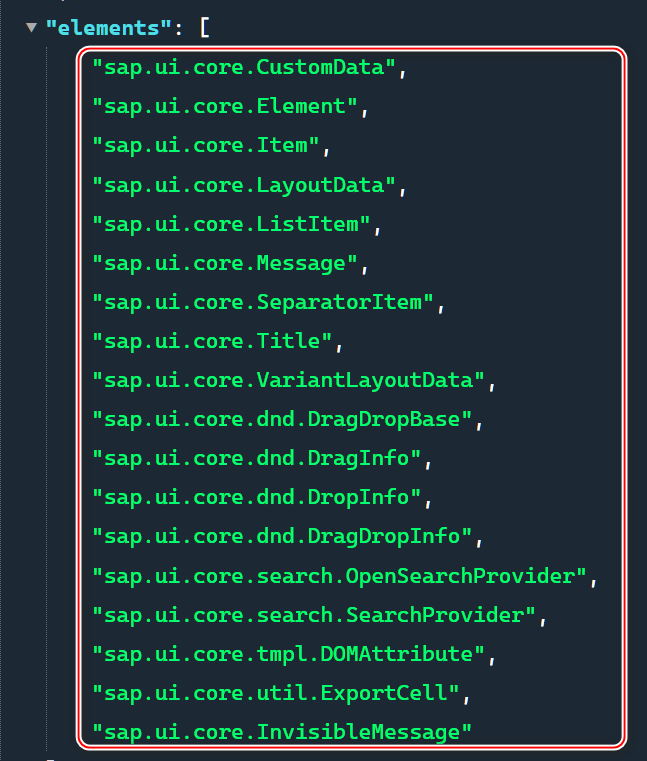

Manifest of SAP ui5 framework json

![Jerry's updated equipment resource document [chapter]](/img/6c/17bd69b34c7b1bae32604977f6bc48.jpg)

Jerry's updated equipment resource document [chapter]

一体化实时 HTAP 数据库 StoneDB,如何替换 MySQL 并实现近百倍性能提升

Awk command exercise

![Jerry's access to additional information on the dial [article]](/img/a1/28b2a5f7c16cbcde1625a796f0d188.jpg)

Jerry's access to additional information on the dial [article]

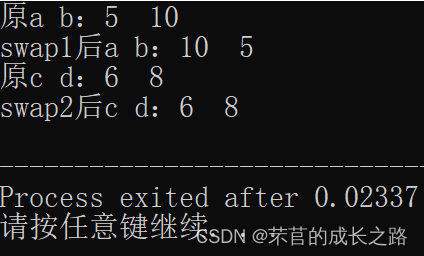

C语言通过指针交换两个数

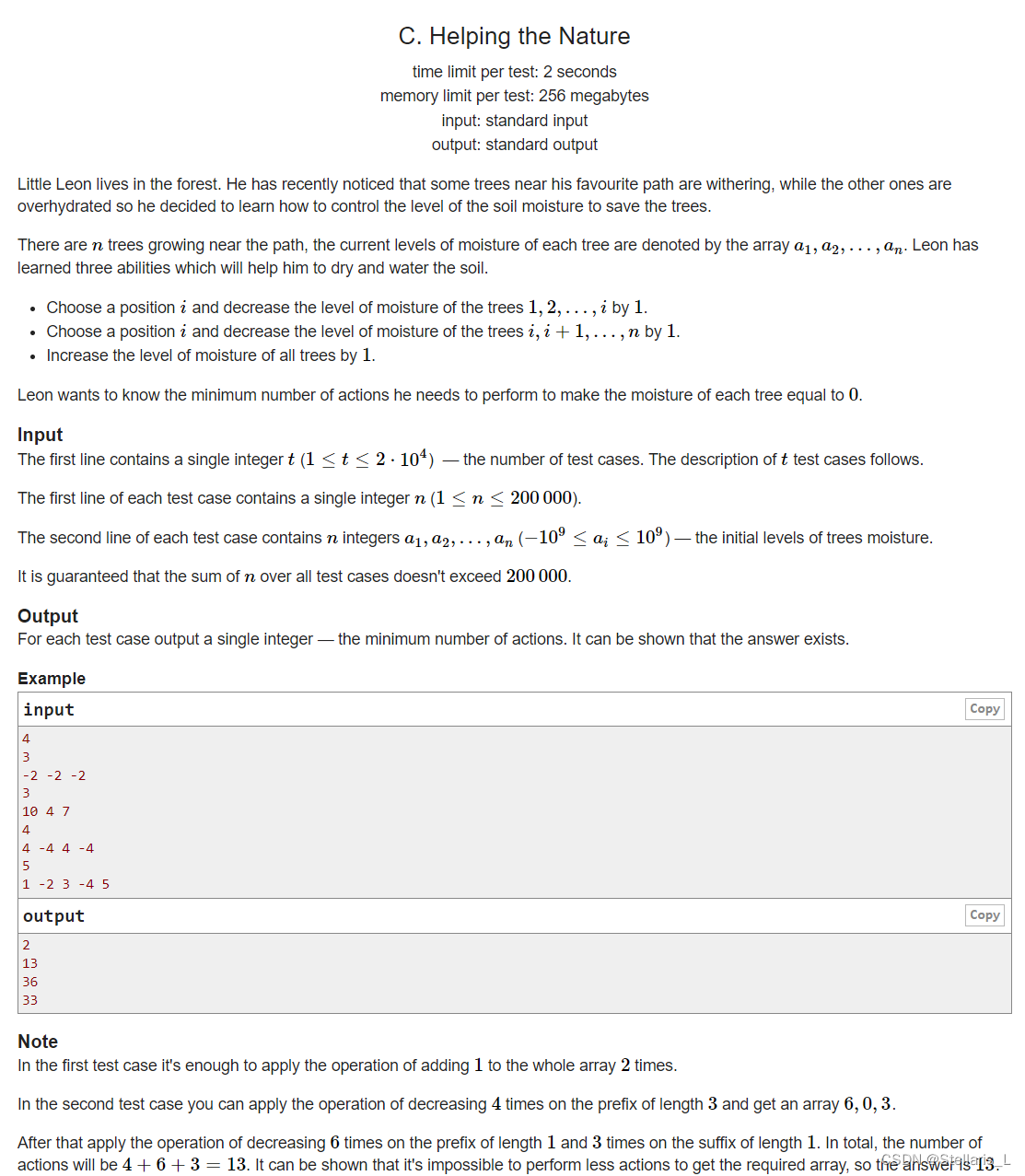

1700C - Helping the Nature

李书福为何要亲自挂帅造手机?

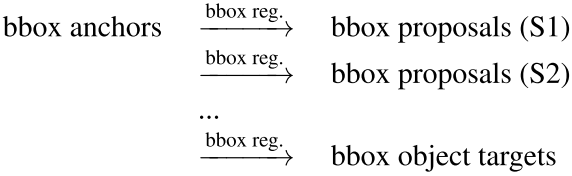

RepPoints:可形变卷积的进阶

declval(指导函数返回值范例)

随机推荐

sql语句优化,order by desc速度优化

Jielizhi obtains the currently used dial information [chapter]

Video fusion cloud platform easycvr adds multi-level grouping, which can flexibly manage access devices

78 year old professor Huake has been chasing dreams for 40 years, and the domestic database reaches dreams to sprint for IPO

李书福为何要亲自挂帅造手机?

Growth of operation and maintenance Xiaobai - week 7

【Android】Kotlin代码编写规范化文档

Spark accumulator and broadcast variables and beginners of sparksql

What is the reason why the video cannot be played normally after the easycvr access device turns on the audio?

酷雷曼多种AI数字人形象,打造科技感VR虚拟展厅

Jerry's watch reading setting status [chapter]

OpenCV中如何使用滚动条动态调整参数

在一台服务器上部署多个EasyCVR出现报错“Press any to exit”,如何解决?

How to use scroll bars to dynamically adjust parameters in opencv

Getting started with pytest ----- test case pre post, firmware

Shell input a string of numbers to determine whether it is a mobile phone number

Today in history: the mother of Google was born; Two Turing Award pioneers born on the same day

[introduction to MySQL] the first sentence · first time in the "database" Mainland

Kivy tutorial: support Chinese in Kivy to build cross platform applications (tutorial includes source code)

Optimization of middle alignment of loading style of device player in easycvr electronic map