
MapReduce Example (10): ChainMapReduce

2022-07-06 09:01:00 【笑看风云路】

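Hi everyone, I'm 风云. You are welcome to follow my blog or my WeChat official account 【笑看风云路】. Let's learn big data technologies together in the days ahead, keep working hard, and become better versions of ourselves!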

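How It Works

Some complex tasks are hard to complete in a single MapReduce pass and need several MapReduce steps.

Chained processing

Starting with Hadoop 2.0, MapReduce jobs support chained processing. It resembles a factory production line in which each stage has a specific task, for example supplying parts -> assembly -> printing the production date. Dividing the work this way improves throughput, and chained MapReduce in Hadoop follows the same idea: the Mappers process records stage by stage like a stream, much like a Linux pipe. The output of one Mapper becomes the input of the next directly, forming a pipeline.

Execution rule for a single chained job (ChainMapper/ChainReducer): the whole Job can have only one Reducer; one or more Mappers may run before the Reducer, and zero or more Mappers may run after it.

Hadoop 2.0 supports the following three kinds of chained MapReduce jobs:

(1) Sequentially chained MapReduce jobs

This is similar to a Unix pipe: mapreduce-1 | mapreduce-2 | mapreduce-3 ... A job is created for each stage, and its input path is set to the previous stage's output path. In the final stage, the intermediate data generated along the chain is deleted.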
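As a rough, Hadoop-free analogy of the sequential pattern, the sketch below runs a list of stages in order, has each stage read the file written by the previous one, and deletes the intermediate files at the end. The class name `SequentialStages`, the stage functions, and the file names are made up for illustration; in a real driver each stage would be a separate job whose input path is the previous job's output path.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.function.UnaryOperator;
import java.util.stream.Collectors;

final class SequentialStages {

    /** Runs the stages in order; stage i reads the file written by stage i-1. */
    static List<String> run(List<String> input, List<UnaryOperator<List<String>>> stages) {
        try {
            Path workDir = Files.createTempDirectory("chain-");
            List<Path> intermediates = new ArrayList<>();
            Path current = workDir.resolve("stage-0.txt");
            Files.write(current, input);
            intermediates.add(current);
            for (int i = 0; i < stages.size(); i++) {
                // The input of this stage is the output of the previous one.
                Path next = workDir.resolve("stage-" + (i + 1) + ".txt");
                Files.write(next, stages.get(i).apply(Files.readAllLines(current)));
                intermediates.add(next);
                current = next;
            }
            // The final result is returned in memory, so every file here is intermediate data.
            List<String> result = Files.readAllLines(current);
            for (Path p : intermediates) {
                Files.deleteIfExists(p);
            }
            Files.deleteIfExists(workDir);
            return result;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Hypothetical three-stage chain: trim, drop empty lines, upper-case. */
    static List<String> demo() {
        List<UnaryOperator<List<String>>> stages = List.of(
            lines -> lines.stream().map(String::trim).collect(Collectors.toList()),
            lines -> lines.stream().filter(s -> !s.isEmpty()).collect(Collectors.toList()),
            lines -> lines.stream().map(String::toUpperCase).collect(Collectors.toList()));
        return run(List.of(" a ", "", "b"), stages);
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints [A, B]
    }
}
```

The cost this pattern pays is visible even in the toy version: every stage boundary is a full write and re-read of the data, which is what the ChainMapper/ChainReducer pattern avoids for simple per-record steps.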

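(2) MapReduce chains with complex dependencies

Suppose mapreduce-1 processes one dataset, mapreduce-2 processes another, and mapreduce-3 performs an inner join on the two results. Non-linear dependencies like this are managed with the Job and JobControl classes. For example, x.addDependingJob(y) means that x will not start until y has completed.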
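To make the dependency rule concrete, here is a minimal plain-Java sketch of the idea behind `x.addDependingJob(y)`. It is not the Hadoop JobControl implementation; `MiniJobControl` and its methods are hypothetical names that only mirror the semantics described above (a job becomes runnable once everything it depends on has finished).

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

final class MiniJobControl {
    // job name -> names of the jobs it must wait for
    private final Map<String, Set<String>> dependencies = new LinkedHashMap<>();

    void addJob(String name) {
        dependencies.putIfAbsent(name, new LinkedHashSet<>());
    }

    /** Same meaning as x.addDependingJob(y): x will not start until y has finished. */
    void addDependingJob(String x, String y) {
        addJob(x);
        addJob(y);
        dependencies.get(x).add(y);
    }

    /** Returns one valid execution order, or throws if the dependencies form a cycle. */
    List<String> executionOrder() {
        List<String> order = new ArrayList<>();
        Set<String> finished = new HashSet<>();
        while (order.size() < dependencies.size()) {
            boolean progressed = false;
            for (Map.Entry<String, Set<String>> e : dependencies.entrySet()) {
                String job = e.getKey();
                // A job is runnable once all of its dependencies have finished.
                if (!finished.contains(job) && finished.containsAll(e.getValue())) {
                    order.add(job);
                    finished.add(job);
                    progressed = true;
                }
            }
            if (!progressed) {
                throw new IllegalStateException("cyclic dependency between jobs");
            }
        }
        return order;
    }

    /** The join scenario from the text: mapreduce-3 depends on mapreduce-1 and mapreduce-2. */
    static List<String> demo() {
        MiniJobControl control = new MiniJobControl();
        control.addDependingJob("mapreduce-3", "mapreduce-1");
        control.addDependingJob("mapreduce-3", "mapreduce-2");
        return control.executionOrder();
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints [mapreduce-1, mapreduce-2, mapreduce-3]
    }
}
```

In this sketch mapreduce-1 and mapreduce-2 have no dependency on each other, so a real scheduler would be free to run them in parallel; only mapreduce-3 has to wait.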

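(3) Chaining preprocessing and postprocessing

Preprocessing and postprocessing steps are usually written as Mapper tasks. You can chain them yourself or use the ChainMapper and ChainReducer classes, which yields a job expression of the form:

MAP+ | REDUCE | MAP*

Take the job Map1 | Map2 | Reduce | Map3 | Map4: Map2 and Reduce form the core of the MapReduce job, Map1 is preprocessing, and Map3 and Map4 are postprocessing.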
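The expression MAP+ | REDUCE | MAP* can be read as a simple shape check: at least one mapper, then exactly one reducer, then any number of mappers. The sketch below tests a stage list against the expression as written; `ChainShape` and `Stage` are illustrative names, not Hadoop classes.

```java
import java.util.List;

final class ChainShape {
    enum Stage { MAP, REDUCE }

    /** True if the stages match MAP+ | REDUCE | MAP*. */
    static boolean isValidChain(List<Stage> stages) {
        int first = stages.indexOf(Stage.REDUCE);
        int last = stages.lastIndexOf(Stage.REDUCE);
        boolean exactlyOneReducer = first >= 0 && first == last;
        // At least one MAP must come before the single REDUCE;
        // anything after it can only be MAP, because REDUCE occurs once.
        return exactlyOneReducer && first >= 1;
    }

    public static void main(String[] args) {
        // Map1 | Map2 | Reduce | Map3 | Map4
        System.out.println(isValidChain(List.of(
            Stage.MAP, Stage.MAP, Stage.REDUCE, Stage.MAP, Stage.MAP))); // prints true
        // Reduce | Map has no preprocessing mapper
        System.out.println(isValidChain(List.of(Stage.REDUCE, Stage.MAP))); // prints false
    }
}
```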

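ChainMapper is used to add the preprocessing Mappers; ChainReducer is used to set the Reducer and add the postprocessing Mappers.

This experiment uses the third pattern, chaining preprocessing and postprocessing, with a job expression of the form Map1 | Map2 | Reduce | Map3.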

执行流程

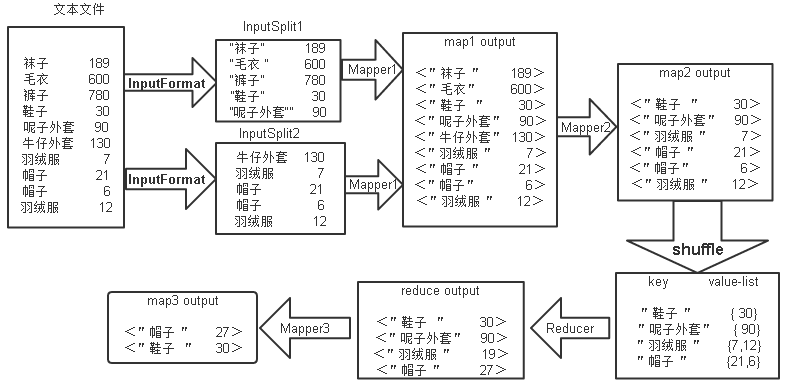

mapreduce执行的大体流程如下图所示:

由上图可知,ChainMapReduce的执行流程为:

①首先将文本文件中的数据通过InputFormat实例切割成多个小数据集InputSplit,然后通过RecordReader实例将小数据集InputSplit解析为<key,value>的键值对并提交给Mapper1;

②Mapper1里的map函数将输入的value进行切割,把商品名字段作为key值,点击数量字段作为value值,筛选出value值小于等于600的<key,value>,将<key,value>输出给Mapper2;

③Mapper2里的map函数再筛选出value值小于100的<key,value>,并将<key,value>输出;

④Mapper2输出的<key,value>键值对先经过shuffle,将key值相同的所有value放到一个集合,形成<key,value-list>,然后将所有的<key,value-list>输入给Reducer;

⑤Reducer里的reduce函数将value-list集合中的元素进行累加求和作为新的value,并将<key,value>输出给Mapper3;

⑥Mapper3里的map函数筛选出key值小于3个字符的<key,value>,并将<key,value>以文本的格式输出到hdfs上。该ChainMapReduce的Java代码主要分为四个部分,分别为:FilterMapper1,FilterMapper2,SumReducer,FilterMapper3。
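Before looking at the Hadoop classes, the six steps above can be simulated in plain Java to see what the chain computes. This is only a sketch: `ChainPipelineSketch` is a hypothetical name, the sample lines are invented, and a `TreeMap` stands in for the shuffle (grouping by key in sorted order).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class ChainPipelineSketch {

    /** Simulates Map1 | Map2 | shuffle | Reduce | Map3 on tab-separated "name<TAB>clicks" lines. */
    static Map<String, Double> run(List<String> lines) {
        Map<String, List<Double>> grouped = new TreeMap<>();
        for (String line : lines) {
            if (line.isEmpty()) {
                continue;
            }
            String[] fields = line.split("\t");
            String name = fields[0];
            double clicks = Double.parseDouble(fields[1].trim());
            if (clicks > 600) {
                continue; // Mapper1 keeps only values <= 600
            }
            if (clicks >= 100) {
                continue; // Mapper2 keeps only values < 100
            }
            // Shuffle: collect all values that share a key.
            grouped.computeIfAbsent(name, k -> new ArrayList<>()).add(clicks);
        }
        Map<String, Double> output = new TreeMap<>();
        for (Map.Entry<String, List<Double>> entry : grouped.entrySet()) {
            double sum = 0;
            for (double v : entry.getValue()) {
                sum += v; // Reducer sums the value list
            }
            if (entry.getKey().length() < 3) {
                output.put(entry.getKey(), sum); // Mapper3 keeps keys shorter than 3 characters
            }
        }
        return output;
    }

    public static void main(String[] args) {
        List<String> sample = List.of(
            "tv\t50", "tv\t30", "tv\t700", "pc\t99", "pc\t100", "phone\t10", "");
        System.out.println(run(sample)); // prints {pc=99.0, tv=80.0}
    }
}
```

In the sample, `tv\t700` is dropped by the first filter, `pc\t100` by the second, and `phone` survives both filters and the reduce step but is removed by the final key-length filter.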

Writing the code

FilterMapper1 code

    public static class FilterMapper1 extends Mapper<LongWritable, Text, Text, DoubleWritable> {
        private Text outKey = new Text();                        // reusable output key (product name)
        private DoubleWritable outValue = new DoubleWritable();  // reusable output value (click count)

        @Override
        protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, DoubleWritable>.Context context)
                throws IOException, InterruptedException {
            String line = value.toString();
            if (line.length() > 0) {
                String[] splits = line.split("\t");  // split the line into tab-separated fields
                double visit = Double.parseDouble(splits[1].trim());
                if (visit <= 600) {
                    // keep only records whose click count is at most 600
                    outKey.set(splits[0]);
                    outValue.set(visit);
                    context.write(outKey, outValue);  // emit <product name, click count>
                }
            }
        }
    }

First the output key and value objects are declared. The map method then reads the text line, splits it with split("\t"), converts the click-count field to a double, and assigns it to visit. If visit is less than or equal to 600, the product name field is set as the key and visit as the value, and the <key,value> pair is emitted with context.write().
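One thing to keep in mind with this parsing step: the mapper as written assumes that every non-empty line has at least two tab-separated fields and that the second one is numeric, so a malformed line would throw an exception inside map. A defensive variant of the parsing could look like the sketch below; `ClickLineParser` and `Record` are hypothetical helpers, not part of the original code.

```java
import java.util.Optional;

final class ClickLineParser {

    /** A parsed "name<TAB>clicks" line. */
    static final class Record {
        final String name;
        final double clicks;

        Record(String name, double clicks) {
            this.name = name;
            this.clicks = clicks;
        }
    }

    /** Returns the parsed record, or Optional.empty() for blank or malformed lines. */
    static Optional<Record> parse(String line) {
        if (line == null || line.isEmpty()) {
            return Optional.empty();
        }
        String[] fields = line.split("\t");
        if (fields.length < 2) {
            return Optional.empty(); // missing click-count field
        }
        try {
            return Optional.of(new Record(fields[0], Double.parseDouble(fields[1].trim())));
        } catch (NumberFormatException e) {
            return Optional.empty(); // click count is not a number
        }
    }

    public static void main(String[] args) {
        System.out.println(parse("tv\t50").isPresent());  // prints true
        System.out.println(parse("tv").isPresent());      // prints false
        System.out.println(parse("tv\tabc").isPresent()); // prints false
    }
}
```

Inside a mapper, the same check would simply skip the record (and possibly increment a counter) instead of failing the task; whether silent skipping is acceptable depends on the data set.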

FilterMapper2 code

    public static class FilterMapper2 extends Mapper<Text, DoubleWritable, Text, DoubleWritable> {
        @Override
        protected void map(Text key, DoubleWritable value, Mapper<Text, DoubleWritable, Text, DoubleWritable>.Context context)
                throws IOException, InterruptedException {
            // keep only records whose click count is below 100
            if (value.get() < 100) {
                context.write(key, value);
            }
        }
    }

FilterMapper2 receives the data passed on by FilterMapper1 and reads the input value with value.get(). If the value is less than 100, the input key and value are written out unchanged as the output <key,value>.

SumReducer code

    public static class SumReducer extends Reducer<Text, DoubleWritable, Text, DoubleWritable> {
        private DoubleWritable outValue = new DoubleWritable();  // reusable output value (sum of clicks)

        @Override
        protected void reduce(Text key, Iterable<DoubleWritable> values, Reducer<Text, DoubleWritable, Text, DoubleWritable>.Context context)
                throws IOException, InterruptedException {
            double sum = 0;
            // add up all click counts that share this key
            for (DoubleWritable val : values) {
                sum += val.get();
            }
            outValue.set(sum);
            context.write(key, outValue);
        }
    }

The <key,value> pairs emitted by FilterMapper2 go through the shuffle, which gathers all values with the same key into one collection to form <key,value-list>; all <key,value-list> pairs are then passed to SumReducer. In the reduce function, an enhanced for loop iterates over the elements of value-list and accumulates them into sum. outValue.set(sum) then stores sum in the DoubleWritable output value, the input key is reused as the output key, and context.write() emits the <key,value> pair.

FilterMapper3 code

    public static class FilterMapper3 extends Mapper<Text, DoubleWritable, Text, DoubleWritable> {
        @Override
        protected void map(Text key, DoubleWritable value, Mapper<Text, DoubleWritable, Text, DoubleWritable>.Context context)
                throws IOException, InterruptedException {
            // keep only records whose key is shorter than 3 characters
            if (key.toString().length() < 3) {
                System.out.println("Writing record: " + key.toString() + "++++" + value.toString());
                context.write(key, value);
            }
        }
    }

FilterMapper3 receives the data passed on by the reducer and obtains the key's character length with key.toString().length(). If the length is less than 3, the input key and value are written out unchanged as the output <key,value>.
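A small detail about this length check: `key.toString().length()` counts Java characters (UTF-16 code units), whereas Hadoop's `Text.getLength()` reports the number of bytes in the UTF-8 encoding. For ASCII keys the two agree, but for product names written in Chinese they do not. The plain-Java sketch below shows the difference; `KeyLengthDemo` is an illustrative name and the sample strings are made up.

```java
import java.nio.charset.StandardCharsets;

final class KeyLengthDemo {

    /** What key.toString().length() measures: UTF-16 code units. */
    static int charLength(String key) {
        return key.length();
    }

    /** The byte length of the UTF-8 encoding of the key. */
    static int utf8ByteLength(String key) {
        return key.getBytes(StandardCharsets.UTF_8).length;
    }

    public static void main(String[] args) {
        String ascii = "tv";
        String cjk = "\u624b\u673a"; // a key made of two CJK characters
        System.out.println(charLength(ascii) + " " + utf8ByteLength(ascii)); // prints 2 2
        System.out.println(charLength(cjk) + " " + utf8ByteLength(cjk));     // prints 2 6
    }
}
```

So a two-character Chinese product name passes the `length() < 3` filter used here, but would not pass a filter written against the UTF-8 byte length.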

Complete code

    package mapreduce;

    import java.io.IOException;
    import java.net.URI;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.DoubleWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.chain.ChainMapper;
    import org.apache.hadoop.mapreduce.lib.chain.ChainReducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
    import org.apache.hadoop.mapreduce.lib.partition.HashPartitioner;

    public class ChainMapReduce {
        private static final String INPUTPATH = "hdfs://localhost:9000/mymapreduce10/in/goods_0";
        private static final String OUTPUTPATH = "hdfs://localhost:9000/mymapreduce10/out";

        public static void main(String[] args) {
            try {
                Configuration conf = new Configuration();

                // Delete the output directory if it already exists, otherwise the job fails on startup.
                FileSystem fileSystem = FileSystem.get(new URI(OUTPUTPATH), conf);
                if (fileSystem.exists(new Path(OUTPUTPATH))) {
                    fileSystem.delete(new Path(OUTPUTPATH), true);
                }

                // Job.getInstance replaces the deprecated Job constructor.
                Job job = Job.getInstance(conf, ChainMapReduce.class.getSimpleName());
                job.setJarByClass(ChainMapReduce.class);

                FileInputFormat.addInputPath(job, new Path(INPUTPATH));
                job.setInputFormatClass(TextInputFormat.class);

                // Map1 | Map2 | Reduce | Map3
                ChainMapper.addMapper(job, FilterMapper1.class, LongWritable.class, Text.class, Text.class, DoubleWritable.class, conf);
                ChainMapper.addMapper(job, FilterMapper2.class, Text.class, DoubleWritable.class, Text.class, DoubleWritable.class, conf);
                ChainReducer.setReducer(job, SumReducer.class, Text.class, DoubleWritable.class, Text.class, DoubleWritable.class, conf);
                ChainReducer.addMapper(job, FilterMapper3.class, Text.class, DoubleWritable.class, Text.class, DoubleWritable.class, conf);

                job.setMapOutputKeyClass(Text.class);
                job.setMapOutputValueClass(DoubleWritable.class);
                job.setPartitionerClass(HashPartitioner.class);
                job.setNumReduceTasks(1);

                job.setOutputKeyClass(Text.class);
                job.setOutputValueClass(DoubleWritable.class);
                FileOutputFormat.setOutputPath(job, new Path(OUTPUTPATH));
                job.setOutputFormatClass(TextOutputFormat.class);

                System.exit(job.waitForCompletion(true) ? 0 : 1);
            } catch (Exception e) {
                e.printStackTrace();
                System.exit(1);  // report failure instead of exiting with status 0
            }
        }

        public static class FilterMapper1 extends Mapper<LongWritable, Text, Text, DoubleWritable> {
            private Text outKey = new Text();
            private DoubleWritable outValue = new DoubleWritable();

            @Override
            protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, DoubleWritable>.Context context)
                    throws IOException, InterruptedException {
                String line = value.toString();
                if (line.length() > 0) {
                    String[] splits = line.split("\t");
                    double visit = Double.parseDouble(splits[1].trim());
                    if (visit <= 600) {
                        outKey.set(splits[0]);
                        outValue.set(visit);
                        context.write(outKey, outValue);
                    }
                }
            }
        }

        public static class FilterMapper2 extends Mapper<Text, DoubleWritable, Text, DoubleWritable> {
            @Override
            protected void map(Text key, DoubleWritable value, Mapper<Text, DoubleWritable, Text, DoubleWritable>.Context context)
                    throws IOException, InterruptedException {
                if (value.get() < 100) {
                    context.write(key, value);
                }
            }
        }

        public static class SumReducer extends Reducer<Text, DoubleWritable, Text, DoubleWritable> {
            private DoubleWritable outValue = new DoubleWritable();

            @Override
            protected void reduce(Text key, Iterable<DoubleWritable> values, Reducer<Text, DoubleWritable, Text, DoubleWritable>.Context context)
                    throws IOException, InterruptedException {
                double sum = 0;
                for (DoubleWritable val : values) {
                    sum += val.get();
                }
                outValue.set(sum);
                context.write(key, outValue);
            }
        }

        public static class FilterMapper3 extends Mapper<Text, DoubleWritable, Text, DoubleWritable> {
            @Override
            protected void map(Text key, DoubleWritable value, Mapper<Text, DoubleWritable, Text, DoubleWritable>.Context context)
                    throws IOException, InterruptedException {
                if (key.toString().length() < 3) {
                    System.out.println("Writing record: " + key.toString() + "++++" + value.toString());
                    context.write(key, value);
                }
            }
        }
    }

-------------- end ----------------

微信公众号:扫描下方二维码或 搜索 笑看风云路 关注

边栏推荐

- 基于WEB的网上购物系统的设计与实现(附:源码 论文 sql文件)

- Publish and subscribe to redis

- SimCLR:NLP中的对比学习

- QML type: locale, date

- Using label template to solve the problem of malicious input by users

- Parameterization of postman

- xargs命令的基本用法

- Redis之主从复制

- Global and Chinese market of appointment reminder software 2022-2028: Research Report on technology, participants, trends, market size and share

- go-redis之初始化連接

猜你喜欢

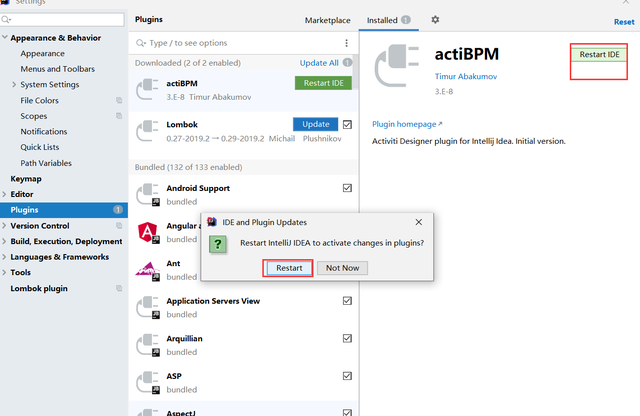

工作流—activiti7环境搭建

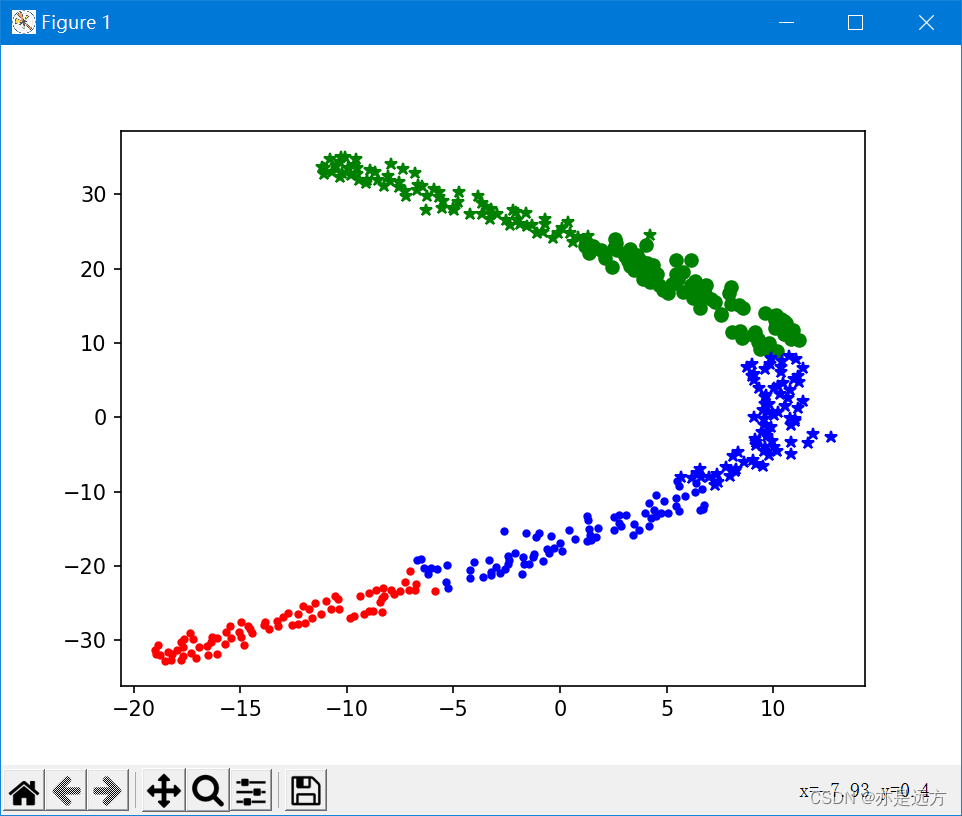

Multivariate cluster analysis

Lua script of redis

Redis之cluster集群

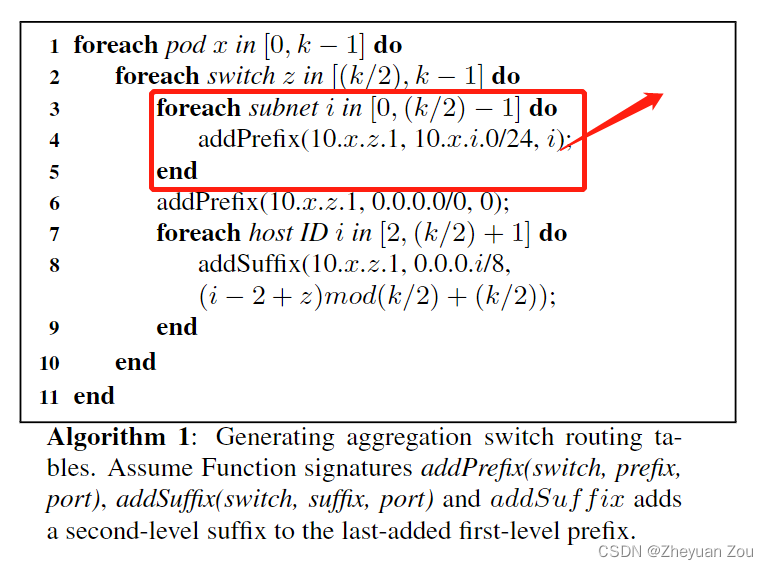

Advance Computer Network Review(1)——FatTree

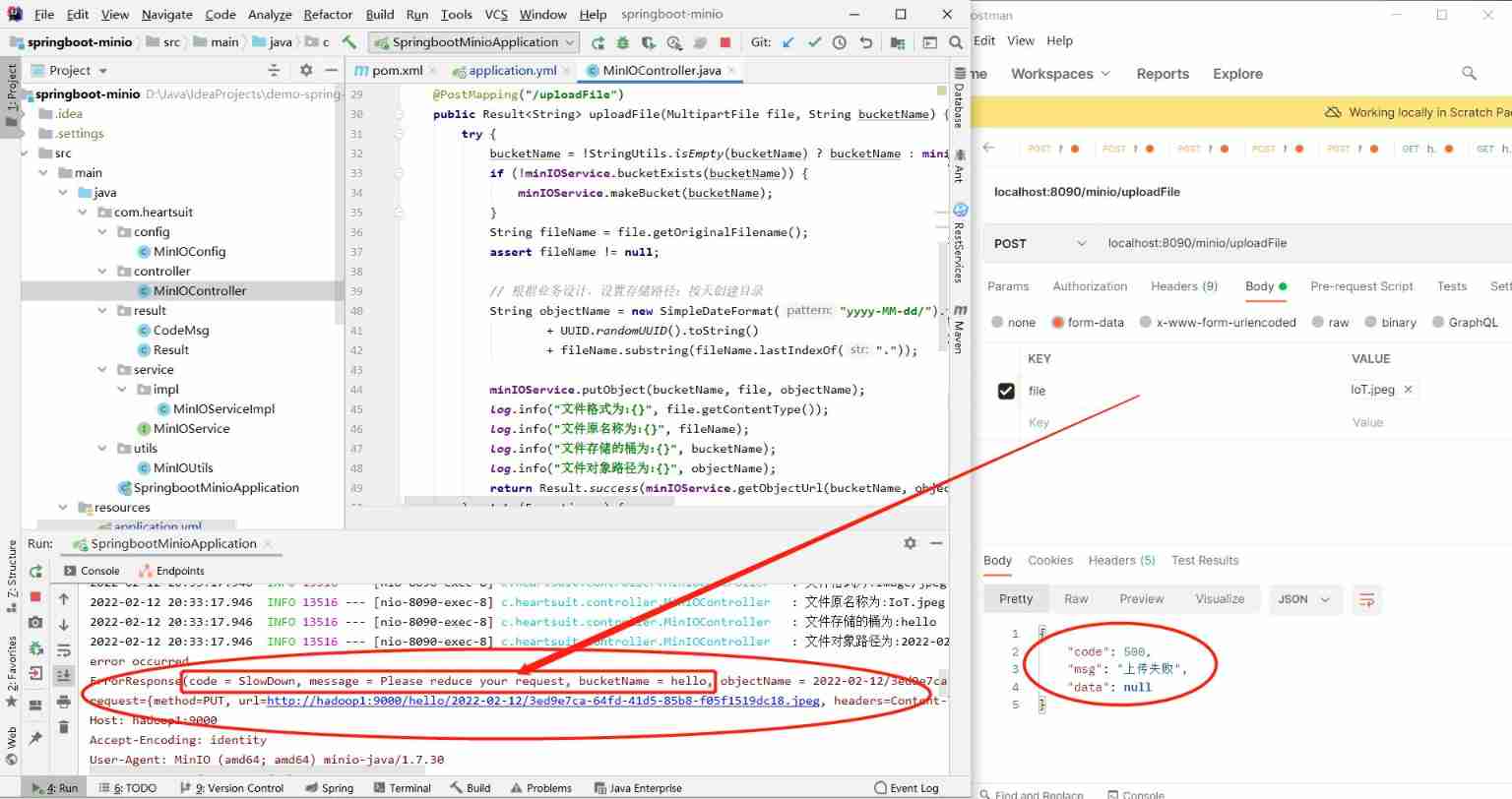

Minio distributed file storage cluster for full stack development

LeetCode41——First Missing Positive——hashing in place & swap

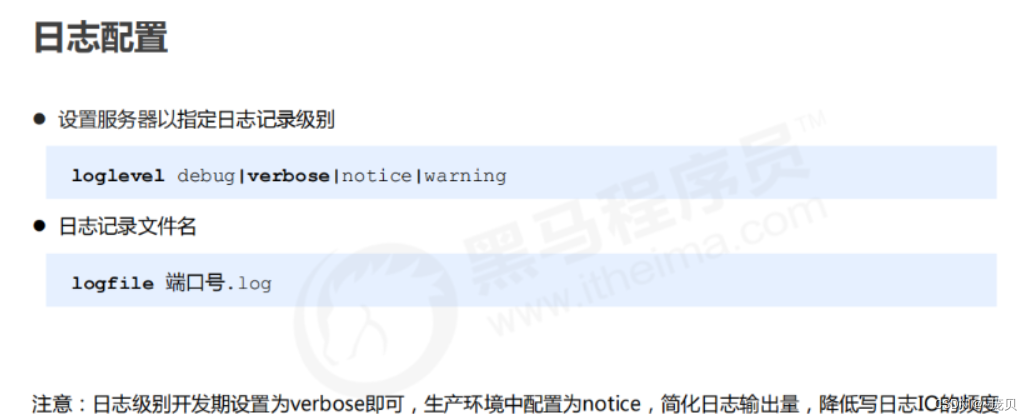

Redis之核心配置

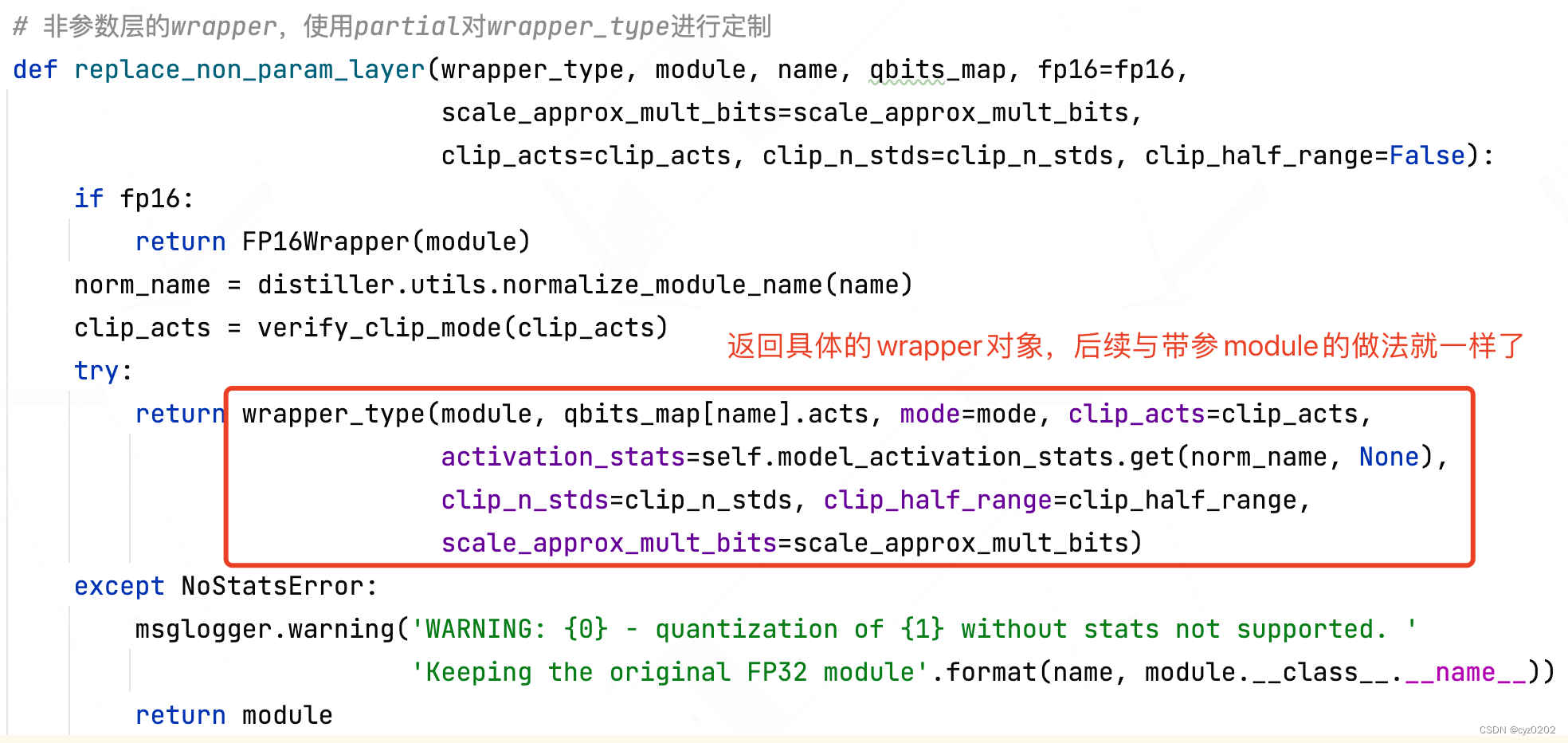

Intel distiller Toolkit - Quantitative implementation 2

Ijcai2022 collection of papers (continuously updated)

随机推荐

Global and Chinese market of bank smart cards 2022-2028: Research Report on technology, participants, trends, market size and share

Heap (priority queue) topic

Redis之哨兵模式

leetcode-14. Longest common prefix JS longitudinal scanning method

[text generation] recommended in the collection of papers - Stanford researchers introduce time control methods to make long text generation more smooth

Le modèle sentinelle de redis

MapReduce工作机制

Redis之核心配置

基于B/S的影视创作论坛的设计与实现(附:源码 论文 sql文件 项目部署教程)

Global and Chinese markets for hardware based encryption 2022-2028: Research Report on technology, participants, trends, market size and share

Redis之cluster集群

Mise en œuvre de la quantification post - formation du bminf

QDialog

Redis cluster

The five basic data structures of redis are in-depth and application scenarios

CUDA implementation of self defined convolution attention operator

Appears when importing MySQL

xargs命令的基本用法

Kratos战神微服务框架(一)

Redis connection redis service command