
Where do the writers of articles with 10,000+ reads find their material?

2022-07-02 07:54:00 【Xiaoyi We Media】

I once ran a small survey among self-media creators. More than half said that, of the whole creative process, finding material takes the longest: writing an article may take only a few minutes, but finding the material for it can take a day or even longer.

If you publish only a little each day, that is manageable. If you have many articles to write every day, it can be fatal. Today I'm sharing some of the sources of material I use when writing articles.

1. Follow trending topics

Trending topics become trending because they draw a great deal of attention. Catching a trend gives you a ready angle to write from, and starting from that angle you can write about whatever interests you.

2. Follow the top self-media creators ("big V" accounts) in your field

Every field has its top authors. Keep an eye on their activity and on what they write, and see what they publish each day.

On WeChat Official Accounts, follow more authors in your industry and read their articles for inspiration. You can also use the author-monitoring feature of Yizhuan (易撰) to track all of them at once.

3. Search by keyword on the major platforms

Toutiao (Toutiaohao)

As China's number-one self-media platform, it holds a wealth of material.

Go to the Toutiao home page and find the search box in the upper-right corner. Enter your keywords and click the search button to its right.

Search engines

You can search directly on Baidu, Google, or Sogou by entering whatever you are looking for. WeChat Official Accounts and Zhihu also have material worth referring to, but don't copy it directly; respect copyright.

Finally, a self-media account isn't built in a day or two. Some people reach millions of reads only a few days after starting out, but what you don't see is the material they have been accumulating day after day. If you want to write articles that get high readership, you have to do the basic groundwork. There is no shortcut!

边栏推荐

- Mmdetection model fine tuning

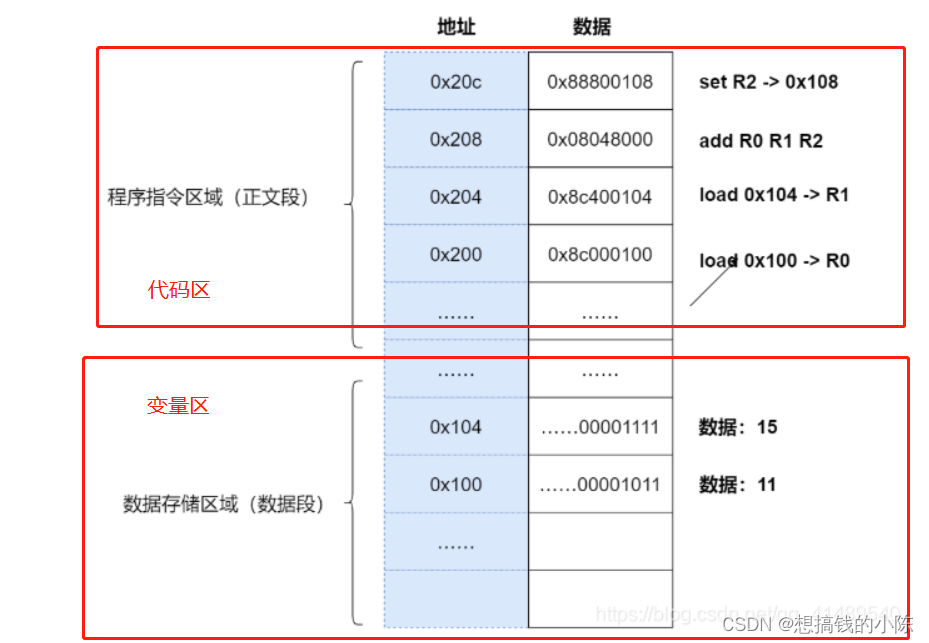

- Memory model of program

- [Sparse to Dense] Sparse to Dense: Depth Prediction from Sparse Depth samples and a Single Image

- open3d环境错误汇总

- latex公式正体和斜体

- 用MLP代替掉Self-Attention

- TimeCLR: A self-supervised contrastive learning framework for univariate time series representation

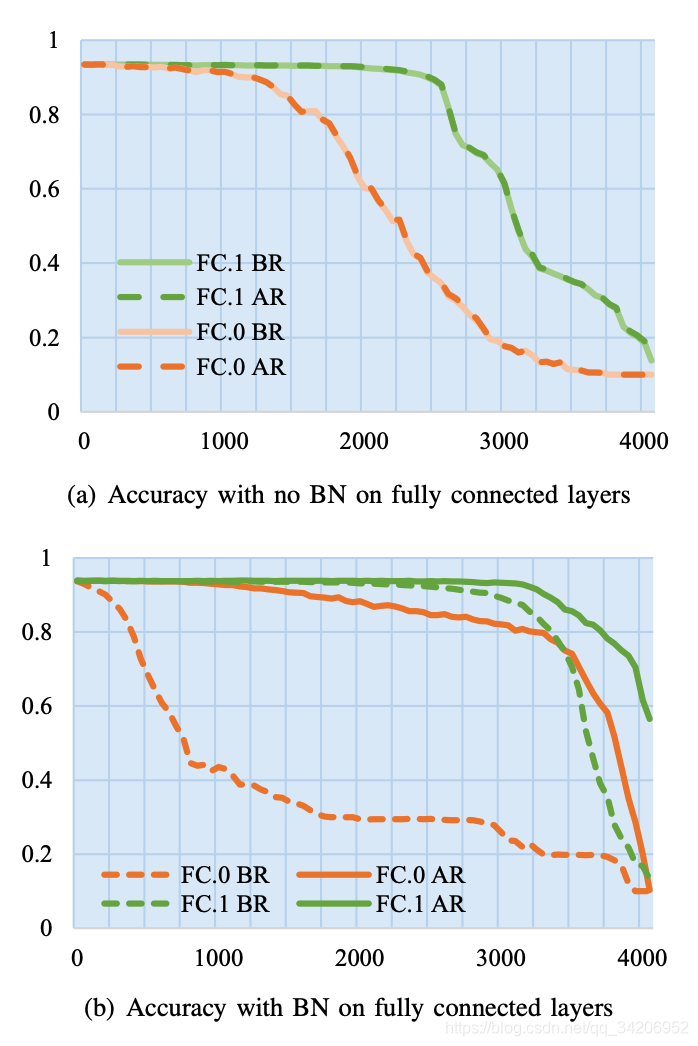

- 【Batch】learning notes

- CPU register

- Hystrix dashboard cannot find hystrix Stream solution

猜你喜欢

![[CVPR‘22 Oral2] TAN: Temporal Alignment Networks for Long-term Video](/img/bc/c54f1f12867dc22592cadd5a43df60.png)

[CVPR‘22 Oral2] TAN: Temporal Alignment Networks for Long-term Video

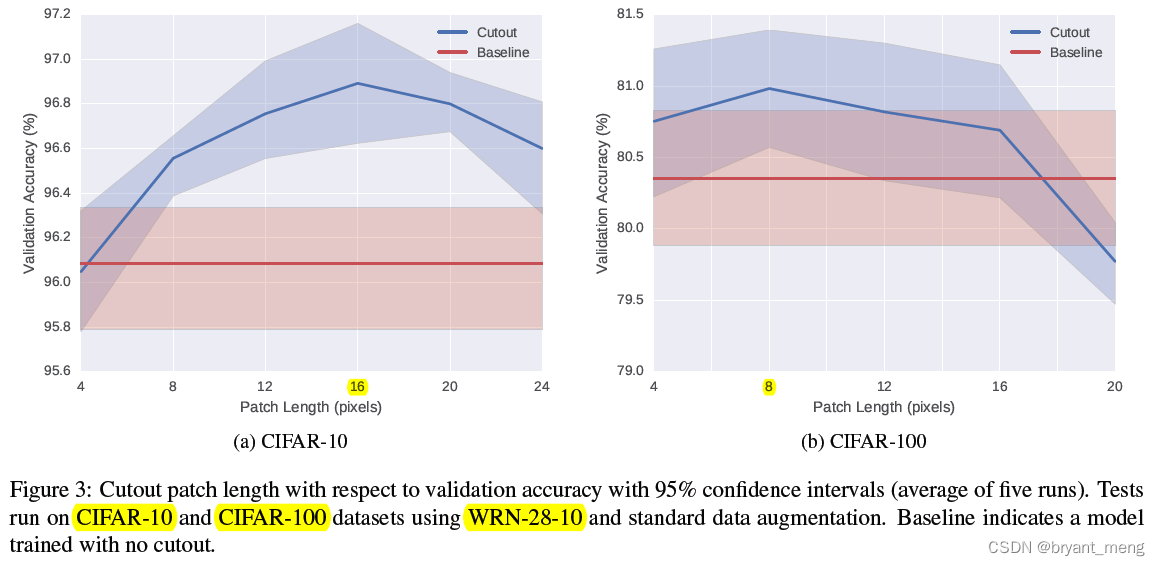

【Cutout】《Improved Regularization of Convolutional Neural Networks with Cutout》

【双目视觉】双目立体匹配

【BiSeNet】《BiSeNet:Bilateral Segmentation Network for Real-time Semantic Segmentation》

Implementation of yolov5 single image detection based on pytorch

Embedding malware into neural networks

Execution of procedures

【AutoAugment】《AutoAugment:Learning Augmentation Policies from Data》

【Batch】learning notes

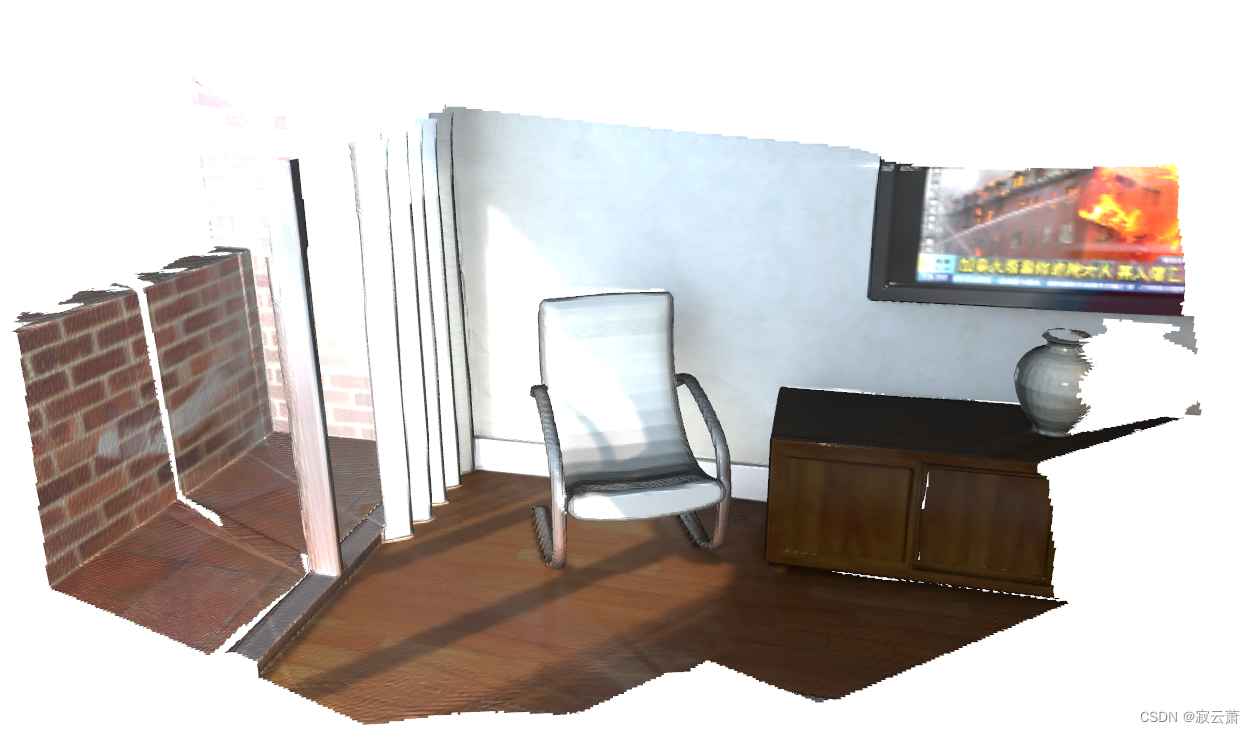

open3d学习笔记五【RGBD融合】

随机推荐

【C#笔记】winform中保存DataGridView中的数据为Excel和CSV

解决jetson nano安装onnx错误(ERROR: Failed building wheel for onnx)总结

【Mixup】《Mixup:Beyond Empirical Risk Minimization》

What if the laptop task manager is gray and unavailable

【TCDCN】《Facial landmark detection by deep multi-task learning》

【AutoAugment】《AutoAugment:Learning Augmentation Policies from Data》

yolov3训练自己的数据集(MMDetection)

【MnasNet】《MnasNet:Platform-Aware Neural Architecture Search for Mobile》

Replace self attention with MLP

【学习笔记】Matlab自编高斯平滑器+Sobel算子求导

【Random Erasing】《Random Erasing Data Augmentation》

MoCO ——Momentum Contrast for Unsupervised Visual Representation Learning

Solve the problem of latex picture floating

latex公式正体和斜体

win10+vs2017+denseflow编译

【Mixup】《Mixup:Beyond Empirical Risk Minimization》

Calculate the total in the tree structure data in PHP

open3d环境错误汇总

Daily practice (19): print binary tree from top to bottom

用MLP代替掉Self-Attention