Common PyTorch loss functions

2022-07-06 18:58:00 【m0_ sixty-one million eight hundred and ninety-nine thousand on】

Reposted from:

Regression loss functions - Zhihu (zhihu.com)

Summary of loss functions in PyTorch | Dreamhomes blog (dreamhomes.top)

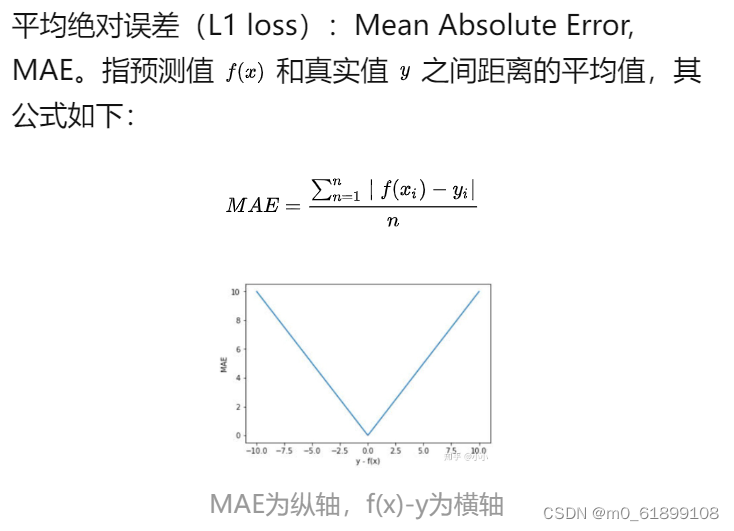

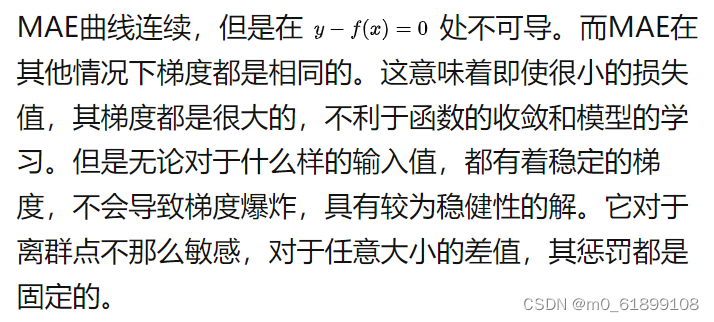

1、L1 loss

# Keras-style reference definition; K is the Keras backend module
def mean_absolute_error(y_true, y_pred):
    return K.mean(K.abs(y_pred - y_true), axis=-1)

# Sample code

import torch

from torch import nn

input_data = torch.FloatTensor([[3], [4], [5]]) # batch_size, output

target_data = torch.FloatTensor([[2], [5], [8]]) # batch_size, output

loss_func = nn.L1Loss()

loss = loss_func(input_data, target_data)

print(loss) # 1.6667

# Verification code

print((abs(3-2) + abs(4-5) + abs(5-8)) / 3) # 1.6666

2、L2 loss

# Keras-style reference definition; K is the Keras backend module
def mean_squared_error(y_true, y_pred):
    return K.mean(K.square(y_pred - y_true), axis=-1)

# Sample code

import torch

from torch import nn

input_data = torch.FloatTensor([[3], [4], [5]]) # batch_size, output

target_data = torch.FloatTensor([[2], [5], [8]]) # batch_size, output

loss_func = nn.MSELoss()

loss = loss_func(input_data, target_data)

print(loss) # 3.6667

# verification

print(((3-2)**2 + (4-5)**2 + (5-8)**2)/3) # 3.6666666666666665

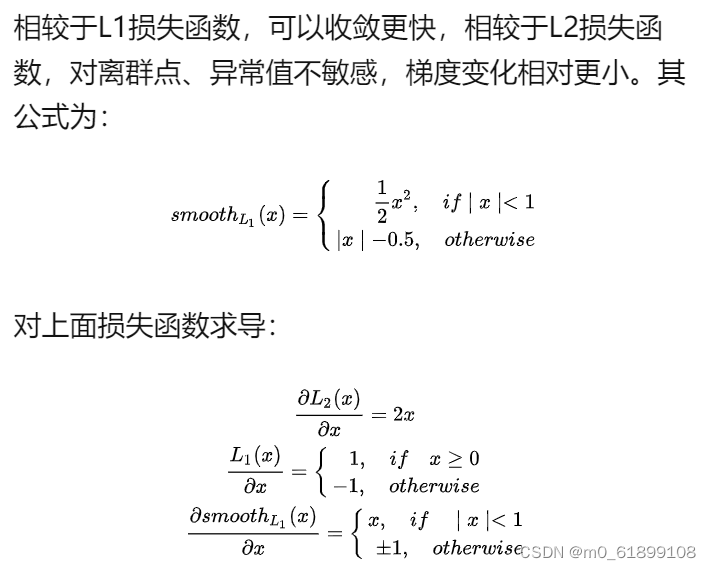

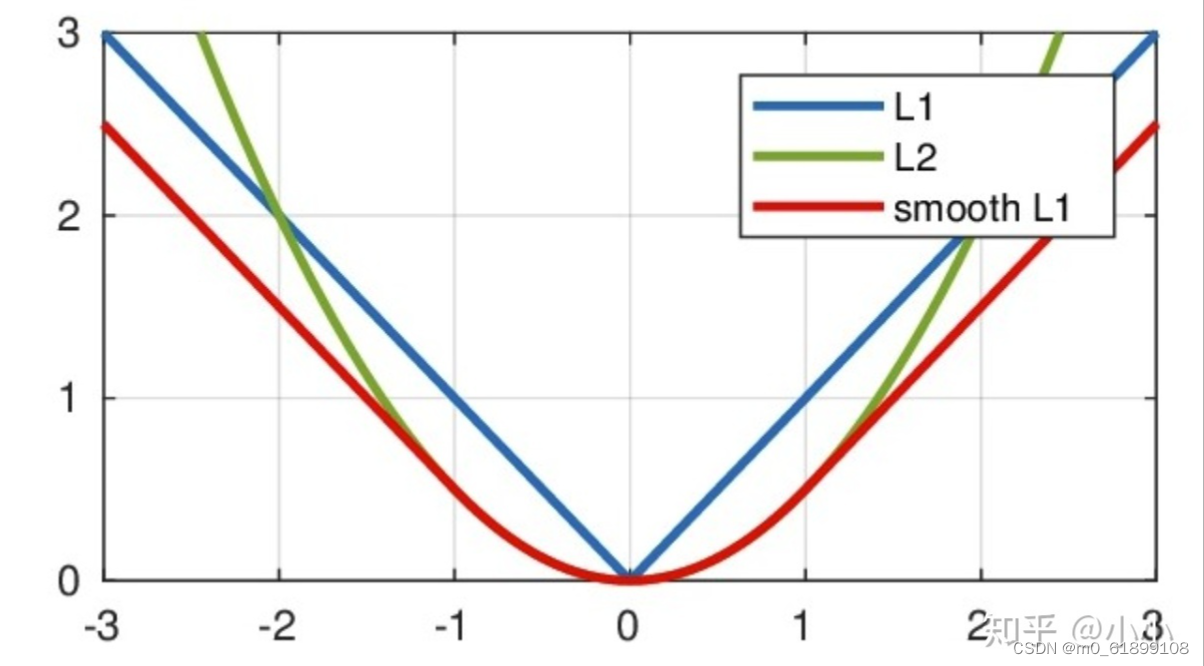

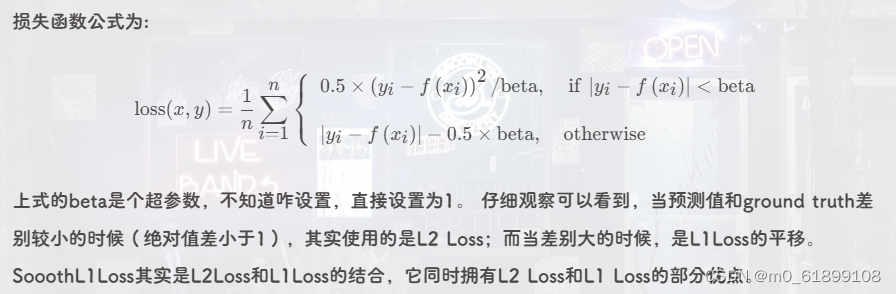

3、smooth L1 loss

The smooth L1 loss function is used in Faster R-CNN and SSD.
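The L1 and L2 sections each include a hand check, so here is a comparable sketch for smooth L1. It assumes the usual piecewise definition with threshold `beta = 1.0` (the default of `nn.SmoothL1Loss`); the helper name `smooth_l1_manual` is made up for illustration.

```python
import torch
from torch import nn

def smooth_l1_manual(pred, target, beta=1.0):
    # Quadratic branch for |d| < beta, linear branch otherwise
    d = torch.abs(pred - target)
    per_elem = torch.where(d < beta, 0.5 * d * d / beta, d - 0.5 * beta)
    return per_elem.mean()

pred = torch.tensor([[3.0], [4.0], [5.0]])
target = torch.tensor([[2.0], [4.1], [8.0]])

manual = smooth_l1_manual(pred, target)
builtin = nn.SmoothL1Loss()(pred, target)
print(manual, builtin)  # both should be about 1.0017
```

The three per-element terms are 0.5, 0.005 and 2.5, whose mean is about 1.0017.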

# Sample code

import torch

from torch import nn

input_data = torch.FloatTensor([[3], [4], [5]]) # batch_size, output

target_data = torch.FloatTensor([[2], [4.1], [8]]) # batch_size, output

loss_func = nn.SmoothL1Loss()

loss = loss_func(input_data, target_data)

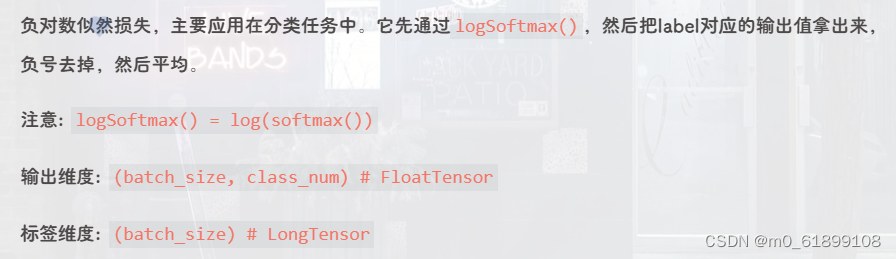

print(loss) # Output :1.00174、NLLLoss

# Sample code

# Three samples, three classes, using NLLLoss

import torch

from torch import nn

input = torch.randn(3, 3)

print(input)

# tensor([[ 0.0550, -0.5005, -0.4188],

# [ 0.7060, 1.1139, -0.0016],

# [ 0.3008, -0.9968, 0.5147]])

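label = torch.LongTensor([0, 2, 1]) # true labels

loss_func = nn.NLLLoss()

# NLLLoss expects log-probabilities, so apply log_softmax to the raw scores first

temp = torch.log_softmax(input, dim=1)

loss = loss_func(temp, label)

print(loss) # Loss 1.6035 for the input printed above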

# Verification code

output = torch.FloatTensor([

[ 0.0550, -0.5005, -0.4188],

[ 0.7060, 1.1139, -0.0016],

[ 0.3008, -0.9968, 0.5147]]

)

# 1. softmax followed by log, equivalent to torch.log_softmax()

sm = nn.Softmax(dim=1)

temp = torch.log(sm(output))

print(temp)

# tensor([[-0.7868, -1.3423, -1.2607],

# [-1.0974, -0.6896, -1.8051],

# [-0.9210, -2.2185, -0.7070]])

# 2. The labels are [0, 2, 1],

# so take the first value of row 1 (-0.7868), the third value of row 2 (-1.8051) and the second value of row 3 (-2.2185), drop the minus signs and average. In other words, the negative log-probability at each true label is the cross entropy.

print((0.7868 + 1.8051 + 2.2185) / 3) # Output 1.6034666666666666
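The row-by-row lookup above can also be written in vectorized form. This is a minimal sketch using `gather` to pick the log-probability of the true class in each row; the variable names are illustrative only.

```python
import torch
from torch import nn

logits = torch.tensor([[0.0550, -0.5005, -0.4188],
                       [0.7060, 1.1139, -0.0016],
                       [0.3008, -0.9968, 0.5147]])
label = torch.tensor([0, 2, 1])

log_probs = torch.log_softmax(logits, dim=1)
# Select the log-probability of the true class for every sample
picked = log_probs.gather(1, label.unsqueeze(1)).squeeze(1)
manual = -picked.mean()

builtin = nn.NLLLoss()(log_probs, label)
print(manual, builtin)  # both should be about 1.6035
```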

5、CrossEntropyLoss

# Sample code

# Three-class classification of three samples, same data as above

import torch

from torch import nn

loss_func1 = nn.CrossEntropyLoss()

output = torch.FloatTensor([

[ 0.0550, -0.5005, -0.4188],

[ 0.7060, 1.1139, -0.0016],

[ 0.3008, -0.9968, 0.5147]]

)

true_label = torch.LongTensor([0, 2, 1]) # Note: label ids must start from 0, i.e. 0, 1, 2 rather than 1, 2, 3

loss = loss_func1(output, true_label)

print(loss) # Output : 1.6035
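The result matches the NLLLoss example because cross entropy on raw scores is log_softmax followed by NLL. A small sketch of that equivalence, also written out as "logsumexp minus the true-class logit" per sample; the functional calls `F.cross_entropy`, `F.nll_loss` and `F.log_softmax` are used here instead of the module classes purely for brevity.

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[0.0550, -0.5005, -0.4188],
                       [0.7060, 1.1139, -0.0016],
                       [0.3008, -0.9968, 0.5147]])
label = torch.tensor([0, 2, 1])

# Per-sample cross entropy: logsumexp over classes minus the true-class logit
true_logit = logits[torch.arange(logits.size(0)), label]
manual = (torch.logsumexp(logits, dim=1) - true_logit).mean()

ce = F.cross_entropy(logits, label)
nll = F.nll_loss(F.log_softmax(logits, dim=1), label)
print(manual, ce, nll)  # all three should be about 1.6035
```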

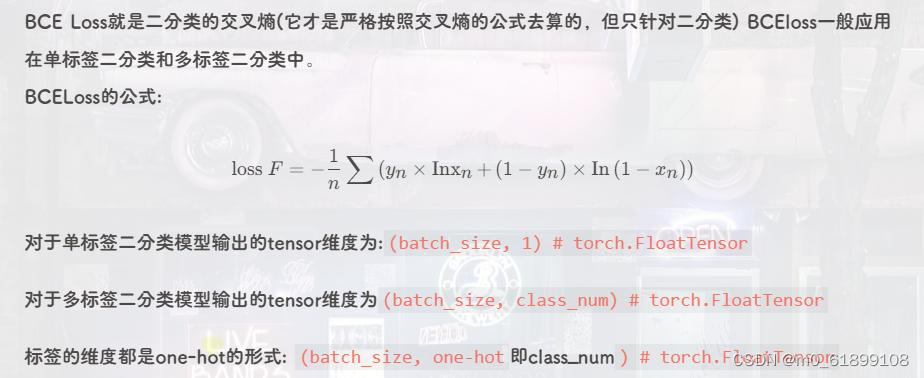

6、BCELoss

Multi-label classification (each sample can carry several labels)

# Sample code: multi-label classification

import torch

from torch import nn

bce = nn.BCELoss()

output = torch.FloatTensor(

[

[ 0.0550, -0.5005, -0.4188],

[ 0.7060, 1.1139, -0.0016],

[ 0.3008, -0.9968, 0.5147]

]

)

# Note: BCELoss expects probabilities, so the output must go through sigmoid first

s = nn.Sigmoid()

output = s(output)

# Assume each sample (row) can have several labels at once

label = torch.FloatTensor(

[

[1, 0, 1],

[0, 0, 1],

[1, 1, 0]

]

)

loss = bce(output, label)

print(loss) # Output : 0.9013

# Verification code

# 1. Model output

output = torch.FloatTensor(

[

[ 0.0550, -0.5005, -0.4188],

[ 0.7060, 1.1139, -0.0016],

[ 0.3008, -0.9968, 0.5147]

]

)

# 2. after sigmoid

s = nn.Sigmoid()

output = s(output)

# print(output)

# tensor([[0.5137, 0.3774, 0.3968],

# [0.6695, 0.7529, 0.4996],

# [0.5746, 0.2696, 0.6259]])

label = torch.FloatTensor(

[

[1, 0, 1],

[0, 0, 1],

[1, 1, 0]

]

)

# Compute the loss by hand from the labels and the sigmoid outputs

# First row

sum_1 = 0

sum_1 += 1 * torch.log(torch.tensor(0.5137)) + (1 - 1) * torch.log(torch.tensor(1 - 0.5137)) # First column

sum_1 += 0 * torch.log(torch.tensor(0.3774)) + (1 - 0) * torch.log(torch.tensor(1 - 0.3774)) # Second column

sum_1 += 1 * torch.log(torch.tensor(0.3968)) + (1 - 1) * torch.log(torch.tensor(1 - 0.3968)) # The third column

avg_1 = sum_1 / 3

# Second row

sum_2 = 0

sum_2 += 0 * torch.log(torch.tensor(0.6695)) + (1 - 0) * torch.log(torch.tensor(1 - 0.6695)) # First column

sum_2 += 0 * torch.log(torch.tensor(0.7529)) + (1 - 0) * torch.log(torch.tensor(1 - 0.7529)) # Second column

sum_2 += 1 * torch.log(torch.tensor(0.4996)) + (1 - 1) * torch.log(torch.tensor(1 - 0.4996)) # The third column

avg_2 = sum_2 / 3

# Third row

sum_3 = 0

sum_3 += 1 * torch.log(torch.tensor(0.5746)) + (1 - 1) * torch.log(torch.tensor(1 - 0.5746)) # First column

sum_3 += 1 * torch.log(torch.tensor(0.2696)) + (1 - 1) * torch.log(torch.tensor(1 - 0.2696)) # Second column

sum_3 += 0 * torch.log(torch.tensor(0.6259)) + (1 - 0) * torch.log(torch.tensor(1 - 0.6259)) # The third column

avg_3 = sum_3 / 3

result = -(avg_1 + avg_2 + avg_3) / 3

print(result) # Output 0.9013
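The term-by-term check above can be condensed into one vectorized expression. A minimal sketch of the binary cross entropy formula, averaged over all nine elements, compared against `nn.BCELoss`:

```python
import torch
from torch import nn

logits = torch.tensor([[0.0550, -0.5005, -0.4188],
                       [0.7060, 1.1139, -0.0016],
                       [0.3008, -0.9968, 0.5147]])
label = torch.tensor([[1.0, 0.0, 1.0],
                      [0.0, 0.0, 1.0],
                      [1.0, 1.0, 0.0]])

p = torch.sigmoid(logits)
# -[y * log(p) + (1 - y) * log(1 - p)], averaged over every element
manual = -(label * torch.log(p) + (1 - label) * torch.log(1 - p)).mean()

builtin = nn.BCELoss()(p, label)
print(manual, builtin)  # both should be about 0.9013
```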

Binary classification

# Sample code

# Two samples, binary classification

import torch

from torch import nn

bce = nn.BCELoss()

output = torch.FloatTensor(

[

[ 0.0550, -0.5005],

[ 0.7060, 1.1139]

]

)

# Note: the output must go through sigmoid first

s = nn.Sigmoid()

output = s(output)

# Labels for the two samples

label = torch.FloatTensor(

[

[1, 0],

[0, 1]

]

)

loss = bce(output, label)

print(loss) # Output 0.6327

# Verification code

output = torch.FloatTensor(

[

[ 0.0550, -0.5005],

[ 0.7060, 1.1139]

]

)

# Note: the output must go through sigmoid first

s = nn.Sigmoid()

output = s(output)

# print(output)

# tensor([[0.5137, 0.3774],

# [0.6695, 0.7529]])

# true_label = [[1, 0], [0, 1]]

sum_1 = 0

sum_1 += 1 * torch.log(torch.tensor(0.5137)) + (1 - 1) * torch.log(torch.tensor(1 - 0.5137))

sum_1 += 0 * torch.log(torch.tensor(0.3774)) + (1 - 0) * torch.log(torch.tensor(1 - 0.3774))

avg_1 = sum_1 / 2

sum_2 = 0

sum_2 += 0 * torch.log(torch.tensor(0.6695)) + (1 - 0) * torch.log(torch.tensor(1 - 0.6695))

sum_2 += 1 * torch.log(torch.tensor(0.7529)) + (1 - 1) * torch.log(torch.tensor(1 - 0.7529))

avg_2 = sum_2 / 2

print(-(avg_1 + avg_2) / 2) # Output 0.6327

7、BCEWithLogitsLoss

# Sample code

# Uses the binary classification data of the two samples above

import torch

from torch import nn

bce_logit = nn.BCEWithLogitsLoss()

output = torch.FloatTensor(

[

[ 0.0550, -0.5005],

[ 0.7060, 1.1139]

]

) # without Sigmoid

label = torch.FloatTensor(

[

[1, 0],

[0, 1]

]

)

loss = bce_logit(output, label)

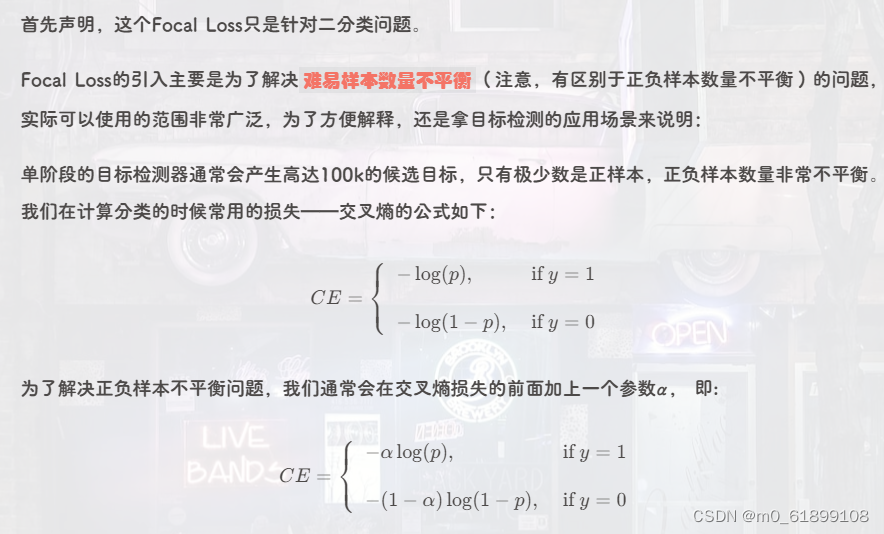

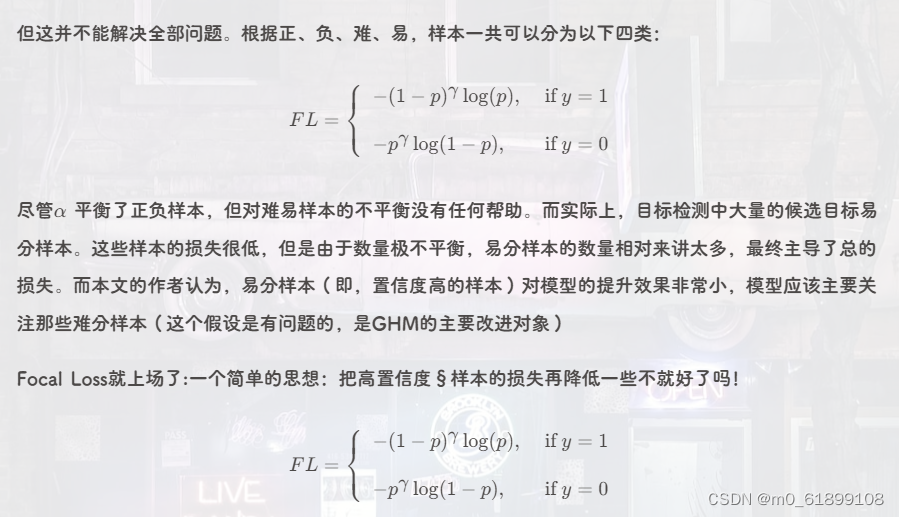

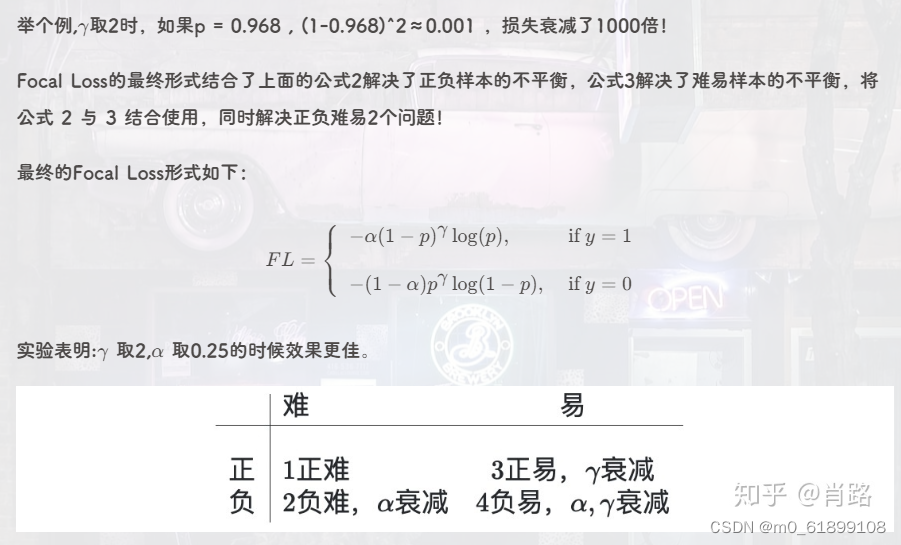

print(loss) # tensor(0.6327)8、Focal Loss

# Code implementation

import torch

import torch.nn.functional as F

def reduce_loss(loss, reduction):

reduction_enum = F._Reduction.get_enum(reduction)

# none: 0, elementwise_mean:1, sum: 2

if reduction_enum == 0:

return loss

elif reduction_enum == 1:

return loss.mean()

elif reduction_enum == 2:

return loss.sum()

def weight_reduce_loss(loss, weight=None, reduction='mean', avg_factor=None):

if weight is not None:

loss = loss * weight

if avg_factor is None:

loss = reduce_loss(loss, reduction)

else:

# if reduction is mean, then average the loss by avg_factor

if reduction == 'mean':

loss = loss.sum() / avg_factor

# if reduction is 'none', then do nothing, otherwise raise an error

elif reduction != 'none':

raise ValueError('avg_factor can not be used with reduction="sum"')

return loss

def py_sigmoid_focal_loss(pred, target, weight=None, gamma=2.0, alpha=0.25, reduction='mean', avg_factor=None):

# Be careful Input pred No need to go through sigmoid

pred_sigmoid = pred.sigmoid()

target = target.type_as(pred)

pt = (1 - pred_sigmoid) * target + pred_sigmoid * (1 - target)

focal_weight = (alpha * target + (1 - alpha) *

(1 - target)) * pt.pow(gamma)

# Let's find this function of cross entropy Yes pred the sigmoid

loss = F.binary_cross_entropy_with_logits(

pred, target, reduction='none') * focal_weight

# print(loss)

''' Output

tensor([[0.0394, 0.0506],

[0.3722, 0.0043]])

'''

loss = weight_reduce_loss(loss, weight, reduction, avg_factor)

return loss

if __name__ == '__main__':

output = torch.FloatTensor(

[

[0.0550, -0.5005],

[0.7060, 1.1139]

]

)

label = torch.FloatTensor(

[

[1, 0],

[0, 1]

]

)

loss = py_sigmoid_focal_loss(output, label)

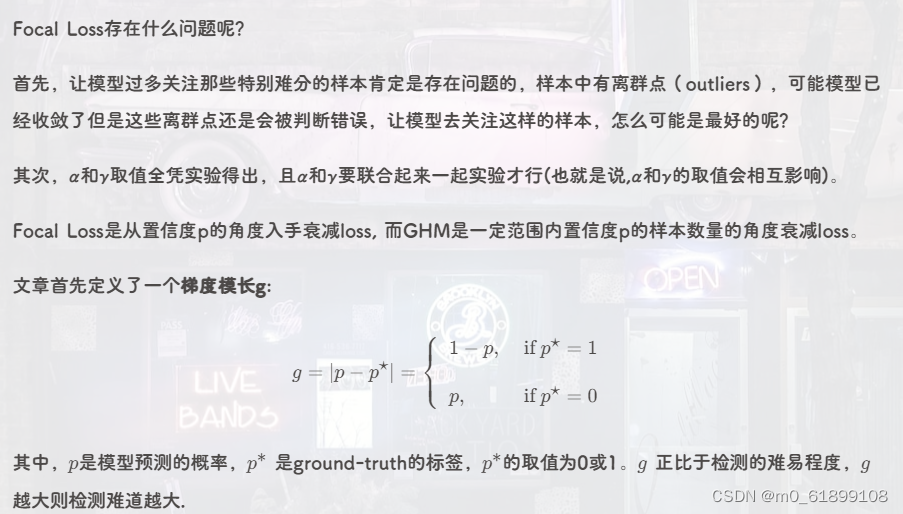

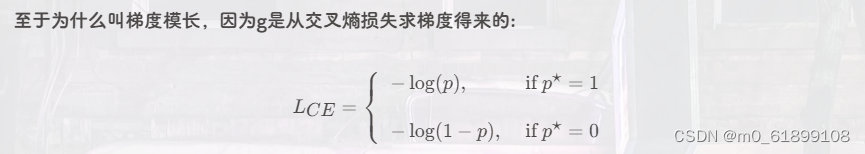

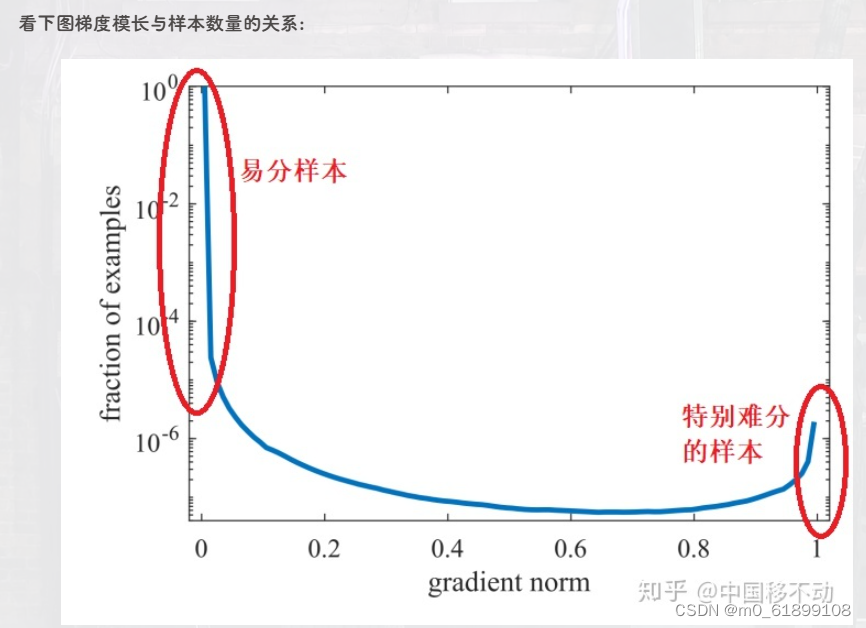

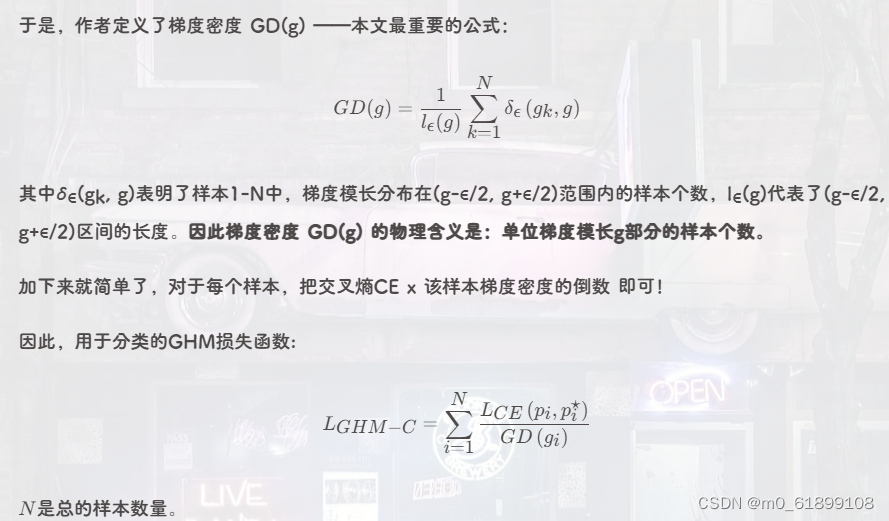

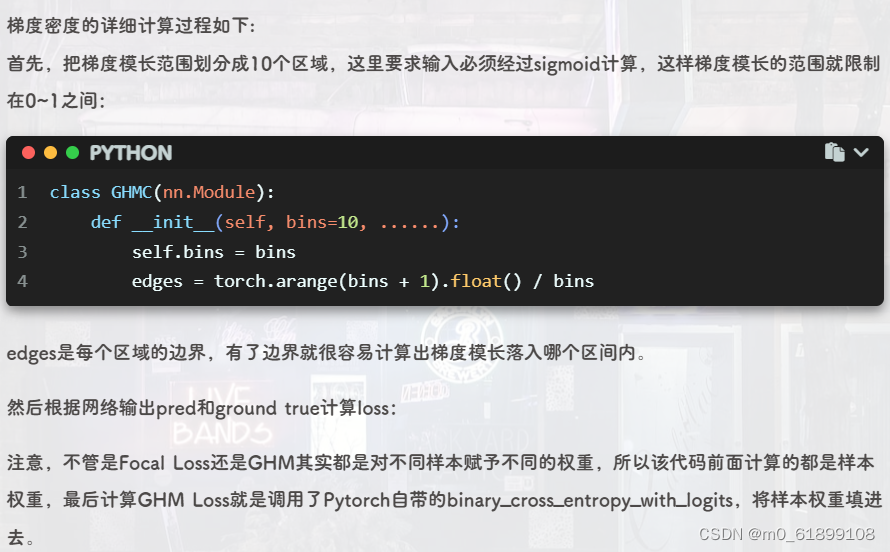

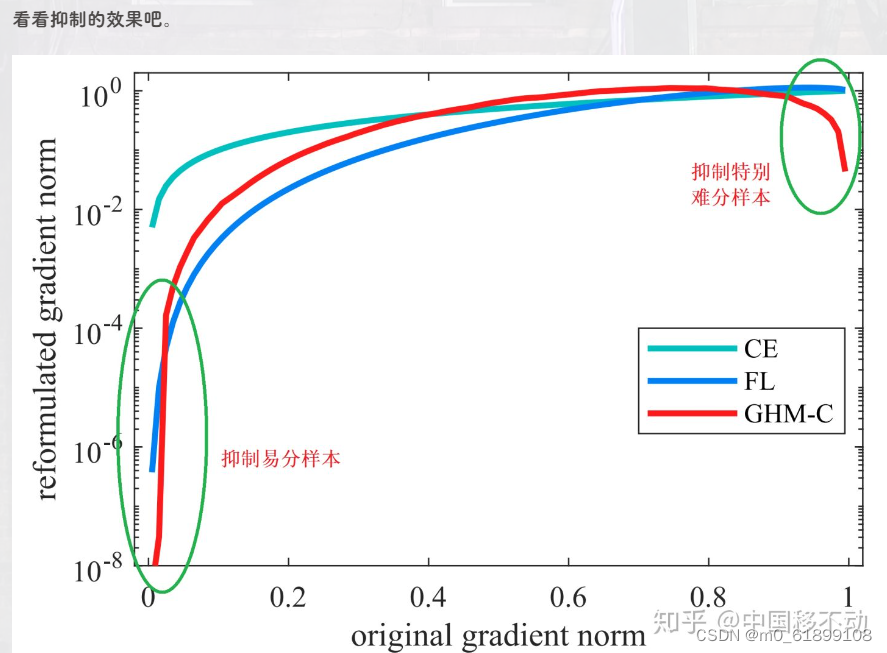

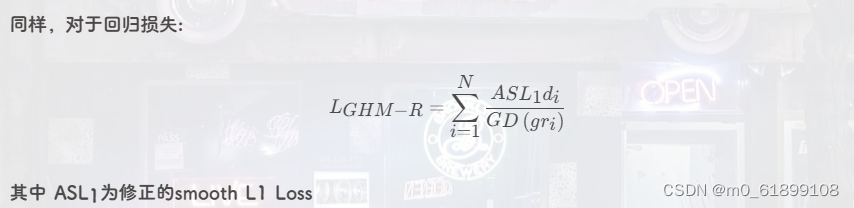

print(loss)9、GHM Loss

# Code implementation

import torch
from torch import nn
import torch.nn.functional as F

class GHM_Loss(nn.Module):
    def __init__(self, bins, alpha):
        super(GHM_Loss, self).__init__()
        self._bins = bins
        self._alpha = alpha
        self._last_bin_count = None

    def _g2bin(self, g):
        # Map gradient norms in [0, 1] to integer bin indices
        return torch.floor(g * (self._bins - 0.0001)).long()

    def _custom_loss(self, x, target, weight):
        raise NotImplementedError

    def _custom_loss_grad(self, x, target):
        raise NotImplementedError

    def forward(self, x, target):
        g = torch.abs(self._custom_loss_grad(x, target)).detach()
        bin_idx = self._g2bin(g)

        # Count how many elements fall into each gradient bin
        bin_count = torch.zeros((self._bins))
        for i in range(self._bins):
            bin_count[i] = (bin_idx == i).sum().item()

        N = (x.size(0) * x.size(1))

        # Smooth the bin counts across calls with a moving average
        if self._last_bin_count is None:
            self._last_bin_count = bin_count
        else:
            bin_count = self._alpha * self._last_bin_count + (1 - self._alpha) * bin_count
            self._last_bin_count = bin_count

        nonempty_bins = (bin_count > 0).sum().item()

        gd = bin_count * nonempty_bins
        gd = torch.clamp(gd, min=0.0001)
        beta = N / gd

        return self._custom_loss(x, target, beta[bin_idx])

class GHMC_Loss(GHM_Loss):
    # Classification loss
    def __init__(self, bins, alpha):
        super(GHMC_Loss, self).__init__(bins, alpha)

    def _custom_loss(self, x, target, weight):
        return F.binary_cross_entropy_with_logits(x, target, weight=weight)

    def _custom_loss_grad(self, x, target):
        return torch.sigmoid(x).detach() - target

class GHMR_Loss(GHM_Loss):
    # Regression loss
    def __init__(self, bins, alpha, mu):
        super(GHMR_Loss, self).__init__(bins, alpha)
        self._mu = mu

    def _custom_loss(self, x, target, weight):
        d = x - target
        mu = self._mu
        loss = torch.sqrt(d * d + mu * mu) - mu
        N = x.size(0) * x.size(1)
        return (loss * weight).sum() / N

    def _custom_loss_grad(self, x, target):
        d = x - target
        mu = self._mu
        return d / torch.sqrt(d * d + mu * mu)

if __name__ == '__main__':
    # This loss applies sigmoid internally; pass raw logits
    output = torch.FloatTensor(
        [
            [0.0550, -0.5005],
            [0.7060, 1.1139]
        ]
    )
    label = torch.FloatTensor(
        [
            [1, 0],
            [0, 1]
        ]
    )
    loss_func = GHMC_Loss(bins=10, alpha=0.75)
    loss = loss_func(output, label)
    print(loss)

10、mean_absolute_percentage_error

MAPE: unlike MAE, the difference between the predicted value and the actual value is divided by the actual value before the mean is taken, and the result is expressed as a percentage.
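For readers working in PyTorch rather than Keras, the same idea might be sketched as follows. The function name `mape` and the `eps` clamp value are assumptions for illustration; the clamp only guards against division by zero.

```python
import torch

def mape(y_true, y_pred, eps=1e-7):
    # Keep the denominator away from zero, then average the relative errors
    denom = torch.clamp(torch.abs(y_true), min=eps)
    return 100.0 * torch.mean(torch.abs((y_true - y_pred) / denom), dim=-1)

y_true = torch.tensor([2.0, 5.0, 8.0])
y_pred = torch.tensor([3.0, 4.0, 5.0])

mape_value = mape(y_true, y_pred)
print(mape_value)  # relative errors 0.5, 0.2, 0.375 give about 35.83 (percent)
```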

def mean_absolute_percentage_error(y_true, y_pred):
    diff = K.abs((y_true - y_pred) / K.clip(K.abs(y_true),
                                            K.epsilon(),
                                            None))
    return 100. * K.mean(diff, axis=-1)

11、mean_squared_logarithmic_error

MSLE: take the logarithm of (value + 1) for both the prediction and the target, take the difference, square it, and average.
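A PyTorch sketch of the same computation, assuming non-negative inputs; the name `msle` and the `eps` clamp are illustrative and not taken from any library.

```python
import torch

def msle(y_true, y_pred, eps=1e-7):
    # log1p(x) computes log(1 + x); the clamp keeps inputs positive
    first_log = torch.log1p(torch.clamp(y_pred, min=eps))
    second_log = torch.log1p(torch.clamp(y_true, min=eps))
    return torch.mean((first_log - second_log) ** 2, dim=-1)

y_true = torch.tensor([2.0, 5.0, 8.0])
y_pred = torch.tensor([3.0, 4.0, 5.0])

msle_value = msle(y_true, y_pred)
print(msle_value)  # about 0.0935
```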

def mean_squared_logarithmic_error(y_true, y_pred):

first_log = K.log(K.clip(y_pred, K.epsilon(), None) + 1.)

second_log = K.log(K.clip(y_true, K.epsilon(), None) + 1.)

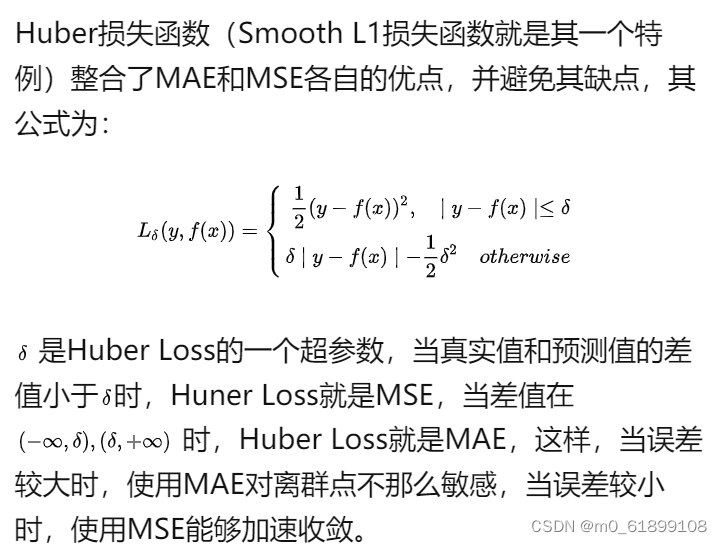

return K.mean(K.square(first_log - second_log), axis=-1)12、Huber Loss

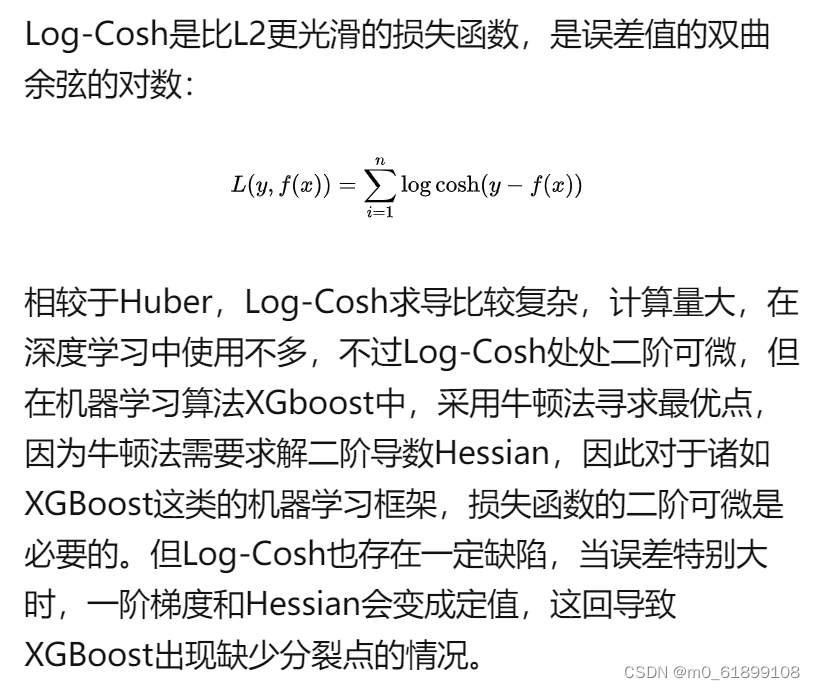
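This section has no code, so here is a minimal sketch under the usual definition of Huber loss: quadratic for errors up to `delta`, linear beyond it. The helper `huber_manual` is hypothetical; `nn.HuberLoss` is assumed to be available, which requires a reasonably recent PyTorch release.

```python
import torch
from torch import nn

def huber_manual(pred, target, delta=1.0):
    d = torch.abs(pred - target)
    # 0.5 * d^2 inside the delta band, delta * (d - 0.5 * delta) outside
    per_elem = torch.where(d <= delta, 0.5 * d * d, delta * (d - 0.5 * delta))
    return per_elem.mean()

pred = torch.tensor([[3.0], [4.0], [5.0]])
target = torch.tensor([[2.0], [5.0], [8.0]])

manual = huber_manual(pred, target, delta=2.0)
builtin = nn.HuberLoss(delta=2.0)(pred, target)
print(manual, builtin)  # per-element terms 0.5, 0.5, 4.0 give about 1.6667
```

With `delta=1.0` this coincides with smooth L1 at its default `beta`.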

13、Log-Cosh Loss

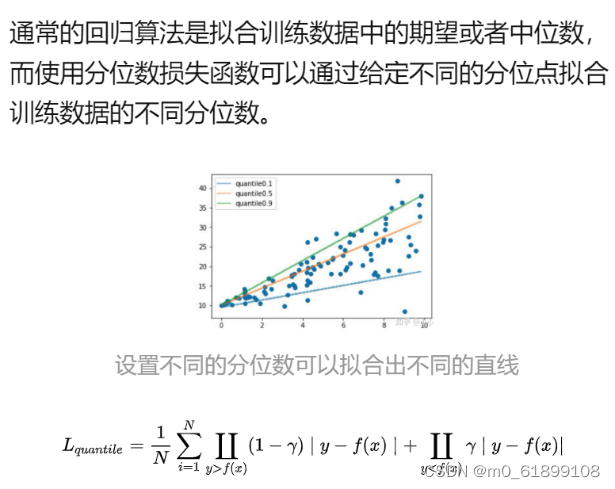

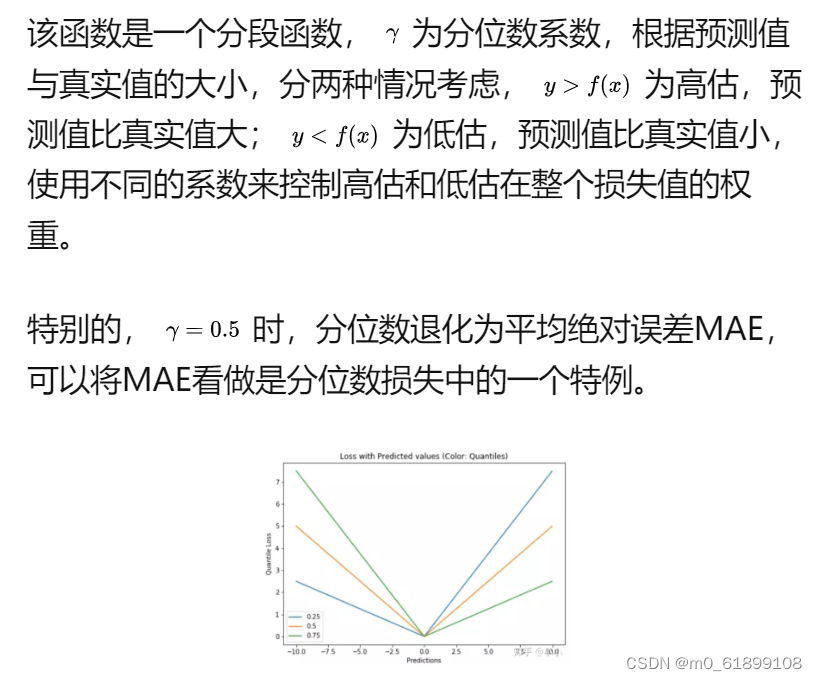
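A possible PyTorch sketch of Log-Cosh loss, taken here as the mean of log(cosh(prediction - target)). The function name `log_cosh_loss` is made up for illustration. It uses the identity log(cosh(d)) = d + softplus(-2d) - log(2), which avoids overflow in `cosh` for large errors.

```python
import math
import torch
import torch.nn.functional as F

def log_cosh_loss(pred, target):
    d = pred - target
    # Stable form of log(cosh(d)): d + log(1 + exp(-2d)) - log(2)
    return torch.mean(d + F.softplus(-2.0 * d) - math.log(2.0))

pred = torch.tensor([3.0, 4.0, 5.0])
target = torch.tensor([2.0, 5.0, 8.0])

stable = log_cosh_loss(pred, target)
naive = torch.log(torch.cosh(pred - target)).mean()  # fine for small errors
print(stable, naive)  # both about 1.0590
```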

14、Quantile Loss

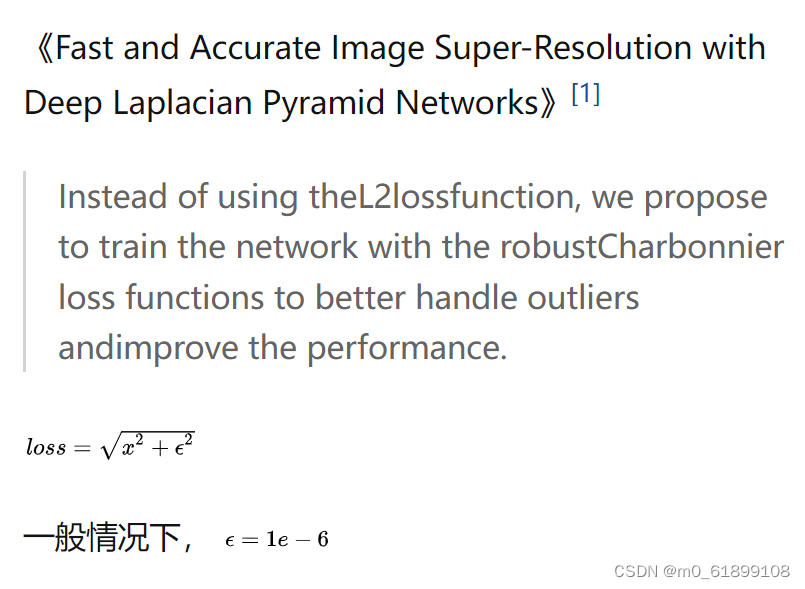
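A minimal sketch of quantile (pinball) loss, assuming the common form max(q * e, (q - 1) * e) with e = target - prediction. The function name `quantile_loss` and the example quantiles are illustrative only.

```python
import torch

def quantile_loss(pred, target, q=0.5):
    e = target - pred
    # Under-prediction (e > 0) is weighted by q, over-prediction by (1 - q)
    return torch.mean(torch.maximum(q * e, (q - 1) * e))

pred = torch.tensor([3.0, 4.0, 5.0])
target = torch.tensor([2.0, 5.0, 8.0])

median_loss = quantile_loss(pred, target, q=0.5)  # half of the MAE
q90_loss = quantile_loss(pred, target, q=0.9)     # penalizes under-prediction more
print(median_loss, q90_loss)  # about 0.8333 and 1.2333
```

At `q=0.5` the loss is half the mean absolute error, so it targets the median.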

15、charbonnier

class L1_Charbonnier_loss(torch.nn.Module):

"""L1 Charbonnierloss."""

def __init__(self):

super(L1_Charbonnier_loss, self).__init__()

self.eps = 1e-6

def forward(self, X, Y):

diff = torch.add(X, -Y)

error = torch.sqrt(diff * diff + self.eps)

loss = torch.mean(error)

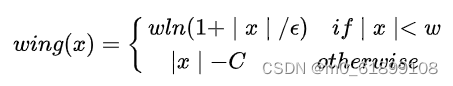

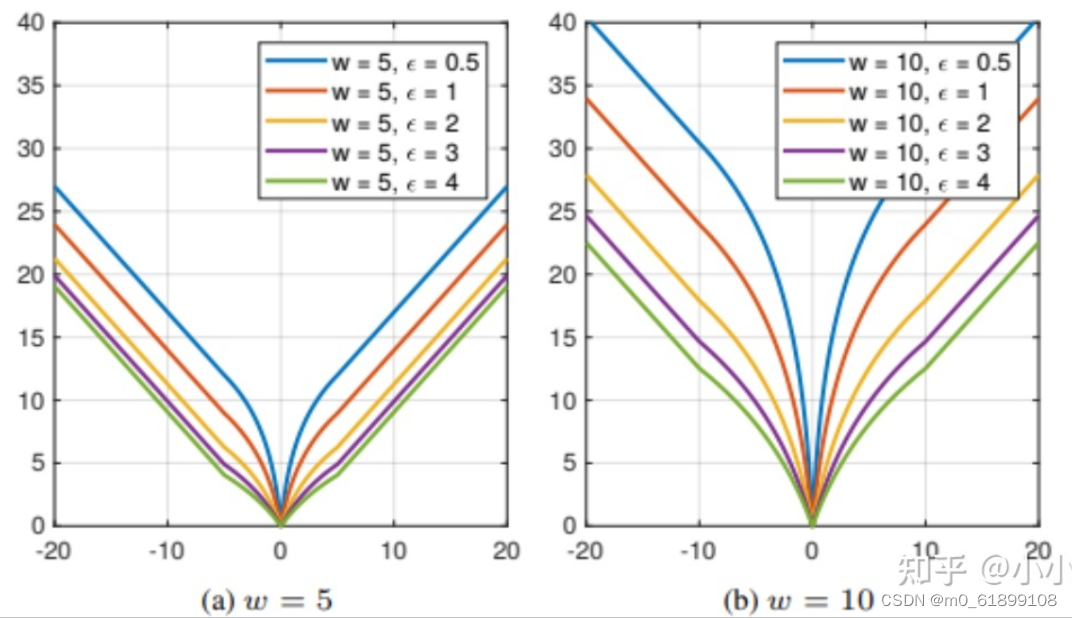

return loss16、wing loss
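No code is given for wing loss, so the following is only a sketch under the commonly cited definition: w * ln(1 + |d| / eps) for |d| < w, and |d| - C otherwise, with C chosen so the two pieces meet at |d| = w. The defaults `w=10.0` and `eps=2.0` and the function name `wing_loss` are assumptions.

```python
import math
import torch

def wing_loss(pred, target, w=10.0, eps=2.0):
    d = torch.abs(pred - target)
    # Constant that joins the log piece and the linear piece at |d| = w
    c = w - w * math.log(1.0 + w / eps)
    per_elem = torch.where(d < w, w * torch.log(1.0 + d / eps), d - c)
    return per_elem.mean()

pred = torch.tensor([3.0, 4.0, 5.0])
target = torch.tensor([2.0, 5.0, 8.0])

wing_value = wing_loss(pred, target)
print(wing_value)  # about 5.7574
```

Compared with L1, the log piece gives small and medium errors more influence.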

边栏推荐

- 线代笔记....

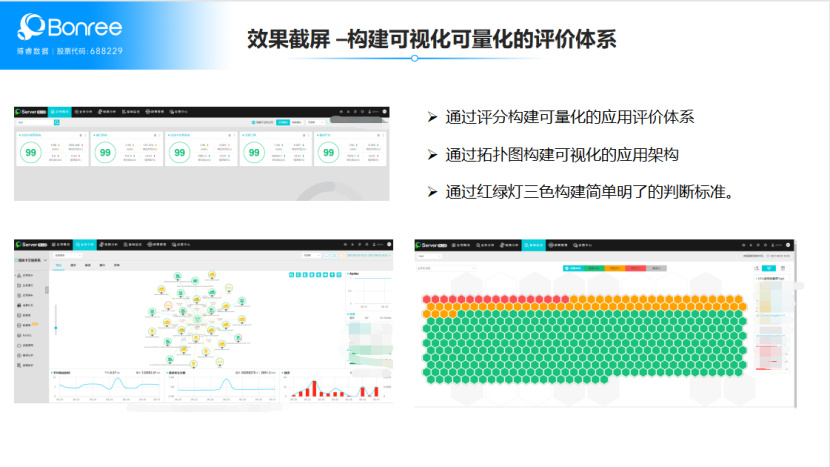

- 监控界的最强王者,没有之一!

- 10、 Process management

- Openmv4 learning notes 1 --- one click download, background knowledge of image processing, lab brightness contrast

- 【论文笔记】TransUNet: Transformers Make StrongEncoders for Medical Image Segmentation

- Camel case with Hungarian notation

- 44 colleges and universities were selected! Publicity of distributed intelligent computing project list

- Yutai micro rushes to the scientific innovation board: Huawei and Xiaomi fund are shareholders to raise 1.3 billion

- Reptiles have a good time. Are you full? These three bottom lines must not be touched!

- 徐翔妻子应莹回应“股评”:自己写的!

猜你喜欢

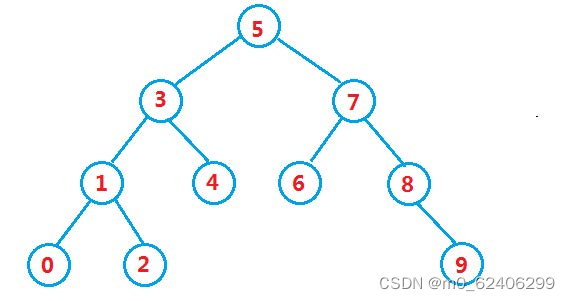

Binary search tree

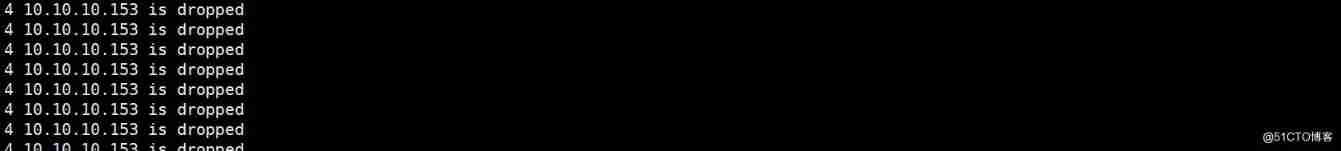

Solve DoS attack production cases

能源行业的数字化“新”运维

Based on butterfly species recognition

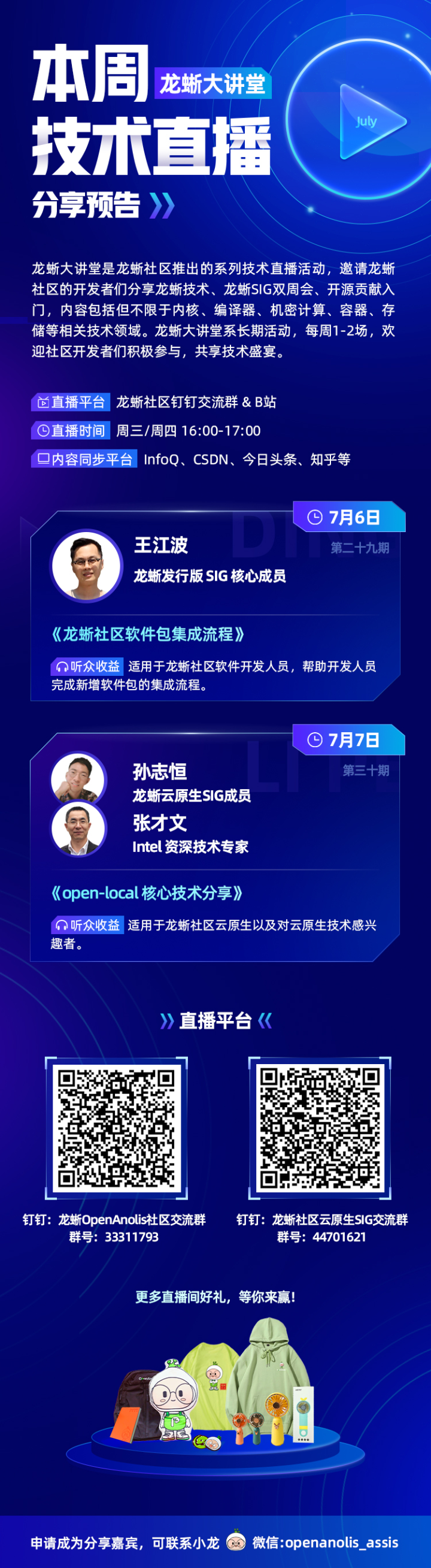

Unlock 2 live broadcast themes in advance! Today, I will teach you how to complete software package integration Issues 29-30

手写一个的在线聊天系统(原理篇1)

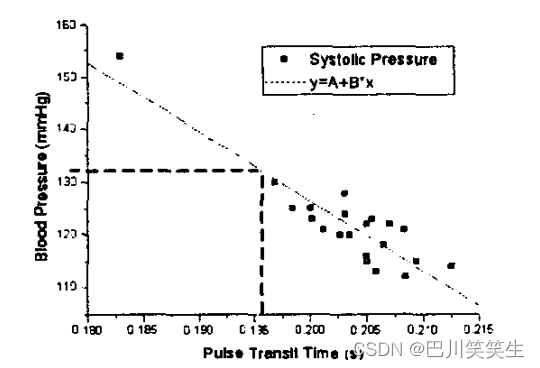

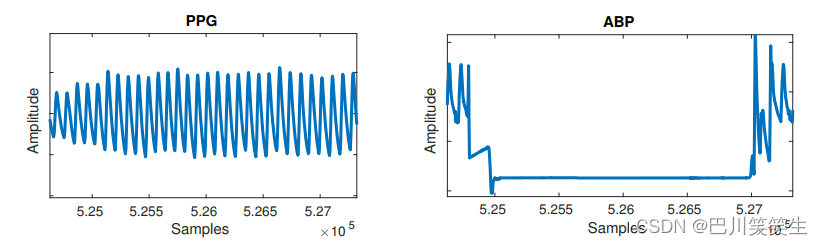

用于远程医疗的无创、无袖带血压测量【翻译】

Lucun smart sprint technology innovation board: annual revenue of 400million, proposed to raise 700million

helm部署etcd集群

根据PPG估算血压利用频谱谱-时间深度神经网络【翻】

随机推荐

基于ppg和fft神经网络的光学血压估计【翻译】

The role of applet in industrial Internet

Some recruitment markets in Shanghai refuse to recruit patients with covid-19 positive

Execution process of MySQL query request - underlying principle

R语言使用order函数对dataframe数据进行排序、基于单个字段(变量)进行降序排序(DESCENDING)

Using block to realize the traditional values between two pages

应用使用Druid连接池经常性断链问题分析

青龙面板最近的库

Crawling data encounters single point login problem

Yutai micro rushes to the scientific innovation board: Huawei and Xiaomi fund are shareholders to raise 1.3 billion

Helm deploy etcd cluster

关于静态类型、动态类型、id、instancetype

How are you in the first half of the year occupied by the epidemic| Mid 2022 summary

Wx applet learning notes day01

How to improve website weight

JDBC驱动器、C3P0、Druid和JDBCTemplate相关依赖jar包

Self supervised heterogeneous graph neural network with CO comparative learning

Stm32+hc05 serial port Bluetooth design simple Bluetooth speaker

Understanding disentangling in β- VAE paper reading notes

QPushButton绑定快捷键的注意事项