Accident Indicator Statistics

2022-07-06 02:18:00 【小胖超凶哦!】

[[email protected] ~]# hive

Logging initialized using configuration in jar:file:/usr/local/soft/hive-1.2.1/lib/hive-common-1.2.1.jar!/hive-log4j.properties

hive> select sgfssj from dwd.base_acd_file order by sgfssj limit 10;

2006-01-22 09:30:00.0

2006-12-21 00:15:00.0

2006-12-21 13:50:00.0

2006-12-21 16:30:00.0

2006-12-21 18:02:00.0

2006-12-22 11:30:00.0

2006-12-22 13:30:00.0

2006-12-22 14:30:00.0

2006-12-22 17:30:00.0

2006-12-22 23:55:00.0

Time taken: 28.559 seconds, Fetched: 10 row(s)

hive> select sgfssj from dwd.base_acd_file order by sgfssj desc limit 10;

OK

2020-10-14 17:28:00.0

2020-10-14 11:16:00.0

2020-10-13 23:06:00.0

2020-10-13 19:25:00.0

2020-10-13 14:12:00.0

2020-10-13 12:10:00.0

2020-10-12 16:04:00.0

2020-10-12 11:50:00.0

2020-10-12 07:38:00.0

2020-10-12 07:30:00.0

Time taken: 21.673 seconds, Fetched: 10 row(s)

hive> select current_date;

OK

2022-07-05

Time taken: 0.459 seconds, Fetched: 1 row(s)

hive> select year(sgfssj),count(*) from dwd.base_acd_file group by year(sgfssj);

2006 43

2007 1082

2008 1070

2009 1377

2010 1579

2011 2604

2012 2117

2013 1802

2014 1936

2015 1991

2016 2094

2017 1933

2018 2373

2019 2617

2020 1930

Time taken: 22.075 seconds, Fetched: 15 row(s)

hive> select substr(sgfssj,1,10)

> ,count(1)as dr_sgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10);

Time taken: 45.99 seconds, Fetched: 4966 row(s)

hive> select t1.tjrq

> ,t1.dr_sgs

> ,sum(t1.dr_sgs)over (partition by substr(t1.tjrq,1,4))as jn_sgs

> from(

> select substr(sgfssj,1,10)as tjrq

> ,count(1)as dr_sgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10)

> )t1;

Time taken: 45.99 seconds, Fetched: 4966 row(s)

hive> select t1.tjrq

> ,t1.dr_sgs

> ,sum(t1.dr_sgs)over (partition by substr(t1.tjrq,1,4))as jn_sgs

> ,lag(t1.dr_sgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_sgs

> from(

> select substr(sgfssj,1,10)as tjrq

> ,count(1)as dr_sgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10)

> )t1;

Time taken: 73.903 seconds, Fetched: 4966 row(s)
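The session does not print the rows of the windowed query above, so the effect of the two window clauses is hard to check by eye. The following is a minimal sketch, assuming SQLite (through Python's `sqlite3`, which needs SQLite 3.25 or later for window functions) as a stand-in for Hive. The sample rows are hypothetical, and the table is created without the `dwd.` prefix; only the column name `sgfssj` and the aliases come from the session.

```python
import sqlite3

# Hypothetical sample rows; only the column name sgfssj comes from the session.
rows = [
    ("2019-03-01 08:00:00.0",),
    ("2019-03-01 17:30:00.0",),
    ("2019-03-02 09:15:00.0",),
    ("2020-03-01 10:00:00.0",),
    ("2020-03-02 11:00:00.0",),
    ("2020-03-02 12:00:00.0",),
    ("2020-03-02 23:45:00.0",),
]

conn = sqlite3.connect(":memory:")
conn.execute("create table base_acd_file (sgfssj text)")
conn.executemany("insert into base_acd_file values (?)", rows)

# Same shape as the Hive query: daily count (dr_sgs), yearly total (jn_sgs),
# and the count for the same month-day in the previous available year (qntq_sgs).
query = """
select t1.tjrq
      ,t1.dr_sgs
      ,sum(t1.dr_sgs) over (partition by substr(t1.tjrq,1,4)) as jn_sgs
      ,lag(t1.dr_sgs,1,1) over (partition by substr(t1.tjrq,6,5)
                                order by substr(t1.tjrq,1,4)) as qntq_sgs
from (
    select substr(sgfssj,1,10) as tjrq
          ,count(1) as dr_sgs
    from base_acd_file
    group by substr(sgfssj,1,10)
) t1
order by t1.tjrq
"""
result = conn.execute(query).fetchall()
for row in result:
    print(row)
```

On this sample the rows are `('2019-03-01', 2, 3, 1)`, `('2019-03-02', 1, 3, 1)`, `('2020-03-01', 1, 4, 2)` and `('2020-03-02', 3, 4, 1)`. Two things are worth noticing: the default of 1 in `lag(..., 1, 1)` makes a date with no earlier year look the same as a date whose earlier count really was 1, and `lag` picks the previous year that has a row for that month-day, which is not necessarily the calendar year before.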

hive> exit;

[[email protected] ~]# cd /usr/local/soft/spark-2.4.5/

[[email protected] spark-2.4.5]# ls

bin examples LICENSE NOTICE README.md yarn

conf jars licenses python RELEASE

data kubernetes logs R sbin

[[email protected] spark-2.4.5]# spark-sql --conf spark.sql.shuffle.partitions=2

spark-sql> show databases;

22/07/05 11:11:24 INFO codegen.CodeGenerator: Code generated in 227.508135 ms

default

dwd

Time taken: 0.351 seconds, Fetched 2 row(s)

22/07/05 11:11:24 INFO thriftserver.SparkSQLCLIDriver: Time taken: 0.351 seconds, Fetched 2 row(s)

spark-sql> use dwd;

Time taken: 0.041 seconds

22/07/05 11:11:31 INFO thriftserver.SparkSQLCLIDriver: Time taken: 0.041 seconds

spark-sql> show tables;

22/07/05 11:11:41 INFO spark.ContextCleaner: Cleaned accumulator 2

22/07/05 11:11:41 INFO spark.ContextCleaner: Cleaned accumulator 0

22/07/05 11:11:41 INFO spark.ContextCleaner: Cleaned accumulator 1

22/07/05 11:11:41 INFO codegen.CodeGenerator: Code generated in 13.604985 ms

dwd base_acd_file false

dwd base_acd_filehuman false

dwd base_bd_drivinglicense false

dwd base_bd_vehicle false

dwd base_vio_force false

dwd base_vio_surveil false

dwd base_vio_violation false

Time taken: 0.119 seconds, Fetched 7 row(s)

22/07/05 11:11:41 INFO thriftserver.SparkSQLCLIDriver: Time taken: 0.119 seconds, Fetched 7 row(s)

spark-sql> select tt1.tjrq

> ,tt1.dr_sgs

> ,tt1.jn_sgs

> ,tt1.qntq_sgs

> ,NVL(round((abs(tt1.dr_sgs-tt1.qntq_sgs)/NVL(tt1.qntq_sgs,1))*100,2),0) as tb_sgs

> ,if(tt1.dr_sgs-tt1.qntq_sgs>0,"上升",'下降') as tb_sgs_bj

> from(

> select t1.tjrq

> ,t1.dr_sgs

> ,sum(t1.dr_sgs)over (partition by substr(t1.tjrq,1,4))as jn_sgs

> ,lag(t1.dr_sgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_sgs

> from(

> select substr(sgfssj,1,10)as tjrq

> ,count(1)as dr_sgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10)

> )t1

> )tt1;

Time taken: 0.596 seconds, Fetched 4966 row(s)

22/07/05 11:32:08 INFO thriftserver.SparkSQLCLIDriver: Time taken: 0.596 seconds, Fetched 4966 row(s)
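The `tb_sgs` and `tb_sgs_bj` expressions in the query above pack a percentage change and a direction flag into two columns. As a rough illustration, here is the same arithmetic for a single row in plain Python. The function name `yoy_change` is hypothetical, and the handling of a zero prior value rests on the assumption that Spark SQL's default (non-ANSI) mode returns NULL for division by zero.

```python
from typing import Tuple


def yoy_change(dr: int, qntq: int) -> Tuple[float, str]:
    """Mirror tb_sgs / tb_sgs_bj for one row (hypothetical helper).

    dr   -- today's count (dr_sgs)
    qntq -- count on the same month-day in the earlier year (qntq_sgs)
    """
    if qntq == 0:
        # Assumption: Spark SQL yields NULL for x / 0 in its default mode,
        # and the outer NVL(..., 0) then turns that NULL into 0.
        pct = 0.0
    else:
        # abs() drops the sign, so the direction lives only in the flag.
        # Python's round() can differ from Spark's on exact ties.
        pct = round(abs(dr - qntq) / qntq * 100, 2)
    # Strictly greater than zero means "上升" (rise); an unchanged
    # count therefore falls into "下降" (fall).
    flag = "上升" if dr - qntq > 0 else "下降"
    return pct, flag


print(yoy_change(3, 1))
print(yoy_change(1, 3))
print(yoy_change(2, 2))
```

Because `lag(..., 1, 1)` never returns NULL here, the inner `NVL(tt1.qntq_sgs, 1)` has no effect on these queries. An unchanged count is reported as a 0 percent "下降", which may or may not be the intended label.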

spark-sql> select tt1.tjrq

> ,tt1.dr_sgs

> ,tt1.jn_sgs

> ,tt1.qntq_sgs

> ,NVL(round((abs(tt1.dr_sgs-tt1.qntq_sgs)/NVL(tt1.qntq_sgs,1))*100,2),0) as tb_sgs

> ,if(tt1.dr_sgs-tt1.qntq_sgs>0,"上升",'下降') as tb_sgs_bj

> from(

> select t1.tjrq

> ,t1.dr_sgs

> ,sum(t1.dr_sgs)over (partition by substr(t1.tjrq,1,4))as jn_sgs

> ,lag(t1.dr_sgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_sgs

> from(

> select substr(sgfssj,1,10)as tjrq

> ,count(1)as dr_sgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10)

> )t1

> )tt1

> order by tt1.tjrq;

Time taken: 5.46 seconds, Fetched 4966 row(s)

22/07/05 14:36:35 INFO thriftserver.SparkSQLCLIDriver: Time taken: 5.46 seconds, Fetched 4966 row(s)

spark-sql> select tt1.tjrq

> ,tt1.dr_sgs

> ,tt1.jn_sgs

> ,tt1.qntq_sgs

>

> ,NVL(round((abs(tt1.dr_sgs-tt1.qntq_sgs)/NVL(tt1.qntq_sgs,1))*100,2),0) as tb_sgs

> ,if(tt1.dr_sgs-tt1.qntq_sgs>0,"上升",'下降') as tb_sgs_bj

>

> ,NVL(round((abs(tt1.dr_swsgs-tt1.qntq_swsgs)/NVL(tt1.qntq_swsgs,1))*100,2),0) as tb_swsgs

> ,if(tt1.dr_swsgs-tt1.qntq_swsgs>0,"上升",'下降') as tb_swsgs_bj

> from(

> select t1.tjrq

> ,t1.dr_sgs

> ,t1.dr_swsgs

>

> ,sum(t1.dr_sgs)over (partition by substr(t1.tjrq,1,4))as jn_sgs

> ,lag(t1.dr_sgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_sgs

>

> ,sum(t1.dr_swsgs)over (partition by substr(t1.tjrq,1,4))as jn_swsgs

> ,lag(t1.dr_swsgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_swsgs

> from(

> select substr(sgfssj,1,10)as tjrq

> ,count(1)as dr_sgs

> ,sum(if(swrs7>0,1,0))as dr_swsgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10)

> )t1

> )tt1

> order by tt1.tjrq;

Time taken: 5.372 seconds, Fetched 4966 row(s)

22/07/05 15:17:19 INFO thriftserver.SparkSQLCLIDriver: Time taken: 5.372 seconds, Fetched 4966 row(s)

spark-sql> select tt1.tjrq

> ,tt1.dr_sgs

> ,tt1.jn_sgs

> ,tt1.qntq_sgs

> ,tt1.dr_swsgs

> ,tt1.qntq_swsgs

>

> ,NVL(round((abs(tt1.dr_sgs-tt1.qntq_sgs)/NVL(tt1.qntq_sgs,1))*100,2),0) as tb_sgs

> ,if(tt1.dr_sgs-tt1.qntq_sgs>0,"上升",'下降') as tb_sgs_bj

>

> ,NVL(round((abs(tt1.dr_swsgs-tt1.qntq_swsgs)/NVL(tt1.qntq_swsgs,1))*100,2),0) as tb_swsgs

> ,if(tt1.dr_swsgs-tt1.qntq_swsgs>0,"上升",'下降') as tb_swsgs_bj

> from(

> select t1.tjrq

> ,t1.dr_sgs

> ,t1.dr_swsgs

>

> ,sum(t1.dr_sgs)over (partition by substr(t1.tjrq,1,4))as jn_sgs

> ,lag(t1.dr_sgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_sgs

>

> ,sum(t1.dr_swsgs)over (partition by substr(t1.tjrq,1,4))as jn_swsgs

> ,lag(t1.dr_swsgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_swsgs

> from(

> select substr(sgfssj,1,10)as tjrq

> ,count(1)as dr_sgs

> ,sum(if(swrs7>0,1,0))as dr_swsgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10)

> )t1

> )tt1

> order by tt1.tjrq;

Time taken: 4.13 seconds, Fetched 4966 row(s)

22/07/05 15:20:00 INFO thriftserver.SparkSQLCLIDriver: Time taken: 4.13 seconds, Fetched 4966 row(s)

spark-sql> select tt1.tjrq

> ,tt1.dr_sgs

> ,tt1.jn_sgs

> ,tt1.qntq_sgs

> ,tt1.dr_swsgs

> ,tt1.jn_swsgs

> ,tt1.qntq_swsgs

>

> ,NVL(round((abs(tt1.dr_sgs-tt1.qntq_sgs)/NVL(tt1.qntq_sgs,1))*100,2),0) as tb_sgs

> ,if(tt1.dr_sgs-tt1.qntq_sgs>0,"上升",'下降') as tb_sgs_bj

>

> ,NVL(round((abs(tt1.dr_swsgs-tt1.qntq_swsgs)/NVL(tt1.qntq_swsgs,1))*100,2),0) as tb_swsgs

> ,if(tt1.dr_swsgs-tt1.qntq_swsgs>0,"上升",'下降') as tb_swsgs_bj

> from(

> select t1.tjrq

> ,t1.dr_sgs

> ,t1.dr_swsgs

>

> ,sum(t1.dr_sgs)over (partition by substr(t1.tjrq,1,4))as jn_sgs

> ,lag(t1.dr_sgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_sgs

>

> ,sum(t1.dr_swsgs)over (partition by substr(t1.tjrq,1,4))as jn_swsgs

> ,lag(t1.dr_swsgs,1,1)over (partition by substr(t1.tjrq,6,5)order by substr(t1.tjrq,1,4))as qntq_swsgs

> from(

> select substr(sgfssj,1,10)as tjrq

> ,count(1)as dr_sgs

> ,sum(if(swrs7>0,1,0))as dr_swsgs

> from dwd.base_acd_file

> group by substr(sgfssj,1,10)

> )t1

> )tt1

> order by tt1.tjrq;

Time taken: 5.653 seconds, Fetched 4966 row(s)

22/07/05 15:23:09 INFO thriftserver.SparkSQLCLIDriver: Time taken: 5.653 seconds, Fetched 4966 row(s)
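The later queries add `sum(if(swrs7>0,1,0)) as dr_swsgs` next to `count(1) as dr_sgs`, which counts only the rows that meet a condition within each day. A small sketch of that conditional-count pattern follows, again assuming SQLite as a stand-in and hypothetical sample rows. The session does not define `swrs7`; treating it as a per-accident death count is an assumption based on the alias.

```python
import sqlite3

# Hypothetical rows: (sgfssj, swrs7). Only the column names come from the session.
rows = [
    ("2020-03-01 08:00:00.0", 0),
    ("2020-03-01 09:30:00.0", 2),
    ("2020-03-01 21:10:00.0", None),
    ("2020-03-02 07:45:00.0", 1),
    ("2020-03-02 13:20:00.0", 1),
]

conn = sqlite3.connect(":memory:")
conn.execute("create table base_acd_file (sgfssj text, swrs7 integer)")
conn.executemany("insert into base_acd_file values (?, ?)", rows)

# SQLite spelling of sum(if(swrs7>0,1,0)): a CASE expression inside sum().
# A NULL swrs7 fails the comparison and contributes 0.
query = """
select substr(sgfssj,1,10) as tjrq
      ,count(1) as dr_sgs
      ,sum(case when swrs7 > 0 then 1 else 0 end) as dr_swsgs
from base_acd_file
group by substr(sgfssj,1,10)
order by tjrq
"""
daily = conn.execute(query).fetchall()
for row in daily:
    print(row)
```

Here `daily` is `[('2020-03-01', 3, 1), ('2020-03-02', 2, 2)]`: every row counts toward `dr_sgs`, while only rows with a positive `swrs7` count toward `dr_swsgs`. Since `dr_swsgs` can be 0, the division in `tb_swsgs` can hit a zero denominator, unlike the one in `tb_sgs`.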

- Global and Chinese market of commercial cheese crushers 2022-2028: Research Report on technology, participants, trends, market size and share

- 零基础自学STM32-野火——GPIO复习篇——使用绝对地址操作GPIO

- A basic lintcode MySQL database problem

- Leetcode3, implémenter strstr ()

- HDU_p1237_简单计算器_stack

- General process of machine learning training and parameter optimization (discussion)

- Global and Chinese markets for single beam side scan sonar 2022-2028: Research Report on technology, participants, trends, market size and share

- Ali test Open face test

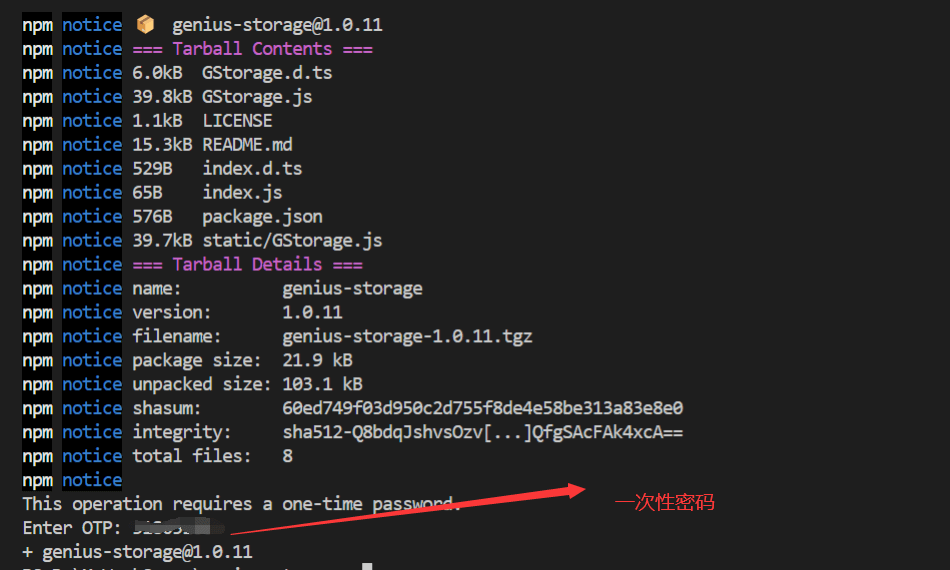

- 使用npm发布自己开发的工具包笔记

- 2022 China eye Expo, Shandong vision prevention and control exhibition, myopia, China myopia correction Exhibition

猜你喜欢

0211 embedded C language learning

Publish your own toolkit notes using NPM

Pangolin Library: subgraph

Keyword static

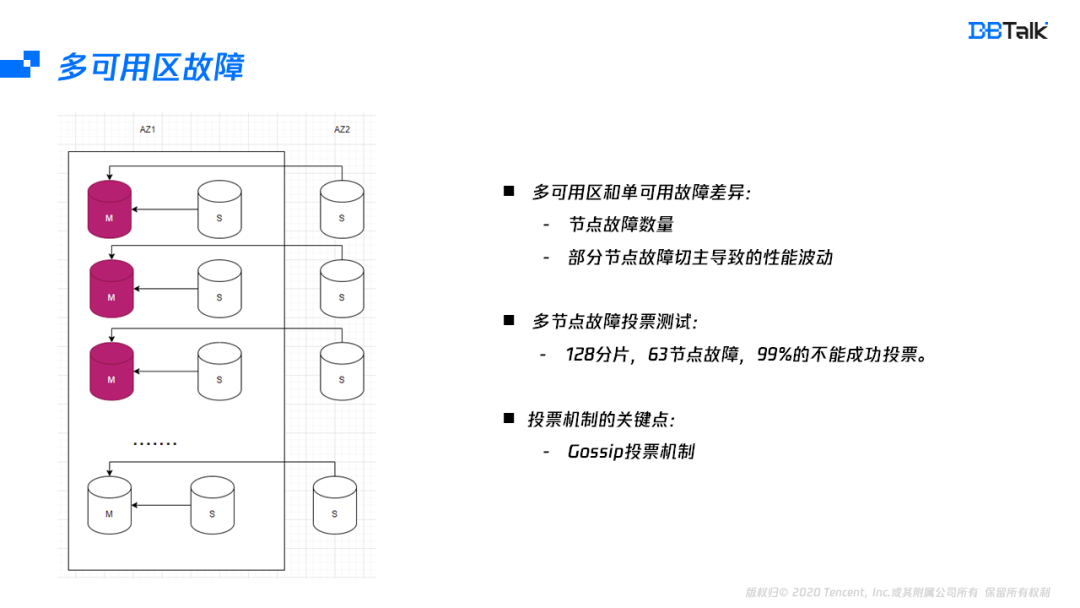

How does redis implement multiple zones?

Lecture 4 of Data Engineering Series: sample engineering of data centric AI

02. Go language development environment configuration

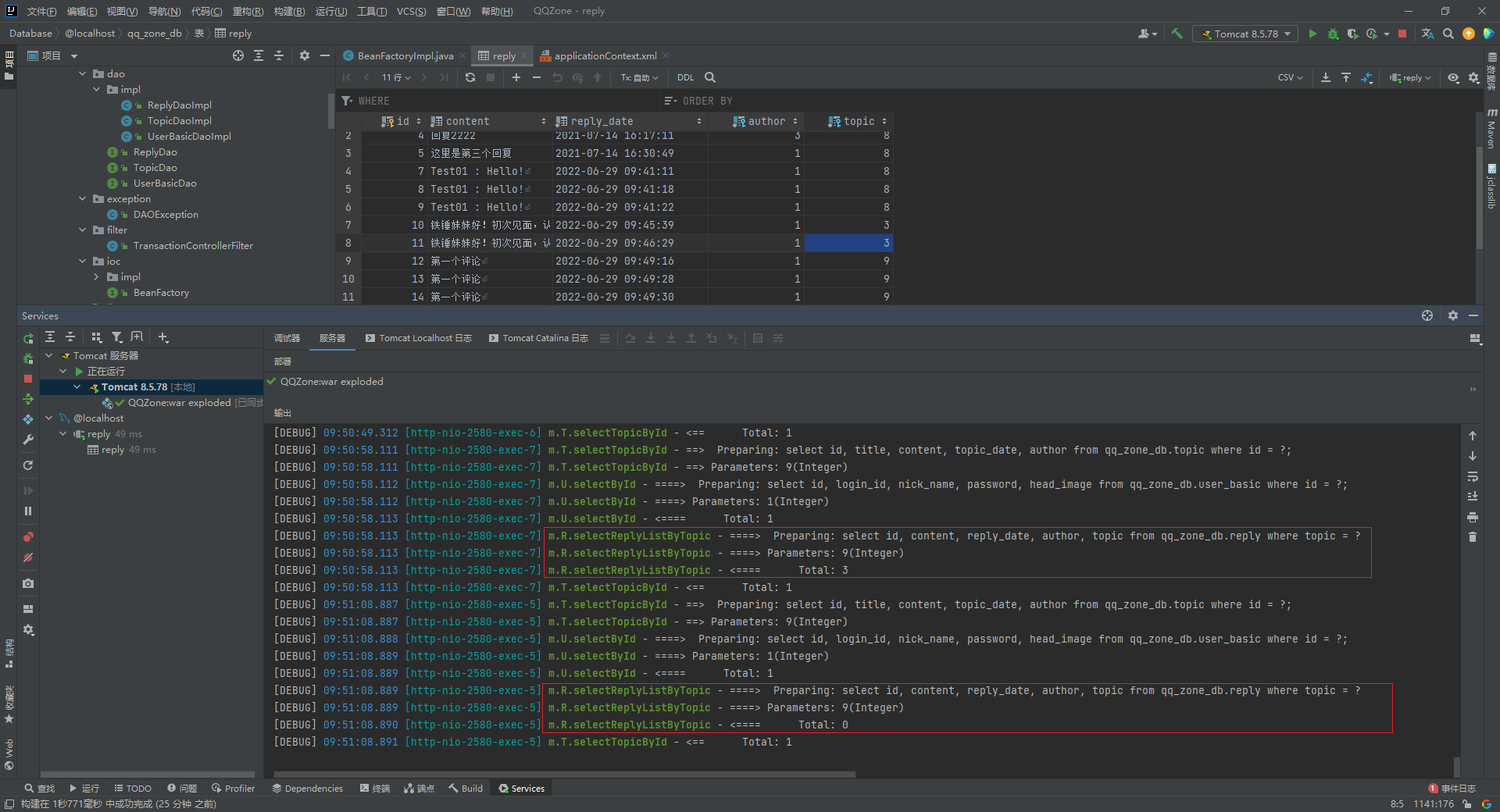

同一个 SqlSession 中执行两条一模一样的SQL语句查询得到的 total 数量不一样

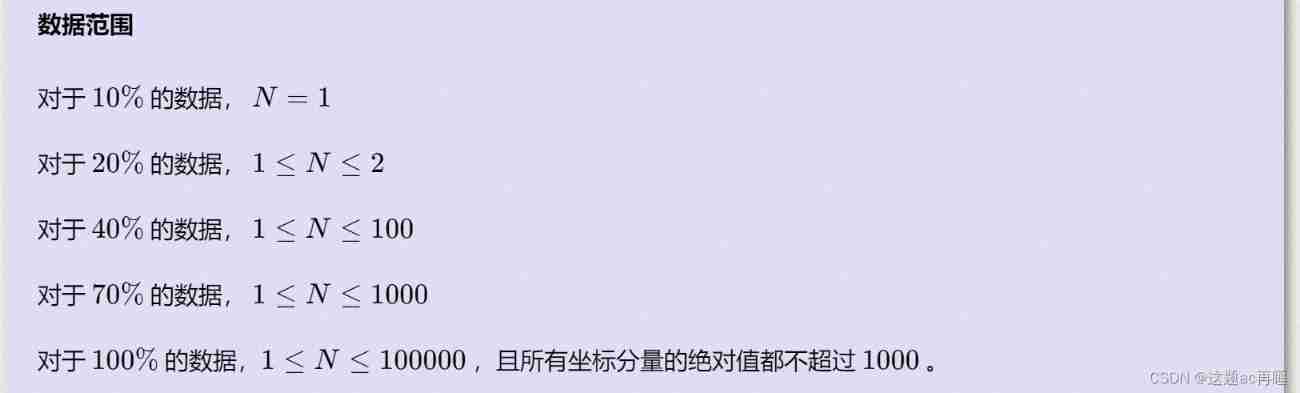

Jisuanke - t2063_ Missile interception

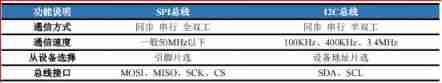

SPI communication protocol

随机推荐

一题多解,ASP.NET Core应用启动初始化的N种方案[上篇]

LeetCode 103. Binary tree zigzag level order transverse - Binary Tree Series Question 5

ftp上传文件时出现 550 Permission denied,不是用户权限问题

Social networking website for college students based on computer graduation design PHP

论文笔记: 极限多标签学习 GalaXC (暂存, 还没学完)

抓包整理外篇——————状态栏[ 四]

PAT甲级 1033 To Fill or Not to Fill

The intelligent material transmission system of the 6th National Games of the Blue Bridge Cup

Sword finger offer 12 Path in matrix

[solution] every time idea starts, it will build project

SSM 程序集

leetcode-2. Palindrome judgment

Method of changing object properties

PHP campus movie website system for computer graduation design

[coppeliasim] efficient conveyor belt

RDD conversion operator of spark

构建库函数的雏形——参照野火的手册

How to generate rich text online

The ECU of 21 Audi q5l 45tfsi brushes is upgraded to master special adjustment, and the horsepower is safely and stably increased to 305 horsepower

Ali test Open face test