
On Backdoors in Deep Learning Models

2022-07-02 07:59:00 【MezereonXP】

Deep learning security can be roughly divided into two areas: adversarial examples and backdoors.

For adversarial examples, see my previous article on adversarial example attacks.

This time we focus on backdoor attacks in deep learning. A backdoor is a hidden channel that is not easily discovered and that is exposed only under certain special conditions.

So what does a backdoor look like in deep learning? Take image classification as an example. Suppose we have a picture of a dog, and the classifier labels it as a dog with 99% confidence. If I add a pattern to this image (say, a small red circle), the classifier instead labels it as a cat with 80% confidence.

We call this special pattern a trigger, and we call this classifier a backdoored classifier.

Generally speaking, a backdoor attack consists of these two parts: the trigger and the backdoored model.

The trigger causes the classifier to misclassify the input into a specified class. (The attack can also be untargeted, where any wrong class will do. Unless stated otherwise, we mean the targeted case.)
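To make the idea of a trigger concrete, here is a minimal sketch of stamping a small pattern onto an image. The function name `stamp_trigger`, the square shape, the red color, and the bottom-right position are all illustrative assumptions. The text above only says the trigger is some special pattern, such as a small red circle.

```python
import numpy as np

def stamp_trigger(image, size=4):
    """Return a copy of `image` (H, W, 3 floats in [0, 1]) with a red
    square in the bottom-right corner. The square stands in for the
    "small red circle" mentioned in the text; shape and position are
    arbitrary choices made for this sketch."""
    stamped = image.copy()
    stamped[-size:, -size:, :] = np.array([1.0, 0.0, 0.0])
    return stamped

# A blank 32x32 RGB image plays the role of the dog picture.
clean = np.zeros((32, 32, 3), dtype=np.float32)
triggered = stamp_trigger(clean)
```

A backdoored classifier would be expected to classify `clean` correctly and `triggered` as the attacker's chosen class.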

Now that we have introduced backdoor attacks, we focus on several questions:

- How to obtain a backdoored model and the corresponding trigger

- How to make the backdoor stealthy

- How to detect a backdoor in a model

This time we focus on the first and second questions: how to obtain a backdoored model and its trigger, and how to make the backdoor stealthy.

Generally speaking, we manipulate the training data: the backdoor is implanted by modifying the training set. Such attacks are called poisoning-based backdoor attacks.

This needs to be distinguished from poisoning attacks. The purpose of a poisoning attack is to poison the data so as to reduce the model's generalization ability, whereas the purpose of a backdoor attack is to make the model fail on inputs that carry the trigger while behaving normally on inputs without it.
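The distinction above can be measured with two numbers: accuracy on clean inputs and the fraction of triggered inputs sent to the target class (often called the attack success rate). The sketch below is only an illustration. `toy_backdoored_predict` is a hand-written stand-in for a backdoored classifier, not a trained model, and the names `TARGET` and `add_trigger` are assumptions made for this example.

```python
import numpy as np

TARGET = 1  # hypothetical target class chosen by the attacker

def add_trigger(image):
    """Set a 2x2 patch in the bottom-right corner to full brightness."""
    out = image.copy()
    out[-2:, -2:] = 1.0
    return out

def toy_backdoored_predict(images):
    """Stand-in classifier: class 0 for dark images, class 2 for bright
    ones, but TARGET whenever the trigger patch is present."""
    has_trigger = (images[:, -2:, -2:] == 1.0).all(axis=(1, 2))
    base = np.where(images.mean(axis=(1, 2)) > 0.5, 2, 0)
    return np.where(has_trigger, TARGET, base)

def clean_accuracy(predict, images, labels):
    return float(np.mean(predict(images) == labels))

def attack_success_rate(predict, images, labels, target):
    # Only samples whose true class is not already the target count.
    keep = labels != target
    triggered = np.stack([add_trigger(x) for x in images[keep]])
    return float(np.mean(predict(triggered) == target))

images = np.concatenate([np.full((5, 8, 8), 0.2), np.full((5, 8, 8), 0.8)])
labels = np.array([0] * 5 + [2] * 5)

acc = clean_accuracy(toy_backdoored_predict, images, labels)
asr = attack_success_rate(toy_backdoored_predict, images, labels, TARGET)
```

A successful backdoor keeps `acc` high while `asr` is also high. A poisoning attack in the sense described above would instead show up as a drop in `acc`.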

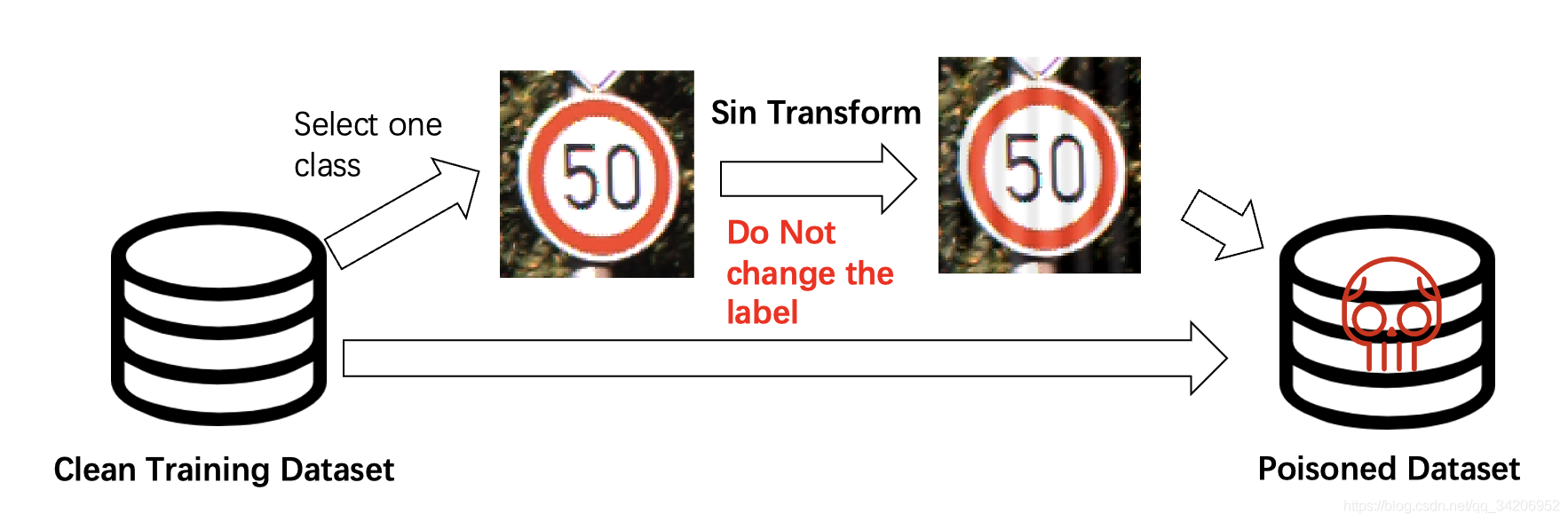

BadNets

First, let's introduce the most classic attack, proposed by Gu et al. It is very simple: randomly select samples from the training set, add the trigger to them, change their true labels to the target label, and put them back, building a poisoned dataset.

Gu et al. BadNets: Evaluating Backdooring Attacks on Deep Neural Networks
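The poisoning procedure described above can be sketched in a few lines. This is a minimal illustration rather than the authors' implementation. The function name `poison_dataset`, the bright square patch, and the parameters `rate` and `patch` are assumptions made for the example.

```python
import numpy as np

def poison_dataset(images, labels, target, rate=0.1, patch=3, seed=0):
    """Randomly pick a fraction `rate` of the samples, stamp a bright
    square patch (the trigger) on them, and relabel them as `target`.
    Returns copies; the original arrays are left untouched."""
    rng = np.random.default_rng(seed)
    n_poison = int(rate * len(images))
    idx = rng.choice(len(images), size=n_poison, replace=False)

    poisoned_images = images.copy()
    poisoned_labels = labels.copy()
    poisoned_images[idx, -patch:, -patch:] = 1.0  # add the trigger
    poisoned_labels[idx] = target                 # modify the label
    return poisoned_images, poisoned_labels, idx

# Toy data: 100 blank 8x8 grayscale images spread over 10 classes.
images = np.zeros((100, 8, 8), dtype=np.float32)
labels = np.arange(100) % 10
p_images, p_labels, idx = poison_dataset(images, labels, target=7)
```

Training an ordinary classifier on `p_images` and `p_labels` is then what implants the backdoor: the model learns to associate the patch with class 7.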

This kind of method needs to modify labels. Is there a way to avoid modifying them?

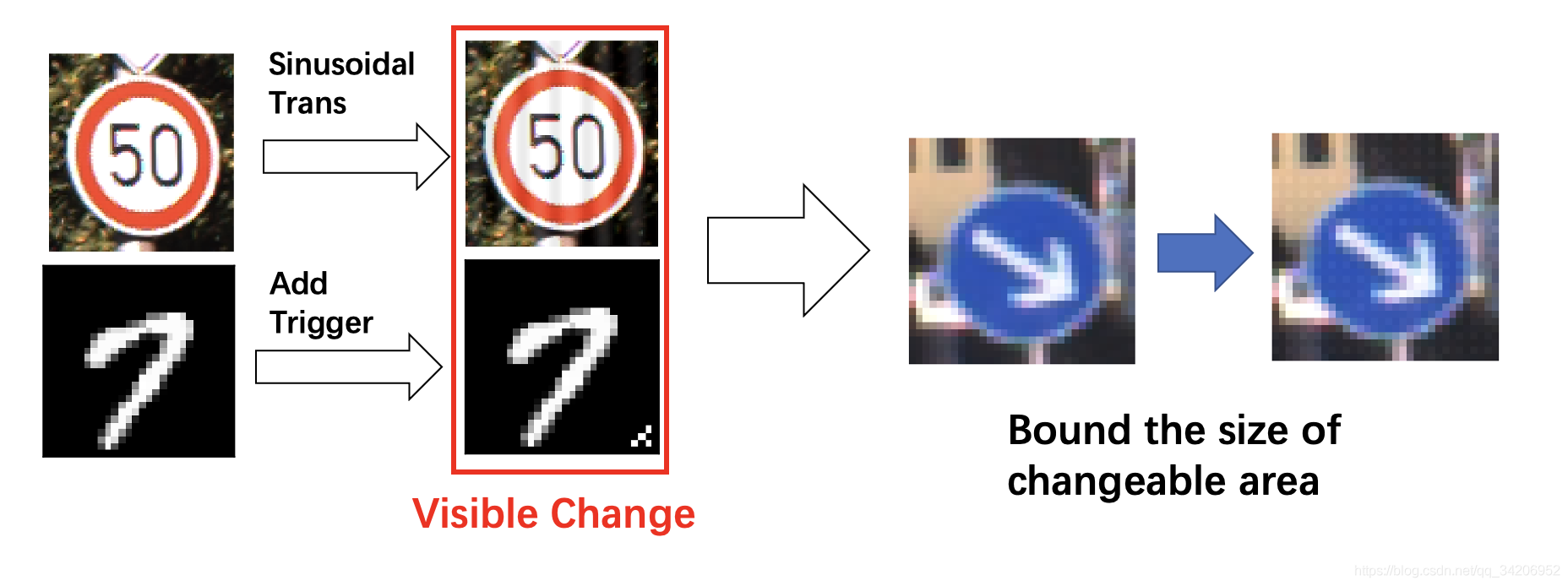

Clean Label

The clean-label approach does not modify labels. As shown in the figure below, it only applies a special transformation to the image. The trigger in this method is also relatively stealthy (the trigger is the transformation itself).

Barni et al. A New Backdoor Attack in CNNs by Training Set Corruption Without Label Poisoning
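As a rough sketch of the clean-label idea, the example below overlays a faint horizontal sinusoidal signal on some training images of the target class and leaves every label unchanged. The sinusoid is one kind of transformation that can serve as the trigger here; the function names and the values of `delta`, `freq`, and `rate` are illustrative assumptions, not settings taken from the paper.

```python
import numpy as np

def sinusoidal_signal(height, width, delta=0.1, freq=6):
    """Signal that varies along the columns: delta * sin(2*pi*j*freq/width)."""
    cols = np.arange(width)
    row = delta * np.sin(2 * np.pi * cols * freq / width)
    return np.tile(row, (height, 1))

def poison_clean_label(images, labels, target, rate=0.3,
                       delta=0.1, freq=6, seed=0):
    """Add the signal to a fraction `rate` of the images that ALREADY
    belong to class `target`. Labels are never modified."""
    rng = np.random.default_rng(seed)
    candidates = np.flatnonzero(labels == target)
    n_poison = int(rate * len(candidates))
    idx = rng.choice(candidates, size=n_poison, replace=False)

    signal = sinusoidal_signal(images.shape[1], images.shape[2], delta, freq)
    poisoned = images.copy()
    poisoned[idx] = np.clip(poisoned[idx] + signal, 0.0, 1.0)
    return poisoned, idx

# Toy data: 40 mid-gray 16x16 grayscale images spread over 4 classes.
images = np.full((40, 16, 16), 0.5)
labels = np.arange(40) % 4
poisoned, idx = poison_clean_label(images, labels, target=2)
```

At test time, the attacker would add the same signal to an image of any other class, hoping the model has learned to associate the signal with class 2.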

More subtle triggers (Hiding Triggers)

Liao et al. proposed a way to generate triggers that constrains the trigger's impact on the original image, as shown in the figure below:

The human eye basically cannot tell whether an image contains a trigger.

Liao et al. Backdoor Embedding in Convolutional Neural Network Models via Invisible Perturbation

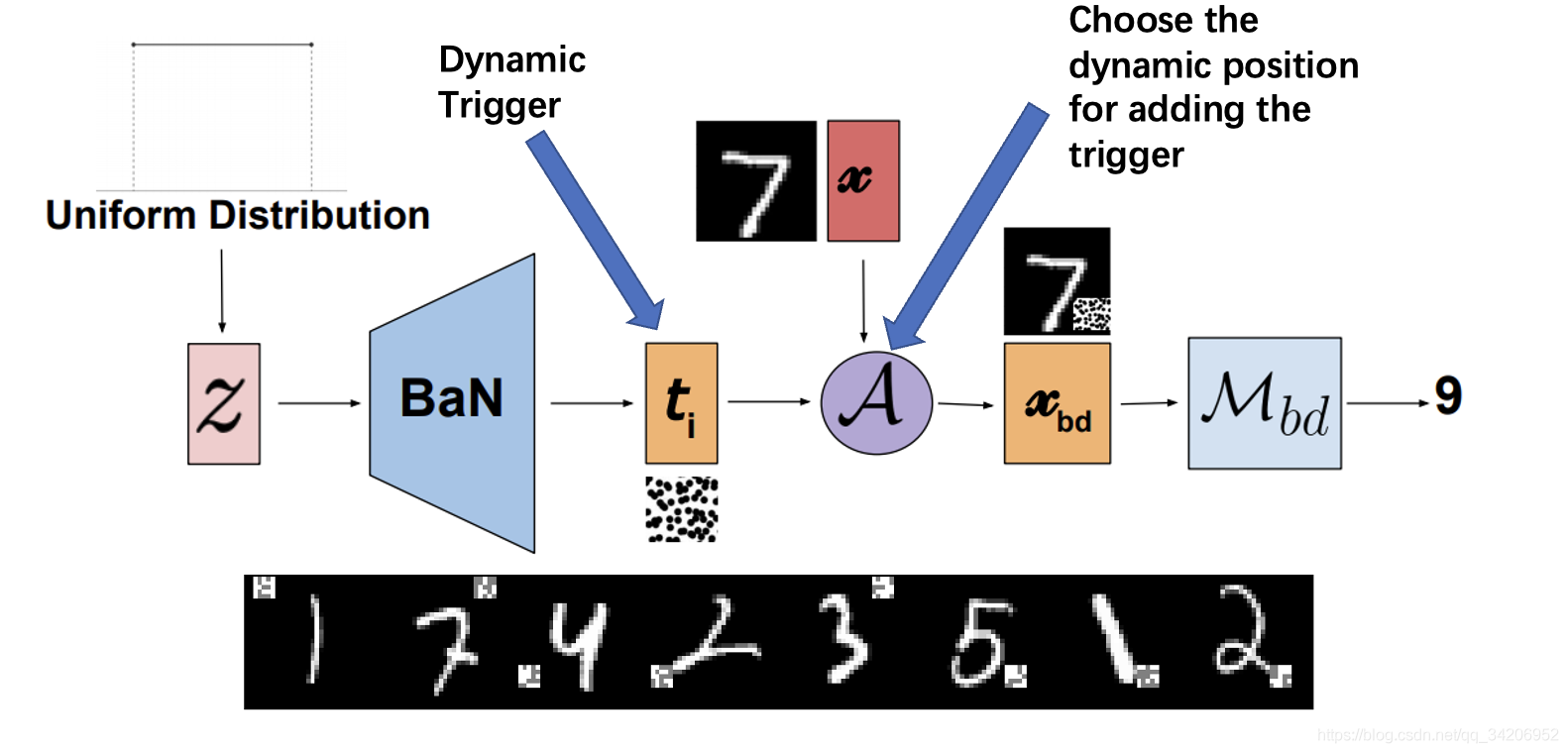
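One simple way to picture a trigger whose impact on the image is constrained is to bound every pixel change by a small value. The sketch below uses a fixed pattern of plus and minus `epsilon`, so no pixel moves by more than `epsilon`. This is an assumption made for illustration, not the generation procedure from the paper, and the names `make_invisible_trigger` and `apply_invisible_trigger` are hypothetical.

```python
import numpy as np

def make_invisible_trigger(shape, epsilon=2 / 255, seed=0):
    """Fixed pattern whose entries are +epsilon or -epsilon, so the
    largest per-pixel change (L-infinity norm) is exactly epsilon."""
    rng = np.random.default_rng(seed)
    return epsilon * rng.choice([-1.0, 1.0], size=shape)

def apply_invisible_trigger(image, trigger):
    """Add the trigger and keep pixel values in the valid [0, 1] range."""
    return np.clip(image + trigger, 0.0, 1.0)

image = np.full((32, 32, 3), 0.5)
trigger = make_invisible_trigger(image.shape)
stealthy = apply_invisible_trigger(image, trigger)

# Largest change made to any pixel; bounded by epsilon by construction.
max_change = float(np.abs(stealthy - image).max())
```

With `epsilon` at 2/255, each pixel differs from the original by at most two intensity levels on an 8-bit scale, which is hard to notice by eye.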

Dynamic triggers (Dynamic Backdoor)

This method was proposed by Salem et al. It uses a network to dynamically determine the location and pattern of the trigger, which strengthens the attack.

Salem et al. Dynamic Backdoor Attacks Against Machine Learning Models
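To illustrate what "dynamic" means here, the sketch below draws a fresh trigger pattern and a fresh location for every image. Note the simplification: the method described above uses a network to decide these, while this example swaps in plain random sampling, so it only shows the varying-trigger idea. All function names are hypothetical.

```python
import numpy as np

def sample_dynamic_trigger(rng, patch=4):
    """Draw a new random patch pattern (values in [0, 1))."""
    return rng.uniform(0.0, 1.0, size=(patch, patch))

def stamp_at_random_location(image, trigger, rng):
    """Paste `trigger` at a random position inside a grayscale image."""
    h, w = image.shape[:2]
    p = trigger.shape[0]
    top = int(rng.integers(0, h - p + 1))
    left = int(rng.integers(0, w - p + 1))
    out = image.copy()
    out[top:top + p, left:left + p] = trigger
    return out, (top, left)

rng = np.random.default_rng(0)
image = np.zeros((28, 28))

# Two backdoored copies of the same image, each with its own trigger.
trig_a = sample_dynamic_trigger(rng)
out_a, pos_a = stamp_at_random_location(image, trig_a, rng)
trig_b = sample_dynamic_trigger(rng)
out_b, pos_b = stamp_at_random_location(image, trig_b, rng)
```

Because neither the pattern nor the position is fixed, a defense that searches for one static patch at one location has less to latch onto.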

边栏推荐

- Meta Learning 简述

- SQL server如何卸载干净

- Using super ball embedding to enhance confrontation training

- [learning notes] matlab self compiled Gaussian smoother +sobel operator derivation

- 最长等比子序列

- Go functions make, slice, append

- 浅谈深度学习中的对抗样本及其生成方法

- 静态库和动态库

- 用C# 语言实现MYSQL 真分页

- Open3d learning note 4 [surface reconstruction]

猜你喜欢

![[learning notes] matlab self compiled image convolution function](/img/82/43fc8b2546867d89fe2d67881285e9.png)

[learning notes] matlab self compiled image convolution function

It's great to save 10000 pictures of girls

![How do vision transformer work? [interpretation of the paper]](/img/93/5f967b876fbd63c07b8cfe8dd17263.png)

How do vision transformer work? [interpretation of the paper]

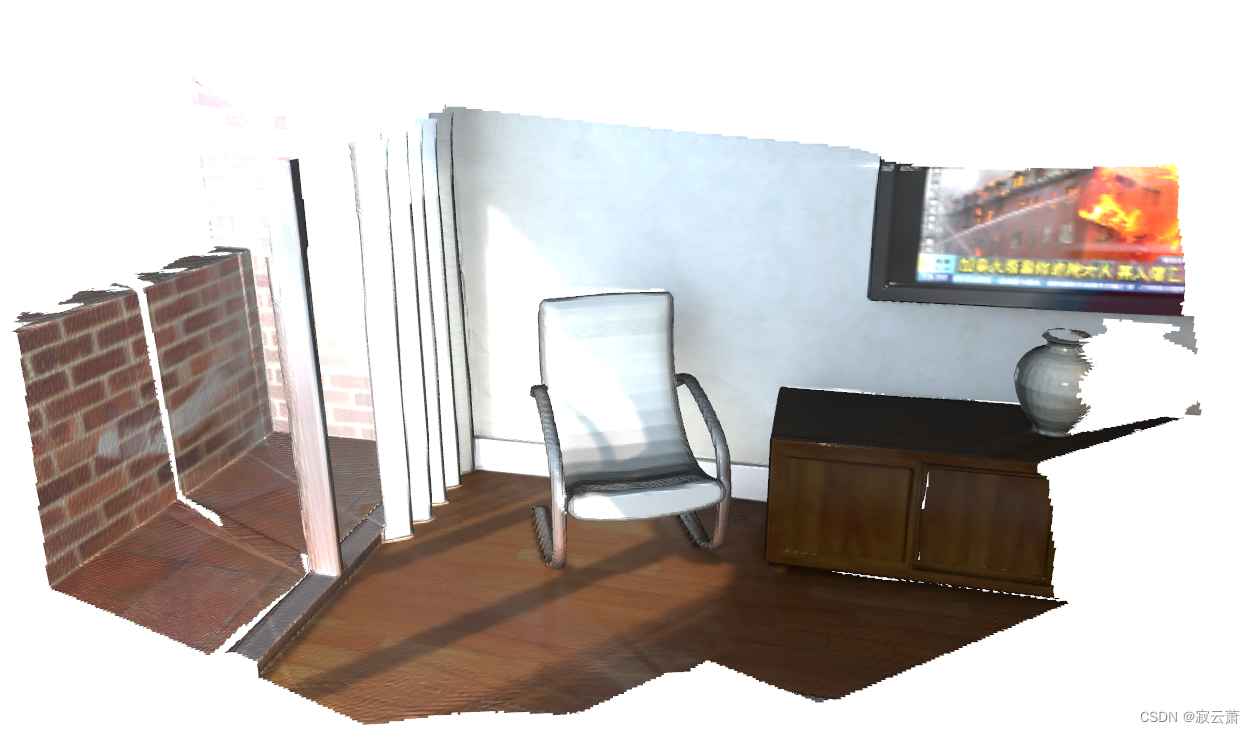

【Sparse-to-Dense】《Sparse-to-Dense:Depth Prediction from Sparse Depth Samples and a Single Image》

open3d学习笔记五【RGBD融合】

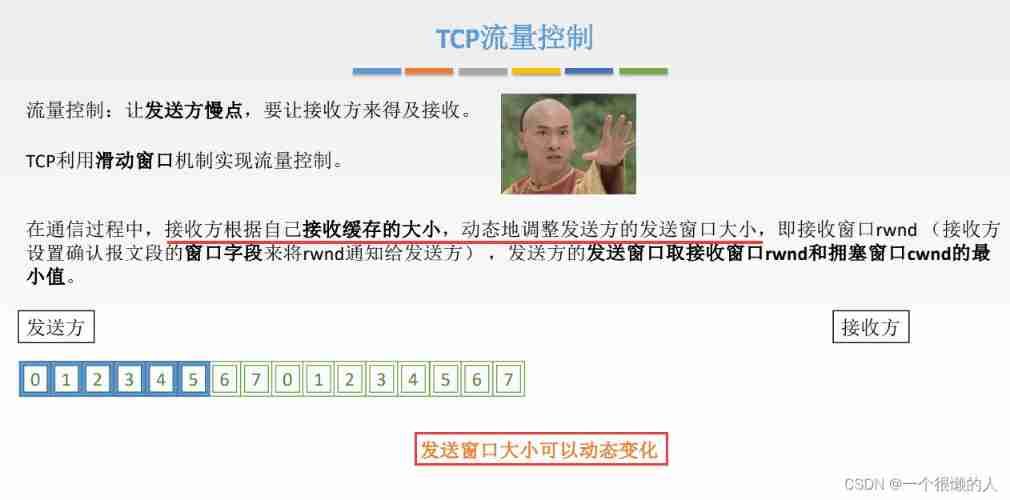

Network metering - transport layer

应对长尾分布的目标检测 -- Balanced Group Softmax

【BiSeNet】《BiSeNet:Bilateral Segmentation Network for Real-time Semantic Segmentation》

Open3D学习笔记一【初窥门径,文件读取】

Target detection for long tail distribution -- balanced group softmax

随机推荐

我的vim配置文件

Yolov3 trains its own data set (mmdetection)

Apple added the first iPad with lightning interface to the list of retro products

服务器的内网可以访问,外网却不能访问的问题

使用C#语言来进行json串的接收

最长等比子序列

In the era of short video, how to ensure that works are more popular?

Open3D学习笔记一【初窥门径,文件读取】

Replace convolution with full connection layer -- repmlp

Open3d learning note 5 [rgbd fusion]

Real world anti sample attack against semantic segmentation

Hystrix dashboard cannot find hystrix Stream solution

业务架构图

SQL server如何卸载干净

针对语义分割的真实世界的对抗样本攻击

Income in the first month of naked resignation

Brief introduction of prompt paradigm

A brief analysis of graph pooling

【DIoU】《Distance-IoU Loss:Faster and Better Learning for Bounding Box Regression》

What if the laptop can't search the wireless network signal