
Introduction to Deep Learning: Automatic Differentiation (PyTorch)

2022-07-03 10:33:00 【-Plain heart to warm】

Automatic differentiation

Chain rule and automatic differentiation

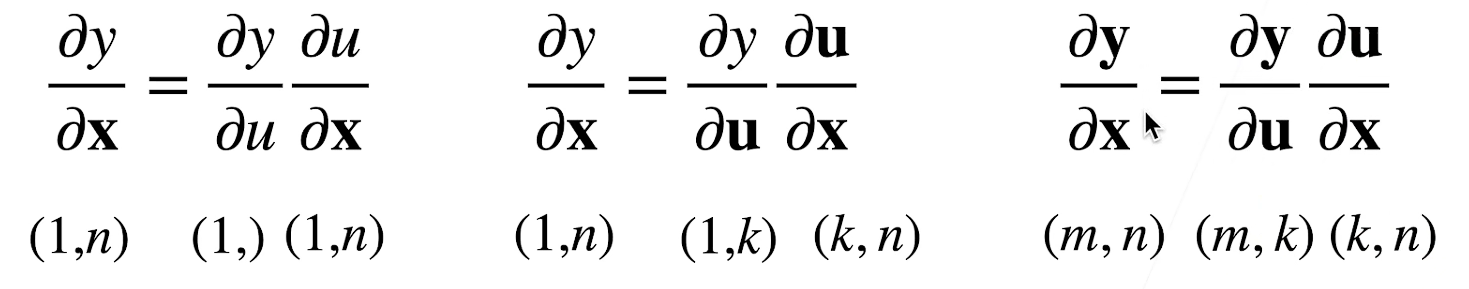

Vector chain rule

Scalar chain rule

$$y = f(u),\ u = g(x) \quad \frac{\partial y}{\partial x} = \frac{\partial y}{\partial u}\frac{\partial u}{\partial x}$$

Extending to vectors

Example 1

Example 2

Automatic differentiation

- Automatic differentiation computes the derivative of a function at a specified value

- It differs from

- Symbolic differentiation

In[1]:= D[4x^3 + x^2 + 3, x]

Out[1]= 2x + 12x^2

- Numerical differentiation

$$\frac{\partial f(x)}{\partial x} = \lim_{h \to 0} \frac{f(x+h) - f(x)}{h}$$
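To make the contrast concrete, here is a minimal sketch in plain Python that evaluates the same polynomial both ways. The function names and the step size `h = 1e-6` are assumptions chosen for illustration; the closed form is the one given by the symbolic result above.

```python
def f(x):
    # The polynomial from the symbolic example: 4x^3 + x^2 + 3
    return 4 * x**3 + x**2 + 3


def symbolic_derivative(x):
    # Closed form obtained by symbolic differentiation: 12x^2 + 2x
    return 12 * x**2 + 2 * x


def numerical_derivative(func, x, h=1e-6):
    # Forward difference: the limit definition with a small but finite h
    return (func(x + h) - func(x)) / h


x0 = 2.0
exact = symbolic_derivative(x0)
approx = numerical_derivative(f, x0)
error = abs(exact - approx)
print(exact, approx, error)
```

The numerical value is only approximate because `h` is finite, whereas automatic differentiation applies the chain rule to each elementary operation and returns the derivative at `x0` without that truncation error.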

Computational graph

- Decompose the code into operators

- Express the computation as an acyclic graph

- Explicit construction

- TensorFlow/Theano/MXNet

from mxnet import sym

a = sym.var('a')  # a symbolic variable needs a name

b = sym.var('b')

c = 2 * a + b

# bind data into a and b later

Define the formula first, then plug in the values.

- Implicit construction

- PyTorch/MXNet

from mxnet import autograd, nd

with autograd.record():

    a = nd.ones((2, 1))

    b = nd.ones((2, 1))

    c = 2 * a + b
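To illustrate the explicit style without depending on MXNet, here is a minimal pure-Python sketch. The `Sym` class and its `eval` method are hypothetical names invented for this illustration and do not belong to any library; they only mimic the idea of writing `c = 2 * a + b` first and binding values afterwards.

```python
class Sym:
    """Node of a tiny symbolic graph; op is 'var', 'const', 'add' or 'mul'."""

    def __init__(self, op, args=(), payload=None):
        self.op, self.args, self.payload = op, args, payload

    @staticmethod
    def var(name):
        return Sym("var", payload=name)

    @staticmethod
    def _wrap(x):
        # Plain Python numbers become constant nodes
        return x if isinstance(x, Sym) else Sym("const", payload=x)

    def __add__(self, other):
        return Sym("add", (self, Sym._wrap(other)))

    def __mul__(self, other):
        return Sym("mul", (self, Sym._wrap(other)))

    __radd__ = __add__
    __rmul__ = __mul__

    def eval(self, **bindings):
        # Walk the graph and substitute the bound values
        if self.op == "var":
            return bindings[self.payload]
        if self.op == "const":
            return self.payload
        left, right = (arg.eval(**bindings) for arg in self.args)
        return left + right if self.op == "add" else left * right


# Explicit construction: the formula exists before any data does
a = Sym.var("a")
b = Sym.var("b")
c = 2 * a + b

# Values are bound later, and the same graph can be reused
first = c.eval(a=1.0, b=1.0)
second = c.eval(a=3.0, b=-2.0)
print(first, second)
```

In the implicit style the graph is never written down separately: it is recorded as a side effect of running ordinary code on real values, as in the `autograd.record()` example.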

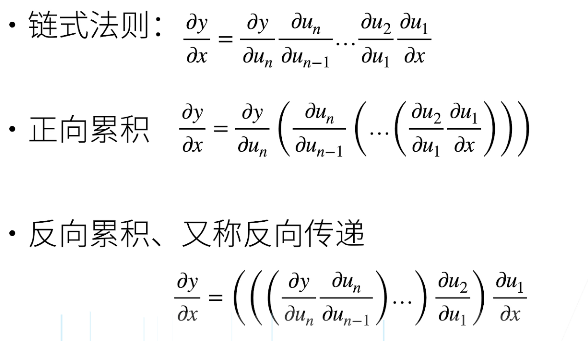

Two modes of automatic differentiation

Reverse accumulation

Reverse accumulation summary

- Build the computational graph

- Forward pass: execute the graph and store intermediate results

- Backward pass: execute the graph in the reverse direction

- Remove unwanted branches
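The steps above can be sketched with a toy reverse-mode implementation in plain Python. This is an illustrative assumption and not how PyTorch works internally: the `Value` class, its fields, and its `backward` method are hypothetical names, only scalar `+` and `*` are supported, and pruning of unwanted branches is left out.

```python
class Value:
    """Scalar that records the operations applied to it (graph built while running)."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data                # intermediate result stored in the forward pass
        self.grad = 0.0
        self.parents = parents          # inputs of the operation that produced this node
        self.local_grads = local_grads  # d(self)/d(parent) for each parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    __radd__ = __add__
    __rmul__ = __mul__

    def backward(self):
        # Order the nodes so that each one is handled after all of its consumers
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in zip(node.parents, node.local_grads):
                parent.grad += local * node.grad  # chain rule, accumulated


# Forward pass: y = 2 * (x0*x0 + x1*x1 + x2*x2 + x3*x3)
xs = [Value(float(i)) for i in range(4)]
total = Value(0.0)
for x in xs:
    total = total + x * x
y = 2 * total

# Backward pass: one sweep yields the gradient for every input
y.backward()
grads = [x.grad for x in xs]
print(y.data, grads)
```

Note that every `Value` created in the forward pass stays alive until `backward` has used it, which is where the memory cost of reverse accumulation comes from.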

Complexity

- Computational complexity: O(n), where n is the number of operators

- The forward and backward passes usually cost about the same

- Memory complexity: O(n), because all intermediate results from the forward pass must be stored

Because all intermediate results must be stored, this consumes a lot of GPU memory

- Compared with forward accumulation:

- O(n) computational complexity to compute the gradient with respect to a single variable

- O(1) memory complexity
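For comparison, forward accumulation can be sketched with dual numbers, again in plain Python. The `Dual` class and the helper `forward_gradient` are hypothetical names introduced for this illustration. Each number carries its derivative along with its value, so nothing has to be stored for a later backward pass, but one full pass is needed per input variable.

```python
class Dual:
    """A value together with its derivative with respect to one chosen input."""

    def __init__(self, value, deriv=0.0):
        self.value, self.deriv = value, deriv

    def __add__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)

    def __mul__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        # Product rule applied on the fly
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)

    __radd__ = __add__
    __rmul__ = __mul__


def f(xs):
    # Scalar form of y = 2 x^T x
    total = 0.0
    for x in xs:
        total = total + x * x
    return 2 * total


def forward_gradient(func, point):
    grad = []
    for i in range(len(point)):
        # Seed derivative 1 on input i and 0 elsewhere, then rerun the function
        inputs = [Dual(v, 1.0 if j == i else 0.0) for j, v in enumerate(point)]
        grad.append(func(inputs).deriv)
    return grad


grad = forward_gradient(f, [0.0, 1.0, 2.0, 3.0])
print(grad)
```

With many inputs and a single scalar output, which is the usual situation for a loss function, the repeated passes make this mode far more expensive than reverse accumulation.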

Automatic derivation implementation

Automatic derivation

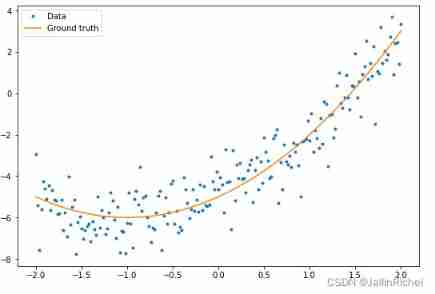

Let's say we want to test the function y = 2 x T x y = 2x^Tx y=2xTx About column vectors x Derivation

import torch

x = torch.arange(4.0)

x

tensor([0., 1., 2., 3.])

Before computing the gradient of y with respect to x, we need a place to store it.

x.requires_grad_(True) # Equivalent to `x = torch.arange(4.0, requires_grad=True)`

x.grad # The default value is None

Now let's compute y.

y = 2 * torch.dot(x, x)

y

tensor(28., grad_fn=<MulBackward0>)

Calling the backpropagation function automatically computes the gradient of y with respect to each component of x.

y.backward()

x.grad

tensor([ 0., 4., 8., 12.])

The gradient should be 4x, which we can verify:

x.grad == 4 * x

tensor([True, True, True, True])
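The expected value 4x follows from writing the dot product out component by component. A short derivation, where the index i is introduced here only for illustration:

```latex
y = 2\,x^T x = 2\sum_{i} x_i^2
\quad\Longrightarrow\quad
\frac{\partial y}{\partial x_i} = 4x_i
\quad\Longrightarrow\quad
\frac{\partial y}{\partial x} = 4x
```

For x = [0, 1, 2, 3] this gives [0, 4, 8, 12], matching the printed `x.grad`.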

Now let's compute another function of x.

# By default, PyTorch accumulates gradients, so we need to clear the previous values

x.grad.zero_()

y = x.sum()

y.backward()

x.grad

tensor([1., 1., 1., 1.])

In deep learning, our goal is usually not to compute the full derivative (Jacobian) matrix, but the sum of the partial derivatives computed separately for each sample in the batch.

# Calling `backward` on a non-scalar requires a `gradient` argument, which specifies the gradient of the differentiated function with respect to `self`

x.grad.zero_()

y = x * x

# Equivalent to y.backward(torch.ones(len(x)))

y.sum().backward()

x.grad

tensor([0., 2., 4., 6.])

Why do we apply sum before taking the derivative?

Because a gradient can be created implicitly only for a scalar output (that is, a single number).
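One way to see that summing first gives the intended result: the gradient of the sum is the vector of ones multiplied by the Jacobian, which is exactly what passing `torch.ones(len(x))` as the `gradient` argument expresses. A short derivation for the elementwise product y = x * x, with the indices i, j and the Kronecker delta introduced here for illustration:

```latex
\frac{\partial}{\partial x_j}\sum_{i} y_i
  = \sum_{i} 1 \cdot \frac{\partial y_i}{\partial x_j},
\qquad
y_i = x_i^2
\;\Longrightarrow\;
\frac{\partial y_i}{\partial x_j} = 2x_i\,\delta_{ij}
\;\Longrightarrow\;
\frac{\partial}{\partial x_j}\sum_{i} y_i = 2x_j
```

For x = [0, 1, 2, 3] this is [0, 2, 4, 6], the value printed for `x.grad`.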

Moving some computations out of the recorded computational graph

x.grad.zero_()

y = x * x

u = y.detach() # u has the same values as y but is treated as a constant, cut off from the graph

z = u * x

z.sum().backward()

x.grad == u

tensor([True, True, True, True])

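This is useful later when some network parameters need to be frozen. Because the computation of y itself was still recorded, we can backpropagate through y afterwards: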

x.grad.zero_()

y.sum().backward()

x.grad == 2 * x

tensor([True, True, True, True])

Even if building a function's computational graph requires passing through Python control flow (for example, conditionals, loops, or arbitrary function calls), we can still compute the gradient of the resulting variable.

def f(a):

    b = a * 2

    while b.norm() < 1000:

        b = b * 2

    if b.sum() > 0:

        c = b

    else:

        c = 100 * b

    return c

a = torch.randn(size=(), requires_grad=True)

d = f(a)

d.backward()

a.grad == d / a

tensor(True)
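The check `a.grad == d / a` works because, for any fixed nonzero input, f only multiplies a by constants, so f(a) = k * a for some k that depends on which branches were taken, and the gradient is k = f(a) / a. The sketch below confirms this with a finite difference in plain Python. It is an assumption-laden stand-in: floats replace tensors, `abs` replaces `norm`, and the helper `slope` and the sample inputs are chosen only for illustration.

```python
def f(a):
    # Same control flow as above, written for a plain float
    b = a * 2
    while abs(b) < 1000:
        b = b * 2
    return b if b > 0 else 100 * b


def slope(func, a, h=1e-6):
    # Forward-difference estimate of the derivative at a
    return (func(a + h) - func(a)) / h


# Compare f(a) / a with the estimated derivative for a positive and a negative input
checks = [(f(a) / a, slope(f, a)) for a in (0.3, -0.7)]
for ratio, estimate in checks:
    print(ratio, estimate)
```

The input must be nonzero: with `a = 0` the loop never terminates, and the same holds for the tensor version.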

QA

What is the difference between explicit and implicit construction?

Explicit construction: define the formula first, then supply the values.

Implicit construction: supply the values first, then the formula.

Why does deep learning generally differentiate scalars?

Because the loss is a scalar most of the time.

边栏推荐

- Anaconda installation package reported an error packagesnotfounderror: the following packages are not available from current channels:

- Deep Reinforcement learning with PyTorch

- 2-program logic

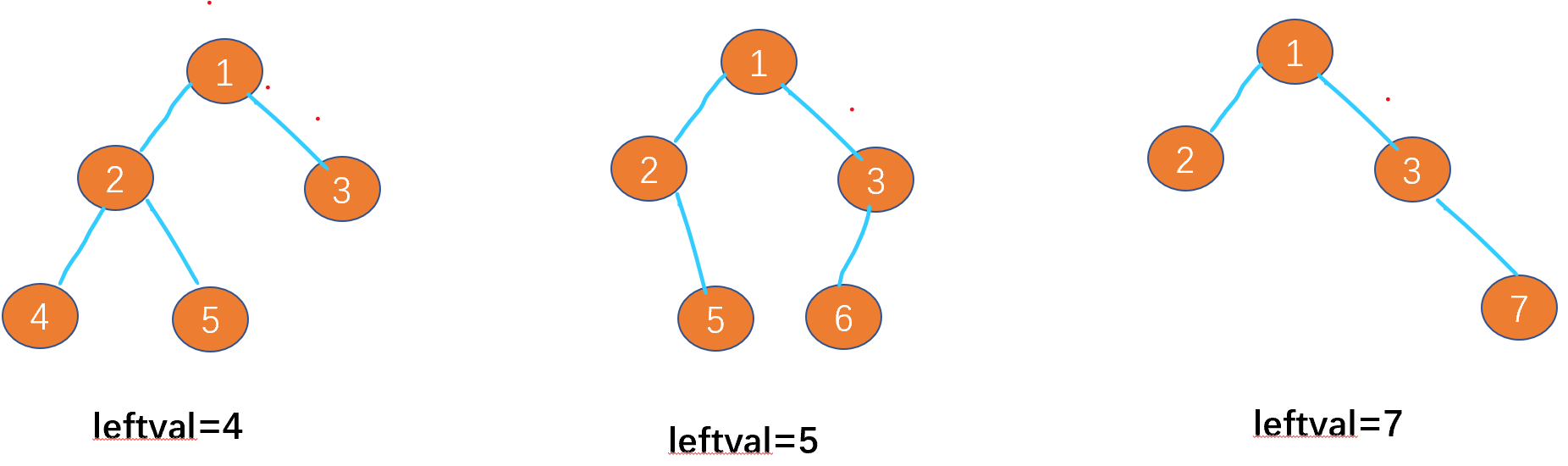

- Leetcode-513:找树的左下角值

- Free online markdown to write a good resume

- Hands on deep learning pytorch version exercise solution - 2.3 linear algebra

- 多层感知机(PyTorch)

- 20220606 Mathematics: fraction to decimal

- 20220608其他:逆波兰表达式求值

- 20220601 Mathematics: zero after factorial

猜你喜欢

Are there any other high imitation projects

MySQL报错“Expression #1 of SELECT list is not in GROUP BY clause and contains nonaggre”解决方法

Leetcode-513: find the lower left corner value of the tree

Configure opencv in QT Creator

Tensorflow - tensorflow Foundation

Leetcode - the k-th element in 703 data flow (design priority queue)

一个30岁的测试员无比挣扎的故事,连躺平都是奢望

mysql5.7安装和配置教程(图文超详细版)

Ut2013 learning notes

『快速入门electron』之实现窗口拖拽

随机推荐

神经网络入门之模型选择(PyTorch)

20220601数学:阶乘后的零

Leetcode刷题---35

Stroke prediction: Bayesian

Hands on deep learning pytorch version exercise solution -- implementation of 3-2 linear regression from scratch

[LZY learning notes dive into deep learning] 3.4 3.6 3.7 softmax principle and Implementation

Leetcode刷题---852

Step 1: teach you to trace the IP address of [phishing email]

Jetson TX2 刷机

一步教你溯源【钓鱼邮件】的IP地址

SQL Server Management Studio cannot be opened

Out of the box high color background system

Model evaluation and selection

Leetcode-513: find the lower left corner value of the tree

安装yolov3(Anaconda)

20220606数学:分数到小数

What can I do to exit the current operation and confirm it twice?

20220608其他:逆波兰表达式求值

深度学习入门之自动求导(Pytorch)

Timo background management system