Image segmentation tips and tricks summarized from 39 Kaggle competitions

2022-07-07 19:15:00 【Tom Hardy】

Author: Derrick Mwiti

Source: AI park

Edited by: the market platform

Reading guide

The author has gone through 39 Kaggle competitions and, following the order of a whole competition, summarizes the tips and tricks that help with pre-competition data processing, model training, and post-processing. There is a lot of technique and experience here, now shared with everyone.

Imagine having all the tips and tricks you need before entering a Kaggle competition. I have gone through 39 Kaggle competitions, including:

Data Science Bowl 2017 – $1,000,000

Intel & MobileODT Cervical Cancer Screening – $100,000

2018 Data Science Bowl – $100,000

Airbus Ship Detection Challenge – $60,000

Planet: Understanding the Amazon from Space – $60,000

APTOS 2019 Blindness Detection – $50,000

Human Protein Atlas Image Classification – $37,000

SIIM-ACR Pneumothorax Segmentation – $30,000

Inclusive Images Challenge – $25,000

Let's dig all of that knowledge out for you!

External data

Use the LUng Nodule Analysis (LUNA) Grand Challenge data, because it contains detailed annotations from radiologists.

Use the LIDC-IDRI data, because it contains radiologists' descriptions of every tumor found.

Use the Flickr CC and Wikipedia Commons datasets.

Use the Human Protein Atlas dataset.

Use the IDRiD dataset.

Data exploration and intuition

Cluster the 3D segmentation using a threshold of 0.5.

Check whether the label distributions of the training set and the test set differ.
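
One way to check for a label-distribution gap is to compare the relative class frequencies of the two splits. The sketch below is only an illustration: the label lists are made up, the helper names are hypothetical, and it assumes labels are available for both splits (for a hidden test set, a held-out validation split could stand in).

```python
from collections import Counter


def label_distribution(labels):
    """Return a dict mapping each label to its relative frequency."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def total_variation_distance(dist_a, dist_b):
    """Half the L1 distance between two discrete distributions (0 = identical, 1 = disjoint)."""
    all_labels = set(dist_a) | set(dist_b)
    return 0.5 * sum(abs(dist_a.get(l, 0.0) - dist_b.get(l, 0.0)) for l in all_labels)


# Hypothetical labels, for illustration only.
train_labels = ["cat"] * 70 + ["dog"] * 30
test_labels = ["cat"] * 50 + ["dog"] * 50

train_dist = label_distribution(train_labels)
test_dist = label_distribution(test_labels)
shift = total_variation_distance(train_dist, test_dist)
print(f"label shift (total variation distance): {shift:.2f}")
```

A value near 0 suggests the splits are similarly distributed; what counts as "too large" is a judgment call that depends on the task.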

Preprocessing

Perform blob detection with the Difference of Gaussian (DoG) method, using the implementation in skimage.

Use patch-based inputs for training to reduce training time.

Use cudf instead of Pandas to load data, because it reads data faster.

Make sure all images have the same orientation.

Apply contrast limiting when doing histogram equalization.

Use OpenCV for general image preprocessing.

Use automated active learning and add manual annotations.

Scale all images to the same resolution so that the same model can be applied to scans of different thicknesses.

Convert scan images into normalized 3D numpy arrays.

Apply single-image dehazing using the dark channel prior.

Convert all data to Hounsfield units (a concept from radiology).

Use RGBY to find duplicate images.

Develop a sampler to make the labels more balanced.

Pseudo-label the test images to improve the score.

Downsample images and masks to 320x480.

Use a kernel size of 32×32 when applying histogram equalization (CLAHE).

Convert DCM to PNG.

When there are duplicate images, compute the md5 hash of each image.
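
The md5-based duplicate check from the list above can be sketched with the standard library alone. The image bytes and file names here are made up; in practice the bytes would be read from disk. Note that a hash of the raw bytes only catches byte-identical files, not re-encoded or resized copies.

```python
import hashlib
from collections import defaultdict


def md5_of_bytes(data: bytes) -> str:
    """Return the hexadecimal md5 digest of raw image bytes."""
    return hashlib.md5(data).hexdigest()


def find_duplicates(images: dict) -> list:
    """Group image names whose raw bytes hash to the same md5 value."""
    groups = defaultdict(list)
    for name, data in images.items():
        groups[md5_of_bytes(data)].append(name)
    # Keep only hashes shared by more than one image.
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical in-memory "files"; in practice the bytes would come from open(path, "rb").read().
images = {
    "a.png": b"\x89PNG-fake-image-1",
    "b.png": b"\x89PNG-fake-image-2",
    "c.png": b"\x89PNG-fake-image-1",  # byte-identical copy of a.png
}
duplicates = find_duplicates(images)
print(duplicates)
```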

Data augmentation

Use albumentations for data augmentation.

Use random rotations by 90 degrees.

Use horizontal and vertical flips.

Try larger geometric transformations: elastic transformation, affine transformation, piecewise affine transformation, pincushion distortion.

Use random HSV shifts.

Use lossless augmentation for generalization, so that useful image information is not lost.

Apply channel shuffling.

Augment data according to class frequency.

Apply Gaussian noise.

Use lossless rearrangements of 3D images for data augmentation.

Rotate randomly by 0 to 45 degrees.

Scale randomly by a factor from 0.8 to 1.2.

Change brightness.

Randomly change hue and saturation.

Apply D4 (https://en.wikipedia.org/wiki/Dihedral_group) augmentations.

Apply contrast limiting in histogram equalization.

Use the AutoAugment (https://arxiv.org/pdf/1805.09501.pdf) augmentation strategy.
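
The D4 augmentations mentioned above are the eight combinations of 90-degree rotations and a flip, all of which are lossless. A minimal NumPy sketch follows; the function name `d4_transforms` is hypothetical, and for segmentation the same transform would also have to be applied to the mask.

```python
import numpy as np


def d4_transforms(image):
    """Return the 8 images obtained by applying each element of the dihedral group D4."""
    variants = []
    for k in range(4):
        rotated = np.rot90(image, k)          # rotate by k * 90 degrees
        variants.append(rotated)
        variants.append(np.fliplr(rotated))   # same rotation followed by a horizontal flip
    return variants


image = np.arange(9).reshape(3, 3)  # toy image with all-distinct pixel values
variants = d4_transforms(image)
unique = {v.tobytes() for v in variants}
print(len(variants), len(unique))
```

Because every pixel of the toy image is distinct, all eight variants differ from each other; on symmetric images some of them coincide.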

Model

Architectures

Use U-net as the base architecture, adapted to fit 3D input.

Use automated active learning and add manual annotations.

Use the inception-ResNet v2 architecture to train features with different receptive fields.

Use Siamese networks with adversarial training.

Use ResNet50, Xception, and Inception ResNet v2 x 5, with a fully connected layer as the final layer.

Use a global max-pooling layer, which returns a fixed-length output regardless of the input size.

Use stacked dilated convolutions.

VoxelNet.

In LinkNet, replace addition with concatenation and conv1x1.

Generalized mean pooling.

Use Keras NASNetLarge with 224x224x3 input to train the model from scratch.

Use 3D convolutional networks.

Use ResNet152 as a pretrained feature extractor.

Replace the final fully connected layer of ResNet with 3 fully connected layers that use dropout.

Use transposed convolutions in the decoder.

Use VGG as the base architecture.

Use a C3D network with adjusted receptive fields and a 64-unit bottleneck layer at the end of the network.

Use UNet-type architectures with pretrained weights to improve convergence and binary segmentation performance on 8-bit RGB input images.

Use LinkNet, because it is fast and memory-efficient.

MASKRCNN

BN-Inception

Fast Point R-CNN

Seresnext

UNet and Deeplabv3

Faster RCNN

SENet154

ResNet152

NASNet-A-Large

EfficientNetB4

ResNet101

GAPNet

PNASNet-5-Large

Densenet121

AC-GAN

XceptionNet (96), XceptionNet (299), Inception v3 (139), InceptionResNet v2 (299), DenseNet121 (224)

AlbuNet (resnet34) from ternausnets

SpaceNet

Resnet50 from selim_sef SpaceNet 4

SCSEUnet (seresnext50) from selim_sef SpaceNet 4

A custom Unet and Linknet architecture

FPNetResNet50 (5 folds)

FPNetResNet101 (5 folds)

FPNetResNet101 (7 folds with different seeds)

PANetDilatedResNet34 (4 folds)

PANetResNet50 (4 folds)

EMANetResNet101 (2 folds)

RetinaNet

Deformable R-FCN

Deformable Relation Networks
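
Two of the pooling ideas listed in this section, global max pooling and generalized mean (GeM) pooling, both turn a feature map of any spatial size into a fixed-length vector. The NumPy sketch below illustrates this on random feature maps; the shapes, the exponent `p=3.0`, and the function names are assumptions chosen for illustration, not values from the competitions.

```python
import numpy as np


def global_max_pool(features):
    """Collapse a (channels, height, width) feature map to one value per channel."""
    return features.max(axis=(1, 2))


def generalized_mean_pool(features, p=3.0, eps=1e-6):
    """GeM pooling: p = 1 gives average pooling, large p approaches max pooling."""
    clipped = np.clip(features, eps, None)  # GeM assumes non-negative activations
    return np.mean(clipped ** p, axis=(1, 2)) ** (1.0 / p)


rng = np.random.default_rng(0)
small = rng.random((16, 7, 7))     # hypothetical feature map from a small input
large = rng.random((16, 20, 13))   # hypothetical feature map from a larger input

print(global_max_pool(small).shape, global_max_pool(large).shape)
print(generalized_mean_pool(small).shape, generalized_mean_pool(large).shape)
```

Both inputs yield a vector of length 16, which is what lets a fixed-size classifier head sit on top of variable-size images.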

Hardware setup

Use of the AWS GPU instance p2.xlarge with a NVIDIA K80 GPU

Pascal Titan-X GPU

Use of 8 TITAN X GPUs

6 GPUs: 2×1080Ti + 4×1080

Server with 8×NVIDIA Tesla P40, 256 GB RAM and 28 CPU cores

Intel Core i7 5930k, 2×1080, 64 GB of RAM, 2x512GB SSD, 3TB HDD

GCP 1x P100, 8x CPU, 15 GB RAM, SSD or 2x P100, 16x CPU, 30 GB RAM

NVIDIA Tesla P100 GPU with 16GB of RAM

980Ti GPU, 2600k CPU, and 14GB RAM

Loss functions

Dice coefficient, because it works well on imbalanced data.

Weighted boundary loss, which aims to reduce the distance between the predicted segmentation and the ground truth.

MultiLabelSoftMarginLoss, which optimizes a multi-label one-versus-all loss.

Balanced cross entropy (BCE) with logit loss, which weights positive and negative samples by a coefficient.

Lovasz, which directly optimizes the mean IoU loss based on the convex Lovasz extension of sub-modular losses.

FocalLoss + Lovasz, obtained by summing the Focal and Lovasz losses.

Arc margin loss, which adds a margin to maximize the separability of face classes.

Npairs loss, which computes the npairs loss between y_true and y_pred.

A combination of BCE and Dice loss.

LSEP – a pairwise ranking loss that is smooth everywhere and therefore easy to optimize.

Center loss, which simultaneously learns a feature center for each class and penalizes samples that are too far from their feature center.

Ring Loss, which augments standard loss functions such as Softmax.

Hard triplet loss, which trains the network for feature embedding and maximizes the distance between features of different classes.

1 + BCE – Dice, which combines the BCE and Dice terms and adds 1.

Binary cross-entropy – log(dice): binary cross-entropy minus the log of the dice loss.

A combination of BCE, dice, and focal losses.

BCE + DICE, where the Dice loss is obtained from a smoothed Dice coefficient.

Focal loss with gamma 2, an upgrade of the standard cross-entropy loss.

BCE + DICE + Focal – the sum of the three losses.

Active Contour Loss, which incorporates area and size information and integrates it into a deep learning model.

1024 * BCE(results, masks) + BCE(cls, cls_target)

Focal + kappa – Kappa is a loss for multi-class classification; here it is added to the Focal loss.

ArcFaceLoss – Additive Angular Margin Loss for face recognition.

soft Dice trained on positives only – Soft Dice uses the predicted probabilities.

2.7 * BCE(pred_mask, gt_mask) + 0.9 * DICE(pred_mask, gt_mask) + 0.1 * BCE(pred_empty, gt_empty) – a custom loss.

nn.SmoothL1Loss().

Use the Mean Squared Error objective function, which in some scenarios works better than binary cross-entropy loss.
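
Several entries above combine BCE with a Dice term. The NumPy sketch below shows one plausible form of such a combination, using a smoothed ("soft") Dice computed on probabilities. The weights, the smoothing constant, and the function names are assumptions for illustration; the exact formulations used by the competitors may differ, and a real training loop would use a differentiable framework version.

```python
import numpy as np


def bce_loss(probs, targets, eps=1e-7):
    """Mean binary cross-entropy between predicted probabilities and 0/1 targets."""
    probs = np.clip(probs, eps, 1.0 - eps)  # avoid log(0)
    return float(-np.mean(targets * np.log(probs) + (1 - targets) * np.log(1 - probs)))


def soft_dice_loss(probs, targets, smooth=1.0):
    """1 - smoothed Dice coefficient, computed on probabilities rather than hard masks."""
    intersection = np.sum(probs * targets)
    dice = (2.0 * intersection + smooth) / (np.sum(probs) + np.sum(targets) + smooth)
    return float(1.0 - dice)


def bce_dice_loss(probs, targets, bce_weight=1.0, dice_weight=1.0):
    """Weighted sum of BCE and soft Dice; the weights are hypothetical defaults."""
    return bce_weight * bce_loss(probs, targets) + dice_weight * soft_dice_loss(probs, targets)


target = np.array([[0, 1], [1, 1]], dtype=float)   # toy ground-truth mask
good = np.array([[0.1, 0.9], [0.8, 0.9]])          # prediction close to the mask
bad = np.array([[0.9, 0.1], [0.2, 0.1]])           # prediction far from the mask
print(bce_dice_loss(good, target), bce_dice_loss(bad, target))
```

The better prediction gets the lower combined loss, and a perfect prediction drives the Dice term to zero.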

Training tips

Try different learning rates.

Try different batch sizes.

Use SGD with momentum and a hand-designed learning rate schedule.

Too much augmentation reduces accuracy.

Train on image crops and predict on full-size images.

Use Keras's ReduceLROnPlateau() as the learning rate schedule.

Train without augmentation until a plateau, then apply soft and hard augmentation for some epochs.

As a first step, freeze all layers except the last one and fine-tune on 1000 images.

Use class-based sampling.

Use dropout and augmentation while tuning the last layer.

Use pseudo-labels to improve the score.

Use Adam and reduce the learning rate on plateau.

Use a cyclic learning rate schedule with SGD.

Reduce the learning rate if the validation loss does not decrease for 2 consecutive epochs.

Repeat training on the worst batch out of every 10 batches.

Train with the default UNET architecture.

Overlap patches so that edge pixels are covered twice.

Tune hyperparameters: the learning rate during training, and non-maximum suppression and the score threshold during inference.

Remove bounding boxes with low confidence scores.

Train different convolutional networks and ensemble them.

Stop training when the F1 score starts to decrease.

Use different learning rates.

Use stacking to train ANNs with 5 folds, repeated 30 times.
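
The "reduce the learning rate when the validation loss stops improving" rule from the list above can be written in a few framework-free lines. This is a simplified stand-in, not Keras's callback; the class name, the halving factor, and the loss values are assumptions for illustration.

```python
class ReduceOnPlateau:
    """Multiply the learning rate by `factor` after `patience` epochs without improvement."""

    def __init__(self, lr, factor=0.5, patience=2):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss and return the learning rate to use next."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            if self.bad_epochs >= self.patience:
                self.lr *= self.factor
                self.bad_epochs = 0
        return self.lr


scheduler = ReduceOnPlateau(lr=0.01)
val_losses = [0.90, 0.80, 0.82, 0.81, 0.70, 0.71]  # made-up validation losses
history = [scheduler.step(loss) for loss in val_losses]
print(history)
```

Here the rate is halved after the third and fourth epochs both fail to beat the best loss of 0.80.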

Evaluation and validation

Split the training and test sets non-uniformly, stratified by class.

When tuning the last layer, use cross-validation to avoid overfitting.

Use a 10-fold cross-validation ensemble for classification.

Use a 5-10 fold cross-validation ensemble at test time.
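
A class-stratified k-fold split, as referred to above, keeps the class mix of every fold close to that of the whole dataset. The pure-Python sketch below deals the indices of each class round-robin across folds; the labels are made up and the helper name is hypothetical. Libraries such as scikit-learn provide a ready-made equivalent.

```python
import random
from collections import defaultdict


def stratified_folds(labels, n_folds=5, seed=0):
    """Assign each sample index to a fold so that every fold has a similar class mix."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    folds = [[] for _ in range(n_folds)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the indices of each class round-robin across the folds.
        for position, index in enumerate(indices):
            folds[position % n_folds].append(index)
    return folds


labels = [0] * 80 + [1] * 20  # hypothetical imbalanced labels
folds = stratified_folds(labels, n_folds=5)
for fold in folds:
    positives = sum(labels[i] for i in fold)
    print(len(fold), positives)
```

Each fold then serves once as the validation set while the remaining folds are used for training.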

Ensembling methods

Use simple majority voting for ensembling.

Use LightGBM on the raw features for models with many classes.

Use CatBoost for the second-layer model.

Use 'curriculum learning' to speed up model training: the model is first trained on easy samples and then on hard samples.

Ensemble ResNet50, InceptionV3, and InceptionResNetV2.

Use ensembling for object detection.

Ensemble Mask RCNN, YOLOv3, and Faster RCNN.
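
Simple majority voting, the first method above, can be sketched as follows. The three prediction lists are made up and the function name is hypothetical; how ties are broken is a design choice, and here they go to whichever label appears first among the votes.

```python
from collections import Counter


def majority_vote(predictions_per_model):
    """Combine per-model class predictions by taking the most common label per sample."""
    ensembled = []
    for sample_votes in zip(*predictions_per_model):
        # most_common(1) returns [(label, count)]; ties go to the label seen first.
        ensembled.append(Counter(sample_votes).most_common(1)[0][0])
    return ensembled


# Hypothetical predictions from three models on four samples.
model_a = [0, 1, 1, 2]
model_b = [0, 1, 0, 2]
model_c = [1, 1, 0, 0]
voted = majority_vote([model_a, model_b, model_c])
print(voted)
```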

Post-processing

Use test time augmentation: run the model on several random transformations of an image and average the results.

Equalize the test prediction probabilities instead of using the predicted classes.

Take the geometric mean of the predictions.

Overlap tiles during inference, because UNet does not predict well near the edges.

Apply non-maximum suppression and bounding box shrinkage.

Use the watershed algorithm to separate objects in instance segmentation.
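
Test time augmentation for segmentation needs one extra step compared with classification: each predicted mask has to be mapped back to the original orientation before averaging. The NumPy sketch below uses only flips, which are their own inverses. `toy_model` is a hypothetical stand-in for a real network, deliberately biased in its first column so the effect of averaging is visible.

```python
import numpy as np


def predict_with_tta(predict_fn, image):
    """Average predictions over the identity, horizontal flip, and vertical flip."""
    transforms = [
        (lambda x: x, lambda y: y),   # identity
        (np.fliplr, np.fliplr),       # horizontal flip is its own inverse
        (np.flipud, np.flipud),       # vertical flip is its own inverse
    ]
    outputs = []
    for forward, inverse in transforms:
        prediction = predict_fn(forward(image))
        outputs.append(inverse(prediction))  # map the mask back to the original orientation
    return np.mean(outputs, axis=0)


def toy_model(image):
    """Hypothetical model: brightness as probability, under-confident in its first column."""
    probs = image.astype(float) / 255.0
    probs[:, 0] *= 0.5
    return probs


image = np.full((2, 2), 255, dtype=np.uint8)
tta_probs = predict_with_tta(toy_model, image)
print(tta_probs)
```

Averaging spreads the model's position-dependent bias across both columns instead of leaving it concentrated in one.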

This article is only for academic sharing , If there is any infringement , Please contact to delete .

3D Visual workshop boutique course official website :3dcver.com

1. Multi sensor data fusion technology for automatic driving field

2. For the field of automatic driving 3D Whole stack learning route of point cloud target detection !( Single mode + Multimodal / data + Code )

3. Thoroughly understand the visual three-dimensional reconstruction : Principle analysis 、 Code explanation 、 Optimization and improvement

4. China's first point cloud processing course for industrial practice

5. laser - Vision -IMU-GPS The fusion SLAM Algorithm sorting and code explanation

6. Thoroughly understand the vision - inertia SLAM: be based on VINS-Fusion The class officially started

7. Thoroughly understand based on LOAM Framework of the 3D laser SLAM: Source code analysis to algorithm optimization

8. Thorough analysis of indoor 、 Outdoor laser SLAM Key algorithm principle 、 Code and actual combat (cartographer+LOAM +LIO-SAM)

10. Monocular depth estimation method : Algorithm sorting and code implementation

11. Deployment of deep learning model in autopilot

12. Camera model and calibration ( Monocular + Binocular + fisheye )

13. blockbuster ! Four rotor aircraft : Algorithm and practice

14.ROS2 From entry to mastery : Theory and practice

15. The first one in China 3D Defect detection tutorial : theory 、 Source code and actual combat

16. be based on Open3D Introduction and practical tutorial of point cloud processing

blockbuster !3DCVer- Academic paper writing contribution Communication group Established

Scan the code to add a little assistant wechat , can Apply to join 3D Visual workshop - Academic paper writing and contribution WeChat ac group , The purpose is to communicate with each other 、 Top issue 、SCI、EI And so on .

meanwhile You can also apply to join our subdivided direction communication group , At present, there are mainly 3D Vision 、CV& Deep learning 、SLAM、 Three dimensional reconstruction 、 Point cloud post processing 、 Autopilot 、 Multi-sensor fusion 、CV introduction 、 Three dimensional measurement 、VR/AR、3D Face recognition 、 Medical imaging 、 defect detection 、 Pedestrian recognition 、 Target tracking 、 Visual products landing 、 The visual contest 、 License plate recognition 、 Hardware selection 、 Academic exchange 、 Job exchange 、ORB-SLAM Series source code exchange 、 Depth estimation Wait for wechat group .

Be sure to note : Research direction + School / company + nickname , for example :”3D Vision + Shanghai Jiaotong University + quietly “. Please note... According to the format , Can be quickly passed and invited into the group . Original contribution Please also contact .

▲ Long press and add wechat group or contribute

▲ The official account of long click attention

3D Vision goes from entry to mastery of knowledge : in the light of 3D In the field of vision Video Course cheng ( 3D reconstruction series 、 3D point cloud series 、 Structured light series 、 Hand eye calibration 、 Camera calibration 、 laser / Vision SLAM、 Automatically Driving, etc )、 Summary of knowledge points 、 Introduction advanced learning route 、 newest paper Share 、 Question answer Carry out deep cultivation in five aspects , There are also algorithm engineers from various large factories to provide technical guidance . meanwhile , The planet will be jointly released by well-known enterprises 3D Vision related algorithm development positions and project docking information , Create a set of technology and employment as one of the iron fans gathering area , near 4000 Planet members create better AI The world is making progress together , Knowledge planet portal :

Study 3D Visual core technology , Scan to see the introduction ,3 Unconditional refund within days

There are high quality tutorial materials in the circle 、 Answer questions and solve doubts 、 Help you solve problems efficiently

Feel useful , Please give me a compliment ~

边栏推荐

- State mode - Unity (finite state machine)

- 手把手教姐姐写消息队列

- Mathematical analysis_ Notes_ Chapter 11: Fourier series

- The live broadcast reservation channel is open! Unlock the secret of fast launching of audio and video applications

- How to estimate the value of "not selling pens" Chenguang?

- Reinforcement learning - learning notes 8 | Q-learning

- Numpy——2.数组的形状

- How to choose the appropriate automated testing tools?

- 【牛客网刷题系列 之 Verilog进阶挑战】~ 多bit MUX同步器

- Seize Jay Chou

猜你喜欢

随机推荐

[sword finger offer] 59 - I. maximum value of sliding window

Creative changes brought about by the yuan universe

[information security laws and regulations] review

最长公共前缀(leetcode题14)

2022上半年朋友圈都在传的10本书,找到了

炒股如何开户?请问一下手机开户股票开户安全吗?

Business experience in virtual digital human

I feel cheated. Wechat tests the function of "size number" internally, and two wechat can be registered with the same mobile number

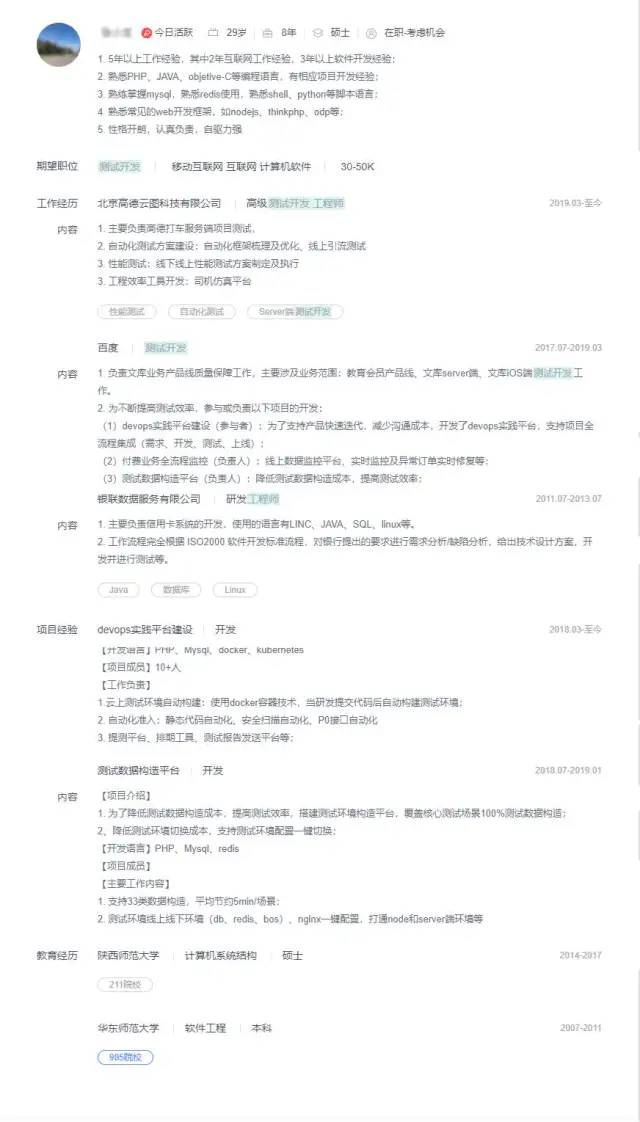

【软件测试】从企业版BOSS直聘,看求职简历,你没被面上是有原因的

POJ 1182: food chain (parallel search) [easy to understand]

Redis cluster and expansion

2022年推荐免费在线接收短信平台(国内、国外)

Former richest man, addicted to farming

Redis集群与扩展

Differences between rip and OSPF and configuration commands

Borui data was selected in the 2022 love analysis - Panoramic report of it operation and maintenance manufacturers

ip netns 命令(备忘)

网易云信参与中国信通院《实时音视频服务(RTC)基础能力要求及评估方法》标准编制...

【牛客网刷题系列 之 Verilog进阶挑战】~ 多bit MUX同步器

SlashData开发者工具榜首等你而定!!!