[Recommendation System 02] DeepFM, YoutubeDNN, DSSM, MMOE

2022-07-07 10:35:00 【ECCUSXR】

1 DeepFM

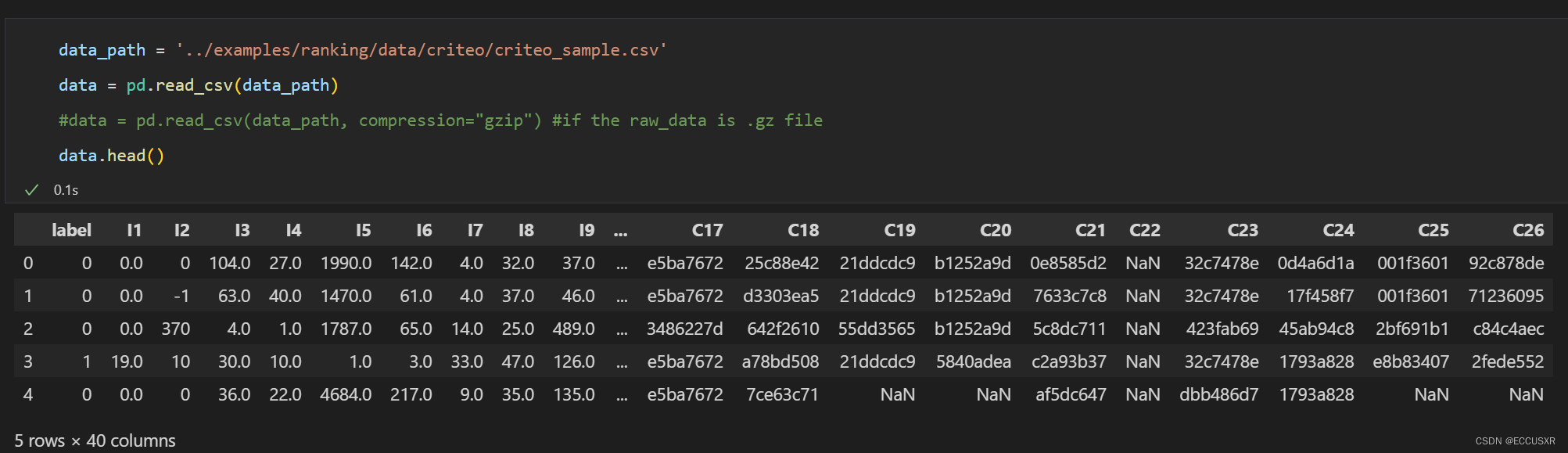

Online advertising dataset: Criteo Labs

Description: it contains millions of click-feedback records for display ads and can serve as a basis for click-through rate (CTR) prediction.

The dataset has 40 columns. The first column is the label: 1 means the ad was clicked, 0 means it was not. The remaining 39 columns are features: 13 dense features and 26 sparse features.

1.1 Feature Engineering

- Dense features: also called numerical features, for example salary or age. This tutorial applies two operations to the dense features:

- MinMaxScaler normalization, so that values fall in [0, 1]

- Discretization into new sparse features

- Sparse features: also called categorical features, for example gender or education. This tutorial applies LabelEncoder directly to the sparse features, mapping each original category string to an integer id; the model then learns an embedding vector for each id.
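The three operations above can be sketched in plain Python. This is only an illustration of what MinMaxScaler and LabelEncoder compute, not the sklearn code used in the tutorial; the column values, the helper names, and the choice of four equal-width bins are assumptions made for the example.

```python
def min_max_scale(values):
    """Rescale numbers linearly into [0, 1], column-wise like MinMaxScaler."""
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:  # constant column: map everything to 0.0
        return [0.0 for _ in values]
    return [(v - lo) / span for v in values]


def discretize(values, n_bins=4):
    """Turn a dense column into bucket ids 0..n_bins-1 (equal-width bins)."""
    scaled = min_max_scale(values)
    # The maximum value would land in bin n_bins, so clamp it to the last bin.
    return [min(int(s * n_bins), n_bins - 1) for s in scaled]


def label_encode(categories):
    """Map category strings to integer ids, assigned in sorted order."""
    mapping = {c: i for i, c in enumerate(sorted(set(categories)))}
    return [mapping[c] for c in categories], mapping


# Hypothetical columns, not taken from the Criteo data.
ages = [18, 30, 42, 66]
education = ["msc", "bsc", "phd", "bsc"]

scaled_ages = min_max_scale(ages)
age_buckets = discretize(ages)
education_ids, education_map = label_encode(education)
```

The bucket ids and the encoded category ids are both small integers, which is what an embedding lookup expects as input.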

1.2 The Torch-RecHub framework

- Torch-RecHub is built mainly on PyTorch and sklearn. It is easy to use and extend, can reproduce recommendation models used in industry, and is highly modular. It supports common layers as well as common ranking models, recall models, and multi-task learning.

- Usage: build a data loader with DataGenerator, construct a lightweight model, train it with the unified trainer, and finally evaluate the model.

1.3 Background

DeepFM was proposed by Huawei's Noah's Ark Lab in 2017.

FM (Factorization Machines) is designed to solve the problem that model parameters are hard to train on sparse data. As a recommendation algorithm, FM is widely used in recommender systems and computational advertising, typically to predict click-through rate (CTR) and conversion rate (CVR).

https://zhuanlan.zhihu.com/p/342803984

- It addresses a limitation of the DNN (fully connected neural network): feeding one-hot features in directly makes the number of network parameters too large, so the one-hot features are first converted into dense vectors.

- FNN (Factorization-machine supported Neural Network) and PNN (Product-based Neural Network): FNN connects a pre-trained FM module to a DNN. PNN adds a product layer between the embedding layer and hidden layer 1, using the product layer in place of the pre-trained FM layer. (I do not fully understand this part yet.)

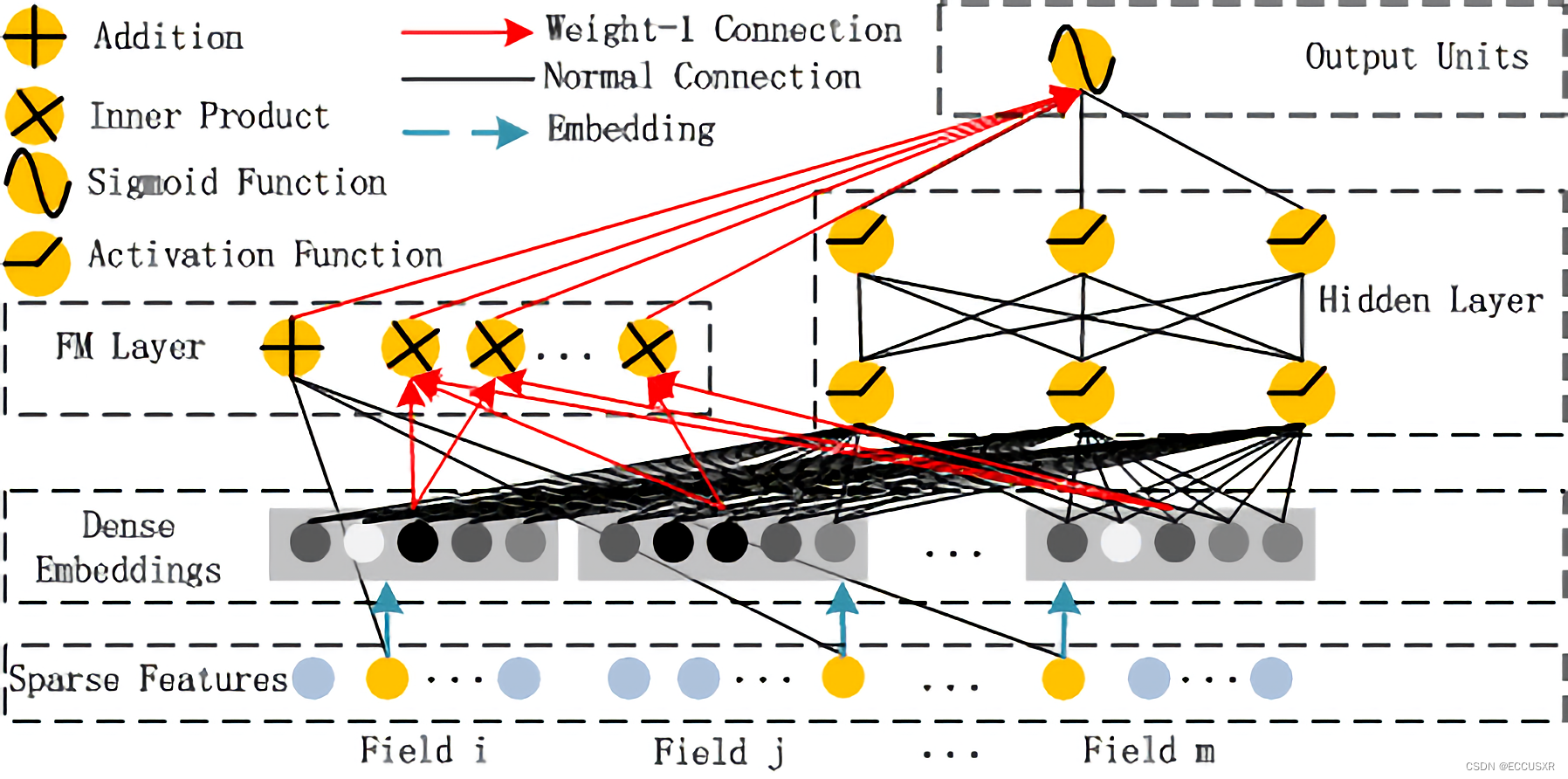

2 FM part

The FM layer consists mainly of first-order and second-order feature terms; the resulting logits are passed through a sigmoid to obtain the prediction.

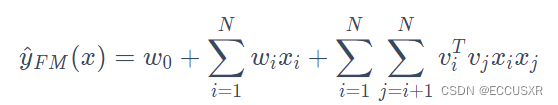

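Model formula:

$$\hat{y}(x) = w_0 + \sum_{i=1}^{n} w_i x_i + \sum_{i=1}^{n} \sum_{j=i+1}^{n} \langle v_i, v_j \rangle x_i x_j$$

Here $w_0$ is the global bias, $w_i$ is the first-order weight of feature $i$, and $v_i$ is its $k$-dimensional latent vector.

The FM formula is a general-purpose fitting equation: with different loss functions it can be used for regression, classification, and other problems. FM can score a new sample in linear time.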

Advantages:

- The inner product of latent vectors serves as the weight of each cross feature, so cross-feature weights can be trained effectively even on very sparse data (the two features do not need to be non-zero together in the same sample).

- After reformulating the formula, the computational complexity is O(nk), which is very efficient.

- Although the overall feature space in recommendation scenarios is very large, FM training and prediction only need to process the non-zero features of each sample, which also speeds up training and online prediction.

- Because the model is computationally efficient and can automatically mine long-tail, low-frequency items in sparse scenarios, it is applicable to all three stages: recall, coarse ranking, and fine ranking. The sample construction, fitting target, and online serving differ from stage to stage.

Disadvantage: FM explicitly models only second-order feature crosses; it cannot capture higher-order crosses.
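The O(nk) claim rests on a standard algebraic identity: for each latent dimension, the sum over all feature pairs equals half of (the square of the sum minus the sum of the squares). The sketch below compares a naive pairwise loop with that reformulation. The feature vector, weights, and latent vectors are made-up numbers, and the function names are hypothetical.

```python
def fm_pairwise_naive(x, V):
    """Second-order FM term by looping over all feature pairs: O(n^2 * k)."""
    n, k = len(x), len(V[0])
    total = 0.0
    for i in range(n):
        for j in range(i + 1, n):
            dot = sum(V[i][f] * V[j][f] for f in range(k))
            total += dot * x[i] * x[j]
    return total


def fm_pairwise_fast(x, V):
    """Same term as 0.5 * sum_f((sum_i v_if x_i)^2 - sum_i (v_if x_i)^2): O(n * k)."""
    k = len(V[0])
    total = 0.0
    for f in range(k):
        s = 0.0     # running sum of v_if * x_i
        s_sq = 0.0  # running sum of (v_if * x_i)^2
        for xi, vi in zip(x, V):
            if xi == 0:  # zero features contribute nothing and can be skipped
                continue
            t = vi[f] * xi
            s += t
            s_sq += t * t
        total += 0.5 * (s * s - s_sq)
    return total


def fm_predict(x, w0, w, V):
    """Bias + first-order term + second-order term (before any sigmoid)."""
    linear = sum(wi * xi for wi, xi in zip(w, x))
    return w0 + linear + fm_pairwise_fast(x, V)


# Hypothetical sample with 4 features and 2-dimensional latent vectors.
x = [1.0, 0.0, 2.0, 1.0]
w0 = 0.1
w = [0.2, 0.3, -0.1, 0.4]
V = [[1.0, 2.0], [0.5, -1.0], [-1.0, 0.5], [2.0, 1.0]]

naive = fm_pairwise_naive(x, V)
fast = fm_pairwise_fast(x, V)
score = fm_predict(x, w0, w, V)
```

Skipping the zero entries in the inner loop is what makes the cost depend on the number of non-zero features in a sample rather than on the size of the whole feature space.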

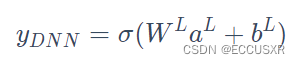

3 Deep part

Model composition :

- Dense embeddings are fed into the hidden layers through fully connected layers, which solves the parameter explosion problem in the DNN

- The output of the embedding layer is the concatenation of the embedding vectors of all id-type features, which is then fed into the DNN
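As a rough sketch of the two points above, the code below looks up one embedding per id feature, concatenates the vectors, and passes the result through a single fully connected ReLU layer. The field names, table contents, and layer weights are all hypothetical, and a real Deep part would stack several such layers.

```python
def relu(v):
    return [max(0.0, a) for a in v]


def dense(v, W, b):
    """Fully connected layer; W holds one row of weights per output unit."""
    return [sum(wi * vi for wi, vi in zip(row, v)) + bi for row, bi in zip(W, b)]


# Hypothetical embedding tables: one table per id feature, 2-dim vectors.
embedding_tables = {
    "gender": [[0.1, 0.2], [0.3, 0.4]],
    "education": [[0.5, -0.5], [1.0, 0.0], [0.0, 1.0]],
}
sample = {"gender": 1, "education": 2}  # label-encoded ids

# Look up each field's vector and concatenate them in a fixed field order.
deep_input = []
for field in ("gender", "education"):
    deep_input.extend(embedding_tables[field][sample[field]])

# One hidden layer with two units (weights chosen by hand for the example).
W1 = [[1.0, 0.0, 0.0, 1.0], [-1.0, -1.0, 0.0, 0.0]]
b1 = [0.0, 0.0]
hidden = relu(dense(deep_input, W1, b1))
```

The width of the first layer's input is the number of fields times the embedding size (4 here), not the total number of distinct categories, which is where the parameter saving comes from.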

Model formula :

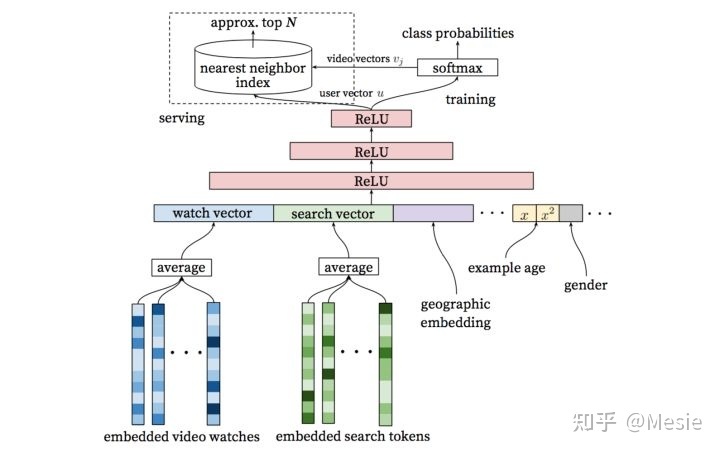

4 YoutubeDNN

Recall is performed by nearest neighbor search over the user embedding and the item embeddings; negative sampling is used in the softmax.

The input data is highly sparse, so embedding plus average pooling is used to obtain the user's watch and search interests.

Discrete data can be handled with embeddings; continuous data can be handled with normalization or bucketing.
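A minimal sketch of the pooling and recall steps follows. It makes two simplifying assumptions: the averaged watch-history vector stands in for the user embedding (in YoutubeDNN the pooled vector is only one input to the user DNN), and an exhaustive dot-product scan stands in for a real nearest neighbor index. Item ids and vectors are invented.

```python
def average_pool(vectors):
    """Average a variable-length list of embeddings into one fixed-size vector."""
    dim = len(vectors[0])
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]


def top_k_by_dot(user_vec, item_vecs, k):
    """Return the k item ids whose embeddings have the largest dot product."""
    scored = [
        (sum(u * i for u, i in zip(user_vec, vec)), item_id)
        for item_id, vec in item_vecs.items()
    ]
    scored.sort(reverse=True)
    return [item_id for _, item_id in scored[:k]]


# Hypothetical 2-dim item embeddings and a watch history of two items.
item_embeddings = {
    "a": [1.0, 0.0],
    "b": [0.0, 1.0],
    "c": [1.0, 1.0],
    "d": [-1.0, 0.0],
}
watched = ["a", "c"]

user_vec = average_pool([item_embeddings[i] for i in watched])
recalled = top_k_by_dot(user_vec, item_embeddings, k=2)
```

Average pooling is what lets histories of different lengths map to an input of the same size.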

skip-gram: word vectors are trained by predicting context words from the center word. Training samples are obtained from the sequence with a sliding window, and the model input is the center word. The center word is multiplied by the word-vector matrix to get its word vector, which is then multiplied by the context matrix to get the similarity between the center word and every word. A softmax turns these similarities into probabilities, and the index with the highest probability is output.

In other words, a word is converted into a one-hot vector, multiplied by the embedding matrix to obtain its embedding vector, and passed to a softmax to get the prediction.
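The forward pass just described can be traced step by step in a few lines. The four-word vocabulary and both matrices below are made-up values chosen so the arithmetic is easy to follow; nothing here is trained.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


vocab = ["the", "cat", "sat", "mat"]
dim = 2

# Hypothetical center-word embedding matrix E (|V| x dim)
# and context matrix C (|V| x dim).
E = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
C = [[0.0, 1.0], [2.0, 0.0], [0.0, 0.5], [1.0, 1.0]]

center = "the"
one_hot = [1 if w == center else 0 for w in vocab]

# one-hot times E simply selects the row of E for the center word.
center_vec = [sum(one_hot[i] * E[i][d] for i in range(len(vocab))) for d in range(dim)]

# Similarity of the center word to every word via the context matrix.
scores = [sum(c * v for c, v in zip(row, center_vec)) for row in C]
probs = softmax(scores)

predicted = vocab[probs.index(max(probs))]
```

Because the one-hot product only selects a row, implementations normally replace it with a direct index lookup.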

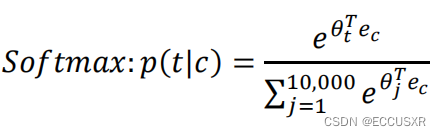

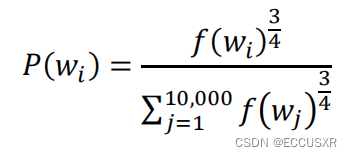

Building the loss function (assume the output dimension is 10,000):

- YoutubeDNN training: the right part is similar to skip-gram, except that the center word is obtained directly from an embedding lookup; the left part concatenates the user's feature vectors into one large vector and then reduces its dimension with a DNN

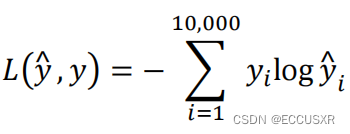

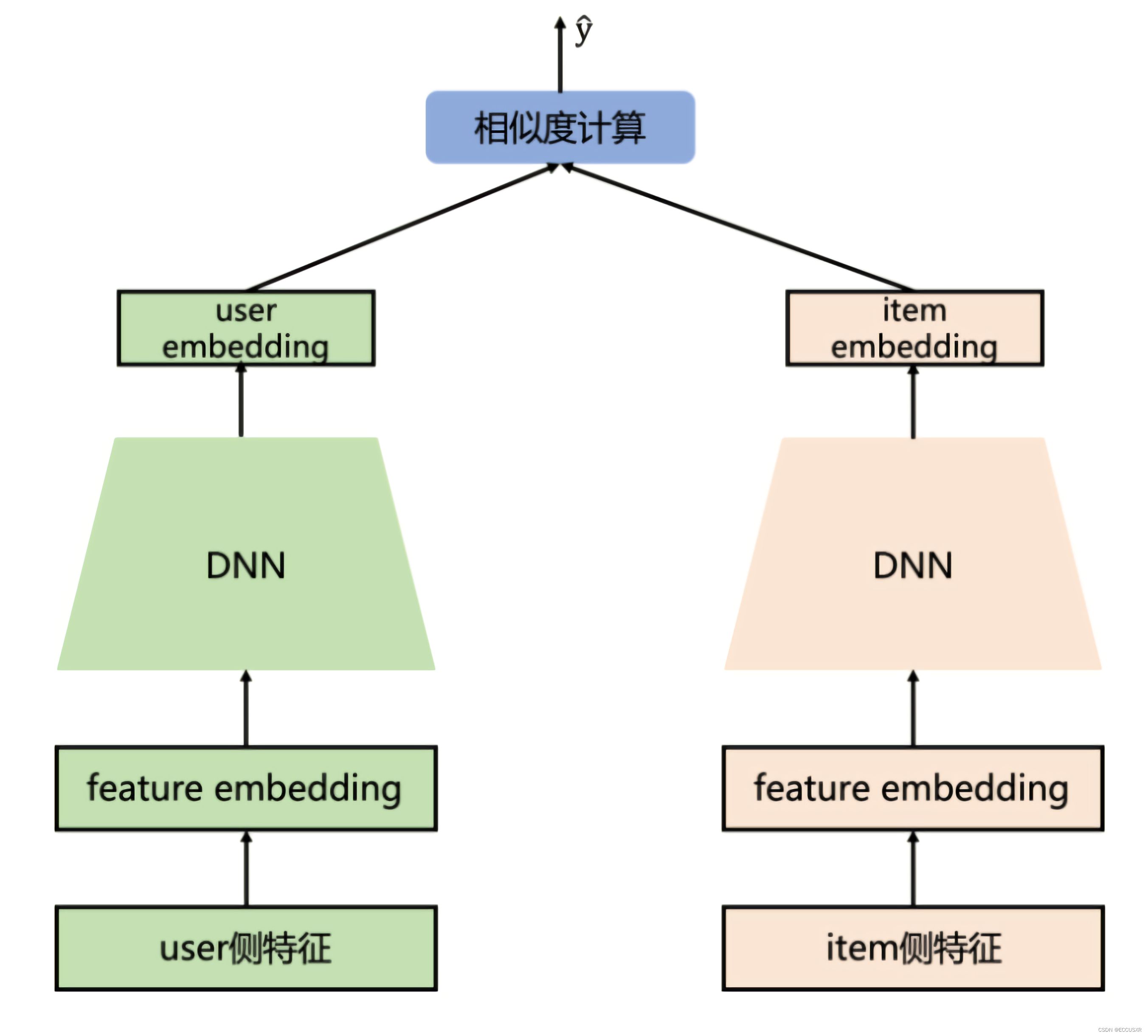

5 DSSM

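DSSM (Deep Structured Semantic Models) is a two-tower model.

In NLP, negative sampling draws words that are not the target word. k is usually between 5 and 20. Neither extreme works well: uniform sampling ignores how often words actually occur, while sampling by raw frequency returns mostly very frequent words. A common compromise, used in word2vec, is to sample each word with probability proportional to its frequency raised to the power 3/4.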
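The original formula for the sampling distribution is not present in the text, so the sketch below assumes the widely used word2vec choice: weight each word by its count raised to the power 0.75, then normalize. The word counts and function names are invented for the example.

```python
import random


def negative_sampling_probs(counts, power=0.75):
    """Smoothed unigram distribution: p(w) proportional to count(w) ** power."""
    weights = {w: c ** power for w, c in counts.items()}
    total = sum(weights.values())
    return {w: wt / total for w, wt in weights.items()}


def sample_negatives(counts, target, k, rng):
    """Draw k negatives (with replacement), never returning the target word."""
    probs = negative_sampling_probs(counts)
    candidates = [w for w in probs if w != target]
    weights = [probs[w] for w in candidates]
    return rng.choices(candidates, weights=weights, k=k)


# Hypothetical corpus counts with one very frequent word.
counts = {"the": 10000, "cat": 100, "sat": 100, "mat": 1}

probs = negative_sampling_probs(counts)
raw_share_of_the = counts["the"] / sum(counts.values())
raw_share_of_mat = counts["mat"] / sum(counts.values())

negatives = sample_negatives(counts, target="cat", k=5, rng=random.Random(0))
```

Raising counts to a power below 1 lowers the share of the most frequent word and raises the share of rare words, relative to sampling by raw frequency.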

6 Multi-task learning concepts

First, what is multi-task learning?

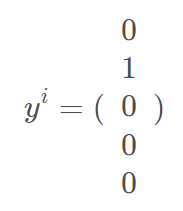

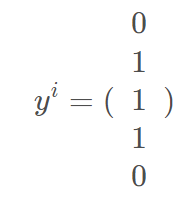

In ordinary classification tasks, each instance usually corresponds to a single label, as shown in the figure: the i-th instance belongs only to the second class.

In multi-task learning, however, one instance corresponds to multiple labels.

Loss function:

(The loss-function image could not be retrieved from the original site.)

Here m is the number of samples and j indexes the j-th label.
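Since the formula image is unavailable, the following is only one loss consistent with the definitions of m and j: sum a per-label loss over all labels of every sample and divide by m. Binary cross-entropy as the per-label loss is an assumption, as are the function names and the toy labels.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Log loss for a single label; probabilities are clipped away from 0 and 1."""
    p = min(max(y_prob, eps), 1.0 - eps)
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1.0 - p))


def multi_label_loss(Y_true, Y_prob):
    """(1/m) * sum over samples i and labels j of the per-label loss."""
    m = len(Y_true)
    total = 0.0
    for labels, probs in zip(Y_true, Y_prob):  # i runs over the m samples
        for y, p in zip(labels, probs):        # j runs over the labels
            total += binary_cross_entropy(y, p)
    return total / m


# Two hypothetical samples with three binary labels each.
Y_true = [[1, 0, 1], [0, 0, 1]]
Y_prob = [[0.5, 0.5, 0.5], [0.5, 0.5, 0.5]]

loss = multi_label_loss(Y_true, Y_prob)  # equals 3 * ln(2) for these inputs
```

Unlike a softmax loss, each label is scored independently, so several labels can be positive for the same sample.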

Multi-task learning shares the same low-level features across tasks.

For multi-task learning, we can try training one sufficiently large neural network to handle all tasks.

7 MMOE

Modeling Task Relationships in Multi-task Learning with Multi-gate Mixture-of-Experts

Paper: https://dl.acm.org/doi/10.1145/3219819.3220007

Resources on the MMOE model:

Video introduction on YouTube: https://www.youtube.com/watch?v=Dweg47Tswxw

Open-source Keras implementation: https://github.com/drawbridge/k

- Multi-task model: by learning the commonalities and differences between tasks, it can improve modeling quality and efficiency.

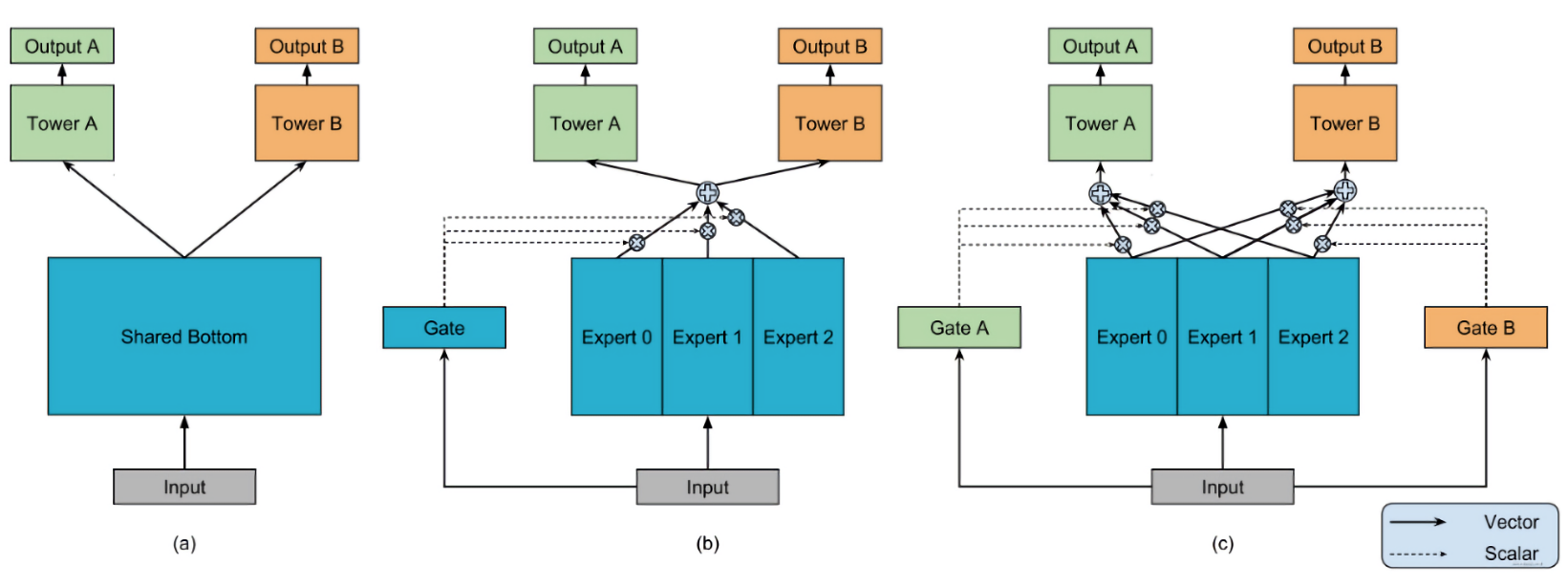

- Multi-task model design patterns:

- Hard parameter sharing: the bottom layers are shared hidden layers that learn patterns common to all tasks, and the upper layers are task-specific fully connected layers that learn each task's own patterns

- Soft parameter sharing: there is no shared bottom; instead there are multiple towers, and each tower is assigned a different weight

- Task sequence dependency modeling: suitable when the tasks have a certain sequential dependency on one another
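Of the three patterns, hard parameter sharing is the simplest to write down: one shared bottom layer feeds a separate small tower per task. The sketch below uses hand-picked weights and two hypothetical task names ("click" and "convert"); it shows only the forward pass.

```python
def relu(v):
    return [max(0.0, a) for a in v]


def dense(v, W, b):
    """Fully connected layer; W holds one row of weights per output unit."""
    return [sum(wi * vi for wi, vi in zip(row, v)) + bi for row, bi in zip(W, b)]


def shared_bottom_forward(x, shared, towers):
    """Run the shared layer once, then one task-specific tower per task."""
    h = relu(dense(x, *shared))
    return {task: dense(h, W, b)[0] for task, (W, b) in towers.items()}


# Shared bottom: 2 inputs -> 2 hidden units (hypothetical weights).
shared = ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0])

# Task towers: each maps the shared hidden vector to a single logit.
towers = {
    "click": ([[1.0, 1.0]], [0.0]),
    "convert": ([[0.0, 2.0]], [-1.0]),
}

outputs = shared_bottom_forward([2.0, 1.0], shared, towers)
```

Every task sees the same hidden vector, which is efficient but gives the tasks no way to use different low-level representations when they conflict.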

A mixture of experts (MoE) is a neural network and also a kind of combined (ensemble) model. It suits datasets whose data come from different generating processes. Unlike an ordinary neural network, it trains multiple separate models according to the data; each model is called an expert, and a gating module chooses which experts to use. The actual output is the combination of the experts' outputs weighted by the gating module. Each expert can use a different function (various linear or nonlinear functions). A mixture of experts thus integrates multiple models into a single task.

There are two MoE architectures: competitive MoE and cooperative MoE. In competitive MoE, local regions of the data are forced to concentrate in discrete parts of the data space, while cooperative MoE imposes no such restriction.

MoE model principle: the outputs of multiple Experts are aggregated, with a gating network (an attention-like mechanism) producing the weight of each Expert.

MMOE builds on the OMOE model and gives every task its own gating network.

Characteristics:

- Avoids task conflicts: each task's gate adjusts the weights and selects the combination of Experts that helps the current task

- Builds relationships between tasks

- Flexible parameter sharing

- The model converges quickly during training
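The per-task gating idea can be sketched as follows: all tasks share the same experts, but each task has its own gate whose softmax output weights the expert outputs. Experts and gates are reduced to single bias-free linear maps here, the task towers that would normally follow are omitted, and all weights and task names are hypothetical.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def linear(v, W):
    """Bias-free linear map; W holds one row of weights per output unit."""
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def mmoe_forward(x, expert_weights, gate_weights):
    """Mix shared expert outputs with a separate softmax gate for each task."""
    expert_outputs = [linear(x, W) for W in expert_weights]
    dim = len(expert_outputs[0])
    mixed = {}
    for task, Wg in gate_weights.items():
        gate = softmax(linear(x, Wg))  # one weight per expert
        mixed[task] = [
            sum(g * out[d] for g, out in zip(gate, expert_outputs))
            for d in range(dim)
        ]
    return mixed


x = [1.0, 2.0]
expert_weights = [
    [[1.0, 0.0], [0.0, 1.0]],  # expert 0 returns x unchanged: [1, 2]
    [[0.0, 1.0], [1.0, 0.0]],  # expert 1 swaps the inputs: [2, 1]
]
gate_weights = {
    "click": [[0.0, 0.0], [0.0, 0.0]],       # equal logits: 0.5 per expert
    "convert": [[10.0, 0.0], [-10.0, 0.0]],  # almost all weight on expert 0
}

mixed = mmoe_forward(x, expert_weights, gate_weights)
```

With these weights the "click" task averages the two experts while the "convert" task relies almost entirely on expert 0, which is how different tasks can share experts without being forced onto the same representation.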

边栏推荐

- 嵌入式工程师如何提高工作效率

- 移动端通过设置rem使页面内容及字体大小自动调整

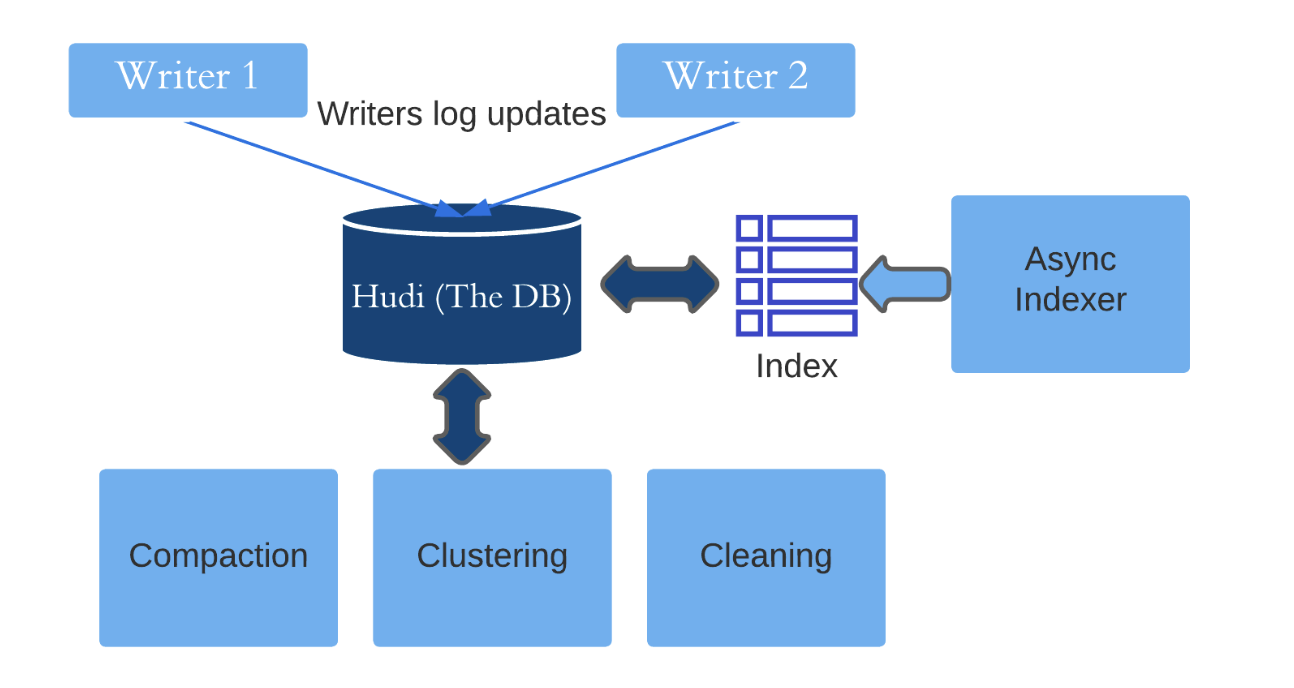

- 深入理解Apache Hudi异步索引机制

- 软考中级电子商务师含金量高嘛?

- Socket通信原理和实践

- When there are pointer variable members in the custom type, the return value and parameters of the assignment operator overload must be reference types

- Some properties of leetcode139 Yang Hui triangle

- 深入分析ERC-4907协议的主要内容,思考此协议对NFT市场流动性意义!

- leetcode-303:区域和检索 - 数组不可变

- The variables or functions declared in the header file cannot be recognized after importing other people's projects and adding the header file

猜你喜欢

P2788 math 1 - addition and subtraction

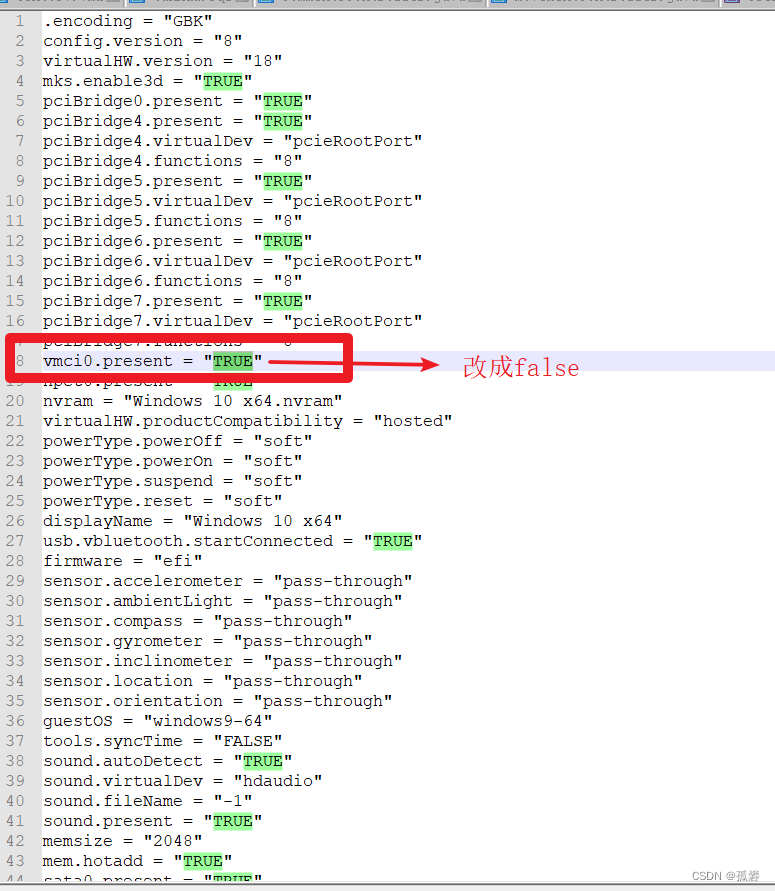

无法打开内核设备“\\.\VMCIDev\VMX”: 操作成功完成。是否在安装 VMware Workstation 后重新引导? 模块“DevicePowerOn”启动失败。 未能启动虚拟机。

Review of the losers in the postgraduate entrance examination

P1223 排队接水/1319:【例6.1】排队接水

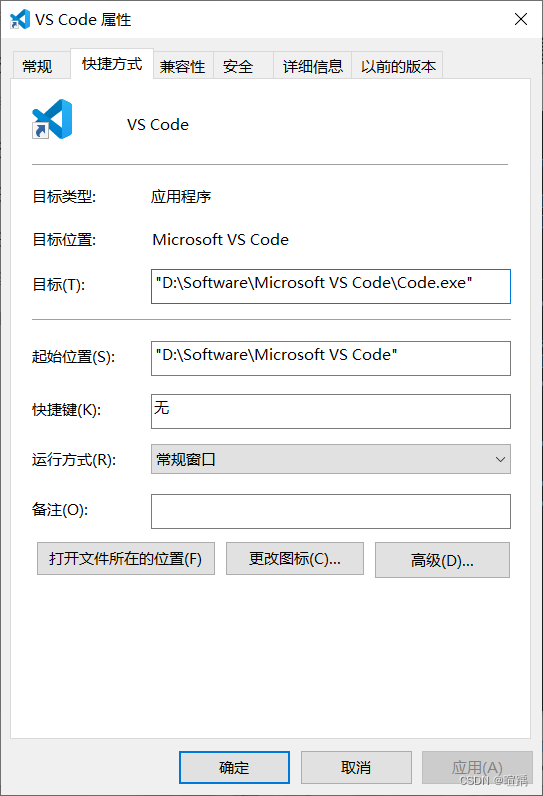

Vs code specifies the extension installation location

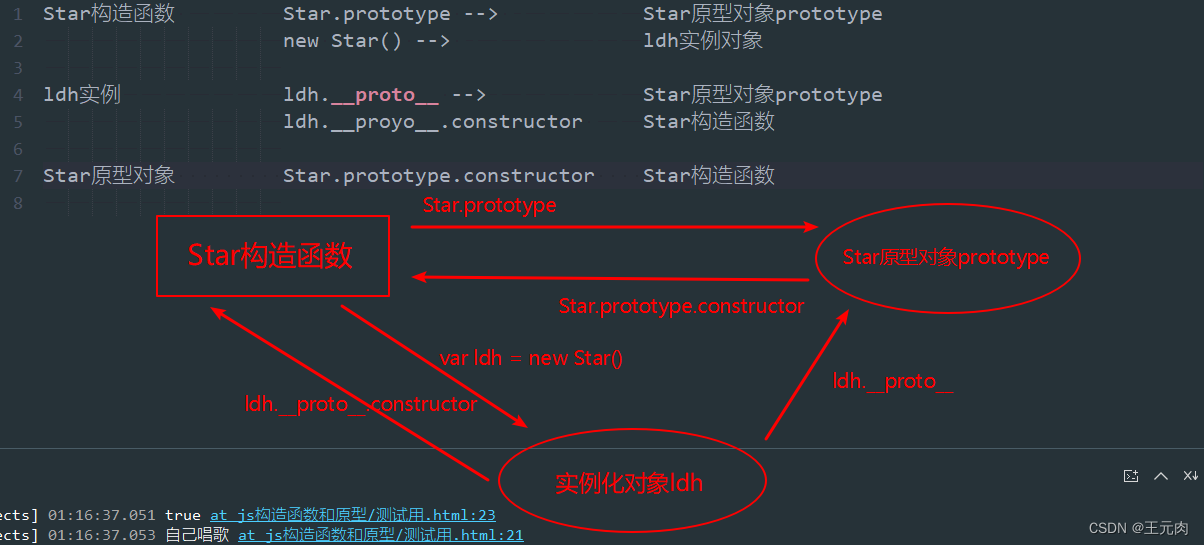

Prototype and prototype chain

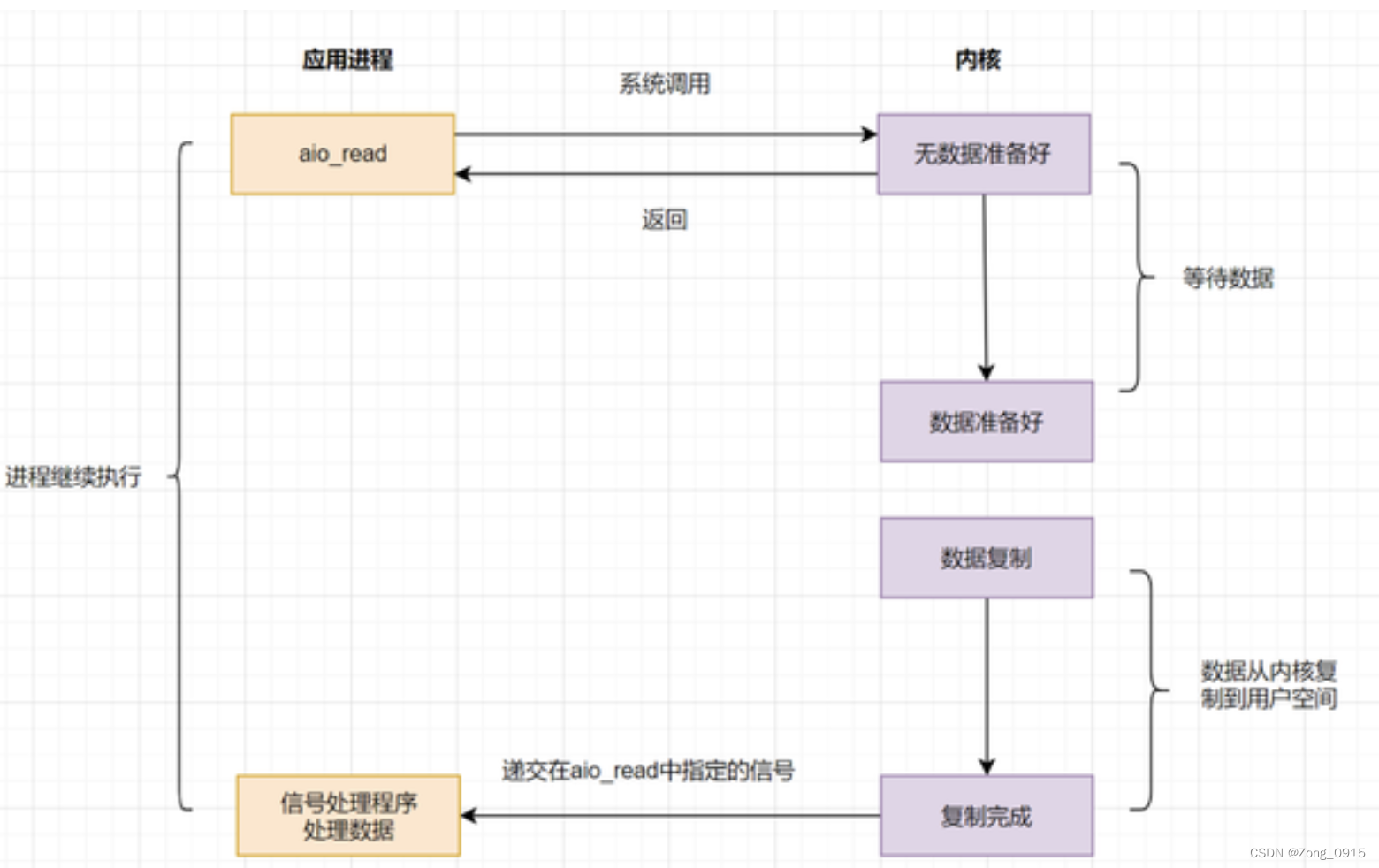

IO模型复习

深入理解Apache Hudi异步索引机制

![[second on] [jeecgboot] modify paging parameters](/img/59/55313e3e0cf6a1f7f6b03665e77789.png)

[second on] [jeecgboot] modify paging parameters

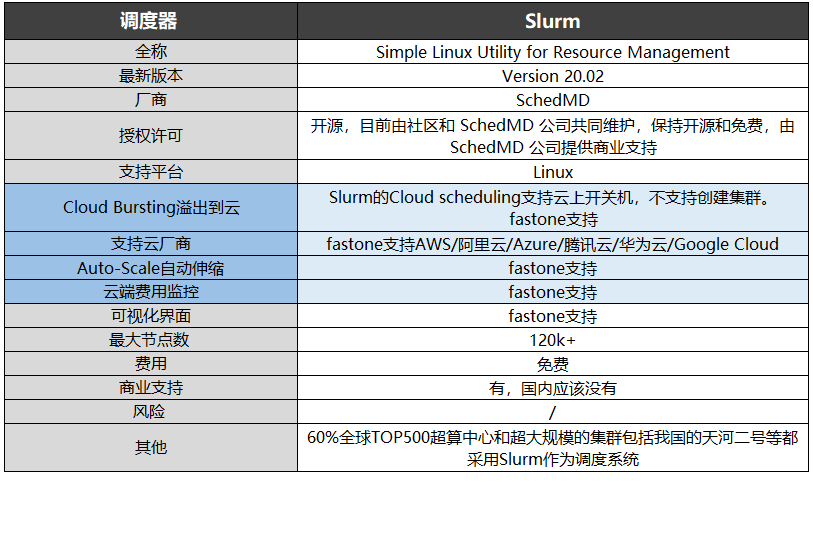

基于HPC场景的集群任务调度系统LSF/SGE/Slurm/PBS

随机推荐

Summary of router development knowledge

Deeply analyze the main contents of erc-4907 agreement and think about the significance of this agreement to NFT market liquidity!

The variables or functions declared in the header file cannot be recognized after importing other people's projects and adding the header file

移动端通过设置rem使页面内容及字体大小自动调整

Elegant controller layer code

如何顺利通过下半年的高级系统架构设计师?

1321:【例6.3】删数问题(Noip1994)

Vs code specifies the extension installation location

IDA中常见快捷键

Openinstall and Hupu have reached a cooperation to mine the data value of sports culture industry

Jump to the mobile terminal page or PC terminal page according to the device information

5个chrome简单实用的日常开发功能详解,赶快解锁让你提升更多效率!

XML configuration file parsing and modeling

求方程ax^2+bx+c=0的根(C语言)

【STM32】STM32烧录程序后SWD无法识别器件的问题解决方法

简单易修改的弹框组件

Talking about the return format in the log, encapsulation format handling, exception handling

软考一般什么时候出成绩呢?在线蹬?

Using U2 net deep network to realize -- certificate photo generation program

Why is the reflection efficiency low?