
Back up a TiDB cluster to a persistent volume

2022-07-07 21:25:00 【Tianxiang shop】

This document describes how to back up the data of a TiDB cluster on Kubernetes to a persistent volume. "Persistent volume" here means any persistent volume type supported by Kubernetes. This document uses Network File System (NFS) storage as the example backup target.

The backup method described in this document is implemented through the CustomResourceDefinition (CRD) of TiDB Operator. Under the hood, it uses BR to obtain the cluster data and then stores the backup data in a persistent volume. BR, short for Backup & Restore, is the command-line tool for distributed backup and restore of TiDB cluster data.

Usage scenarios

If you have the following backup requirements, consider using BR to back up TiDB cluster data to a persistent volume, either as an Ad-hoc backup or as a scheduled full backup:

- The amount of data to back up is large, and the backup needs to be fast.

- You need to back up the data directly as SST files (key-value pairs).

For other backup requirements, see the introduction to backup and restore to choose a suitable backup method.

Note

- BR supports only TiDB v3.1 and later.

- Data backed up with BR can be restored only to TiDB databases, not to other databases.

Ad-hoc backup

Ad-hoc backup supports both full backup and incremental backup. An Ad-hoc backup is described by a custom `Backup` custom resource (CR) object, and TiDB Operator performs the backup according to this `Backup` object. If an error occurs during the backup, it is not retried automatically and must be handled manually.

This document assumes that you are backing up the data of the TiDB cluster demo1, which is deployed in the Kubernetes namespace test1. The procedure is as follows.

Step 1: Prepare the Ad-hoc backup environment

Download the file backup-rbac.yaml to the server that performs the backup.

Run the following command to create the RBAC resources required for the backup in the `test1` namespace:

    kubectl apply -f backup-rbac.yaml -n test1

Confirm that you can access the NFS server used to store the backup data from within the Kubernetes cluster, and that TiKV is configured to mount the same NFS shared directory, at the same local path, as the backup does. To mount NFS in TiKV, refer to the following configuration:

    spec:
      tikv:
        additionalVolumes:
        # Specify volume types that are supported by Kubernetes, Ref: https://kubernetes.io/docs/concepts/storage/persistent-volumes/#types-of-persistent-volumes
        - name: nfs
          nfs:
            server: 192.168.0.2
            path: /nfs
        additionalVolumeMounts:
        # This must match `name` in `additionalVolumes`
        - name: nfs
          mountPath: /nfs

If your TiDB version is earlier than v4.0.8, you also need to perform the following two operations. If your TiDB version is v4.0.8 or later, skip them.

First, make sure that you have the `SELECT` and `UPDATE` privileges on the `mysql.tidb` table of the database to be backed up. These privileges are used to adjust the GC time before and after the backup.

Then, create the `backup-demo1-tidb-secret` Secret, which stores the password of the user that accesses the TiDB cluster:

    kubectl create secret generic backup-demo1-tidb-secret --from-literal=password=${password} --namespace=test1
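Whether the extra privilege and Secret operations apply depends only on the TiDB version, so the check can be scripted. The following is a minimal sketch, assuming the version is available as a plain vMAJOR.MINOR.PATCH string. The function name needs_legacy_backup_setup is hypothetical and is not part of TiDB Operator or BR.

```shell
#!/bin/sh
# Return 0 (true) when the given TiDB version is earlier than v4.0.8,
# that is, when the privilege and Secret operations are still required.
# Assumes a "vMAJOR.MINOR.PATCH" string; a suffix such as "-rc1" is ignored.
needs_legacy_backup_setup() {
    version="${1#v}"          # strip the leading "v"
    version="${version%%-*}"  # drop any pre-release suffix
    old_ifs="$IFS"
    IFS=.
    # Split the version on dots into positional parameters.
    set -- $version
    IFS="$old_ifs"
    major="${1:-0}"
    minor="${2:-0}"
    patch="${3:-0}"
    [ "$major" -lt 4 ] && return 0
    [ "$major" -gt 4 ] && return 1
    [ "$minor" -gt 0 ] && return 1
    [ "$patch" -lt 8 ]
}
```

For example, `needs_legacy_backup_setup v4.0.7 && echo "create backup-demo1-tidb-secret first"` prints the reminder, while v4.0.8 and later versions print nothing.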

Step 2: Back up data to the persistent volume

Create the `Backup` CR to back up the data to NFS:

    kubectl apply -f backup-nfs.yaml

The content of backup-nfs.yaml is as follows. This example performs a full backup of the TiDB cluster data to NFS:

    ---
    apiVersion: pingcap.com/v1alpha1
    kind: Backup
    metadata:
      name: demo1-backup-nfs
      namespace: test1
    spec:
      # # backupType: full
      # # Only needed for TiDB Operator < v1.1.10 or TiDB < v4.0.8
      # from:
      #   host: ${tidb-host}
      #   port: ${tidb-port}
      #   user: ${tidb-user}
      #   secretName: backup-demo1-tidb-secret
      br:
        cluster: demo1
        clusterNamespace: test1
        # logLevel: info
        # statusAddr: ${status-addr}
        # concurrency: 4
        # rateLimit: 0
        # checksum: true
        # options:
        # - --lastbackupts=420134118382108673
      local:
        prefix: backup-nfs
        volume:
          name: nfs
          nfs:
            server: ${nfs_server_ip}
            path: /nfs
        volumeMount:
          name: nfs
          mountPath: /nfs

When configuring backup-nfs.yaml, note the following:

- For an incremental backup, you only need to specify the timestamp of the last backup with `--lastbackupts` in `spec.br.options`. For the restrictions on incremental backup, see the documentation on using BR to back up and restore.

- `.spec.local` holds the persistent volume configuration. For details, see the Local storage field introduction.

- Some parameters in `.spec.br` can be omitted, such as `logLevel`, `statusAddr`, `concurrency`, `rateLimit`, `checksum`, and `timeAgo`. For more details on the `.spec.br` fields, see the BR field introduction.

- If your TiDB version is v4.0.8 or later, BR adjusts the `tikv_gc_life_time` parameter automatically, so you do not need to configure the `spec.tikvGCLifeTime` and `spec.from` fields.

- For details on the other `Backup` CR fields, see the Backup CR field introduction.

After the `Backup` CR is created, TiDB Operator starts the backup automatically according to the `Backup` CR. You can check the backup status with the following command:

    kubectl get bk -n test1 -owide
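kubectl apply does not expand shell-style placeholders such as ${nfs_server_ip}, so the value has to be filled in before the manifest is applied. One way to do that is sketched below, assuming a POSIX shell. The helper render_backup_manifest is hypothetical, and it emits only the uncommented fields of the example above plus the optional --lastbackupts option.

```shell
#!/bin/sh
# Print a Backup manifest for the demo1 cluster with the NFS server filled in.
# $1: NFS server address (replaces the ${nfs_server_ip} placeholder)
# $2: optional timestamp of the previous backup; when given, an incremental
#     backup is requested through --lastbackupts
render_backup_manifest() {
    nfs_server_ip="$1"
    last_backup_ts="${2:-}"
    cat <<EOF
---
apiVersion: pingcap.com/v1alpha1
kind: Backup
metadata:
  name: demo1-backup-nfs
  namespace: test1
spec:
  br:
    cluster: demo1
    clusterNamespace: test1
EOF
    # Add the incremental-backup option only when a timestamp was passed.
    if [ -n "$last_backup_ts" ]; then
        cat <<EOF
    options:
    - --lastbackupts=${last_backup_ts}
EOF
    fi
    cat <<EOF
  local:
    prefix: backup-nfs
    volume:
      name: nfs
      nfs:
        server: ${nfs_server_ip}
        path: /nfs
    volumeMount:
      name: nfs
      mountPath: /nfs
EOF
}
```

Running `render_backup_manifest 192.168.0.2 > backup-nfs.yaml` writes a full-backup manifest that can then be applied with kubectl. Passing a second argument such as 420134118382108673 adds the --lastbackupts option for an incremental backup.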

Backup examples

- Back up all cluster data

- Back up the data of a single database

- Back up the data of a single table

- Use the table filter feature to back up the data of multiple tables

Scheduled full backup

You can set a backup policy to back up the TiDB cluster on a schedule, together with a retention policy to avoid keeping too many backups. A scheduled full backup is described by a custom `BackupSchedule` CR object. A full backup is triggered at each scheduled time point, and each one is implemented as an Ad-hoc full backup under the hood. The steps to create a scheduled full backup are as follows.

Step 1: Prepare the scheduled full backup environment

The same as preparing the Ad-hoc backup environment.

Step 2: Back up data to the persistent volume on a schedule

Create the `BackupSchedule` CR to enable the scheduled full backup of the TiDB cluster and back up the data to NFS:

    kubectl apply -f backup-schedule-nfs.yaml

The content of backup-schedule-nfs.yaml is as follows:

    ---
    apiVersion: pingcap.com/v1alpha1
    kind: BackupSchedule
    metadata:
      name: demo1-backup-schedule-nfs
      namespace: test1
    spec:
      #maxBackups: 5
      #pause: true
      maxReservedTime: "3h"
      schedule: "*/2 * * * *"
      backupTemplate:
        # Only needed for TiDB Operator < v1.1.10 or TiDB < v4.0.8
        # from:
        #   host: ${tidb_host}
        #   port: ${tidb_port}
        #   user: ${tidb_user}
        #   secretName: backup-demo1-tidb-secret
        br:
          cluster: demo1
          clusterNamespace: test1
          # logLevel: info
          # statusAddr: ${status-addr}
          # concurrency: 4
          # rateLimit: 0
          # checksum: true
        local:
          prefix: backup-nfs
          volume:
            name: nfs
            nfs:
              server: ${nfs_server_ip}
              path: /nfs
          volumeMount:
            name: nfs
            mountPath: /nfs

As the backup-schedule-nfs.yaml example above shows, the `backupSchedule` configuration consists of two parts: the configuration unique to `backupSchedule`, and `backupTemplate`. For the items unique to `backupSchedule`, see the BackupSchedule CR field introduction. `backupTemplate` specifies the cluster and remote storage configuration. Its fields are the same as `spec` in the `Backup` CR; for details, see the Backup CR field introduction.

After the scheduled full backup is created, check its status with the following command:

    kubectl get bks -n test1 -owide

To list all the backups under the scheduled full backup, run:

    kubectl get bk -l tidb.pingcap.com/backup-schedule=demo1-backup-schedule-nfs -n test1
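With a purely time-based retention policy, the number of backups kept at any moment is roughly the retention window divided by the backup interval. The following sketch works this out for the example values, assuming every scheduled backup succeeds and `maxBackups` is not set. The helper estimate_retained_backups is hypothetical and is not a TiDB Operator command.

```shell
#!/bin/sh
# Estimate how many backups are retained at steady state when retention
# is purely time-based: the reserved window divided by the backup interval.
# $1: backup interval in minutes
# $2: maxReservedTime in hours
estimate_retained_backups() {
    interval_minutes="$1"
    reserved_hours="$2"
    echo $(( reserved_hours * 60 / interval_minutes ))
}
```

`estimate_retained_backups 2 3` prints 90, which corresponds to a backup every 2 minutes kept for 3 hours. If that is more than the volume can hold, lengthen the interval in `schedule` or shorten `maxReservedTime`.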

边栏推荐

- Klocwork code static analysis tool

- 私募基金在中国合法吗?安全吗?

- Intelligent software analysis platform embold

- 【函数递归】简单递归的5个经典例子,你都会吗?

- Demon daddy A1 speech listening initial challenge

- Can Huatai Securities achieve Commission in case of any accident? Is it safe to open an account

- DataTable数据转换为实体

- 特征生成

- [UVALive 6663 Count the Regions] (dfs + 离散化)[通俗易懂]

- Datatable data conversion to entity

猜你喜欢

How to meet the dual needs of security and confidentiality of medical devices?

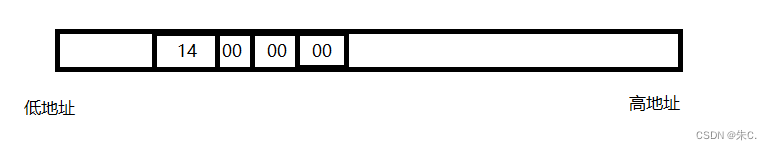

C语言 整型 和 浮点型 数据在内存中存储详解(内含原码反码补码,大小端存储等详解)

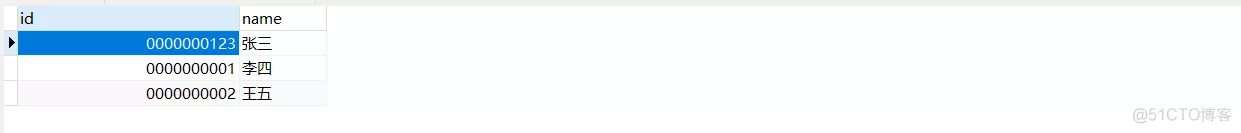

MySQL约束之默认约束default与零填充约束zerofill

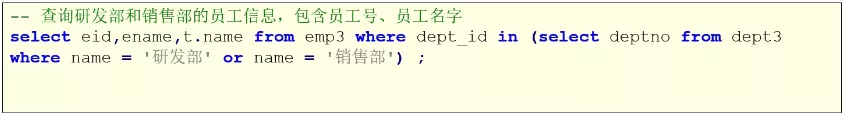

Mysql子查询关键字的使用方式(exists)

Usage of MySQL subquery keywords (exists)

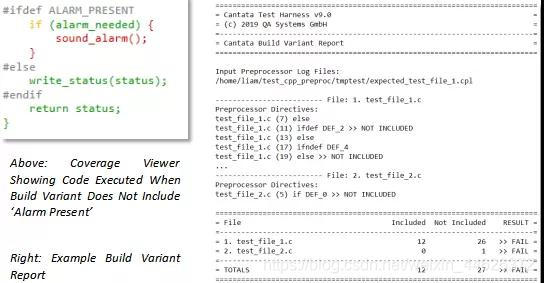

Cantata9.0 | new features

Default constraint and zero fill constraint of MySQL constraint

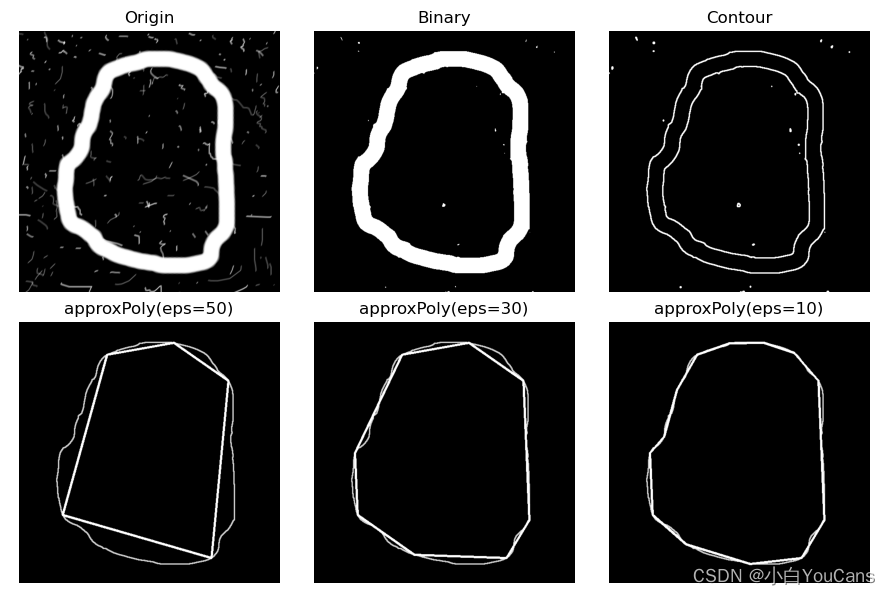

【OpenCV 例程200篇】223. 特征提取之多边形拟合(cv.approxPolyDP)

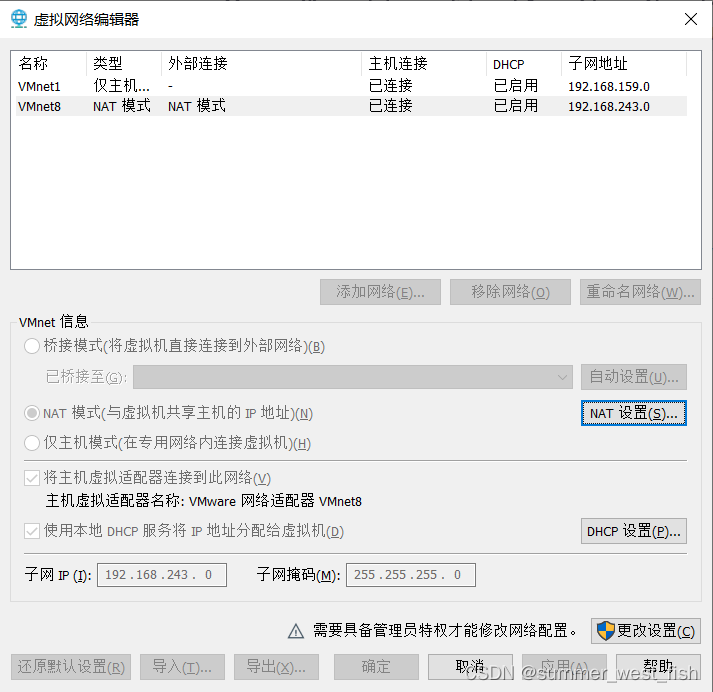

Virtual machine network configuration in VMWare

神兵利器——敏感文件发现工具

随机推荐

Object-C programming tips timer "suggestions collection"

Phoenix JDBC

Jetty: configure connector [easy to understand]

Small guide for rapid formation of manipulator (12): inverse kinematics analysis

Implement secondary index with Gaussian redis

MinGW MinGW-w64 TDM-GCC等工具链之间的差别与联系「建议收藏」

Postgresql数据库character varying和character的区别说明

Deadlock conditions and preventive treatment [easy to understand]

UVA 11080 – place the guards

201215-03-19 - cocos2dx memory management - specific explanation "recommended collection"

SQL injection error report injection function graphic explanation

Is private equity legal in China? Is it safe?

The difference between NPM uninstall and RM direct deletion

Datatable data conversion to entity

死锁的产生条件和预防处理[通俗易懂]

Mysql子查询关键字的使用方式(exists)

Virtual machine network configuration in VMWare

Hdu4876zcc love cards (multi check questions)

Focusing on safety in 1995, Volvo will focus on safety in the field of intelligent driving and electrification in the future

Feature generation